TechRadar's Sustainability Week 2024

7 smart home tips to help you save energy and reduce waste

Smart home devices don’t just make life easier, they can also help you save energy and minimize waste.

eBay’s refurbished tech revolution – a quest to prevent e-waste and save you money

The challenges and successes of selling refurbished technology

I made my own nut milk for a month – here’s what I learned

I ditched store-bought dairy-free milk and made my own using the Milky Plant – and I’m never going back

This company just bioengineered a plant-bacteria combo to clean air better than an air purifier

Neoplants Neo PX is both a plant and an air-cleaning system

Brighter, low-energy OLEDs are going into production this year – but they won’t be coming to TVs just yet

New OLED displays could be twice as bright, twice as efficient and last three times longer but the best TVs won't be the first to see this new tech.

Explore TechRadar

Reviews

T3 Featherweight StyleMax hair dryer review

The T3 Featherweight StyleMax holds plenty of promise, but doesn't always deliver.

Oppo Reno 10 review: cheap with a catch

The Oppo Reno 10 is one of the best cheap phones on the market right now, but it falls short in two major ways.

Updated

Amazon Music Unlimited review

Amazon Music Unlimited delivers good value and hi-res audio in a simple package that’s ideal for both Prime and non-Prime subscribers alike.

Huawei MateBook D 16 review: an all-round solid laptop for those after a cheaper Dell XPS

It's no Dell XPS or MacBook Air, but the MateBook D 16 is a decent laptop with a sensible entry price.

BenQ Zowie EC2-CW review: no-nonsense esports performance

Thanks to its superior comfort, the Zowie EC2-CW is an excellent choice for competitive FPS games.

Moto G34 review

The Moto G34 is one of the cheapest 5G phones around, and it manages to exceed expectations in some key areas.

Updated

Deezer review

Deezer has a huge library, minimal design and good recommendations – but there’s little to set it apart from its rivals.

How TechRadar tests

Product testing for the real world

You need to know that the device or service you’re about to spend money on works as advertised - and that it works in the real world.

- We test properly: objective and subjective testing

- We use experienced experts for our reviews

- We always offer 100 per cent unbiased, independent opinions

Phones

Android 15 could give you a super-charged dark mode that forces incompatible apps to support it

Android 15 could get a better way to force apps into dark mode without the current compatibility issues.

Samsung Galaxy Z Fold 6 specs predictions: all the key specs we expect

Between leaks, rumors, and educated guesses, we have a good idea of the likely Samsung Galaxy Z Fold 6 specs.

Android’s next bright idea is adverts on your lock screen – and it sounds like the Windows 11 nightmare revisited

Adverts could be coming to some Android phones as Glance expands into the US in partnership with Motorola and Verizon.

Oppo Reno 10 review: cheap with a catch

The Oppo Reno 10 is one of the best cheap phones on the market right now, but it falls short in two major ways.

Samsung’s next Galaxy AI feature could revolutionize smartphone video

Samsung has confirmed that more Galaxy AI features are on the way, and a leaker thinks they have one figured out.

Don’t worry, Apple didn’t cancel your old iPhone trade in after all

A bug caused Apple to tell people their trade-ins were canceled, but everything is working normally.

Moto G34 review

The Moto G34 is one of the cheapest 5G phones around, and it manages to exceed expectations in some key areas.

Laptops & Computing

All Laptops & Computing-

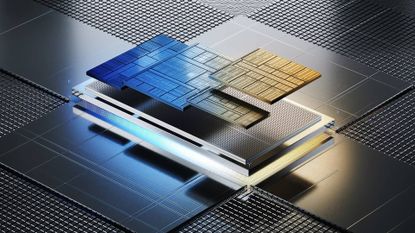

Intel boss confirms Panther Lake is on track for mid-2025 release date - with some bold claims

Intel CEO Pat Gelsinger has doubled down on Panther Lake coming in mid-2025 with some bold performance claims in a recent earnings call.

Signed, sealed, delivered, summarized: new Gemini-powered AI feature for Gmail looks like it’s close to launch

Gmail is getting a summarize feature powered by Gemini, promising faster email comprehension. Users can rate summaries, helping improve accuracy and efficiency.

The latest and top-rated MacBook Air M3 plummets to its lowest price yet

The MacBook Air M3 is our top-rated laptop and it's down to a new record-low price at Amazon.

Gigabyte’s heavy-handed fix for Intel Core i9 CPU instability drops performance to Core i7 levels in some cases – but don’t panic yet

Gigabyte seems to have been a lot stricter than other motherboard makers with its cure for game-crashing blues.

Microsoft is bringing Windows 11’s slimmed-down updates to Windows 10, shedding megabytes for quicker upgrades

Windows 10's latest update brings smaller downloads, quicker installs, and improved features, closing the efficiency gap with Windows 11.

Huge AMD Strix Point leak gives Snapdragon X Elite CPU something to worry about – and Apple’s M4, too

AMD has some seriously powerful APU chips (CPU + GPU + NPU) inbound, and this Strix Point leak illustrates that clearly.

Quordle today – hints and answers for Friday, April 26 (game #823)

Looking for Quordle clues? We can help. Plus get the answers to Quordle today and past solutions.

Apple

The latest and top-rated MacBook Air M3 plummets to its lowest price yet

The MacBook Air M3 is our top-rated laptop and it's down to a new record-low price at Amazon.

Don’t worry, Apple didn’t cancel your old iPhone trade in after all

A bug caused Apple to tell people their trade-ins were canceled, but everything is working normally.

Apple is forging a path towards more ethical generative AI - something sorely needed in today's AI-powered world

Amidst generative AI copyright chaos, Apple looks like it’s leading with ethical training methods, navigating legal hurdles to forge a path of compliance and innovation.

iOS 18 could be loaded with AI, as Apple reveals 8 new artificial intelligence models that run on-device

Apple announces multiple new open-source AI models. But what does this mean for iOS and Mac users?

Finished Fallout on Amazon? Then Silo is your next must-watch show

If you want more post-apocalypse Vault-like action after Fallout, then Silo on Apple TV Plus is for you.

Streaming

Avengers 5 rumored to start filming in early 2025, and one Marvel supergroup isn't expected to feature

Two new reports have seemingly provided some fascinating new details about Marvel's next Avengers movie.

Netflix movie of the day: The Hunger Games is still a great dose of dystopian thrills

Sure, I'll volunteer as tribute to watch this again.

Prime Video movie of the day: How to Train Your Dragon is a perfect family movie with 99% on Rotten Tomatoes

This 2010 movie is still fun for all ages, and is ideal for weekend viewing.

7 new movies and TV shows to stream on Netflix, Prime Video, Max, and more this weekend (April 26)

From Oscar-winning dramas to star-studded video game adaptations, there’s plenty to watch this weekend.

Updated

New movies: the most exciting films coming to theaters in 2024

A whole host of new movies are set to arrive in 2024 – here are the biggest ones to keep an eye out for.

TVs

This Android TV update will stop your Gmail details from being exposed

Some Android TVs have a loophole that could expose your email. Thankfully, Google's going to close it.

Best Buy is slashing prices on our best-rated OLED TVs - save over $1,000 while you can

Best Buy is having a huge sale on our best-rated OLED TVs, and I'm rounding up the best deals, with savings of over $1,000 on sets from Samsung and LG.

Updated

The best indoor TV antennas for 2024

These are the best indoor TV antennas for watching free TV channels at home.

How to buy a good secondhand TV, or make your old TV last longer

Buying a secondhand TV is a great way to save money and help prevent waste, but navigating it can be a bit of a minefield - we're here to help with that.

Brighter, low-energy OLEDs are going into production this year – but they won’t be coming to TVs just yet

New OLED displays could be twice as bright, twice as efficient and last three times longer but the best TVs won't be the first to see this new tech.

JMGO’s new 4K projector has a built-in gimbal so you can place it anywhere in your home

The new JMGO N1 Ultra promises to be the perfect projector for pretty much any space.

New Google TV 4K streaming stick tipped to land soon – and it could come with a new remote

Nearly four years after the Chromecast with Google TV 4K launched, there are rumors that a new one could launch soon.

Audio

You can’t call yourself an audiophile until you’ve seriously considered Luxman’s elite network transport

So you're a hi-fi buff? You may need to level up, although Luxman's first Network Transport NT-07 won't come cheap.

How to connect your AirPods to a laptop

Connecting your AirPods to a laptop or Chromebook may not be as effortless as connecting them to your MacBook, but it’s still easier than you probably think.

Updated

The best music streaming services 2024

The very best music streaming services and apps for every kind of music fan.

Updated

Amazon Music Unlimited review

Amazon Music Unlimited delivers good value and hi-res audio in a simple package that’s ideal for both Prime and non-Prime subscribers alike.

Spotify just launched a quiz to reveal your K-Pop persona – which band member are you?

Spotify is stopping at nothing to make music streaming interactive and social, and its latest addition is a fan-specific K-Pop feature.

Samsung Galaxy Buds 3 Pro – everything we know so far and what we want to see

We've been waiting a while for the successors to the Galaxy Buds 2 Pro, but the wait might almost be over.

Samsung Galaxy Buds 3 Pro leak gives us a clue about battery capacity – don't expect any surprises

Based on a new leak, the Galaxy Buds 3 Pro charging case battery is going to match its predecessor.

Health & Fitness

Should you buy a refurbished Apple Watch?

A refurbished Apple Watch could save you money, but is there a catch?

From Apple and Casio to recycling running shoes, how sustainable is fitness tech in 2024?

The use of recycled materials in outdoor tech is growing and it's clear why that is a very good thing all round.

A mystery Wear OS watch has just surfaced as the Pixel Watch 3 gets closer

A new FCC listing suggests that Google might be secretly working on a new mid-range smartwatch.

Cameras

Sony tipped to launch two major full-frame cameras in 2024, but no new flagship

Sony is tipped to announce a full-frame Sony Alpha and FX cine camera later in 2024.

Updated

The best 4K camera 2024

Searching for the best 4K camera? These top, affordable stars love shooting for the big screen.

Fujifilm's next budget camera may house surprisingly powerful hardware

Fujifilm's rumored X-T50 will reportedly support in-body image stabilization and have a 40MP image sensor inside.

DJI Mini 4K release date confirmed: here's what to expect from DJI's cheapest-ever 4K drone

DJI is refreshing its entry-level Mini 2 SE drone with the Mini 4K, which will likely feature the same 1/1.3-inch 12MP sensor as pricier models.

Home

T3 Featherweight StyleMax hair dryer review

The T3 Featherweight StyleMax holds plenty of promise, but doesn't always deliver.

I made my own nut milk for a month – here’s what I learned

I ditched store-bought dairy-free milk and made my own using the Milky Plant – and I’m never going back

Updated

The best vacuum cleaner 2024

These are the fastest, quietest, smartest, most powerful vacuum cleaners for every kind of home.

New Google Nest Audio and Nest Hub Max devices could be in the works

Google could be working on new Nest Audio and Nest Hub Max devices, and there's potential for generative AI to be front and center.

Buying guides

Updated

The best 4K camera 2024

Searching for the best 4K camera? These top, affordable stars love shooting for the big screen.

Updated

The best music streaming services 2024

The very best music streaming services and apps for every kind of music fan.

Buying Guide

The best mirrorless camera for 2024

Our best mirrorless camera guide will help you find the perfect pick for you, whatever your budget or shooting style.

Updated

The best gaming PC 2024

Finding the best gaming PC for you can be tricky, with confusing spec sheets and numerous configurations - but we're here to help.

Updated

The best cheap tablets 2024

Looking for the best cheap tablet that's actually worth buying? Whether you're after an Amazon, Android, or iPad tab, you'll find our favorite budget options here.

Updated

The best power banks 2024

Here are the best power banks, whether you're after a slim portable charger for phones or a large bank to juice up your tablet.

Updated

The best iPad 2024

Whether you want the best iPad Pro or the best cheaper iPad, a small iPad mini or a thin-yet-powerful iPad Air, you'll find the top options here.

Why we're experts

We care passionately about tech

The TechRadar team has a life-long passion for the latest innovations – over 300 years of experience between us, in fact – and we’ve made it our mission to share that combined knowledge and expertise with you.

We’re here to provide an independent voice that cuts through all the noise to inspire, inform and entertain you; ensuring you get maximum enjoyment from your tech at all times. Technology is our passion, so let us be your expert guide.

Deals

Best Buy's huge 3-day sale just went live - shop the 15 best tech deals from $59.99

Best Buy just launched a huge three-day sale, and I'm rounding up the 15 best tech deals, with prices starting at just $59.99.

The latest and top-rated MacBook Air M3 plummets to its lowest price yet

The MacBook Air M3 is our top-rated laptop and it's down to a new record-low price at Amazon.

The price of one of the fastest gaming SSD cards keeps on free falling - now it's under $155

One of the fastest gaming PCIe 5.0 SSDs, the Crucial T705, is now on sale for its lowest price ever - dipping below $155.

Missed Dell's latest flash sale? I recommend these 4 laptop deals that are just as good

Dell's most recent flash sale is now over but the manufacturer still has some great laptop deals available - here are 4 of the best ones I recommend.

Deals

Mother's Day sales 2024: deals from Walmart, Target, Amazon and more

Your 2024 Mother's Day sales guide with all the best deals from Walmart, Target, and Amazon on gifts, jewelry, flowers, and more.

Amazon has a ton of cheap tech gadgets on sale – I've found the 13 best ones

Amazon has a ton of its cheap tech gadgets on sale right now, and I'm rounding up the 13 best ones with prices starting at $19.99.

OnePlus Coupons for April 2024

Use these OnePlus coupons to get a better price on mobiles & accessories from the leading Android smartphone retailer.

Keeper Security Promo Codes for April 2024

Look through our Keeper Security promo codes to save on subscriptions to the online password manager and protect your details online for less.

Casper Coupons for April 2024

These Casper coupons can help you save big on your next bedding purchase, from sheets and pillows to mattresses and more.

Paramount Plus Coupon Codes for April 2024

Our Paramount Plus coupon codes can be added to your subscription to help you save on monthly streaming fees.

TechRadar's story

Our mission is unchanged

TechRadar was launched in January 2008 with the goal of helping regular people navigate the world of technology. It quickly grew to become the UK's biggest consumer technology site.

Expansions into the US and Australia followed in 2012 and we are now one of the biggest tech sites in the world.

- We've been covering tech since 2008

- 17 international editions from Mexico to New Zealand

- We're a globally respected brand

Software

Signed, sealed, delivered, summarized: new Gemini-powered AI feature for Gmail looks like it’s close to launch

Gmail is getting a summarize feature powered by Gemini, promising faster email comprehension. Users can rate summaries, helping improve accuracy and efficiency.

Microsoft is bringing Windows 11’s slimmed-down updates to Windows 10, shedding megabytes for quicker upgrades

Windows 10's latest update brings smaller downloads, quicker installs, and improved features, closing the efficiency gap with Windows 11.

Apple is forging a path towards more ethical generative AI - something sorely needed in today's AI-powered world

Amidst generative AI copyright chaos, Apple looks like it’s leading with ethical training methods, navigating legal hurdles to forge a path of compliance and innovation.

iOS 18 could be loaded with AI, as Apple reveals 8 new artificial intelligence models that run on-device

Apple announces multiple new open-source AI models. But what does this mean for iOS and Mac users?

Manor Lords already feels like it has all the ingredients of a superb strategy game - but one of its greatest tricks is a camera that brings you down to earth

Strategy games often struggle to retain the humanity of the population you lead, but Manor Lords has a clever solution.

Nintendo Switch 2 will reportedly be larger than its predecessor and feature magnetic Joy-Con controllers

A reliable source claims that the Nintendo Switch 2 will feature magnetic Joy-Con controllers.

PlayStation Portal restock tracker - the latest tips on where to check for stock

We're still tracking the PlayStation Portal restock situation for you, trying to give you the best advice on where to buy the PlayStation Portal remote play device.

Fallout 4 free update bugged on PS5, with PS Plus Extra subscribers unable to access the new version – but a fix is coming

PS Plus Extra subscribers haven't been able to access Fallout 4's free update, but Bethesda is aware of the issue.

Meet Your Experts

Between them, the TechRadar team have 300 years' experience in tech journalism. Here's why you should trust them.

Hackers attempt to hijack a major WordPress plugin that could allow for site takeovers

WP-Automatic was found to be vulnerable to a critical flaw that can be used to take over sites and steal data.

Security and interoperability on the cards for US government use of Zoom, Slack and Teams

Federal agencies need to kick their collaboration into gear following a series of recent security breaches.

iKier K1 Pro Max 48W review

Versatile and powerful laser cutter with time-saving autofocus

US health giant Kaiser hit by data breach — millions of customers informed they could be at risk

Kaiser shared sensitive data with third parties by mistake and is now informing all affected users.
