Microsoft has always been big on computer vision, and now the company is partnering with some of the biggest names in imaging.

On the first day of its Build 2018 conference, Microsoft announced it is partnering up with DJI to develop commercial drone solutions. Before you start imagining a dystopic world of airborne surveillance, the two companies plan to develop drones for the agriculture, construction, and public safety industries.

Together with DJI, Microsoft hopes to build life-changing solutions, such as applications that can help farmers produce more crops.

Microsoft is also partnering with Qualcomm in a joint effort to create a vision AI dev kit (pictured in the lead image) running Azure Internet of Things (IoT) Edge. The companies hope to create a camera-based IoT solution that can power advanced Azure services like machine learning and cognitive services – which we’ll get into shortly.

Qualcomm isn’t just providing the silicon in this team-up; the company’s Vision Intelligence Platform and AI Engine will also help accelerate the hardware inside the vision AI developer camera kit. Azure services will be a big component of the developer platform, powering the machine learning, stream analytics and cognitive services – all of which can be downloaded from the cloud to run locally on the edge (aka non-connected AI).

Team-ups aside, Microsoft is also bringing Project Kinect back, but this time for Azure. The repurposed device still contains the same unmatched time-of-flight depth camera and onboard computing power as previous editions, but now it has been reprogrammed for AI on the edge.

Azure meets AI

Azure, Microsoft’s cloud computing service, has always been tangentially related to the company’s burgeoning AI ambitions, but that all changes today. Along with the partnerships, Microsoft let loose a flurry of announcements that Azure will power multiple new projects in artificial intelligence.

First up, Microsoft is opening up the Azure Internet of Things Edge Runtime, which will allow developers to modify and customize applications at the edge.

That might not seem like it will matter much to you now, but there could be big implications in the future. Microsoft expects that there will be 20 billion connected IoT devices by 2020, and the company wants to help get them online to share information in its greater Intelligent Cloud.

The Azure IoT Edge Runtime also serves as a sort of backbone and platform on which all of Azure’s new AI-driven applications will be built.

To this end, Microsoft also announced the first Azure Cognitive Service available for the edge. With this, developers will be able to more readily build applications that use powerful AI algorithms to interpret, listen, speak and see on edge devices. Azure Cognitive Services itself is also seeing an update with a unified Speech service that makes it easier for developers to add speech recognition, text-to-speech, customized voice models and translation to their applications.

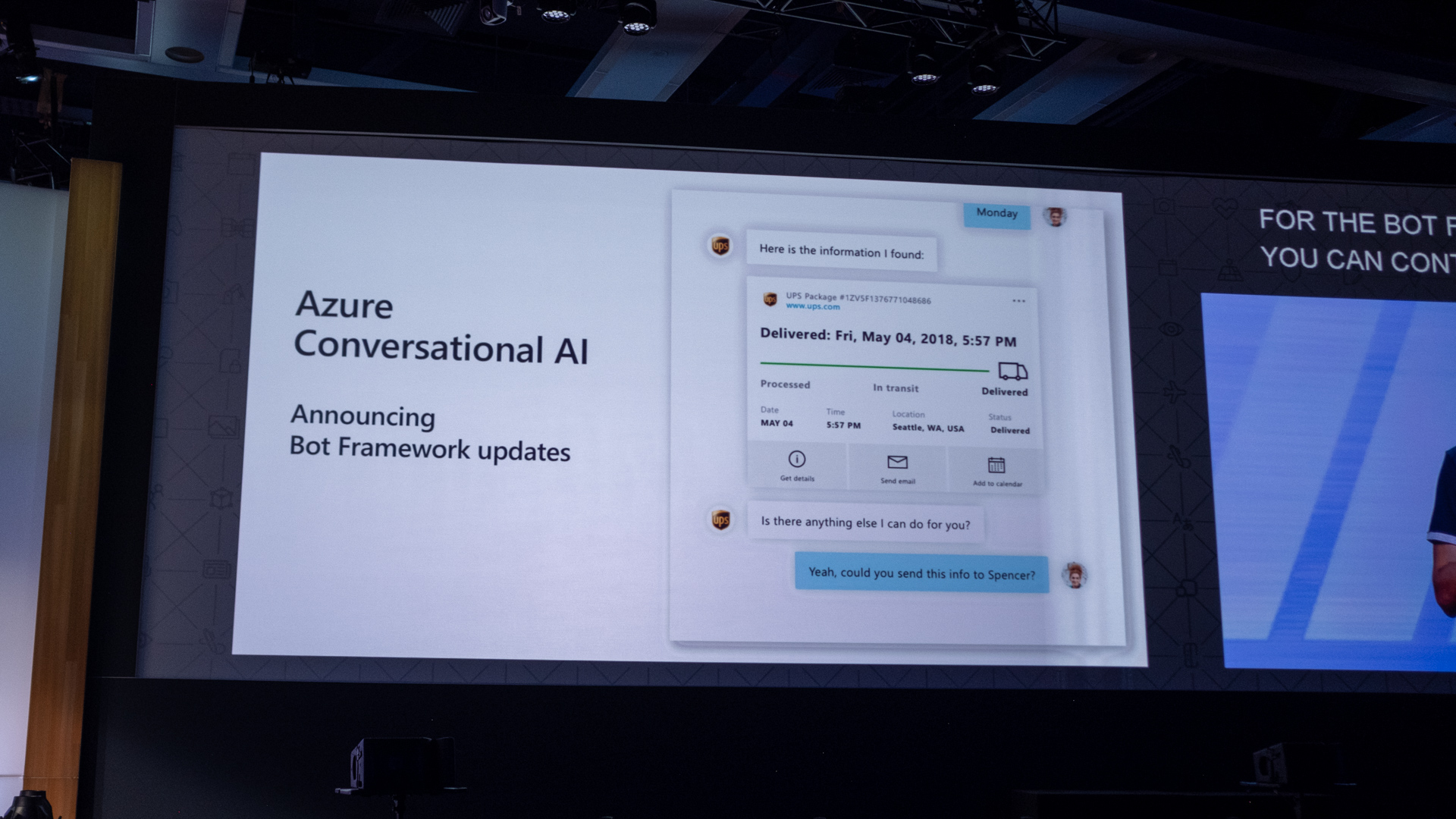

Microsoft has also updated its Bot Framework, which, when combined with its new Cognitive Services updates, will enhance the next generation of conversational bots. The software company expects its future bots will deliver richer dialogs, plus full personality and voice customization for developers.

Last but not least, Microsoft previewed Project Brainwave, an architecture for deep neural net processing that will theoretically make Azure the fastest cloud to run real-time AI today.

Kevin Lee is a former computing reporter at TechRadar. Kevin is now the SEO Updates Editor at IGN, based in New York. He handles all of the best-of tech buying guides while also dipping his hand in the entertainment and games evergreen content. Kevin has over eight years of experience in tech and games publications, with previous bylines at Polygon, PC World, and more. Outside of work, Kevin is a major movie buff of cult and bad films. He also regularly plays flight and space sims and racing games. IRL he's a fan of archery, axe throwing, and board games.
