What iPhone 12 details can we learn from iOS 14?

Deciphering the clues in Apple's new iOS

We got a first look at iOS 14 at the WWDC keynote. This next version of Apple’s iPhone operating system will make its debut when the iPhone 12 handsets are officially announced later this year.

Apple knows what it's doing – a quick peek at iOS 14 was never suddenly going to tell us everything we want to know about the iPhone 12. But a few of the new features do point to some hardware advances that have been rumored, and perhaps the odd change that hasn't.

We’ve dug through the words of Tim Cook, Craig Federighi and others to see what iOS 14 really means for the next set of iPhones – and despite the rolling waves of catastrophe 2020 has brought, we still expect to see those phones around September this year.

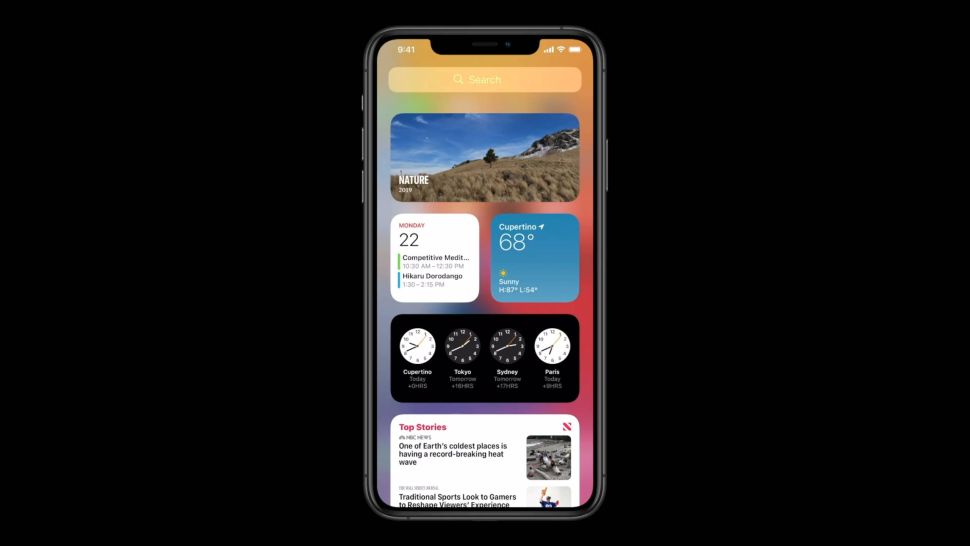

Fresh widgets mean bigger screens?

During the WWDC 2020 keynote, Craig Federighi sold us the new complexity of iOS 14 as simplicity.

Siri will no longer take up the whole screen, and will instead conjure contextual overlays depending on what you ask for. App Clips are mini applets that pop up on part of the screen when you scan a code or pass your phone over an NFC tag. And, most important of all, multi-size widgets can now sit on any home screen.

iPhone widgets are now much more like Android ones – but fewer Android fans seem to like them now than they did in 2012. Why? Few are as useful as the simple app icons that could use that same space.

Asking more of the screen space supports the rumors that Apple will increase the screen size of its larger iPhone 12 models – let’s call them the iPhone 12 Pro and iPhone 12 Pro Max.

As the current iPhone 11 models still have fairly significant bezels around the screen, we expect the display on the new handsets to push further into the sides of the phone.

Apple may also choose to bump the iPhone 12 Pro Max up to display five columns of app icons, which in turn will give the phone more room for widgets without making you get rid of almost all of your app icons.

Right now, all iPhones from the iPhone SE up to the iPhone 11 Pro Max use four app columns. That may start to look regressive on a phone with an even larger display, especially once iOS 14 lands and can make greater use of the space.

Time for better mics?

Two new features announced at WWDC, one for iOS 14 and the other for the AirPods Pro, suggest that the iPhone 12 series may have better, or at least different, microphones than the iPhone 11.

Current iPhones use high-quality MEMS microphones. There’s a front-facing one by the earpiece, another on the bottom edge, and a third in the camera housing.

Conversation translation was one of the big new iOS 14 features Apple revealed. It includes a landscape mode that can translate conversations live, laying the app out to give each speaker one half of the screen. In theory this is nothing the iPhone 11 couldn't do now, as the above-screen and bottom microphones can distinguish between people speaking on either side of the phone’s display.

However, that announcement, in tandem with the news that the AirPods Pro will soon support spatial audio, which is surround sound via psychoacoustic processing, suggests that Apple may alter the mic array on the iPhone 12.

We’re more excited to listen to a Netflix movie using earphones with the spatial information of a great surround system. But we’d be surprised if this isn't added to the iPhone’s camera video capture at some point.

Apple made the first steps towards this in 2019 with a feature called Audio Zoom. This is an application of beamforming. The phone uses multiple mics to detect the origin of, say, a person’s voice and block out all other sound – a similar feature was used way back in 2013 in the LG G2.
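For the curious, the beamforming idea above can be sketched in a few lines of Python. This is a textbook "delay-and-sum" toy, not Apple's Audio Zoom implementation, which has not been published. The two-mic layout, the 1 kHz test tone, the 24-sample delay and every function name here are assumptions made purely for illustration.

```python
import math

SAMPLE_RATE = 48_000  # assumed sample rate in Hz
# Hypothetical test signal: 10 ms of a 1 kHz tone.
tone = [math.sin(2 * math.pi * 1000 * n / SAMPLE_RATE) for n in range(480)]


def simulate(arrival_delays):
    """Each mic hears the same tone, shifted by its arrival delay in samples."""
    pad = max(arrival_delays)
    return [[0.0] * d + tone + [0.0] * (pad - d) for d in arrival_delays]


def delay_and_sum(channels, steering_delays):
    """Shift each channel by its steering delay, then average the channels."""
    length = min(len(ch) - d for ch, d in zip(channels, steering_delays))
    return [
        sum(ch[i + d] for ch, d in zip(channels, steering_delays)) / len(channels)
        for i in range(length)
    ]


def rms(samples):
    """Root-mean-square level of a list of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


# Steer towards a source whose sound reaches mic 1 24 samples after mic 0.
steering = [0, 24]

# A voice from the steered direction lines up after shifting and is kept.
on_axis = delay_and_sum(simulate([0, 24]), steering)

# A sound reaching both mics at once is pushed out of phase and cancels.
# (24 samples is half a period of the 1 kHz tone at 48 kHz.)
off_axis = delay_and_sum(simulate([0, 0]), steering)

print(f"on-axis level:  {rms(on_axis):.3f}")
print(f"off-axis level: {rms(off_axis):.3f}")
```

The numbers are chosen so the off-axis tone cancels completely. Real-world audio is far messier, which is why mic placement and quality matter so much for features like this.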

However, surround sound in video is potentially more exciting. And this is a feature we’ll see in many more areas outside of the iPhone over the next 12 months. The Microsoft Xbox Series X and Sony PlayStation 5 both have spatial audio, which will, with any luck, let you hear convincing surround sound through a normal pair of headphones.

AR is still a big deal

Apple continues to focus on how its iPhones interact with the world around us, and App Clips are the latest nod to this.

These are app fragments that will load when you scan a specific QR code, tap into an NFC point, or scan one of Apple’s new App Clip codes, which are Apple’s own version of a QR code. You’ll likely see NFC points sprout up all over the place by the end of the year.

This is not quite AR (augmented reality), but it is perhaps a cousin.

We think this supports the popular rumor that some of the iPhone 12 series phones will have a lidar sensor, just like the iPad Pro 2020. Apple has much less of a stop-start relationship with AR and its tangentially linked technologies than Google’s Pixel division does.

Lidar stands for 'light detection and ranging', and is a little like the ToF (time-of-flight) technology used in many higher-end Android phones, including the Samsung Galaxy S20 Ultra, but more accurate.

The sensor sends out infrared light and measures how long it takes to bounce back, then uses that time to calculate the distance between the camera and the objects in front of it.
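The arithmetic behind that time-of-flight principle is simple enough to sketch in Python. This is a minimal illustration of the physics only, not a model of any particular sensor. The function names are made up for the example, and real hardware has to deal with noise, ambient light and calibration.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the metre


def distance_from_round_trip(round_trip_seconds: float) -> float:
    """Distance to an object in metres, given a light pulse's round-trip time."""
    # The pulse travels out and back, so the object sits at half the path.
    return SPEED_OF_LIGHT_M_PER_S * round_trip_seconds / 2


def round_trip_for_distance(distance_m: float) -> float:
    """Round-trip time in seconds for an object at the given distance."""
    return 2 * distance_m / SPEED_OF_LIGHT_M_PER_S


# A pulse that returns after 10 nanoseconds hit something about 1.5 m away.
print(f"{distance_from_round_trip(10e-9):.3f} m")

# Resolving 1 cm of depth means timing differences of well under a nanosecond.
print(f"{round_trip_for_distance(0.01) * 1e12:.1f} ps")
```

The second figure shows why accuracy is hard to come by: tiny timing errors translate directly into depth errors.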

This should also have knock-on effects for the iPhone 12’s background blur portrait-mode effects, making the phone's depth maps far more precise than those of the iPhone 11 range, and perhaps better than those of any phone you can currently buy.

Social focus suggests no change in hardware direction

Most of the iOS 14 features we’ve seen so far are interface complications Apple now thinks it can fit comfortably into iOS. And some, like advanced widgets and picture-in-picture video, have been available on Android phones for approximately forever.

We’re yet to hear of any groundbreaking new APIs that suggest a major shift in how Apple makes iPhones, for the next crop at least.

So far iOS 14 has offered no information on whether the iPhone 12 phones will have 5G, whether Apple has a folding iPhone almost ready for the spotlight, or what new camera tech might arrive beyond lidar.

However, we'll hear plenty more before the phones’ expected reveal later this year – and Apple always tends to keep a secret or two held back for the big day.

Andrew is a freelance journalist and has been writing and editing for some of the UK's top tech and lifestyle publications including TrustedReviews, Stuff, T3, TechRadar, Lifehacker and others.