How it works: the complete guide to HD

Next time you slip a DVD into your player and relax in front of your new 1080p HDTV to watch a crystal clear movie, take a moment to consider the digital video manipulation that's going on.

After all, your DVD is encoded in one resolution and your HDTV is another, and not an exact multiple at that. How does it display a clear video with minimal image artifacts?

Television in the old days seemed pretty simple: it was analogue, it existed in the air around us and it used cathode ray tubes (CRTs). That was about it really, from the viewer's point of view.

Behind it all though, there was some pretty clever stuff going on – for the time. First, the framerate was geared to the frequency of the AC power that fed the television.

In the UK and the rest of Europe, which use the PAL encoding system, the AC frequency is 50Hz, and so the framerate was set at 25Hz – enough to fool the eye into interpreting a rapid succession of frames as continuous motion. (The SECAM system is similar to PAL, at least as far as this article is concerned.)

In the US, which uses the NTSC system (people joked that NTSC stood for 'never twice the same colour'), the AC frequency is 60Hz and so the framerate was set to 30Hz (29.97Hz to be precise).

That's oversimplifying the situation a little. A television CRT displays the signal as a series of lines from left to right – a process known as scanning – jumping back to the left before scanning the next line. Scanning proceeds not by processing the lines in order, but by skipping lines.

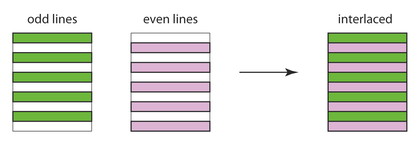

The CRT scans all the even lines, jumps back to the top left, and then scans the odd lines, as shown in the image. This is known as interlacing.

The display of all the even lines (or all the odd lines) is known as a field, with two fields per frame. This means that the field display rate is equal to the AC frequency (50Hz in Europe and 60Hz in the US).
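As a rough illustration of the idea, the sketch below splits a frame into its two fields and derives the field rate from the frame rate. The function name `split_into_fields` and the choice to number lines from zero are assumptions made for the example, not part of any broadcast standard.

```python
def split_into_fields(frame):
    """Split a frame (a list of scan lines) into its even and odd fields."""
    even_field = frame[0::2]  # lines 0, 2, 4, ... (zero-based numbering is an assumption)
    odd_field = frame[1::2]   # lines 1, 3, 5, ...
    return even_field, odd_field


frame = [f"line {n}" for n in range(6)]
even_field, odd_field = split_into_fields(frame)
print(even_field)  # ['line 0', 'line 2', 'line 4']
print(odd_field)   # ['line 1', 'line 3', 'line 5']

# Two fields make up each frame, so the field rate is twice the frame rate.
field_rates = {system: frame_rate * 2 for system, frame_rate in (("PAL", 25), ("NTSC", 30))}
print(field_rates)  # {'PAL': 50, 'NTSC': 60}
```

The NTSC figure here uses the nominal 30Hz framerate rather than the precise 29.97Hz.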

For the PAL system, there are 625 lines per frame (only 576 lines are visible, the rest carry other information), and for NTSC there are 525 (of which 486 are visible).

Interlaced video reduces the bandwidth of the television signal by half, and helps smooth out motion (at least on an interlaced TV).

Taking PAL as the example, the odd field is displayed in 1/50 of a second, and remains on the screen (the phosphors on the screen maintain the image for another 1/50 of a second as they decay) as the even field is displayed. This in turn remains on the screen for the 1/50 of a second that it takes for the next odd field to be displayed, and so on.

The fields are taken 1/50 of a second apart. In other words, a single frame isn't broken apart into odd and even fields; the fields are shot separately.

Converting framerates

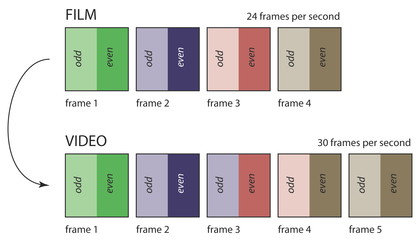

Since film is shot at 24 frames per second, it must be converted to another framerate for viewing on TV – a process called telecine.

For Europe, the solution was to speed up the film by about 4 per cent, from 24 to 25 frames per second, and convert the frames of the film into fields (usually by duplicating frames). The audio had to be corrected to ensure it wasn't too high pitched. This meant films ran quicker on television (a 90-minute film is 129,600 frames, which would take 86.4 minutes to show on TV), but this wasn't noticeable.
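The running time follows directly from the frame count, and a short sketch makes the arithmetic easy to check. The names `FILM_FPS`, `PAL_FPS` and `pal_running_time_minutes` are invented for this example.

```python
FILM_FPS = 24  # film framerate, frames per second
PAL_FPS = 25   # PAL framerate, frames per second


def pal_running_time_minutes(film_minutes):
    """Running time of a film when its frames are played back at the PAL rate."""
    total_frames = film_minutes * 60 * FILM_FPS
    return total_frames / PAL_FPS / 60


speedup_percent = (PAL_FPS / FILM_FPS - 1) * 100

print(90 * 60 * FILM_FPS)            # 129600 frames in a 90-minute film
print(pal_running_time_minutes(90))  # 86.4
print(f"{speedup_percent:.2f}")      # 4.17
```

The exact speed-up is 25/24, a little over the 4 per cent usually quoted.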

For the US, the problem was more acute: the framerate had to be increased from 24 to 30 frames per second. One simple way would be to duplicate one frame in four. However, this would produce jerky motion and was therefore never used.

In fact, the US telecine process (known as 3:2 pulldown) works by adding extra duplicate fields. In essence, four frames (or eight fields) were converted to five frames (10 fields) by duplicating two of the fields. This ensured much smoother motion in the video. The image below shows the process.

Each of the fields for each frame is shown in different tints of the same colour, with the earlier field as the lighter hue. As you can see, frame three of the video is formed from the earlier field of film frame two, coupled with the later field of film frame three; frame four is formed from the earlier field of the film frame three, coupled with the later field of film frame four.
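The field pattern can also be written out as a minimal sketch. It assumes the conventional cadence in which video frames one, two and five each come from a single film frame; the text above only spells out frames three and four. The function name `pulldown_3_2` and the "early"/"late" labels are made up for the example.

```python
def pulldown_3_2(film_frames):
    """Turn each group of four film frames into five interlaced video frames.

    Each video frame is a pair (earlier field, later field).
    Any leftover frames that do not fill a group of four are ignored.
    """
    video_frames = []
    for i in range(0, len(film_frames) - 3, 4):
        a, b, c, d = film_frames[i:i + 4]
        video_frames += [
            (f"{a} early", f"{a} late"),  # video frame 1: film frame 1 only
            (f"{b} early", f"{b} late"),  # video frame 2: film frame 2 only
            (f"{b} early", f"{c} late"),  # video frame 3: mixes film frames 2 and 3
            (f"{c} early", f"{d} late"),  # video frame 4: mixes film frames 3 and 4
            (f"{d} early", f"{d} late"),  # video frame 5: film frame 4 only
        ]
    return video_frames


video = pulldown_3_2(["F1", "F2", "F3", "F4"])
for number, fields in enumerate(video, start=1):
    print(number, fields)
```

Eight source fields become ten: in this arrangement the early field of film frame two and the late field of film frame four are each used twice.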

The computer age

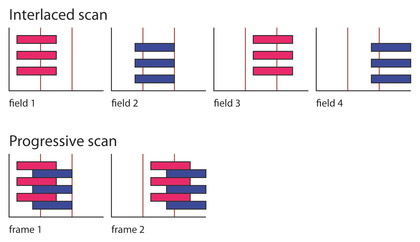

This was all good until computer monitors came along with the ability to display video, especially from DVDs. The biggest issue with computer monitors is that they don't use an interlaced scan system – they use progressive scan.

The display is still made up of lines, but the monitor scans them in strict sequence to build up a complete frame, not as an odd field followed by an even field. A PC monitor is also pixel-based – the signal displayed is digital.

The main issue then with displaying video on a computer monitor is that the signal, which is interlaced, needs to be converted to a progressive scan. This is called deinterlacing.

Each frame must be recreated from two fields, one odd and one even. Since the two fields in a pair are captured at slightly different times (1/50th of a second apart), simply combining them into a single frame would turn the motion of any object being photographed into visible defects.

These defects are commonly known as combing, because the scans from odd and even fields wouldn't match up and would be visible as short lines. The image below shows the effect with a box moving from right to left.

The top part shows the interlaced version playing on an interlaced scan device; the bottom part shows that simple deinterlacing and playing on a progressive scan device produces a comb-like pattern.
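The combing effect is easy to reproduce with a toy example. The sketch below draws a box as text, captures two fields 1/50 of a second apart while the box moves from right to left, and then naively weaves the fields into one progressive frame. The dimensions, the helper names `render` and `weave`, and the choice of the even field as the earlier one are all assumptions for illustration.

```python
WIDTH, HEIGHT, BOX = 12, 6, 4  # toy picture size and box width, in characters


def render(box_left):
    """Draw a full frame with a solid box starting at column box_left."""
    row = "." * box_left + "#" * BOX + "." * (WIDTH - box_left - BOX)
    return [row] * HEIGHT


def weave(even_source, odd_source):
    """Naive deinterlace: even lines from one capture, odd lines from the other."""
    return [even_source[y] if y % 2 == 0 else odd_source[y] for y in range(HEIGHT)]


earlier = render(box_left=6)  # scene when the even field is captured
later = render(box_left=4)    # scene one field later: the box has moved left

woven = weave(earlier, later)
for line in woven:
    print(line)
```

Alternate lines show the box in two different positions, which is the comb-like pattern described above.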
