What are teraflops?

Teraflops explained

With all the speculation over the power of the PS5 and Xbox Series X, you may find yourself wondering: what are teraflops?

It's a word you've probably seen bandied about recently, and we're here to explain everything.

Teraflops are one of the stranger-sounding pieces of computing jargon. They may sound like early-level enemies in some '90s Jurassic-themed platform game, but they actually represent something far more powerful, and they're a factor when discussing graphical power and performance on a gaming platform or GPU.

Teraflops don’t tell the whole story

So teraflops look like a convenient, all-encompassing measurement of graphical power on a games console or GPU. But as is often the case with computing, the reality isn’t quite that simple, and you probably shouldn’t use tflops as the ultimate barometer when researching your next GPU or console.

First, let’s begin with other factors on the GPU itself. Probably the most important hardware consideration that’s not included in the teraflops calculation is memory bandwidth - or the VRAM speed, as measured in gigabytes per second (GB/s).
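To put a number on memory bandwidth: peak VRAM bandwidth is commonly estimated as the bus width in bytes multiplied by the per-pin data rate. Here's a minimal Python sketch of that arithmetic. The function name and the example figures (256-bit and 384-bit buses with 14 Gbps memory) are illustrative assumptions, not the specs of any card or console discussed here.

```python
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Estimate peak VRAM bandwidth in GB/s.

    bus_width_bits: width of the memory bus in bits (hypothetical input).
    data_rate_gbps: effective data rate per pin, in gigabits per second.
    """
    bytes_per_transfer = bus_width_bits / 8  # convert bits to bytes
    return bytes_per_transfer * data_rate_gbps


# Illustrative figures only; they are not taken from the article.
narrow_bus = memory_bandwidth_gbs(256, 14.0)
wide_bus = memory_bandwidth_gbs(384, 14.0)

print(f"256-bit bus at 14 Gbps: {narrow_bus:.0f} GB/s")
print(f"384-bit bus at 14 Gbps: {wide_bus:.0f} GB/s")
```

Notice that nothing in this sum involves shader counts or GPU clock speeds, which is why a tflops figure on its own can't tell you anything about it.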

Then there’s the size of the memory cache on your GPU, the GPU architecture, and even the very software drivers that translate your GPU’s power into actual gameplay.

With all these things considered, comparing the tflops of, say, an AMD GPU and an Nvidia GPU, or a PS5 and an Xbox Series X, tells you less than you’d hope, because the software and hardware architectures are so different.

With all these factors in play, the real-world performance of a GPU can deviate considerably from what you’d expect its tflops to deliver. There are plenty of instances of GPUs with fewer tflops running certain games at higher frame rates than GPUs with more tflops.
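One simplified way to see how this can happen is a roofline-style estimate, where throughput is capped either by the chip's peak tflops or by how quickly memory can feed it, whichever is lower. The Python sketch below is a rough model under stated assumptions, not a benchmark: both GPUs are made up, and so is the workload's figure of 16 floating-point operations per byte of memory traffic.

```python
def attainable_tflops(peak_tflops: float, bandwidth_gbs: float,
                      flops_per_byte: float) -> float:
    """Roofline-style upper bound on sustained throughput, in tflops."""
    # GB/s multiplied by flops/byte gives gigaflops; divide by 1000 for tflops.
    memory_bound_tflops = bandwidth_gbs * flops_per_byte / 1000.0
    return min(peak_tflops, memory_bound_tflops)


# Hypothetical GPUs: A has more tflops, B has more memory bandwidth.
gpu_a = {"peak_tflops": 12.0, "bandwidth_gbs": 448.0}
gpu_b = {"peak_tflops": 9.0, "bandwidth_gbs": 672.0}

# Assumed workload intensity, chosen purely for illustration.
WORKLOAD_FLOPS_PER_BYTE = 16.0

result_a = attainable_tflops(**gpu_a, flops_per_byte=WORKLOAD_FLOPS_PER_BYTE)
result_b = attainable_tflops(**gpu_b, flops_per_byte=WORKLOAD_FLOPS_PER_BYTE)

print(f"GPU A (12 tflops on paper): {result_a:.3f} tflops attainable")
print(f"GPU B (9 tflops on paper):  {result_b:.3f} tflops attainable")
```

In this made-up case the 9-tflop GPU comes out ahead, because the 12-tflop one is left waiting on memory. Real games are far messier than a single flops-per-byte figure, so treat this as an intuition pump rather than a prediction.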

The optimisations of an individual game and even the CPU will also affect a game’s graphical performance. If, for example, there are more characters on-screen and more AI calculations to make - in games like Grand Theft Auto V or Assassin’s Creed - this will be a job for the CPU, which can actually affect that precious frame rate.

So, do teraflops matter?

Yes, they do, but not as much as the Microsoft marketing machine would have you think. This foregrounding of flops as some kind of supreme measure of graphical performance is a relatively recent phenomenon, perhaps because it gives consumers a small, simple number that’s easy to latch onto.

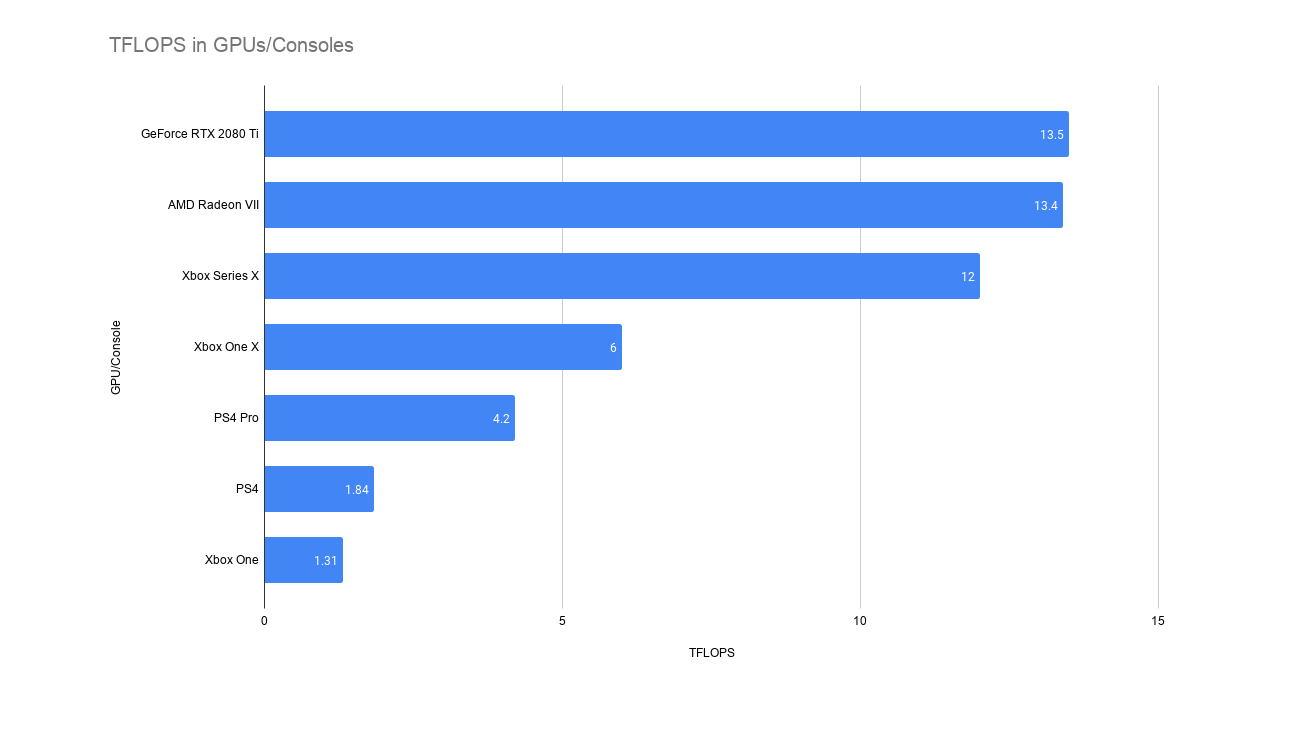

Remember in the 1990s when all that fuss was made about ‘bits’ when the 64-bit N64 came out, and how your young brain assumed this must be twice as good as the 32-bit PS1? While flops are a more tangible measure of performance than those N64 bits, it’s still far from certain that the 12-tflop Xbox Series X will be twice as powerful as the 6-tflop Xbox One X.

It may be more, it may be less, depending on many other factors.
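For the curious, headline tflops figures like these are usually derived as shader cores × clock speed × operations per core per clock (conventionally two, since a fused multiply-add counts as two operations). The Python sketch below runs that sum. The compute-unit counts and clock speeds are publicly quoted specifications that don't appear in this article, so treat them as assumptions, as is the figure of 64 shaders per compute unit.

```python
def peak_tflops(compute_units: int, clock_ghz: float,
                shaders_per_cu: int = 64, flops_per_clock: int = 2) -> float:
    """Theoretical peak throughput in tflops.

    shaders_per_cu and flops_per_clock are assumed defaults
    (64 shaders per compute unit, one fused multiply-add per clock).
    """
    shaders = compute_units * shaders_per_cu
    # shaders * GHz * flops per clock gives gigaflops; divide by 1000.
    return shaders * clock_ghz * flops_per_clock / 1000.0


# Publicly quoted specs, not from the article: treat as assumptions.
series_x = peak_tflops(compute_units=52, clock_ghz=1.825)
one_x = peak_tflops(compute_units=40, clock_ghz=1.172)
ratio = series_x / one_x

print(f"Xbox Series X: {series_x:.2f} tflops")
print(f"Xbox One X:    {one_x:.2f} tflops")
print(f"Ratio on paper: {ratio:.2f}x")
```

On paper the ratio comes out at roughly two to one, but that's a ratio of theoretical peaks and nothing more.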

Yes, the 12 tflops on the Xbox Series X sound impressive, but for now they’re just theoretical, and actual gaming performance will depend on other aspects of the GPU architecture, and on how well the console’s software, drivers and APIs take advantage of that power.

So our advice: don’t let yourself be blown away by theoretical numbers!

Robert Zak is a freelance writer for Official Xbox Magazine, PC Gamer, TechRadar and more. He writes in print and digital publishing, specialising in video games. He has previous experience as editor and writer for tech sites/publications including AndroidPIT and ComputerActive! Magazine.