ChatGPT users are flocking to Claude then realizing they can’t use it the same way — ‘For new users, it’s a shock’

Switched to Claude? Prepare for a shock

Huge numbers of people are canceling their ChatGPT subscriptions and switching to Claude. This exodus began after OpenAI announced a deal with the Pentagon. But Anthropic, the company behind Claude, made it clear it wouldn’t be doing the same, maintaining its restrictions on using AI for mass surveillance of the American people and autonomous weapons.

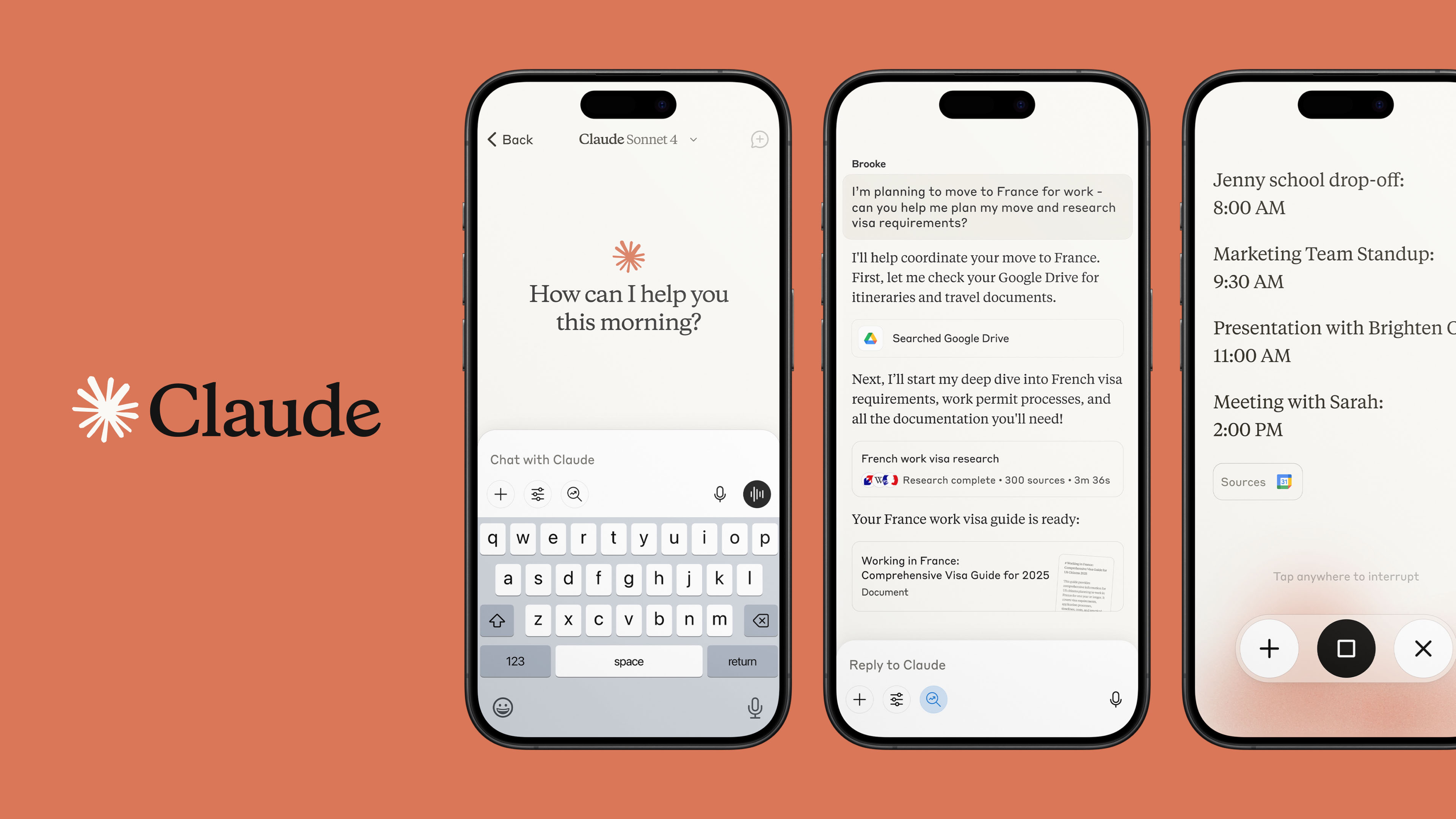

We don’t know exactly how many people have made the switch, but Claude overtook ChatGPT in app downloads on the Apple App Store.

As people move over, some without properly testing whether Claude is right for them first, many are discovering significant differences between Claude and ChatGPT. The interface looks different. Claude is less of a "yes man". And it has usage limits that have genuinely surprised some new arrivals. Unlike with ChatGPT, you can't have a seemingly endless conversation with Claude, even on a paid plan.

Understanding Claude’s usage limits

For anyone arriving from ChatGPT, the usage limits can feel like a slap in the face. "For new users, it's a shock," says Kyle Balmer, an AI educator who has been helping his audience make sense of Claude and the recent exodus. "They're used to the practically unlimited usage that ChatGPT provides, which is what's led to a wave of outrage over the past few days as new users realize how stingy Claude can be — especially on its highest model, Opus."

Like ChatGPT, Claude offers several models, called Haiku, Sonnet, and Opus, ranging from quick and lightweight to slow and powerful. The trouble is, the most capable one burns through your allowance fast.

The free plan is tight, but that's true of most AI tools. The real surprise is that the $20 paid plan isn't much more generous. "Just a few exchanges with Opus will deplete your usage for the day," Balmer says. "Whilst Opus is technically available on the $20 plan, realistically Sonnet is the only model most people can actually use."

How many exchanges can you have before you hit the wall? Exact numbers are hard to pin down, because Anthropic doesn't publish them, and the limits shift depending on which model you're using and how long your messages are. But some users report running out after as few as ten to fifteen substantial exchanges on Opus on the free plan, sometimes fewer.

Long-time Claude users have mostly adapted, either by upgrading or learning to pace themselves. The next tier up is the Max plan at $100 a month, and here's where it gets interesting. "These Max plans are very generous with usage," Balmer says. "In fact you get far more usage than you would paying via the API. Anthropic is potentially making a loss on them. They are, if you have the funds, one of the best deals in AI right now."

But "if you have the funds" is doing a lot of heavy lifting. For most people, $100 a month is simply not an option.

So why is Claude built this way? The limits aren't an accident or an oversight. Instead, they reflect a fundamentally different business model. "Claude has always been focused on coders and the business market," Balmer says. "Anthropic aims at a small number of higher-paying customers, whilst OpenAI has gone wide, with an expectation to hit one billion users." Claude was never really designed for the mass market. You could say that the wave of new arrivals is a surprise to everyone, including Anthropic.

Maybe we need more limits?

Bear with me, but maybe the usage limit is going to be a good thing? And I say that as someone who likes to get their money’s worth, but is also very clued into how people become way too dependent on technology. I even wrote a whole book about that exact topic called Screen Time.

Sure, a usage limit won’t feel good when you're mid-project and Claude suddenly tells you that you've hit your limit for the day. That's genuinely frustrating. But step back from that moment of irritation, and there's a case to be made that a stopping point isn't the worst thing.

We already know that tech without limits tends to be bad for us. The European Commission recently found TikTok in breach of the Digital Services Act for what it described as addictive design — and one of the biggest culprits was its infinite scroll. The mechanism is different with Claude, but the principle looks really similar to me. Frictionless, endless access might encourage mindless consumption. A hard stop forces a pause.

That pause might matter more than it sounds. I've written before about the concept of smoothout — a term coined by journalist and author Ellen Scott to describe a specific kind of burnout that comes from over-relying on AI. The idea is that our brains actually need friction and challenge to stay engaged and healthy. When we outsource too much thinking, we don't just get lazier, we feel flatter. Less motivated, less fulfilled, less mentally well.

Scott's advice is: think twice before reaching for AI, especially for the parts of your work that actually matter to you. Claude's limit might, accidentally, be doing some of that work for you.

Because, to be clear, Anthropic didn't introduce usage limits for your well-being. This is a cost and business decision, full stop. But sometimes the side effects of a commercial choice turn out to be unexpectedly good for us.

There's also the question of emotional dependence. Over the past year I've reported extensively on people forming genuine emotional attachments to AI tools, particularly ChatGPT, in ways that concern psychologists and researchers. The always-on, always-available nature of these tools is part of what makes that dependence so easy to slip into. A usage limit is an interruption to that pattern, a prompt to ask yourself: do I actually need this right now?

Will people stick with Claude?

Will people actually stick with Claude once they realize how quickly the limits bite? Balmer isn't sure. "I think some people will likely just bounce back to the old habit of using ChatGPT," he tells me. He's quick to add, though, that despite his reservations he still thinks Claude is the best option for most people right now.

He also reminds us that this isn't a simple good-guys-versus-bad-guys story. "They are still a corporation run and owned by billionaires," he says of Anthropic. "Let's not get too excited supporting them."

The wave of people switching to Claude has carried a certain moral charge, a sense of voting with your feet for the more ethical option. That probably deserves some skepticism.

But whether or not Anthropic turns out to be the hero of this story, the question its usage limits accidentally raise is still a good one. How much AI do you actually need? When did you last sit with a problem before outsourcing it? Yes, the limit is just a business decision, but what you do with that much-needed pause is up to you.

Becca is a contributor to TechRadar, a freelance journalist and author. She’s been writing about consumer tech and popular science for more than ten years, covering all kinds of topics, including why robots have eyes and whether we’ll experience the overview effect one day. She’s particularly interested in VR/AR, wearables, digital health, space tech and chatting to experts and academics about the future. She’s contributed to TechRadar, T3, Wired, New Scientist, The Guardian, Inverse and many more. Her first book, Screen Time, came out in January 2021 with Bonnier Books. She loves science-fiction, brutalist architecture, and spending too much time floating through space in virtual reality.