High performance computing will be free by 2020

Will the success of Moore's Law be too much of a good thing for chip makers?

By 2020, high performance computing will be free. Or at least, very nearly free. That high-end gaming rig you desire? Pennies on the pound.

So says no less an authority on the matter than Intel. Well, those aren't Intel's exact words.

To understand what I'm getting at, let's have a think about Moore's law. This, of course, is the notion that transistor density in computer chips doubles every two years.

Moore, Moore, Moore

It was first mooted by Intel co-founder Gordon Moore back in 1965 and it has proven astonishingly prescient. And if there's one thing you can rely on from Intel at its IDF tech fest, it's confirmation that Moore's law is still a goer.

This year's IDF was no exception, with Intel declaring Moore's Law good for another 10 years.

Intel's current tech maxes out at 22nm for chips you can actually buy. But the master of all things silicon reckons it's well on the way to working out the secrets of achieving the 5nm node. If all goes to plan, that will happen towards the end of 2020.

If that sounds like just another incremental step in a trend measuring 50 years, think again. Already, the emphasis in terms of computing has shifted away from raw performance.

Enough is enough

That's why Intel's upcoming Haswell CPU architecture sticks with four cores for mainstream PCs. In other words, a quad-core PC processor is already good enough.

In that context, the maths of Moore's law in terms of the cost of computing becomes extremely compelling. In very simple terms, if you shrink a computer chip from today's 22nm tech down to 5nm, neither adding nor removing any transistors, you end up with something roughly six per cent the size of the original.

Yes, six per cent. Things don't always work out exactly like that in practice. But those figures give an accurate impression in terms of order of magnitude.
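For the curious, the back-of-envelope sum can be sketched in a few lines of Python. This assumes an idealised shrink in which every feature scales linearly with the node name, so area scales with the square of the ratio. Real processes don't behave that neatly, and the function name is purely illustrative. The idealised answer lands a touch over five per cent, the same ballpark as the rough figure above.

```python
def area_fraction(old_node_nm: float, new_node_nm: float) -> float:
    """Fraction of the original die area left after an idealised shrink.

    Assumption: linear dimensions scale with the node name, so area
    scales with the square of the ratio. Real chips only approximate this.
    """
    return (new_node_nm / old_node_nm) ** 2


# The article's two nodes: 22nm today, 5nm as the target.
fraction = area_fraction(22, 5)
print(f"Idealised die area after the shrink: {fraction:.1%} of the original")
```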

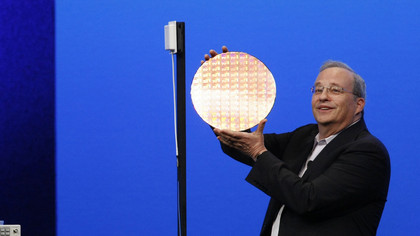

And when it comes to making chips, size matters. Again, the rough rule here is that it costs the same to crank out a silicon wafer composed of chips regardless of how many chips lie therein.

It's only wafer thin

Thus, the individual chip cost is inversely proportional to how many you can cram into a wafer. And if you're cramming in 15 times more chips, well...
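To put some shape on that, here is a minimal sketch of the wafer sums. It leans on the rough rule above that a wafer costs the same however many chips are on it. The wafer cost and the starting chip count are made-up illustrative numbers, not Intel figures, and yield losses are ignored entirely.

```python
def cost_per_chip(wafer_cost: float, chips_per_wafer: int) -> float:
    """Per-chip cost when the wafer cost is fixed (yield losses ignored)."""
    return wafer_cost / chips_per_wafer


WAFER_COST = 5000.0   # hypothetical cost per wafer, arbitrary currency units
CHIPS_TODAY = 400     # hypothetical chip count per wafer at 22nm
DENSITY_GAIN = 15     # the "15 times more chips" figure from the text

before = cost_per_chip(WAFER_COST, CHIPS_TODAY)
after = cost_per_chip(WAFER_COST, CHIPS_TODAY * DENSITY_GAIN)

print(f"Cost per chip today:        {before:.2f}")
print(f"Cost per chip after shrink: {after:.2f}")
```

Whatever numbers you plug in, the per-chip cost falls by the same factor as the chip count rises, which is the whole point.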

Of course, there's more to the cost of a computing device than just the main processing and system chip. Screens, memory, batteries, they all cost money. But it sure will help if one of the main aspects, the compute power, is as near to free as dammit.

At this stage, you might be wondering whether Intel and the rest of the microprocessor industry are engineering themselves out of existence. How are they going to survive selling chips for chump change?

Chips with everything

The answer is sheer volume. You might say chips with everything. The cost of putting meaningful compute power into an object will be so low, they'll be sticking compute into almost everything.

A quad-core Core i5 in your loaf of bread by 2025? It sounds ridiculous. It sounds pointless. But I think you'll be surprised by just how close reality comes.

Technology and cars. Increasingly the twain shall meet. Which is handy, because Jeremy is addicted to both. Long-time tech journalist, former editor of iCar magazine and incumbent car guru for T3 magazine, Jeremy reckons in-car technology is about to go thermonuclear. No, not exploding cars. That would be silly. And dangerous. But rather an explosive period of unprecedented innovation. Enjoy the ride.
