Before Cerebras, there was Amdahl: How legendary US engineer was way ahead of his time with wafer-scale integration and plotted supercomputer performance for the humble PC 43 years ago

Gene Amdahl was the architect of IBM’s System/360 mainframe family but he had far greater ambitions


Long before wafer-scale processors became associated with AI accelerators and ultra-large chips, Gene Amdahl was already trying to turn an entire silicon wafer into a single processor.

Amdahl’s reputation alone made the industry pay attention. Known as the architect of IBM’s System/360 mainframe family, he helped define enterprise computing before leaving IBM in 1970 to found Amdahl Corporation, which became the leading maker of IBM-compatible mainframes.

By the time he launched Trilogy Systems Corp. with his son Carl, he had already proven he could challenge the industry giant on its own turf. Trilogy’s executives believed their reputations were on the line, and the company was pursuing funding on a scale unusual for a startup in the early 1980s.

In its July 18, 1983 issue, InfoWorld reported Amdahl had publicly unveiled “a prototype of a new semiconductor technology that he hopes will make him once again a giant killer.” The article described Trilogy’s audacious plan: wafer-scale integration.

Wafer is the processor

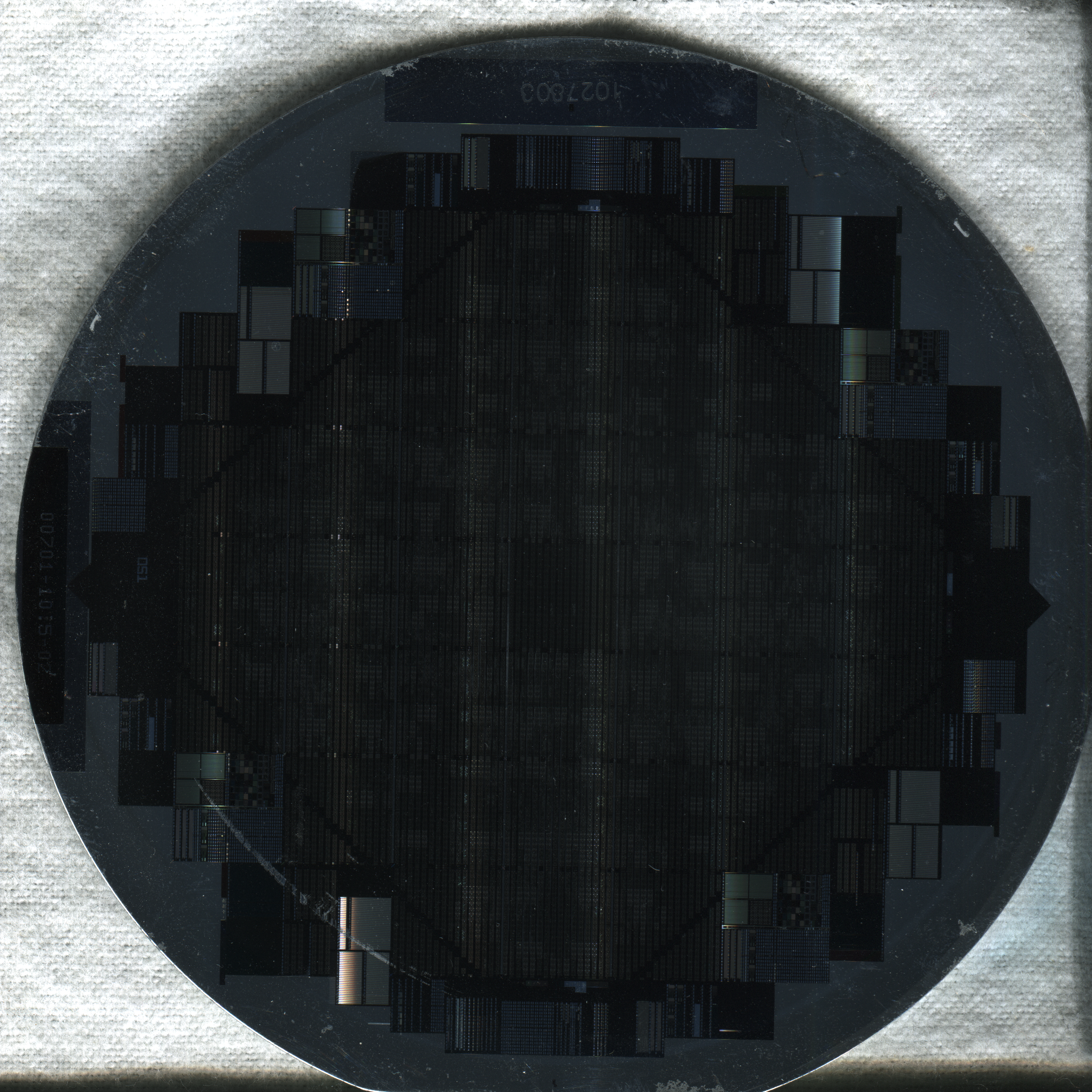

Rather than cutting wafers into hundreds of individual chips, Trilogy intended to use the wafer itself as the processor.

“Based on an as-yet-unproven technology,” InfoWorld wrote, the company was attempting to build a new generation of mainframes using wafers measuring 2½ inches square. Each “macrochip” would contain circuitry equivalent to roughly 100 conventional chips.

The performance goals were striking. Trilogy planned to build a supercomputer that would outperform the fastest IBM systems of the time while taking “only 10% of the space” and potentially undercutting IBM pricing by up to 30%. This was not an incremental improvement but a direct challenge to the design assumptions of the semiconductor industry.


At the time, chip manufacturing depended on redundancy through volume. Manufacturers etched hundreds of identical dies onto a wafer because defects were unavoidable.

The idea of producing one giant chip seemed reckless. As InfoWorld explained, silicon fabrication was so sensitive that even microscopic contamination could ruin circuitry, requiring extreme clean-room conditions.

Trilogy turned this logic upside down. Instead of accepting defects as waste, the company planned to design around them.

With a larger surface area, the chip could include extra circuits able to reconfigure themselves around damaged regions.

Amdahl called this concept “redundancy,” giving the macrochip a better chance of working even when imperfections were present.
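The yield reasoning behind that bet can be illustrated with a rough sketch. It uses the textbook Poisson yield model (the chance that a region of silicon has no defects falls off exponentially with its area) and compares a macrochip that must be flawless with one that only needs 100 working blocks out of 130. The defect density, the block counts, and the function names are illustrative assumptions, not figures from InfoWorld or Trilogy; only the 2½-inch square size comes from the article.

```python
import math


def poisson_yield(area_cm2: float, defects_per_cm2: float) -> float:
    """Probability that a region has zero defects under a Poisson model."""
    return math.exp(-area_cm2 * defects_per_cm2)


def yield_with_spares(block_yield: float, needed: int, spares: int) -> float:
    """Probability that at least `needed` of `needed + spares` blocks are good,
    assuming each block fails independently."""
    total = needed + spares
    return sum(
        math.comb(total, good)
        * block_yield**good
        * (1 - block_yield) ** (total - good)
        for good in range(needed, total + 1)
    )


# Hypothetical inputs chosen only to show the shape of the argument.
DEFECTS_PER_CM2 = 0.5            # assumed defect density
MACROCHIP_AREA_CM2 = 6.35 ** 2   # a 2.5-inch square is about 40 cm^2
BLOCKS_NEEDED = 100              # assumed number of working blocks required
SPARE_BLOCKS = 30                # assumed number of extra blocks on the wafer

# Case 1: the whole macrochip must be defect-free.
monolithic = poisson_yield(MACROCHIP_AREA_CM2, DEFECTS_PER_CM2)

# Case 2: the same area is split into blocks, and spares cover the bad ones.
block_area = MACROCHIP_AREA_CM2 / (BLOCKS_NEEDED + SPARE_BLOCKS)
block = poisson_yield(block_area, DEFECTS_PER_CM2)
redundant = yield_with_spares(block, BLOCKS_NEEDED, SPARE_BLOCKS)

print(f"Yield if the macrochip must be flawless: {monolithic:.2e}")
print(f"Yield of a single block:                 {block:.3f}")
print(f"Yield with spare blocks:                 {redundant:.4f}")
```

Under these assumed numbers a flawless macrochip is practically impossible, while the version with spare blocks works most of the time. The sketch leaves out the hard parts that Trilogy actually faced, such as the wiring needed to route around bad regions.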

The idea was ambitious technically and commercially. Amdahl claimed Trilogy would build a prototype computer using just 40 macrochips.

If successful, it would deliver about 32 million instructions per second, placing it ahead of several IBM systems then in development. In interviews cited by InfoWorld, Amdahl even suggested the system could compare favorably with the Cray-1 supercomputer.

A really personal computer

The ambition extended beyond mainframes. Analyst Bob Simko told InfoWorld that wafer-scale integration could bring chip manufacturers “a step closer to the end market,” and Amdahl hinted the technology could eventually reach desktop machines.

“The technology could be applied to a personal computer,” he said. “It would be a really personal computer!”

In 1983, that idea sounded almost absurd. The IBM PC had only recently launched, and personal computing remained modest compared with mainframe power. Yet Amdahl’s vision was clear: compress supercomputer-class performance into smaller systems through radical silicon integration.

Behind the scenes, Trilogy was building aggressively. The company planned to recruit hundreds of engineers, considered manufacturing overseas, and emphasized volume production from the outset. This scale showed how seriously Amdahl viewed the opportunity — the goal was not a lab experiment but a brand new computing platform.

Trilogy crashes and burns

Reality, inevitably, proved harsher. Wafer-scale integration was far harder to commercialize than Trilogy had anticipated. The company burned through large amounts of capital in pursuit of its design, but it never delivered the breakthrough product it had promised.

By 1985, Trilogy had agreed in principle to merge with Elxsi, a smaller computer manufacturer, and later acquired it in a restructuring that effectively marked the end of Trilogy as an independent wafer-scale challenger.

The original vision of large monolithic macrochips driving a new class of supercomputers faded from the market for decades.

Even so, the core ideas were remarkably forward-looking. Designing fault tolerance directly into silicon, treating the wafer as a single computational unit, and pursuing extreme performance density all echo modern approaches seen in today’s large AI chips.

What looked impractical in the early 1980s is now accepted engineering strategy. Companies such as Cerebras have demonstrated that wafer-scale processors can be manufactured and deployed at scale, powering modern AI workloads with chips that span almost an entire silicon wafer.

The concept Amdahl described in 1983 — treat the wafer as the processor and build redundancy directly into it — has become a viable commercial architecture, even if the technology and fabrication ecosystems required decades to mature.

Looking back, Trilogy’s story reads less like failure and more like a preview of the future arriving too early. The semiconductor ecosystem, manufacturing precision, and market demand simply were not ready for what Amdahl envisioned.

“Silicon is now the platform,” analyst Bob Simko said in the original InfoWorld article. Four decades later, that statement feels prophetic. Modern wafer-scale processors finally deliver the kind of computational density Amdahl imagined, validating a vision that sat dormant for years.

Before today’s AI-era giants embraced wafer-scale design, Gene Amdahl had already sketched the blueprint — betting that one giant piece of silicon could change the economics of computing itself.


Wayne Williams is a freelancer writing news for TechRadar Pro. He has been writing about computers, technology, and the web for 30 years. In that time he wrote for most of the UK’s PC magazines, and launched, edited and published a number of them too.
