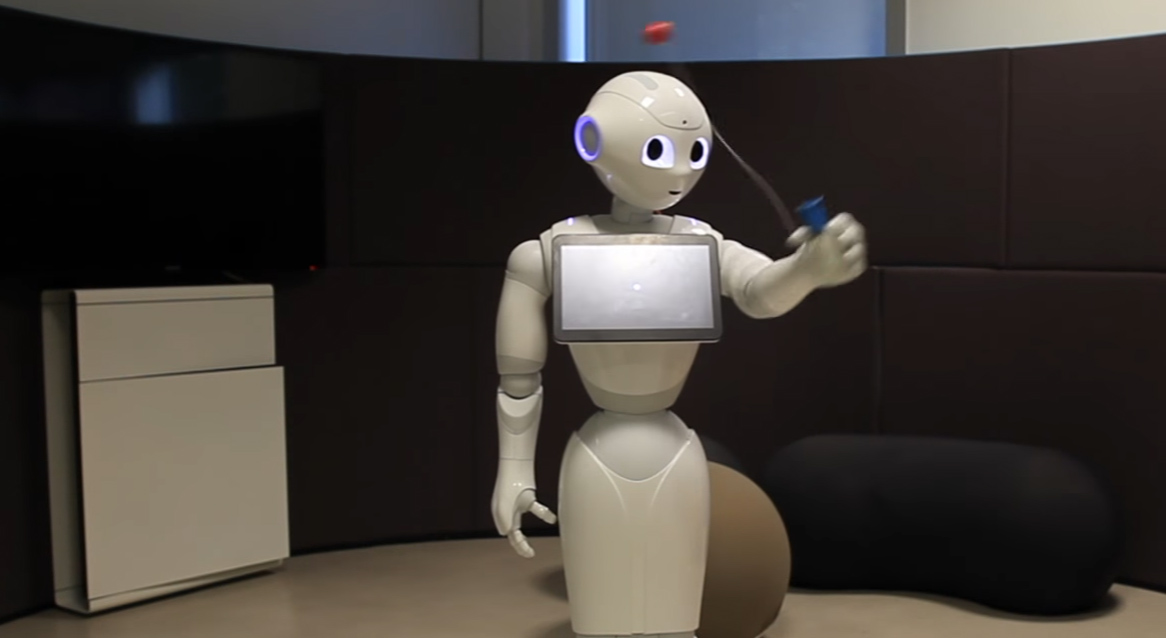

Watch Pepper the robot learn how to catch a ball in a cup

Abort/retry/fail


Robots aren't always so dextrous. They spend a lot of time falling over. Agility tasks that come naturally to humans can be hard to code for a robot, but they're easy to demonstrate.

That's why engineers at the AI Lab of SoftBank Robotics have been teaching robots to learn how to perform tasks demonstrated by a human, which they then practise over and over again until they get them right.

In the video below, you'll see the company's Pepper robot learning how to play that game where you have to catch a ball in a cup. To begin with, humans help the robot by guiding its arm.

Every success was logged, and every failure noted. After each failed attempt, the robot would alter its movement slightly to try to get closer to the solution. After 100 tries, it was able to catch the ball with a 100 percent success rate.

SoftBank says that the task was accomplished using the free software library dmpbbo, which you can get here if you want to do a bit of robot-ball-catching yourself.
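For the curious, the trial-and-error loop described above can be sketched in a few lines of Python. This is a toy illustration only, not dmpbbo's API and not SoftBank's code: the `cost` function, the two-number "movement", the hidden `target` and every parameter value are hypothetical stand-ins for a real robot trial. The idea is simply to nudge the movement at random, keep any nudge that gets closer, and make the nudges smaller over time.

```python
import math
import random


def cost(params, target):
    """Hypothetical stand-in for one robot trial: how far the ball lands from the cup."""
    return math.sqrt(sum((p - t) ** 2 for p, t in zip(params, target)))


def improve(params, target, n_updates=20, n_samples=5, sigma=0.3, decay=0.9, seed=0):
    """Keep the best movement seen so far, trying small random changes around it."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    best = list(params)
    best_cost = cost(best, target)
    history = [best_cost]
    for _ in range(n_updates):
        for _ in range(n_samples):
            # One "attempt": alter the current best movement slightly.
            candidate = [p + rng.gauss(0.0, sigma) for p in best]
            c = cost(candidate, target)
            if c < best_cost:  # log the success, discard the failure
                best, best_cost = candidate, c
        sigma *= decay  # explore less as the movement improves
        history.append(best_cost)
    return best, history


# 20 updates x 5 samples = 100 simulated attempts (numbers chosen for illustration).
start = [0.0, 0.0]
target = [1.0, -0.5]
best, history = improve(start, target)
print(f"initial miss: {history[0]:.3f}, final miss: {history[-1]:.3f}")
```

Because a change is only kept when it lowers the cost, the miss distance in `history` can never go up from one update to the next. A real system would score each attempt from sensor data rather than a known target, and would likely use a more sophisticated update rule than this simple keep-the-best search.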

Eventually it's hoped that the same principles could be used to teach robots other tasks that would otherwise require lengthy, complex programming and testing.

- Duncan Geere is TechRadar's science writer. Every day he finds the most interesting science news and explains why you should care. You can read more of his stories here, and you can find him on Twitter under the handle @duncangeere.
