See the touchscreen of the future: your own skin

Experimental sensor turns an entire arm into a controller

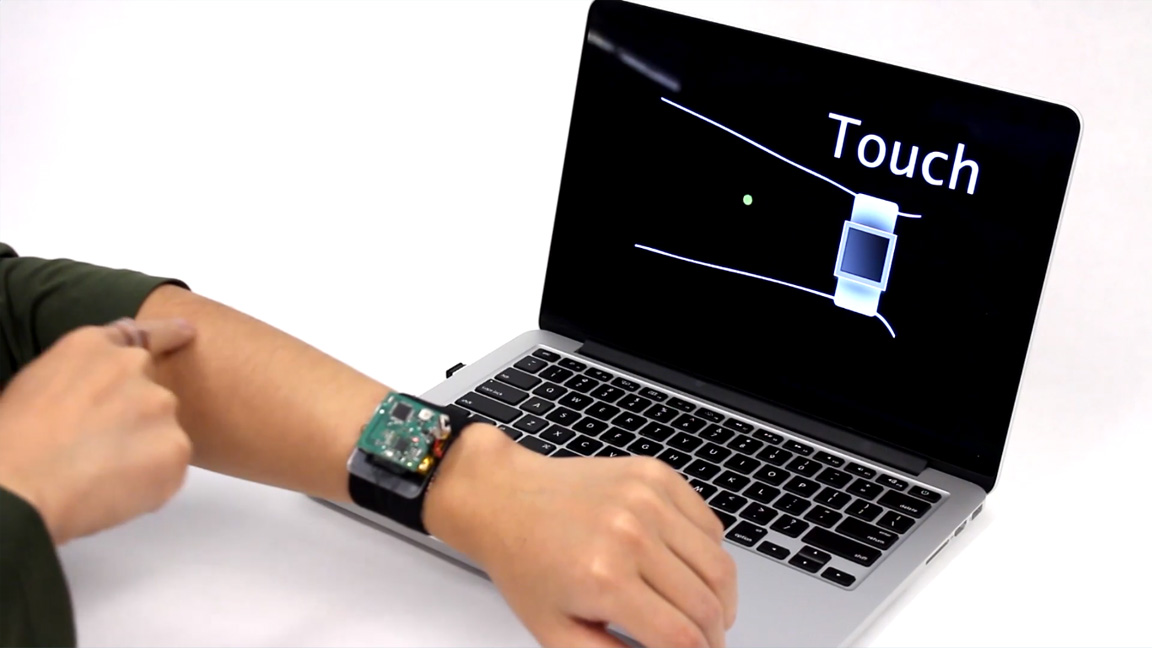

There's only so much control a tiny touchscreen and dial can bring to a smartwatch, but what if you could control your wearable gadget using your whole arm as a trackpad?

This is the sorcery... I mean, concept behind SkinTrack, an experimental technology that allows a device, like a smartwatch, to treat the skin around it as a touch surface.

Effectively, it would be able to read swipes, drags, presses, and even drawings made against your skin as if they were inputs on a touchscreen.

How it works

The core technology behind the skin-powered screen is a set of electrode pairs that read a signal-emitting ring, which essentially turns the user's forefinger into a stylus. The electrodes take an X-and-Y-axis reading of where your finger is placed on your skin - even with clothing on - and translate its motions into commands.
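To make the idea concrete, here is a minimal Python sketch of how a pair of readings might become a position and then a command. It is an illustration only: the article does not describe the researchers' actual signal processing, so the sketch assumes each electrode pair produces a single number that varies roughly linearly along one axis. Every name in it (`PairCalibration`, `axis_position`, `finger_position`, `classify`) and the `tap_radius` threshold are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PairCalibration:
    """Hypothetical calibration for one electrode pair:
    the readings seen at the two ends of its axis."""
    reading_at_min: float
    reading_at_max: float


def axis_position(reading: float, cal: PairCalibration) -> float:
    """Map a raw reading to a 0..1 position along one axis.
    Assumes (for illustration) a linear relationship."""
    span = cal.reading_at_max - cal.reading_at_min
    if span == 0:
        raise ValueError("calibration readings must differ")
    t = (reading - cal.reading_at_min) / span
    return min(1.0, max(0.0, t))  # clamp readings outside the calibrated range


def finger_position(x_reading, y_reading, x_cal, y_cal):
    """Combine one reading per axis into an (x, y) point."""
    return axis_position(x_reading, x_cal), axis_position(y_reading, y_cal)


def classify(trace, tap_radius=0.05):
    """Label a list of (x, y) points as a press or a swipe direction,
    based only on the first and last points."""
    (x0, y0), (x1, y1) = trace[0], trace[-1]
    dx, dy = x1 - x0, y1 - y0
    if abs(dx) <= tap_radius and abs(dy) <= tap_radius:
        return "press"
    if abs(dx) >= abs(dy):
        return "swipe right" if dx > 0 else "swipe left"
    return "swipe up" if dy > 0 else "swipe down"


if __name__ == "__main__":
    cal = PairCalibration(reading_at_min=-1.0, reading_at_max=1.0)
    start = finger_position(-0.8, 0.0, cal, cal)
    end = finger_position(0.8, 0.0, cal, cal)
    print(classify([start, end]))
```

A real system would also need to cope with noise and drift in the readings, which this sketch ignores.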

Created by Carnegie Mellon University's Future Interfaces Group, SkinTrack is one of many projects the team has under their belt for advancing how we control our technology. Other ideas range from using sound to control valves and switches to a wearable that can sense what you're touching.

While the technology is impressive to see in action, the researchers do still see limitations that will need to be worked on - especially the ever-changing state of the organ that is human skin.

Involuntary actions would have to be considered. Accidentally calling someone because you scratched an itch presents an issue or two for us, and could bring a new literal meaning to butt dialing.
