Nvidia ShadowPlay Highlights share your coolest PC gaming moments

On top of announcing a new graphics card, the GTX 1080 Ti, Nvidia also announced new software features for its ever-expanding GeForce Experience at GDC 2017.

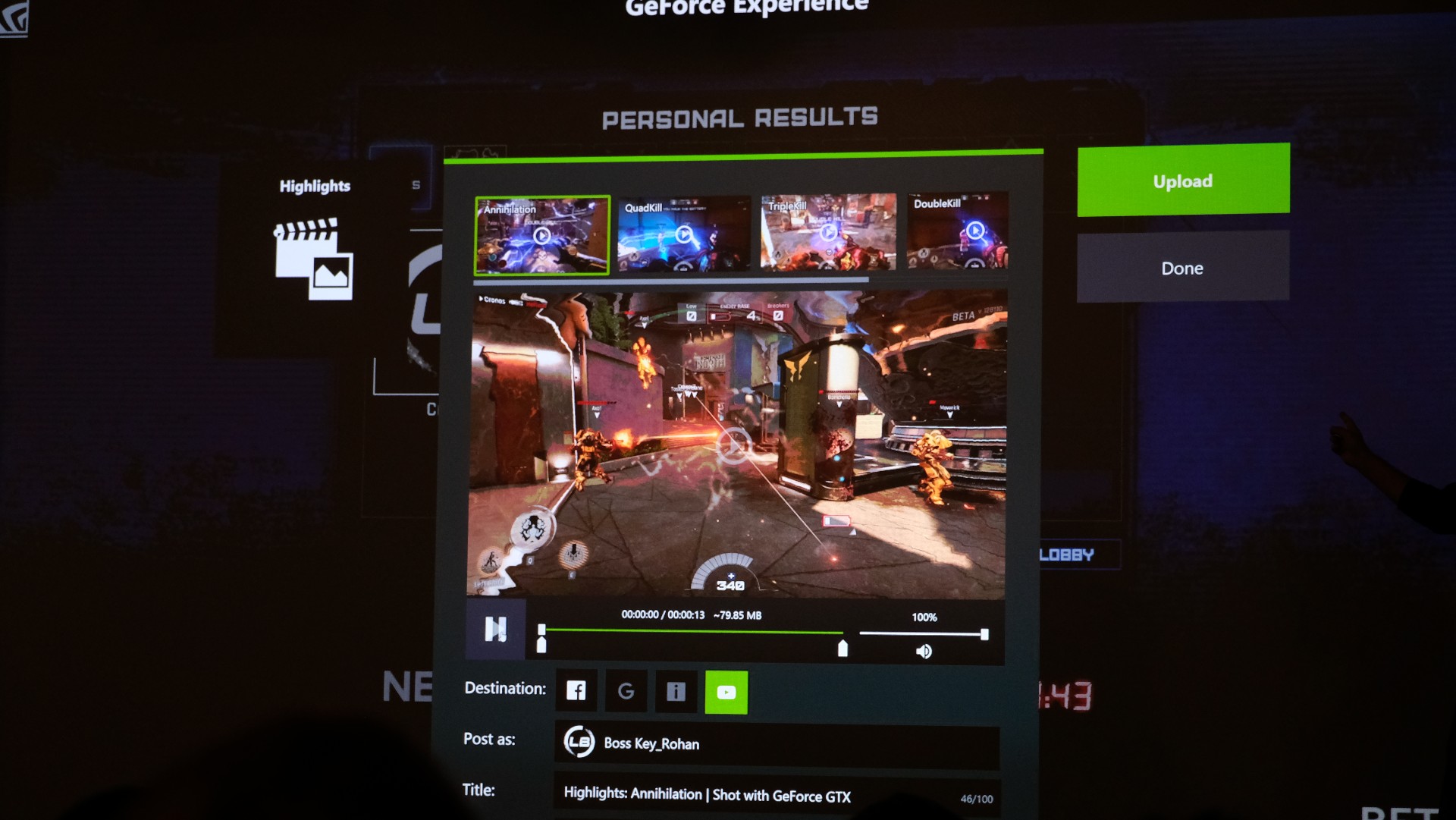

ShadowPlay Highlights finally makes sharing your gaming moments as easy as hitting the share button on the Xbox One and PS4. Using a newly developed API, ShadowPlay Highlights will detect when you’ve just gone on a kill streak and automatically set aside a few clips for you to review after the game.

After a match, users will have 60 seconds to edit their footage and share it on social networks like YouTube, Facebook, Twitch and Google+, to name a few. That might sound like a lot to do in just a minute, but Nvidia’s interface makes it easy, judging from our hands-on time with it.

Nvidia is also extending its screen-capturing Ansel tool to yet another game, Ghost Recon: Wildlands, when it launches on March 7. What’s more, Ansel support is coming to Amazon’s Lumberyard game engine, and Nvidia is releasing a public Ansel software development kit.

Evolving graphics

Beyond expanding the GeForce Experience app with more functions, Nvidia is also working on making games look that much better.

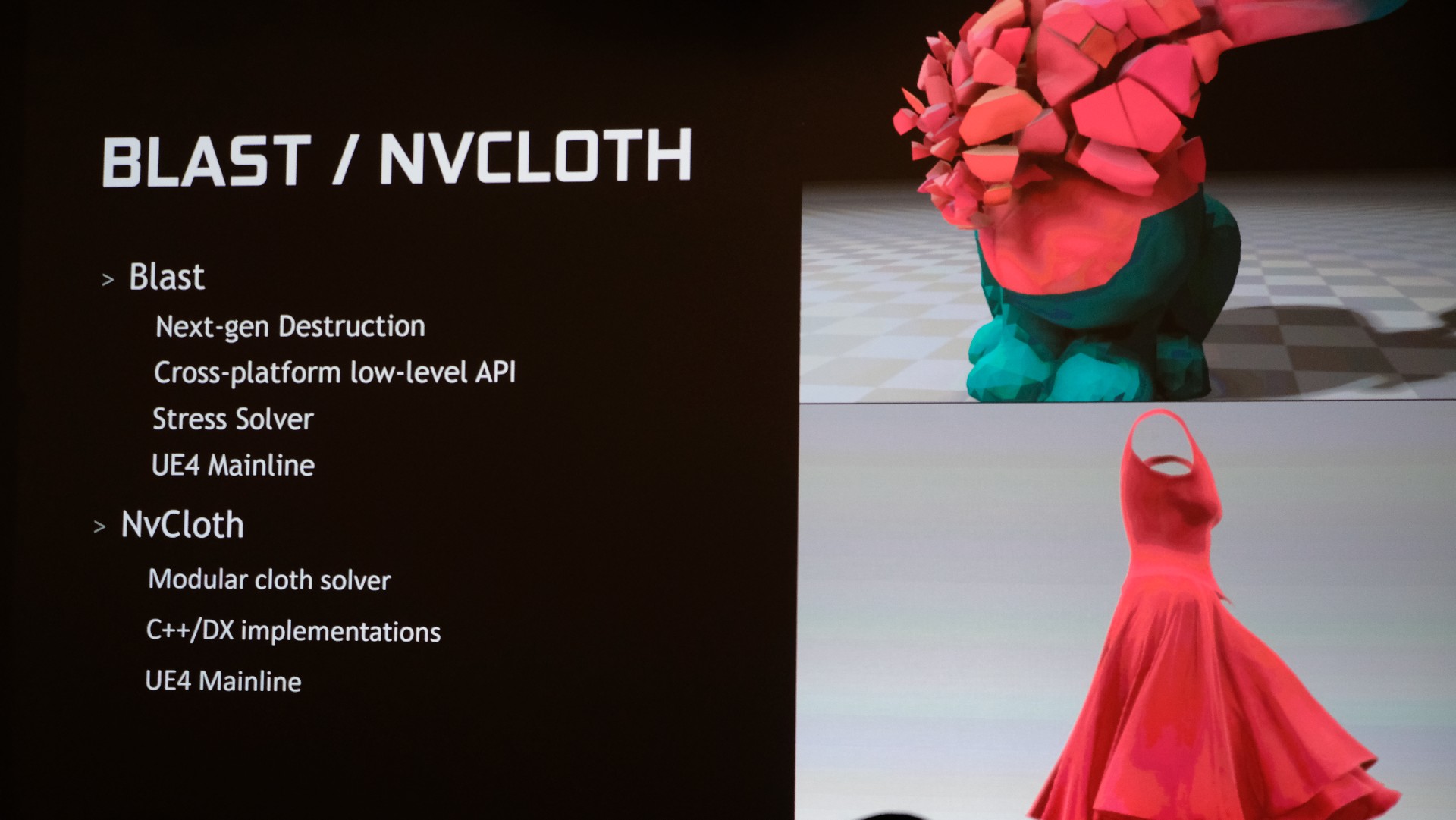

The GPU maker announced two new additions to its GameWorks platform. Blast adds next-gen destruction similar to what we’ve seen in the Battlefield series, but as a cross-platform API that could see wider adoption than DICE’s Frostbite Engine.

NvCloth, on the other hand, introduces better cloth physics that react separately from the rest of the in-game elements for a more realistic simulation.

Nvidia also plans to bring DirectX 12 support to Flow and Flex, its GameWorks libraries for gaseous effects such as fire and smoke and for particle-based fluid simulation, respectively.

On the VR front, users could see better-performing games now that VRWorks supports DirectX 12 and the Unity engine.

Analyze this!

Last but not least, Nvidia is making monitoring your components a little easier. Nvidia Aftermath can help identify the causes of GPU crashes by location and type, while support for Microsoft’s PIX for Windows gives you a live view of graphics card performance.

Nvidia is also introducing the Fraps of virtual reality with FCAT. It works by essentially creating a virtual video out to analyze exactly what the VR headset would see. From there, it keeps track of whether the VR runtime produces a proper rendered frame while also tracking position changes.

The result is a complicated CSV file with all the key performance metrics, but Nvidia is also rolling out an FCAT data analyzer application that takes all that data and puts it into a visual graph.
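To give a feel for what boiling a frame log down to a few headline numbers looks like, here is a minimal Python sketch. It is not Nvidia's analyzer and does not use the real FCAT file format: the column names (`frame`, `frame_time_ms`, `dropped`) and the sample rows are made up for illustration.

```python
import csv
import io
import statistics

# Hypothetical sample data: real FCAT logs use their own column names
# and contain many more fields than this.
SAMPLE_CSV = """frame,frame_time_ms,dropped
1,11.1,0
2,11.3,0
3,22.4,1
4,11.0,0
"""


def summarize(csv_text):
    """Reduce a per-frame log to a handful of summary metrics."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    times = [float(row["frame_time_ms"]) for row in rows]
    dropped = sum(int(row["dropped"]) for row in rows)
    return {
        "frames": len(rows),
        "avg_frame_time_ms": statistics.mean(times),
        "worst_frame_time_ms": max(times),
        "dropped_frames": dropped,
    }


summary = summarize(SAMPLE_CSV)
print(summary)
```

A graphing tool would plot the per-frame times rather than just summarize them, but the idea is the same: turn thousands of rows into something you can read at a glance.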

Measuring virtual reality performance has always been tricky because of the many steps involved: the GPU renders the graphics, the VR runtime adapts them for the headset, and the image is finally shown on the HMD. So, we’re glad Nvidia has added another tool to the box, and it will even work with AMD cards.

Kevin Lee is a former computing reporter at TechRadar. Kevin is now the SEO Updates Editor at IGN, based in New York. He handles all of the best-of tech buying guides while also dipping his hand in the entertainment and games evergreen content. Kevin has over eight years of experience in tech and games publications, with previous bylines at Polygon, PC World, and more. Outside of work, Kevin is a major movie buff of cult and bad films. He also regularly plays flight and space sims and racing games. IRL he's a fan of archery, axe throwing, and board games.