Forget the Turing test: driverless cars need to pass a driving test first

Drive-by computing

You have the UK driving test to thank for the Kardashians. Way back in 1997, BBC One broadcast a series called Driving School, focusing on the trials, tribulations and literal near-misses of a gang of learner drivers as they struggled to pass the driving test and earn their licences.

Potty-mouthed cleaner Maureen Rees escaped several head-on collisions during the show, nearly inducing a heart attack in her husband-cum-driving teacher. It was hilarious – an early 'fly-on-the-wall' documentary that's cited as kickstarting the whole reality TV genre. So, join the dots, and you go from driving test to Kim’s 'break the internet' near-nude photoshoot.

Had driverless cars emerged 20 years earlier, then, we might now live in a world free of Kardashians, free of Jersey Shore, free of The Apprentice. Maybe even free of Archlord Trump himself.

Why? Because self-driving cars don’t have to take a driving test.

But maybe they should.

For some, gaining a driver’s licence is a challenging experience. Of 731,925 drivers taking the UK driving test between 2015 and 2016, only 347,825 passed. That’s fewer than half of all test subjects, an annual pass rate of 47.5 percent. And while states such as Kansas and Idaho will issue driver’s licences to those as young as 14 in the United States, all drivers must complete a six-month course of instruction.
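For anyone who wants to check the arithmetic, the quoted pass rate follows directly from those two figures. A quick sketch in Python:

```python
# Figures quoted above for the UK practical driving test, 2015-16
tests_taken = 731_925
tests_passed = 347_825

# Pass rate as a percentage of all tests taken
pass_rate = tests_passed / tests_taken * 100
print(f"Pass rate: {pass_rate:.1f}%")  # Pass rate: 47.5%
```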

That’s before considering those with disabilities or conditions that prevent them from safely taking the wheel at all – the visually impaired and those suffering from seizure-inducing epilepsy, for instance.

Whatever the restriction, the option of a driverless car is a welcome one for many.

But how do we make sure a driverless car and, in particular, the artificial intelligence making the moment-to-moment on-the-road decisions, is up to the task?

Information superhighway

Many of the biggest names in tech are pushing forward with driverless car initiatives, from Silicon Valley big hitters like Google and Elon Musk’s Tesla to traditional automotive companies like Ford and BMW. And though we’re being promised driverless cars on our roads as soon as the early 2020s, the nascent technology, advancing as rapidly as it is, regularly grabs the headlines for the wrong reasons.

Tesla’s semi-autonomous features in its Model S electric car were implicated in a fatal crash in Florida in May 2016, with the driver’s misplaced trust in the Autopilot system’s ability to apply the brakes at the heart of the accident. (As a result, Tesla has since issued an update to Autopilot that it believes would have prevented the crash entirely.) In February 2016, a driverless Google car had an avoidable collision with a bus, after its AI steered around sandbags without properly accounting for the danger posed by the other vehicle.

“We're assuming that artificial intelligence is more advanced than it really is - that it can replicate the subtle commonsensical knowledge that people use when they're doing everyday things like driving around,” says Nicholas Carr, the best-selling, Pulitzer Prize-nominated author of The Shallows: What the Internet is Doing to Our Brains, and of Utopia is Creepy.

“Whether it's Tesla or Uber or Google - it's not as though there's an AI driving a car that can make sense of the world and make sense of traffic. They have to constantly update the software whenever a new problem arises, because the software is very rigid.”

“We might find that it's not that hard to get up to a self-driving car being able to handle 99 percent of driving situations,” says the sceptical Carr, “but getting that last one percent is extraordinarily difficult, and 99 percent in driving isn't good enough for total automation.”
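Carr's percentages are a rule of thumb rather than a measurement, but a simplified model shows why the last one percent matters. Assume, purely for illustration, that a car meets a string of independent driving situations and handles each one correctly 99 percent of the time. The chance of a whole journey passing without a single situation it can't handle is then 0.99 raised to the number of situations:

```python
# Simplified illustration (an assumption, not Carr's own calculation):
# every driving situation is independent, and the software handles any
# one of them correctly with the same fixed probability.
def chance_of_clean_journey(per_situation_success: float, situations: int) -> float:
    """Probability that every one of `situations` is handled successfully."""
    return per_situation_success ** situations

for n in (10, 100, 1000):
    p = chance_of_clean_journey(0.99, n)
    print(f"{n:>5} situations: {p:.1%} chance of no unhandled situation")
```

Under those assumptions the odds of a clean run are about 90 percent over 10 situations, about 37 percent over 100, and effectively zero over 1,000. Real driving situations are neither independent nor equally difficult, so treat this as an illustration, not a safety estimate.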

Current driverless tests, at least on public roads, usually keep a human on board as a last-resort safety measure. Uber’s recent driverless taxi trial in Pittsburgh, for instance, still required a person to be ready to take the wheel. It’s something Carr can’t see changing anytime soon.

“You'll still have to involve a human behind the wheel who'll have to take over in situations that the software can't handle,” says Carr. “And as soon as that happens, you get the very very difficult situation of how do you trade responsibility back and forth between a computer and a human being without the human being losing interest, becoming complacent, checking their phone.

“That's a really hard problem. We've seen it in aviation in pilots tuning out when they become overly dependent on autopilot systems. The challenge is even harder with self-driving cars, because with self-driving cars you only have a fraction of a second to react before you hit a pedestrian or a tree.”

Silverstone for cyber cars

As such, the driverless car test, or at least some standardised form of regulation, is an absolute must according to Carr:

“I do think that just as we have a regulatory regime that makes sure that human drivers are capable of handling an automobile, we need to have a certain testing programme of some kind that applies more types of requirements on artificial drivers.”

Facilitating AI driving tests may itself pose an infrastructural challenge, though. Whereas human tests take place on regular roads at irregular times, depending on the flow of new learner drivers, autonomous vehicles are set to be mass-produced and will require large-scale, bespoke testing spaces. It’s something that the UK’s Transport Research Laboratory (TRL) is already considering.

“It is clear that before we allow highly or fully automated vehicles to drive on our roads, we will need confidence that they behave appropriately in the broadest possible range of situations that they might encounter,” explains Professor Nick Reed, academy director at TRL.

“However, this cannot be achieved using traditional test track environments. To provide an environment in which connected and automated vehicles can be evaluated within a complex urban environment, TRL has created the UK Smart Mobility Living Lab, which uses the Royal Borough of Greenwich in London as a testbed for connected and automated vehicle concepts.

“Whilst this location meets many of the criteria needed for evaluating automated vehicles, it does not provide all the answers and a combination of off-road, on-road and validated virtual evaluation is likely to be required.”

Driving data

Data harvesting will be crucial to determining the nature of any automated car test, and TRL is working alongside partners including Bosch and Jaguar Land Rover to collect vast quantities of information sourced from vehicles equipped with automated systems.

Currently, in order to determine discrepancies between human and AI performance, the data is collected with automated systems operating in ‘silent mode’, allowing the human driver full control of the vehicle while the output of the automation systems is recorded. According to Reed, this “[enables TRL] to compare the behaviour of the automation systems with the behaviour of human drivers and, by understanding how humans navigate safely in urban environments, it will help to improve the safety and validation of automation systems.”
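TRL's own analysis pipeline isn't described here, but the basic 'silent mode' idea can be sketched: log what the human driver actually did alongside what the automation would have done at the same moment, then flag the moments where they disagree. The `Frame` fields, their units and the 5-degree steering tolerance below are all hypothetical, chosen only to illustrate the comparison:

```python
from dataclasses import dataclass
from typing import Iterable, List

@dataclass
class Frame:
    """One logged moment while the automation runs in 'silent mode'.
    Field names and units are hypothetical, for illustration only."""
    t: float                # seconds since the start of the log
    human_steer_deg: float  # steering angle the driver actually applied
    auto_steer_deg: float   # steering angle the automation would have applied
    human_braking: bool     # did the driver brake?
    auto_braking: bool      # would the automation have braked?

def find_discrepancies(frames: Iterable[Frame], steer_tolerance_deg: float = 5.0) -> List[float]:
    """Return timestamps where the silent automation disagreed with the human."""
    flagged = []
    for f in frames:
        steer_gap = abs(f.human_steer_deg - f.auto_steer_deg)
        if steer_gap > steer_tolerance_deg or f.human_braking != f.auto_braking:
            flagged.append(f.t)
    return flagged

log = [
    Frame(0.0, 0.0, 0.5, False, False),  # close agreement
    Frame(0.1, 2.0, 9.0, False, False),  # steering disagreement
    Frame(0.2, 1.0, 1.5, True, False),   # driver braked, automation would not have
]
print(find_discrepancies(log))  # [0.1, 0.2]
```

Because the human stays in full control, each flagged moment is a free, risk-free data point about where the automation's judgement differs from a driver's.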

In time, as different levels of automation are standardised, Reed suggests that different tiers or categories of driving licence could be introduced beyond the current manual and automatic types.

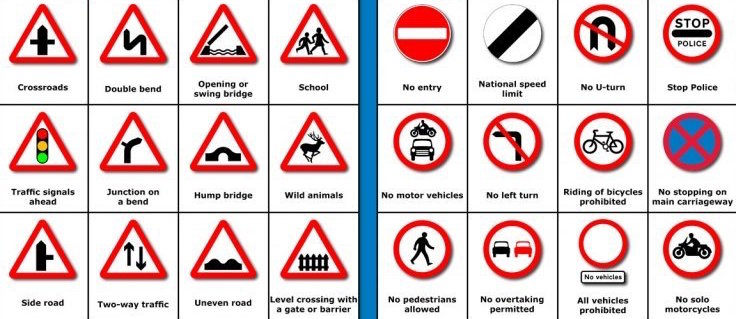

It’s worth considering, however, that unless sweeping legislation is introduced, human-controlled and AI-controlled cars will almost inevitably share roads on a regular basis. As such, there’s a strong chance that road signage, which driverless car systems track, will need to change, and so too will any mandated theory and practical tests for human drivers.

As Professor Reed explains:

“We envisage that future vehicles will be connected. This means vehicles will be able to communicate with one another to ensure safe and effective behaviour, such as changing vehicle spacing. This could reduce the need for information to be displayed on road signs, as information could be sent directly to in-vehicle information systems, supported by roadside Wi-Fi.

“TRL’s work on the ‘connected corridor’ between London and Kent in the UK has already seen progress in this area, with the goal of vehicles receiving and responding to real-time updates transmitted by a series of roadside beacons that provide seamless connectivity along the highway.”
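Neither Reed nor TRL specifies a message format here, so what follows is only a hypothetical sketch of the spacing example: a roadside beacon broadcasts a recommended time gap, and the car converts it into a following distance at its current speed. The `BeaconAdvisory` message, and the rule that an advisory can only lengthen (never shorten) the car's own minimum gap, are assumptions made for illustration, not part of any real connected-vehicle standard:

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class BeaconAdvisory:
    """Hypothetical roadside-beacon message; not a real TRL or V2X format."""
    recommended_gap_s: float  # recommended time gap to the vehicle ahead, in seconds

def target_following_distance(speed_mps: float, own_min_gap_s: float,
                              advisory: Optional[BeaconAdvisory] = None) -> float:
    """Distance in metres to keep behind the vehicle ahead.

    The car never follows closer than its own minimum time gap; a beacon
    advisory can only widen the gap (e.g. in congestion or bad weather).
    """
    gap_s = own_min_gap_s
    if advisory is not None:
        gap_s = max(gap_s, advisory.recommended_gap_s)
    return speed_mps * gap_s

# 31 m/s is roughly 70 mph
print(target_following_distance(31.0, 2.0))                      # 62.0
print(target_following_distance(31.0, 2.0, BeaconAdvisory(3.0)))  # 93.0
```

Letting the beacon only widen the gap is a deliberately conservative choice: a faulty or spoofed broadcast could slow traffic down, but couldn't push cars closer together.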

Green light for creativity

Progress, however, requires some risk-taking. Carr raises valid concerns over the accountability and reliability of AI drivers, but is careful to stress that, to push technology forward, development teams will still need to let their imaginations run wild.

“I'm not arguing for blocking the creativity of engineers and programmers,” says Carr.

“But we see with Tesla's approach that over-promising what the car can do tends to get people to become overly confident in the software. I think society, and its institutions, has to be very careful about what companies are doing, and making sure that they're not over-promising what automation can do in a way that actually creates risky situations rather than avoiding them.”

It’s worth remembering that, while caution must be urged, driverless cars offer great potential benefits beyond accessibility.

“Connectivity between vehicles and the road infrastructure will enable a wide range of functions,” says Professor Reed.

“For example, smarter control of traffic flows to relieve congestion and reduce emissions could be extended across a larger proportion of the road network without the need to install and maintain traffic signals and gantries.”

Enjoy the ride

If driverless cars in some shape or form are an inevitable part of our future, and if we accept that for many people they could offer a liberating mode of transport, one that also brings greener gains in route and fuel efficiency and whose benefits outweigh the drawbacks, what would the optimum human-machine interface look like?

“The ideal interface is not one that seeks to have the software replace human activity,” suggests Carr.

“Say, the role of the human becomes simply taking over when the software fails - that's the worst way to do things. We have to figure out a way where the responsibility is shared for hard things back and forth between computers and humans, so computers can be very good at sensing things that people might miss.

“We're seeing some very interesting uses of software in the automotive area that, simply by monitoring how a person moves the steering wheel, or monitoring the person's gaze, can pick up early on when a person's getting tired or beginning to nod off, and can send an alert. And so computers can do very very valuable things like that.”
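Carr doesn't describe how such systems work, and production driver-monitoring systems are proprietary. One cue often cited in drowsiness research, though, is a long spell with almost no small steering corrections followed by an abrupt jerk of the wheel. A toy version of that heuristic, with an invented sample rate and invented thresholds:

```python
from typing import Sequence

def drowsiness_alert(steering_deg: Sequence[float], sample_hz: float = 10.0,
                     quiet_s: float = 3.0, quiet_change_deg: float = 0.5,
                     jerk_deg: float = 8.0) -> bool:
    """Return True if the steering trace shows a long 'quiet' spell with almost
    no input, immediately followed by a sudden large correction.

    All thresholds and the sample rate are hypothetical, for illustration only.
    """
    quiet_samples_needed = int(quiet_s * sample_hz)
    quiet_run = 0  # consecutive samples with only tiny steering changes
    for prev, cur in zip(steering_deg, steering_deg[1:]):
        change = abs(cur - prev)
        # A big jerk of the wheel straight after a long quiet spell is the cue
        if change > jerk_deg and quiet_run >= quiet_samples_needed:
            return True
        quiet_run = quiet_run + 1 if change < quiet_change_deg else 0
    return False

attentive = [0.0, 1.0] * 30 + [10.0]  # steady small corrections, then a deliberate turn
drowsy = [0.0] * 40 + [10.0]          # about 4 s with no input at 10 Hz, then a sudden jerk
print(drowsiness_alert(attentive))  # False
print(drowsiness_alert(drowsy))     # True
```

A real system would combine several signals (Carr also mentions gaze tracking) and tune its thresholds on recorded data; this only shows the shape of the idea.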

Ultimately, we must be careful not to become so passive that we hand AI too much responsibility in any aspect of our lives. Not only for safety’s sake, but to prevent our passivity sliding into an apathy in which the thrill of learning new skills and the joy of acquiring knowledge are diminished.

In a culture where sci-fi visions of AI range from R2-D2 to Terminator's holocaust-triggering Skynet, trust in inhuman technology, whether equipped with a driving licence or not, may prove the final emotional hurdle to be vaulted.

“We're kidding ourselves if we think that artificial intelligence can take over or can replicate the highest forms of human thought,” says Carr, “which all come from being a living being in the world and having common sense and having general intelligence, and being able to take things you've experienced in one aspect of your life and apply them in a different space.”

“It's those kind of subtle rich, human talents that we have to ensure as we're designing these automation interfaces, we have to make sure we figure out how to continue to develop so that we don't become just passive observers of machines doing everything for us.”

Ask Maureen (or anyone else who’s suffered the frustration of a failed driving test) whether they’d let a perfect machine driver do all the hard work for them, and chances are they’d offer an emphatic “yes”. Their gain would be reality TV’s loss. But it’d be the human race’s greatest failing if we let our current obsession with convenience overtake our drive for personal achievement.

Gerald is Editor-in-Chief of Shortlist.com. Previously he was the Executive Editor for TechRadar, taking care of the site's home cinema, gaming, smart home, entertainment and audio output. He loves gaming, but don't expect him to play with you unless your console is hooked up to a 4K HDR screen and a 7.1 surround system. Before TechRadar, Gerald was Editor of Gizmodo UK. He was also the EIC of iMore.com, and is the author of 'Get Technology: Upgrade Your Future', published by Aurum Press.