Dying Light 2 recommends an RTX 3080 for 1080p? You've got to be kidding me

Opinion: an RTX 3080 for 1080p? Really?

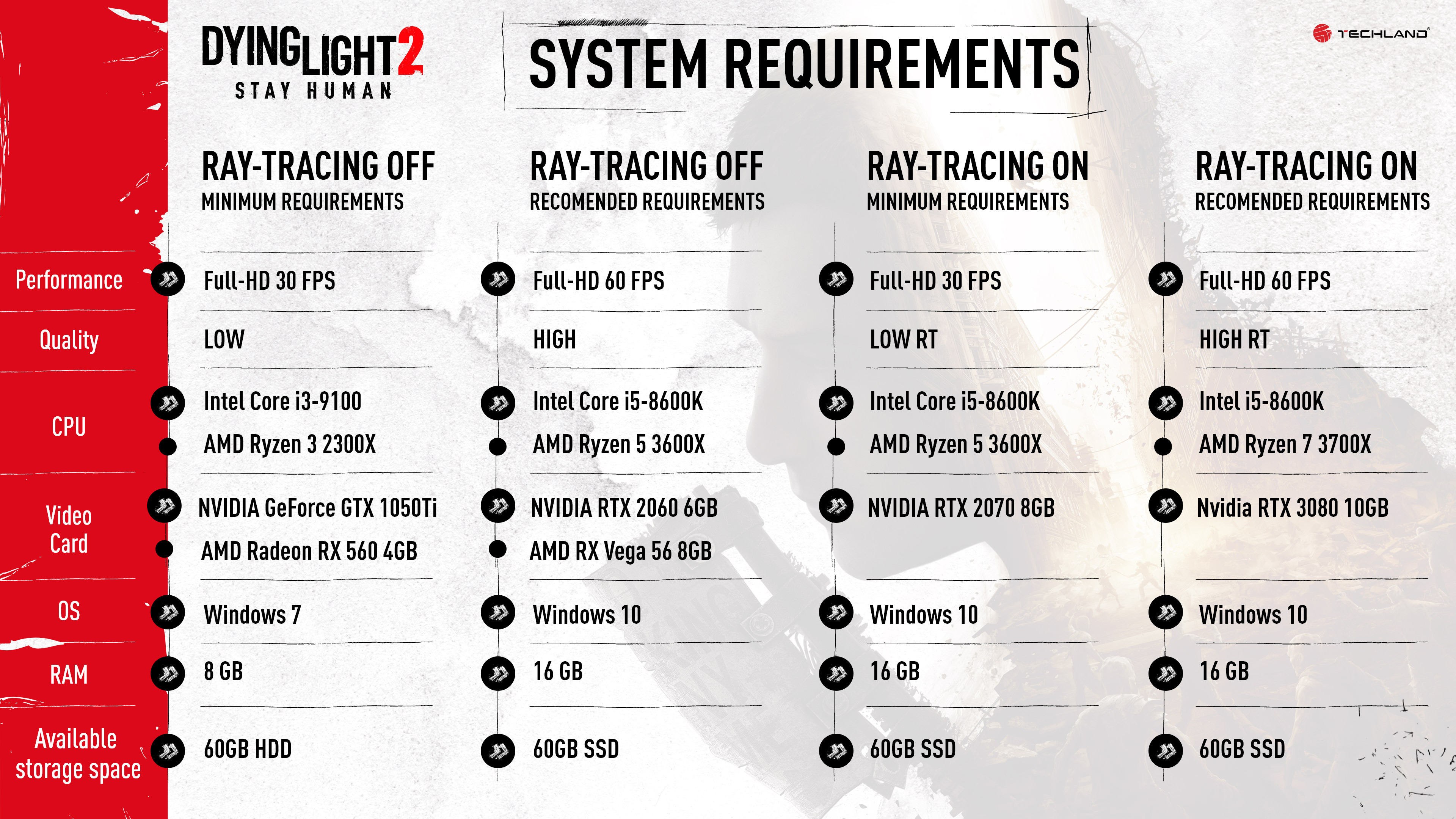

Techland has finally released system requirements for Dying Light 2, and while they start off pretty reasonable, the recommended requirements are a bit obnoxious for a PC game in 2022.

For instance, the game will apparently only require a GTX 1050 Ti as a minimum spec, which will be good enough for 1080p at low settings, at about 30 fps. However, if you want to turn on all the graphics settings and stay at 1080p, you're going to need an Nvidia GeForce RTX 2060 - a graphics card that is way more powerful.

It gets even worse when you look at the ray tracing specifications. At a minimum, the developer is suggesting an Nvidia GeForce RTX 2070 for the "Low RT" preset at 1080p, 30 fps. A bit high, but that's fine, it's a next-generation game and all. However, for the "High RT" preset at 60 fps, the studio is calling for an Nvidia GeForce RTX 3080. For 1080p.

I legitimately doubt you'll need an RTX 3080 for 1080p in Dying Light 2.

A question of scale

While the RTX 3080 is a popular graphics card (even though it's notoriously difficult to find), it's a 4K GPU, and there's no reason it should be recommended for 1080p gaming, especially when an RTX 2070 is what's recommended for the lower RT preset at the same resolution.

We're talking about a huge jump in performance between those GPUs. The Nvidia GeForce RTX 3080 was a gigantic generational leap over the RTX 2080 Ti, the flagship of the 2070's era, let alone the mid-range Turing card.

In terms of raw performance, the Nvidia GeForce RTX 3080 delivers nearly double what an RTX 2070 brings to the table, and you cannot convince me that the delta between the low preset and the high preset is going to be big enough to require that much of an upgrade.


At most, the requirement for the High RT preset is probably going to be closer to the RTX 2080 Ti or, worst case, the RTX 3070, and that's if (big if) Nvidia DLSS doesn't end up being supported.

Why freak people out?

It's no secret that graphics cards are extremely hard to come by right now, and that's especially true for the Nvidia GeForce RTX 3080. Making that graphics card the "recommended spec" at 1080p, even for a preset with ray tracing, could make people think they have to shell out for an extremely expensive piece of hardware just to play a game.

The fact of the matter is, the RTX 3080 will likely be overkill at 1080p, regardless of what settings you're playing the game at. Unless there's some setting in there that is downscaling the game from a higher resolution, a la Ubersampling in The Witcher 2, there's no reason that it would require such a powerful GPU.

I'm not sure why Techland decided that putting such an expensive and coveted GPU as its recommended spec at 1080p was a good idea, and I've reached out to the studio for comment and will update this story if I hear back. I suspect it might just be because it is a GPU the company can be absolutely sure will clear that 60 fps threshold.

There's also a possibility that there are some genuine problems with the game's optimization that will make it harder to run on more reasonable hardware at those settings. You can count me as intrigued, and I fully intend to test the game upon release to see what you'll really need to play it at its best.

I guess forget about playing this game at 4K

If the game really does require an Nvidia GeForce RTX 3080 just to run at 1080p with ray tracing enabled, then you can pretty much forget about playing it at 4K, period.

Even the RTX 3090 is only about 10-15% faster than the RTX 3080 in most situations, and you're going to need something much more powerful than that to push Dying Light 2 at 4K if these system requirements are at all indicative of how the finished title is going to perform.
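To put rough numbers on that, here's a back-of-envelope sketch in Python. It assumes, purely for illustration, that frame rate falls in direct proportion to pixel count, which real games don't follow exactly. The function and variable names are my own, the 60 fps baseline is simply the High RT target from the requirements, and the 1.10 and 1.15 multipliers stand in for the "about 10-15% faster" RTX 3090 estimate.

```python
# Back-of-envelope only: ASSUMES frame rate scales inversely with pixel count.
# Real games do not scale this cleanly, so treat the results as rough guides.


def pixels(width: int, height: int) -> int:
    """Total pixels rendered per frame at a given resolution."""
    return width * height


def scaled_fps(base_fps: float, base_pixels: int, target_pixels: int,
               speedup: float = 1.0) -> float:
    """Estimate fps at a new resolution under the naive pixel-count assumption.

    speedup is a hypothetical multiplier for a faster GPU (1.0 = same card).
    """
    return base_fps * speedup * base_pixels / target_pixels


PIXELS_1080P = pixels(1920, 1080)
PIXELS_4K = pixels(3840, 2160)

# 60 fps at 1080p is the High RT target listed for the RTX 3080.
rtx_3080_4k = scaled_fps(60, PIXELS_1080P, PIXELS_4K)

# The RTX 3090 is taken to be about 10-15% faster than the RTX 3080.
rtx_3090_4k_low = scaled_fps(60, PIXELS_1080P, PIXELS_4K, speedup=1.10)
rtx_3090_4k_high = scaled_fps(60, PIXELS_1080P, PIXELS_4K, speedup=1.15)

if __name__ == "__main__":
    print(f"4K renders {PIXELS_4K / PIXELS_1080P:.0f}x the pixels of 1080p")
    print(f"RTX 3080 at 4K (naive estimate): {rtx_3080_4k:.1f} fps")
    print(f"RTX 3090 at 4K (naive estimate): "
          f"{rtx_3090_4k_low:.2f} to {rtx_3090_4k_high:.2f} fps")
```

Under that deliberately crude assumption, 4K means four times the pixels, so a card that just clears 60 fps at 1080p lands around 15 fps at 4K, and a 10-15% faster card barely moves the needle.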

There are certainly games and franchises that are known for putting hardware to the test, and are designed to push hardware for years to come. But even Metro Exodus was playable at 4K with ray tracing on the Nvidia GeForce RTX 2080 Ti. And even Cyberpunk 2077, with all the problems it had at launch, was capable of running at 4K, thanks to the inclusion of DLSS.

Dying Light 2 does not seem like it's going to be one of those games, and with how long we've been waiting for it, I sincerely hope the developers don't try to make it a tech showcase - because it probably will not end well.

Jackie Thomas is the Hardware and Buying Guides Editor at IGN. Previously, she was TechRadar's US computing editor. She is fat, queer and extremely online. Computers are the devil, but she just happens to be a satanist. If you need to know anything about computing components, PC gaming or the best laptop on the market, don't be afraid to drop her a line on Twitter or through email.
