The future of facial recognition: big brother or our new best friend?

Innovation vs privacy and your face is in the middle


For better or worse, facial recognition has become the technological elephant in the room. It's there, but nobody really likes to think or talk about it too much.

Despite continued advances in software and algorithms that would elicit gasps of awe in other tech sectors, the ever-improving ability of machines to put a name to a human face is considered, in most cases, unwelcome.

Take Facebook's recent unveiling of its DeepFace research paper, for example. The algorithm, which still seems a long way from being integrated into any consumer-facing element of the social network, uses 3D analysis of human faces to identify them with a 97.25 per cent success rate. The human brain does only fractionally better, at 97.5 per cent.

As incredible as that breakthrough appears to be, you'll struggle to find reports that don't contain words like "creepy," "scary," or "stalkerish." The verdicts echo those on Facebook's previous iterations of identifying tech, which have been protested against in some countries and banned in plenty of others.

Meanwhile, Google is busy worrying about the overwhelmingly negative perception of facial recognition tech in this post-Snowden surveillance society. It has banned facial recognition apps from Google Glass, fearing a backlash that could seriously derail the pending acceptance of its AR headset.

Despite that, we stand on the precipice of a coming-out party for the technology. Facial recognition has been simmering beneath the surface, reticent and unwilling to show its real face to the world, but now it is almost ready to emerge.

Unwanted intrusion

Applications are graduating beyond clandestine uses in military and law enforcement and the unwanted intrusion into social networking. Facial recognition is entering consumer industries like shopping and marketing and personal security (face-unlocking Android phones have been around a while now), and is even helping us to find lost pets.


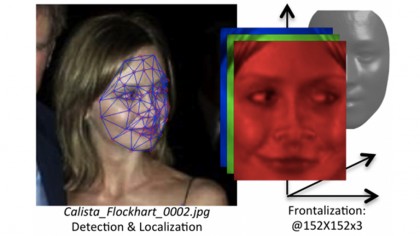

Animetrics, a decade-old facial recognition pioneer, has tech that builds a 3D model of a face from 2D photos, which can then be forensically analysed. This compensates for the angles, direction, light, age, weight and other factors that make identifying a human face such a complex and tricky endeavour. (Animetrics is looking into how Facebook's research possibly "overlaps" with its patents, shall we say.)

The company is moving away from its roots of using facial recognition tech to stop people doing bad things and getting away with it, towards applications that can assist with improving lives.

Once the public sees it can be a force for good, rather than a means of stripping away civil liberties and compromising our ever-dwindling right to privacy, the wider perception of facial recognition will change dramatically, Animetrics' CEO Paul Schuepp says.

"What I'm trying to advocate is that this will be something that will benefit our lives rather than something to be fearful of. I find myself telling people it's not the technology itself to be afraid of when it comes to privacy, stalking and ID theft," he said.

The firm recently re-launched its API, allowing "anyone with an idea" to build it "without needing to buy a bunch of expensive software" to get started. Now it's up to third-party developers to shape the course of the tech for the better.

How about a doorbell, which uses a camera and software loaded with facial recognition tech? It is one of the first ideas being built on Animetrics' platform.

"The application will look your face up using the API and if you're on the homeowner's list of approved people they can choose to let you in. You don't have to answer the door, you can see the person on your phone, let them in or tell them to go away."
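For readers curious how such a doorbell might be wired up, here is a minimal sketch of the flow described in the quote: look the face up, check the name against an approved list, and leave the final decision to the homeowner. It does not use Animetrics' actual API. The `FaceMatch` type, the `lookup_face` callable, the `fake_lookup` stand-in and the `min_confidence` threshold are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Set


@dataclass
class FaceMatch:
    """Result of a hypothetical face lookup: who it might be and how sure."""
    name: Optional[str]
    confidence: float  # 0.0 to 1.0


def doorbell_prompt(
    image: bytes,
    lookup_face: Callable[[bytes], FaceMatch],
    approved: Set[str],
    min_confidence: float = 0.9,  # assumed threshold, not from the article
) -> str:
    """Return the message shown on the homeowner's phone.

    The homeowner always makes the final call; this only labels the visitor.
    """
    match = lookup_face(image)  # stand-in for a call to a recognition service
    if match.name is None or match.confidence < min_confidence:
        return "Unrecognised visitor at the door. Let them in or send them away?"
    if match.name in approved:
        return f"{match.name} (approved) is at the door. Let them in?"
    return f"{match.name} is at the door but is not on your approved list."


def fake_lookup(image: bytes) -> FaceMatch:
    """Pretend recognition service, for demonstration only."""
    return FaceMatch(name="Alice", confidence=0.95)


if __name__ == "__main__":
    print(doorbell_prompt(b"", fake_lookup, approved={"Alice", "Bob"}))
```

Note that the sketch never opens the door by itself. As in the quote, recognition only informs the person holding the phone, who can still let the visitor in or tell them to go away.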

Within the context of this discussion, it's also important to look at why we are so scared of facial recognition technology. What role does that fear play in affecting our perception of innovation? Schuepp believes Hollywood and television can be partially blamed for overblowing the capabilities of the technology.

"We're persuaded by what we see," he tells us. "They make it look like it's being used every day, so easily, so ubiquitously. There's a show called Person of Interest and they make it look like all faces are recognised by a super computer at all times, that every camera is enabled in a homogenous fashion, so the police always know where these people are in the world wherever they're walking. They make it look real and people get concerned about that."

The technology just isn't that good. Neither is it close to being that good. So we should stop fretting about these Hollywood fantasies, Schuepp says.


A technology journalist, writer and videographer of many magazines and websites including T3, Gadget Magazine and TechRadar.com. He specializes in applications for smartphones, tablets and handheld devices, with bylines also at The Guardian, WIRED, Trusted Reviews and Wareable. Chris is also the podcast host for The Liverpool Way. As well as tech and football, Chris is a pop-punk fan and enjoys the art of wrasslin'.
