What is AMD’s Vega battle plan? To fight on multiple fronts

Four ways AMD is positioning Vega to win

AMD’s history in high-end gaming is shaky. If it’s any indication of the company’s position in the race, its place among our picks of the best graphics cards is an entry-level spot with the Radeon RX 460.

With that, it’s hard to believe that the upcoming AMD Vega, or Radeon RX Vega, cards will have much of a competitive edge over, say, Nvidia’s GeForce GTX 1080 Ti. A whopping 11GB of VRAM is a tough nut to crack for a company that’s best known for budget-friendly offerings.

AMD tried its damnedest at its Capsaicin & Cream 2017 event at the Game Developers Conference (GDC) this past Tuesday to keep details of its Vega graphics architecture as vague as possible. While the company revealed an unforeseen partnership with Fallout publisher Bethesda Softworks, it kept mum on concrete Vega specs and launch details.

This was intentional, of course, as the company had to know its long-time rival would make a major reveal the same night. Nevertheless, we managed to squeeze as much info on Vega and this odd partnership as we could from AMD technical marketing manager Scott Wasson.

Below, Wasson explains Vega’s upcoming high-bandwidth cache controller and AMD’s foray into cloud gaming, and addresses concerns raised by the vague announcement of its new deal with Bethesda.

TechRadar: What was the biggest announcement you made today?

Scott Wasson: The biggest story is – I’m going to spill the beans here – the last thing that we announced, which was a partnership with Bethesda Softworks. They’re a game publisher, they publish under their various studios. Doom, Quake, Wolfenstein, Skyrim, Elder Scrolls, Fallout, Dishonored, Prey – I mean they have a huge range of titles.

We’ve worked with developers on a one-off basis, on individual games, where we’ll provide engineering assistance, help optimize a game and maybe do some co-marketing around it. But what we’re doing with Bethesda is a multi-title, deep collaboration in engineering across all of their titles for a good period of time. So it’s a different approach. The idea is that we’ll help them optimize for both Ryzen and Radeon.

Do you think that future games in this partnership are going to have significant advantages with Ryzen and Vega?

So, obviously we’ll be helping to optimize the traditional things you do in graphics. I think there will be some Vulkan optimizations, low-level APIs, but then also help with core scaling across Ryzen, because now we have eight cores and 16 threads at very affordable price points. We want games to be able to take advantage of that.

Why Bethesda?

A number of reasons. They have great PC games, and they have good tech, and we already have a nice template that we can work from. If you look at what happened with Doom on Vulkan, we worked with them on that, and they were able to achieve really nice performance with a new API. They have some really smart folks working there, and so that’s a good company to work with going forward.

Are you concerned that, by working with specific game developers, you’re dividing AMD and Nvidia or Intel fans further when certain games run better on AMD hardware than on competing hardware?

We’re the only company that provides both CPUs and GPUs, and we know our users. Some of them have one of our CPUs and a competitor’s graphics card, or vice versa. So we want to benefit PC gamers generally. It’s in our interest to do good optimization that will work for whoever has our hardware, even if they’re mixing it with the competition’s.

Regarding the Vega-powered, LiquidSky game streaming service, how is that going to differ from Nvidia’s GeForce Now and other streaming services?

My understanding is it’s a lot more economical price-wise. They have a new model, because you install the games you own on their service. However, the piece that we announced is that they’ll be using our Vega GPUs for their servers.

One thing Vega does is it has hardware support for virtualization, not software. It’s actually hardware partitioning on GPU resources, so they can guarantee customers that they get the slice of a GPU that’s consistent, and the performance is consistent. So, [the game itself] is partitioned on hardware. We’ll have a better user experience because you’re getting a piece of the GPU consistently.

There’s another piece of it that we announced today too, which is that Vega GPUs can virtualize video encoding hardware as well.

What does that mean?

We have a video encoding engine, VCE, in all Radeons. Virtualization allows us to slice up the GPU across multiple user sessions that we secure, splitting the resources between them.

We can now do that with the video encoder in Vega, which means that when they’re serving a gaming session and then they have to send it downstream to a user, they can encode it on the hardware encoder on Vega for multiple gaming sessions at one time.

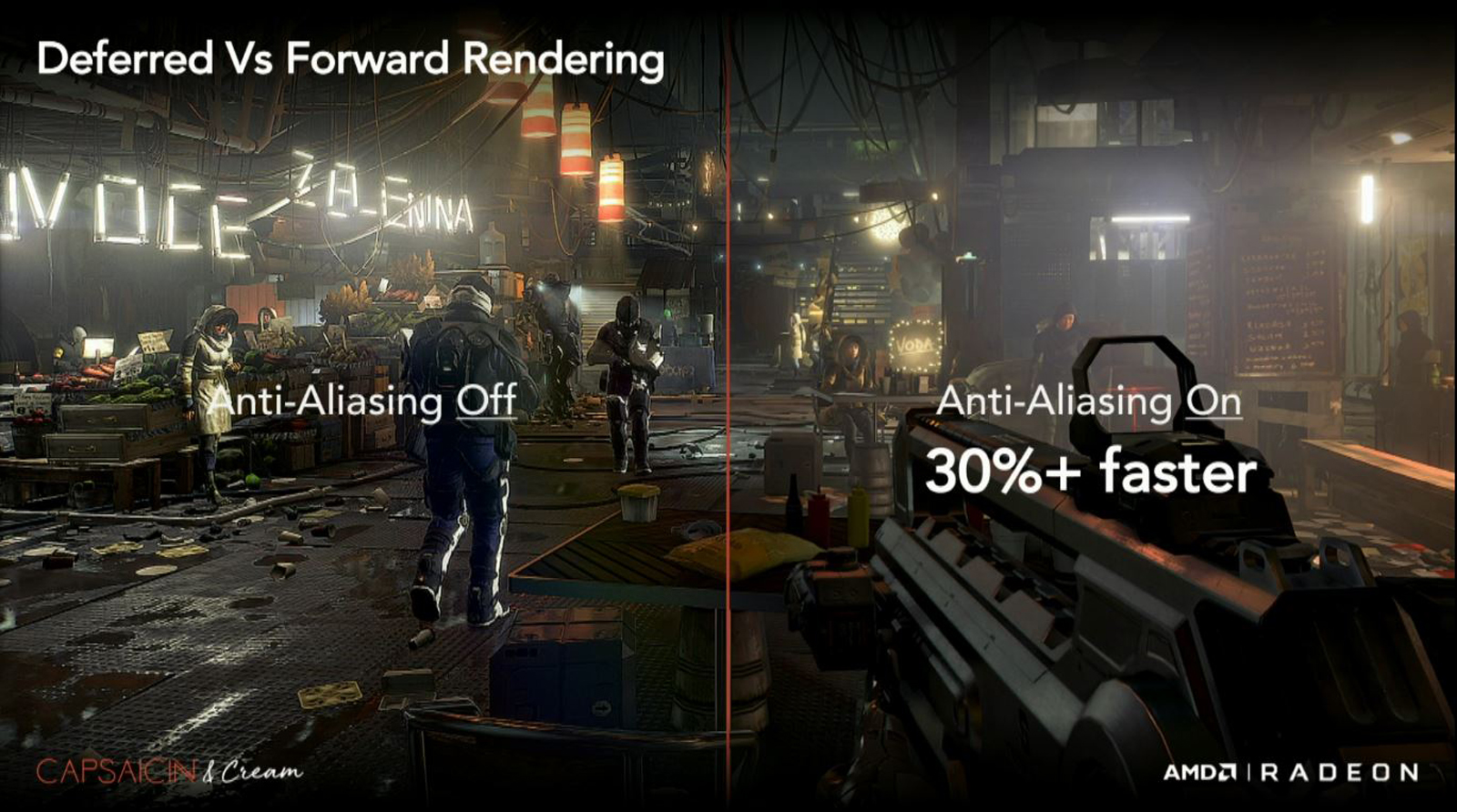

About AMD’s take on forward rendering for VR, what advantages does this pose over traditional deferred rendering?

Deferred rendering is actually newer than forward, but it’s widely used now because it works well, especially on the last generation of consoles. It has some performance costs when you start it up, but then deferred can offer lots of lights and reflections and other features, and they’re nice to have.

However, in VR you have to be at 90 frames per second, and so there’s no reason to use deferred rendering because you can’t take advantage of the extra features while hitting the performance standard that you have to meet. So it’s just not always a good fit for VR. The other thing is that deferred doesn’t do anti-aliasing well; it leans on post-processing anti-aliasing like FXAA.

That isn’t good when you have two eyes with two different views, you know what I mean? We want smart, subpixel AA and that’s multi-sampling, so if you switch back to forward rendering, it can be quick to start. It’s a good fit for VR’s use case. It’s a performance uplift, and there’s an image quality improvement with multi-sampling.

So Epic has built a forward-rendering path into Unreal Engine 4.15, and we’ve supported them by making sure it runs well on our hardware. And we showed on stage multiple VR games, including Epic’s Robo Recall, using the forward path to perform better and provide better anti-aliasing.
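To put the 90 frames per second requirement in concrete terms, here is a minimal sketch of the per-frame time budget it implies. The function names and the per-pass costs are hypothetical, invented purely for illustration; they are not measurements from any AMD or Epic demo.

```python
from typing import Mapping


def frame_budget_ms(target_fps: float) -> float:
    """Return the time available to render one frame, in milliseconds."""
    if target_fps <= 0:
        raise ValueError("target_fps must be positive")
    return 1000.0 / target_fps


def fits_budget(pass_costs_ms: Mapping[str, float], target_fps: float) -> bool:
    """True if the summed per-pass costs fit inside the frame budget."""
    return sum(pass_costs_ms.values()) <= frame_budget_ms(target_fps)


# Hypothetical per-pass costs in milliseconds (assumed values, not measured).
passes = {"geometry": 4.0, "lighting": 5.0, "post_processing": 3.0}

print(f"60 fps budget: {frame_budget_ms(60):.2f} ms")
print(f"90 fps budget: {frame_budget_ms(90):.2f} ms")
print("fits at 60 fps:", fits_budget(passes, 60))
print("fits at 90 fps:", fits_budget(passes, 90))
```

With these assumed costs, a 12 ms frame is comfortable on a 60 fps monitor but misses the roughly 11.1 ms budget that VR demands, which is why every optional rendering pass has to justify its cost.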

Getting to the elephant in the room, what is Vega’s high-bandwidth cache controller and, more importantly, how does it make our games better?

The idea with high-bandwidth cache and the high-bandwidth cache controller is that the traditional sort of VRAM that you had in the past can now really act as a cache for a larger pool of memory elsewhere in the system.

Ultimately, hopefully game developers build bigger, more complex worlds and get the ability to not worry so much about overrunning the memory budget and still have beautiful images that flow smoothly.

So, what we did was demonstrate the feature naturally. We took a current game, Deus Ex: Mankind Divided, built for 4GB, and we constrained two Vega cards to just two gigs of RAM artificially as a test case.

And then we showed it running without the high-bandwidth cache controller – it was slow and it stuttered – and then we turned on the high-bandwidth cache controller to increase the effective memory size by mapping some into system RAM and being smart about what we kept in the 2GB of local memory.

It gets 50% faster frames per second and 100% faster minimum fps than the non-high-bandwidth cache controller case. You can imagine future games; developers can build very complex worlds with very high memory requirements on this new architecture.
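As a quick worked example of what those percentages mean, the sketch below applies a 50% average uplift and a 100% minimum uplift to baseline figures. The baseline frame rates are hypothetical placeholders, since the interview does not give absolute numbers.

```python
def apply_uplift(baseline_fps: float, uplift_percent: float) -> float:
    """Return the frame rate after a percentage improvement over the baseline."""
    return baseline_fps * (1.0 + uplift_percent / 100.0)


# Hypothetical baselines (assumed; the source quotes only relative gains).
baseline_avg_fps = 30.0
baseline_min_fps = 15.0

avg_fps = apply_uplift(baseline_avg_fps, 50)    # 50% faster average fps
min_fps = apply_uplift(baseline_min_fps, 100)   # 100% faster minimum fps

print(f"average: {baseline_avg_fps:.0f} -> {avg_fps:.0f} fps")
print(f"minimum: {baseline_min_fps:.0f} -> {min_fps:.0f} fps")
```

A 100% gain in minimum fps means the worst-case frame rate doubles, which matters more for perceived stutter than the average does.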

So, effectively, people will be able to use lower spec hardware for higher spec games?

Potentially. What we really want is for developers to not have to worry about it, and just go build what they want – make it beautiful, and then we can be smart about the amount of memory that goes on the card.
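The general idea of treating local video memory as a cache for a larger pool can be illustrated with a toy model. This is only a sketch under loud assumptions: it uses a simple least-recently-used (LRU) policy over fixed-size pages, which is a stand-in chosen for illustration and not AMD's actual high-bandwidth cache controller logic. The class name, page granularity, and access trace are all hypothetical.

```python
from collections import OrderedDict
from typing import Iterable


class LocalMemoryCache:
    """Toy model: local video memory as an LRU cache of fixed-size pages."""

    def __init__(self, capacity_pages: int) -> None:
        if capacity_pages <= 0:
            raise ValueError("capacity_pages must be positive")
        self.capacity_pages = capacity_pages
        self.resident = OrderedDict()  # page id -> None, least recent first
        self.hits = 0
        self.misses = 0

    def access(self, page: int) -> None:
        """Touch a page, fetching it from the larger pool on a miss."""
        if page in self.resident:
            self.hits += 1
            self.resident.move_to_end(page)  # mark as most recently used
            return
        self.misses += 1  # page must come from system memory (slow path)
        if len(self.resident) >= self.capacity_pages:
            self.resident.popitem(last=False)  # evict least recently used
        self.resident[page] = None


def hit_rate(trace: Iterable[int], capacity_pages: int) -> float:
    """Fraction of accesses served from local memory for a given trace."""
    cache = LocalMemoryCache(capacity_pages)
    for page in trace:
        cache.access(page)
    total = cache.hits + cache.misses
    return cache.hits / total if total else 0.0


# Hypothetical trace: three hot pages reused often, plus occasional cold pages.
trace = [0, 1, 2, 0, 1, 2, 3, 0, 1, 2, 4, 0, 1, 2]

for capacity in (2, 4):
    print(f"capacity {capacity} pages: hit rate {hit_rate(trace, capacity):.2f}")
```

In this toy trace, local memory that is slightly too small for the hot set thrashes and misses on every access, while a modest increase keeps the hot pages resident. That is the intuition behind being smart about what stays in local memory.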
