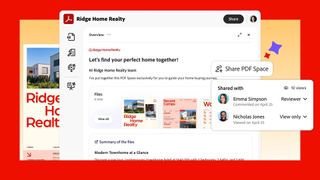

'We’re not just adding new features, we’re introducing a new format': Adobe is changing how PDF and other files get shared

Static PDF sharing is outdated – Adobe wants you to build your own PDF Spaces with a productivity agent that curates everything for you.