TechRadar Verdict

The Nvidia GeForce RTX 3090 is the most powerful graphics card you can buy today, delivering playable 8K gaming performance, along with jaw-dropping 3D rendering and encoding performance. However, you have to pay a high price for this level of power. For the vast majority of people, it's probably not worth the investment, but for creative professionals (who are the ones Nvidia is primarily targeting with this card), the RTX 3090 is a downright bargain.

Pros

- Unstoppable performance

- Excellent cooling

- Great value for creative professionals

Cons

- Extremely expensive

- Massive footprint

- Very power-hungry


Nvidia GeForce RTX 3090: Thirty second review

Despite AMD’s best efforts, the Nvidia GeForce RTX 3090 still sits atop the pantheon of most powerful GPUs. Powered by 24GB of GDDR6X RAM and cooled by a gigantic heatsink, it can keep up with the most strenuous demands, whether it’s 3D rendering or hardcore gaming.

It’s so powerful that it’s taken the place of two of the best graphics cards of the previous generation, the Nvidia Titan RTX and the RTX 2080 Ti. The Nvidia GeForce RTX 3090 has filled their shoes admirably.

It not only provides stunning performance in 4K but can even run the latest AAA games in 8K at playable frame rates, reaching 60fps in some titles, even if it’s not perfect at that extreme resolution. And, as capable as it is at running the best PC games, this GPU is better suited to those who have to run graphically intense tasks such as video rendering or 3D animation.

One of the reasons it’s better suited to professional workloads is its hefty price. For that reason, most mainstream gamers will likely find the much cheaper cards from our Nvidia GeForce RTX 3080 review or our AMD Radeon RX 6800 XT review far more attractive.

With that said, the only graphics card we've seen that's more powerful than the Nvidia GeForce RTX 3090 is the one in our Nvidia GeForce RTX 3090 Ti review, and that card was so expensive it simply isn't worth it under almost any condition.

If you don’t care about price and want the best or have projects that need the most powerful hardware-accelerated rendering, then the RTX 3090 Ti is a better bet, but if you don't need that marginal increase in performance, then the Nvidia GeForce RTX 3090 is very likely the graphics card for you.

Nvidia GeForce RTX 3090: Price and availability

The Nvidia GeForce RTX 3090 is available right now, starting at $1,499 (£1,399, around AU$2,030) for Nvidia's own Founders Edition. However, this is the first time Nvidia has opened up a Titan-level card to third-party graphics card manufacturers like MSI, Asus and Zotac, which means you can expect some versions of the RTX 3090 to be significantly more expensive.


It's hard to pin down whether this is a price increase or a price cut over the previous generation. Compared to the Nvidia Titan RTX, it's a massive price cut: that card cost an outrageous $2,499 (£2,399, AU$3,999) for similar, albeit last-generation, specs. However, the card in our Nvidia GeForce RTX 2080 Ti review launched at $1,199 (£1,099, AU$1,899), which isn't that much cheaper than the RTX 3090.

The Gigabyte card in our AMD Radeon RX 6950 XT review, meanwhile, cost $1,299 (about £1,040 / AU$1,820). And while that card had comparable gaming performance to the RTX 3090 in some games, it fell behind in others and got absolutely crushed in creative workloads.

Given the present market and the previous generation of professional-grade graphics cards, the RTX 3090 exists in kind of a middle ground. The GeForce name suggests that this graphics card is aimed at gamers, but the specs and pricing suggest that it's more geared towards prosumers that need raw rendering power, but aren't quite ready to jump into the Nvidia Quadro and Tesla worlds.

- Value: 4 / 5

Nvidia GeForce RTX 3090: Features and chipset

- Nearly complete GA102 GPU

- Staggering 24GB GDDR6X VRAM

- Enormous power draw

GPU: GA102

Streaming multiprocessors: 82 (128 CUDA cores per SM)

CUDA Cores: 10,496

Tensor cores: 328

Ray tracing cores: 82

Power Draw (TGP): 350W

Boost clock: 1,695MHz

VRAM: 24GB GDDR6X

Memory Speed: 19.5Gbps

Interface: PCIe 4.0 x16

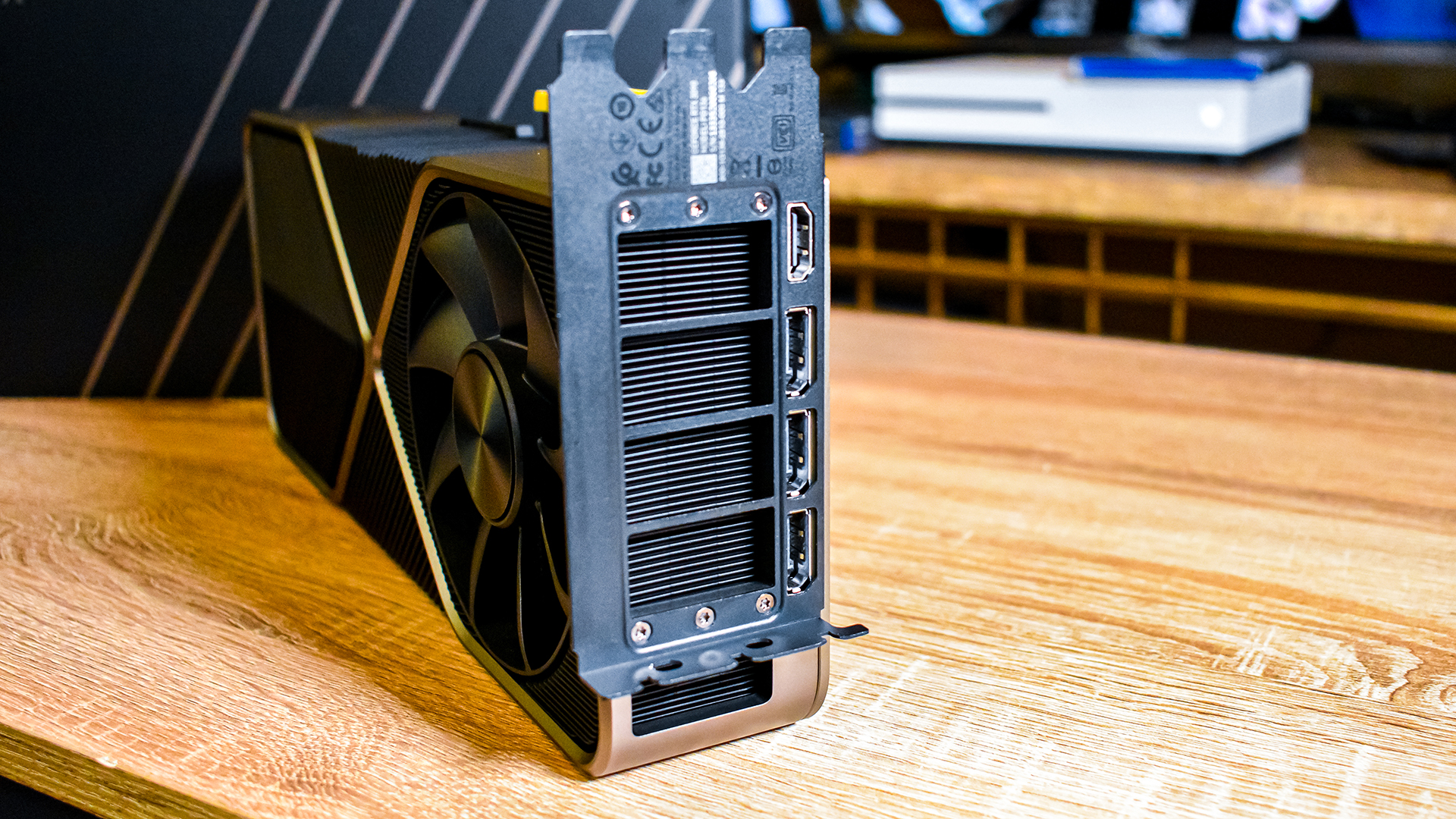

Outputs: 1 x HDMI 2.1, 3 x DisplayPort 1.4

Power connector: 1 x 12-pin

Just like its little sibling, the RTX 3080, the RTX 3090 is built on the Nvidia Ampere architecture and the GA102 GPU, but with nearly the whole chip enabled. This time around, we're getting 82 Streaming Multiprocessors (SMs), making for a total of 10,496 CUDA cores, along with 328 Tensor cores and 82 RT cores.

At first glance, the small bump up from the 72 SMs on the Nvidia Turing-based Titan RTX seems like a minor improvement, but one of the most groundbreaking changes in the Ampere architecture is that both datapaths in each SM can now handle FP32 workloads. This effectively doubles the CUDA core count per SM, which is why the RTX 3090 is such a rendering behemoth.
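To make the core-count arithmetic concrete, here is a quick Python sketch. The 82 SMs, 128 CUDA cores per SM and 1,695MHz boost clock come from the spec list above; the figure of 64 FP32 cores per Turing SM, and the convention of counting one fused multiply-add as two FLOPs, are our own additions for illustration. The TFLOPS number is a theoretical peak, not something we measured.

```python
# From the spec list: 128 FP32 CUDA cores per Ampere SM.
# Assumption (not from the spec list): 64 FP32 CUDA cores per Turing SM.
AMPERE_FP32_PER_SM = 128
TURING_FP32_PER_SM = 64

def cuda_cores(sms: int, cores_per_sm: int) -> int:
    """Total FP32 CUDA cores = streaming multiprocessors x cores per SM."""
    return sms * cores_per_sm

def peak_fp32_tflops(cores: int, boost_mhz: float) -> float:
    """Theoretical peak: each core retires one fused multiply-add (2 FLOPs) per clock."""
    return cores * 2 * boost_mhz * 1e6 / 1e12

rtx_3090 = cuda_cores(82, AMPERE_FP32_PER_SM)   # Ampere: 82 SMs
titan_rtx = cuda_cores(72, TURING_FP32_PER_SM)  # Turing: 72 SMs

print(f"RTX 3090: {rtx_3090:,} CUDA cores, ~{peak_fp32_tflops(rtx_3090, 1695):.1f} TFLOPS FP32 peak")
print(f"Titan RTX: {titan_rtx:,} CUDA cores")
print(f"SMs grew {82 / 72:.2f}x, but CUDA cores grew {rtx_3090 / titan_rtx:.2f}x")
```

The last line is the point: a 14% bump in SMs turns into more than twice the CUDA cores once each SM can run FP32 on both datapaths.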

The RTX 3090 is also rocking 24GB of GDDR6X video memory on a 384-bit bus, which makes for 936GB/s of memory bandwidth – that's nearly a terabyte of data every second. Having such a huge pool of VRAM that is this fast means that anyone who does heavy rendering work in applications like DaVinci Resolve and Blender will get a huge benefit. And when your work involves these applications, anything that shortens project times saves you money in the long run. Combined with the comparatively low cost – at least compared to the Titan RTX – the RTX 3090 is a straight-up bargain.
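The bandwidth figure falls out of a simple formula: bus width times per-pin data rate, divided by eight bits per byte. A minimal sketch, assuming theoretical peak rates with no protocol overhead. Working backwards, 936GB/s on a 384-bit bus corresponds to 19.5Gbps per pin, while a flat 20Gbps would give 960GB/s.

```python
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: each of the bus's pins moves data_rate_gbps gigabits
    per second, so multiply by the pin count and divide by 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8

print(memory_bandwidth_gbs(384, 19.5))  # 936.0
print(memory_bandwidth_gbs(384, 20.0))  # 960.0
```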

As we mentioned in our RTX 3080 review, both the Tensor cores and RT cores that Nvidia has made such a huge deal of over the past couple of graphics card generations see big improvements, too. Namely, the second-generation RT cores in RTX 3000 series cards double the throughput of their predecessors.

In ray tracing applications, the SM will essentially cast a light ray, then offload ray tracing workloads to the RT cores, where they will calculate where in the scene it bounces, reporting that data back to the SM. In the past, ray tracing was basically impossible to do in real time, as the SM would be responsible for doing that whole calculation on its own, on top of any rasterization it had to do at the same time.
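For a sense of what gets offloaded, Nvidia describes its RT cores as accelerating bounding volume hierarchy (BVH) traversal and ray-triangle intersection tests in hardware. The sketch below is a software version of a single ray-triangle test using the well-known Möller-Trumbore algorithm. It's our choice of textbook method, not Nvidia's hardware implementation, and it skips BVH traversal entirely. A real frame needs millions of these tests, which is why leaving them all to the SM was so slow.

```python
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]

def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def ray_triangle_hit(origin: Vec3, direction: Vec3,
                     v0: Vec3, v1: Vec3, v2: Vec3,
                     eps: float = 1e-9) -> Optional[float]:
    """Moller-Trumbore test: return the distance t along the ray to the hit
    point, or None if the ray misses the triangle."""
    edge1 = sub(v1, v0)
    edge2 = sub(v2, v0)
    p = cross(direction, edge2)
    det = dot(edge1, p)
    if abs(det) < eps:               # ray is parallel to the triangle's plane
        return None
    inv_det = 1.0 / det
    s = sub(origin, v0)
    u = dot(s, p) * inv_det          # first barycentric coordinate
    if u < 0.0 or u > 1.0:
        return None
    q = cross(s, edge1)
    v = dot(direction, q) * inv_det  # second barycentric coordinate
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(edge2, q) * inv_det      # distance along the ray
    return t if t > eps else None    # ignore hits behind the ray's origin

TRI = ((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0))
print(ray_triangle_hit((0.25, 0.25, 1.0), (0.0, 0.0, -1.0), *TRI))  # 1.0
print(ray_triangle_hit((2.0, 2.0, 1.0), (0.0, 0.0, -1.0), *TRI))    # None
```

A ray fired straight down at the triangle's interior returns its hit distance; one fired outside the triangle's edges returns None.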

But while the RT core takes on the bulk of that workload, ray tracing is still very computationally expensive and carries a heavy performance cost. That's why DLSS is becoming more and more important, both in gaming and in programs like D5 Render.

The third-generation Tensor Cores in Nvidia Ampere graphics cards have also seen a massive improvement, each doubling in speed over the Turing Tensor Core. However, DLSS performance hasn't seen a 2x bump overall, as each SM now packs four Tensor Cores, whereas each Turing SM had eight.
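The Tensor Core trade-off can be checked with some back-of-the-envelope arithmetic. Nvidia's spec sheets put Ampere at four Tensor Cores per SM (328 across the RTX 3090's 82 SMs, matching the spec list) and Turing at eight per SM, with each Ampere core roughly twice as fast. This sketch assumes equal clocks and dense (non-sparse) workloads, and measures throughput in hypothetical "Turing Tensor Core units".

```python
# Assumptions: clocks are ignored and sparsity acceleration is not used.
TURING_TC_PER_SM, TURING_TC_SPEED = 8, 1.0
AMPERE_TC_PER_SM, AMPERE_TC_SPEED = 4, 2.0  # half as many cores, each ~2x as fast

def tensor_throughput(sms: int, cores_per_sm: int, per_core_speed: float) -> float:
    """Relative throughput of a whole GPU, in Turing Tensor Core units."""
    return sms * cores_per_sm * per_core_speed

per_sm_ratio = (AMPERE_TC_PER_SM * AMPERE_TC_SPEED) / (TURING_TC_PER_SM * TURING_TC_SPEED)
rtx_3090 = tensor_throughput(82, AMPERE_TC_PER_SM, AMPERE_TC_SPEED)   # 328 cores
titan_rtx = tensor_throughput(72, TURING_TC_PER_SM, TURING_TC_SPEED)  # 576 cores

print(f"Per-SM Ampere vs Turing: {per_sm_ratio:.2f}x")
print(f"Whole GPU, RTX 3090 vs Titan RTX: {rtx_3090 / titan_rtx:.2f}x")
```

Per SM, the faster cores and the halved core count cancel out, so the whole-GPU gain mostly comes from the extra SMs rather than a 2x jump.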

These Tensor Cores do more than just power DLSS, however. They're also the technology that enables Nvidia Broadcast, which is by far one of the most underrated features released for this generation.

We have been using Nvidia Broadcast during all of our meetings and late-night Discord hangouts over the last few weeks, and it is phenomenal at both filtering unwanted noise out of your microphone and replacing your background when you're in the 50th Zoom call of the week, and you have lost all energy and will to clean up.

Now that we've all been working from home for so long, and with it looking like that's going to become the new normal, anything that makes video conferencing less stressful is a huge benefit. The best part is that this technology is available to anyone with an Nvidia RTX-powered device.

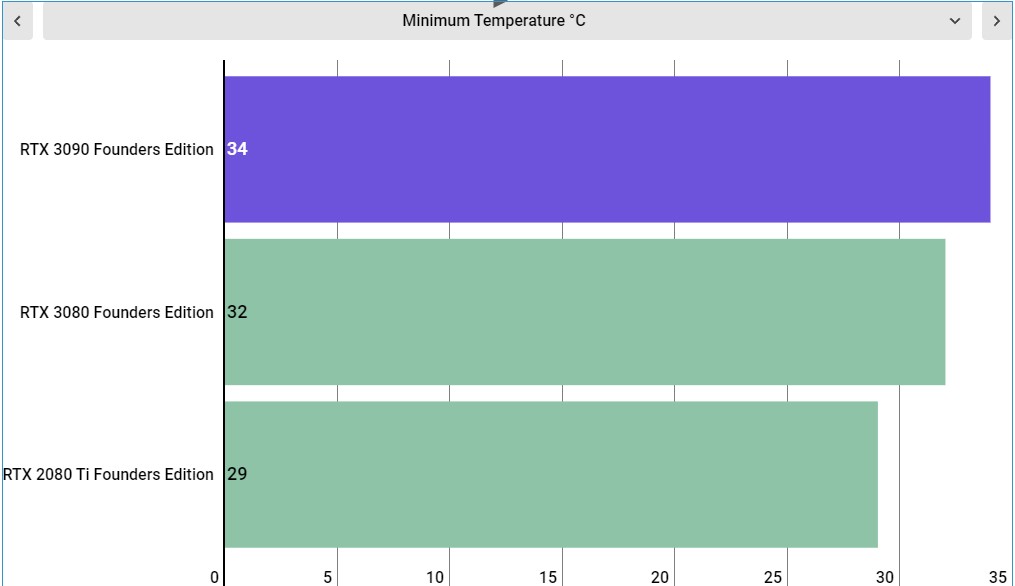

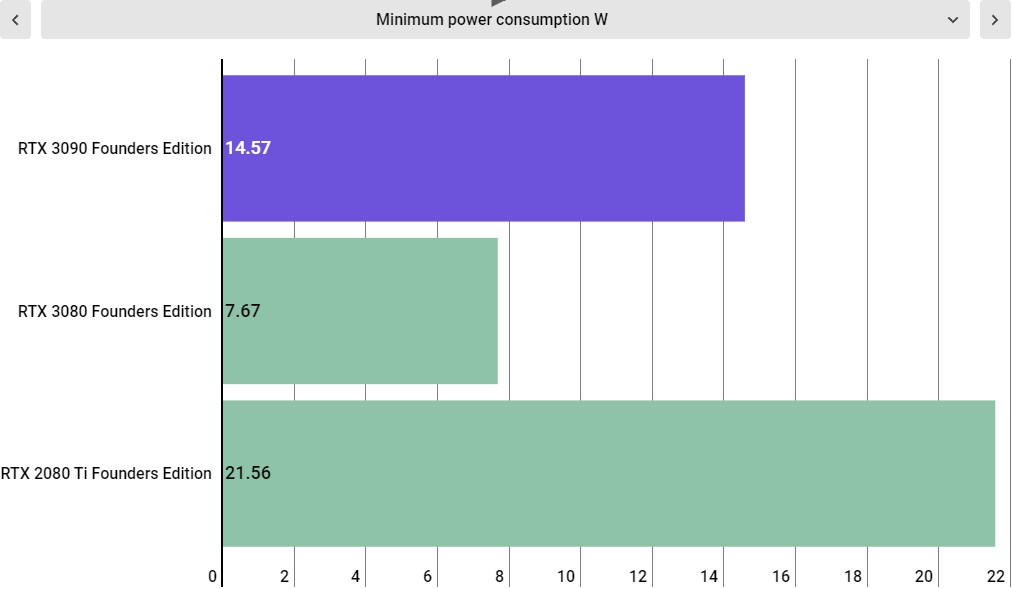

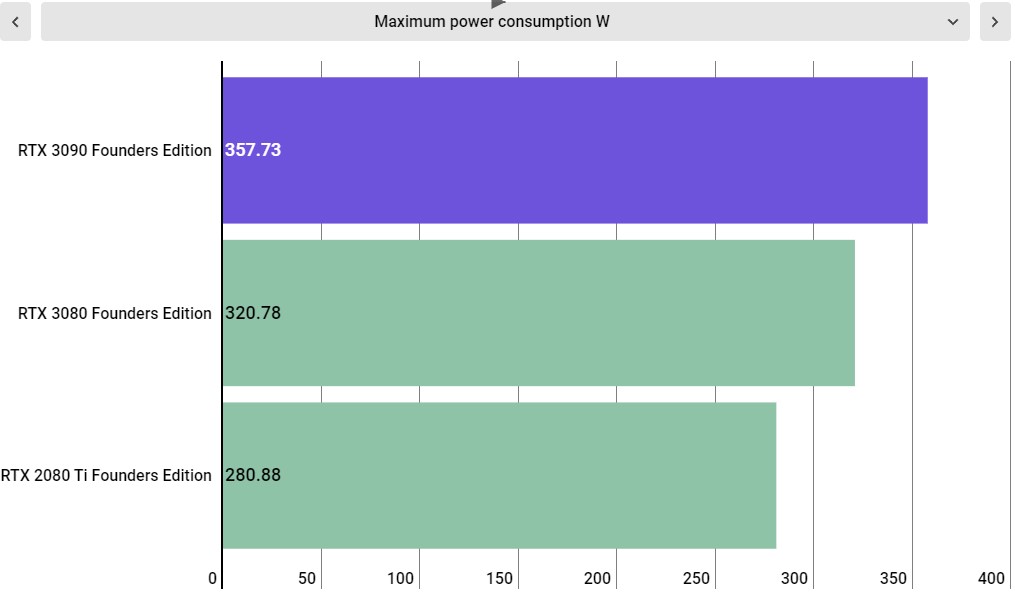

However, we have to talk about power consumption. At its peak during our testing, the Nvidia GeForce RTX 3090 consumed 357W. That's a lot of juice, especially if you're pairing it with a powerful processor. Nvidia recommends a minimum power supply of 750W, but we would go further and recommend a full 1,000W PSU with this card, just to be sure you don't run into any random shutdowns if your power supply isn't running at peak efficiency.
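To see why we lean toward a bigger power supply, here is a rough power-budget sketch. Only the 357W GPU peak comes from our testing; the CPU and rest-of-system figures are hypothetical placeholders, so substitute your own components' numbers.

```python
def psu_load(gpu_w: float, cpu_w: float, rest_w: float, psu_w: float) -> float:
    """Fraction of the PSU's rated output used by the estimated system draw."""
    return (gpu_w + cpu_w + rest_w) / psu_w

GPU_PEAK_W = 357  # measured peak from our testing
CPU_W = 150       # hypothetical: high-end desktop CPU under load
REST_W = 75       # hypothetical: motherboard, RAM, storage, fans, pump

for psu in (750, 1000):
    print(f"{psu}W PSU: {psu_load(GPU_PEAK_W, CPU_W, REST_W, psu):.0%} of rated output")
```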

- Features & chipset: 5 / 5

Nvidia GeForce RTX 3090: Design

- Absolutely massive footprint

- Phenomenal cooler design

- 12-pin connector requires an adapter, which ships with the card

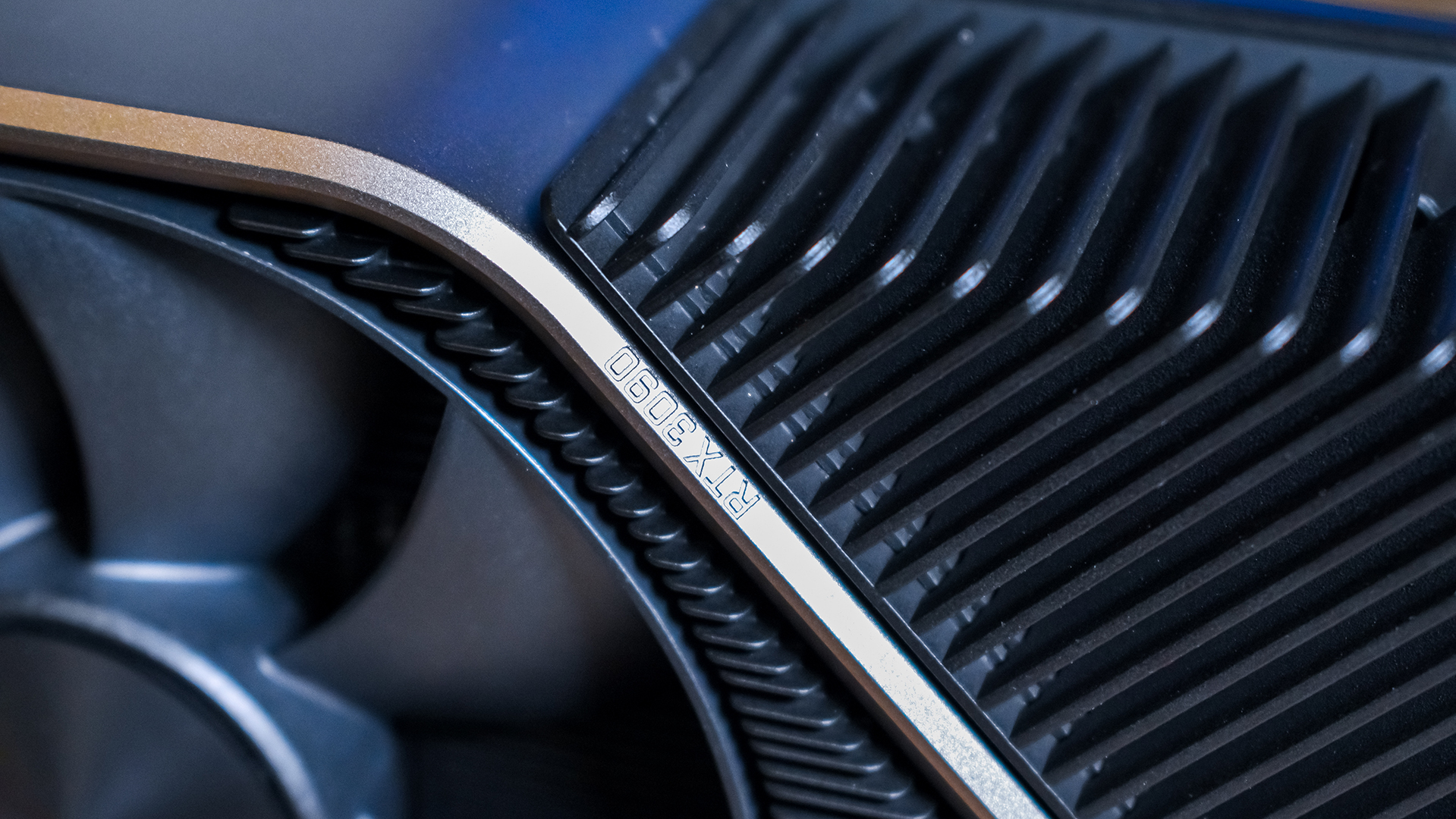

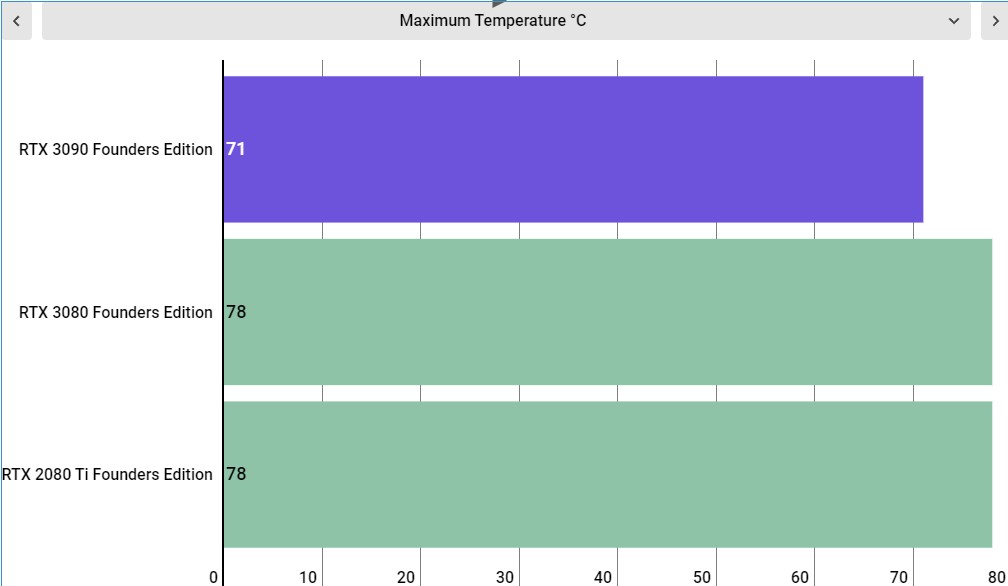

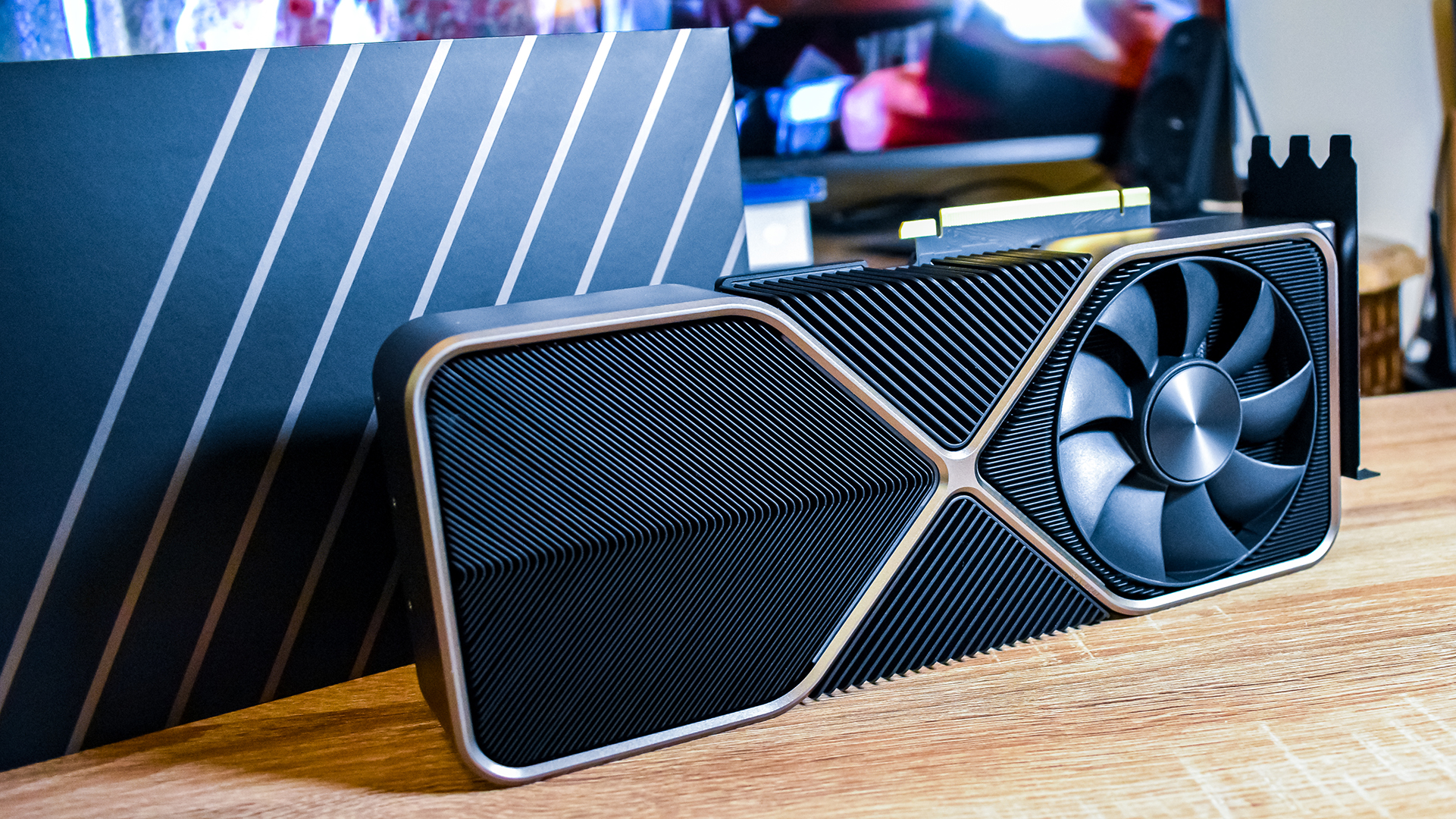

Just like the RTX 3080, the Founders Edition of the Nvidia GeForce RTX 3090 has hands-down the best cooler design Team Green has ever shipped itself. It's also an absolutely massive graphics card: the thing is 12.3 inches long, takes up three slots, and weighs, well, a lot. Look, we don't have a scale, and Nvidia doesn't list the weight, but it's one heavy card.

Aesthetically, it looks very similar to the RTX 3080 Founders Edition, with a black and gunmetal gray color scheme and fans on either side of the card. The same cooling philosophy applies here – suck air up through the graphics card and exhaust it towards the top of the case. The heatsink also has a ton of surface area, which makes for more efficient cooling.

To make this cooler design possible, Nvidia had to completely redesign the PCB so that air could pass through the back of the graphics card at all. Because of this, Nvidia implemented a new 12-pin PCIe power connector, rather than the dual 8-pin connectors you may have expected.

At the time of writing, there aren't really any power supplies that natively support this new power connector, though there are some custom cables from the likes of Corsair in the works. Luckily, Nvidia includes a 2 x 8-pin to 1 x 12-pin power adapter in the box, so it's not something you have to worry about right now.

The adapter is a bit unsightly, though, and it makes it hard to achieve as clean a cable setup as you would have otherwise. However, third-party graphics card manufacturers are using the same 8-pin power connectors as always, so you can always go with one of those if you need to.

Because this is a large triple-slot card, you're going to want to double-check that your case can actually fit it. Some people with trendy micro ATX and mini-ITX cases will sadly be out of luck.

- Design: 4.5 / 5

Nvidia GeForce RTX 3090: Performance

- Absolutely chews through creative workloads like nothing

- Actually playable 8K gaming. Yes, we said 8K gaming.

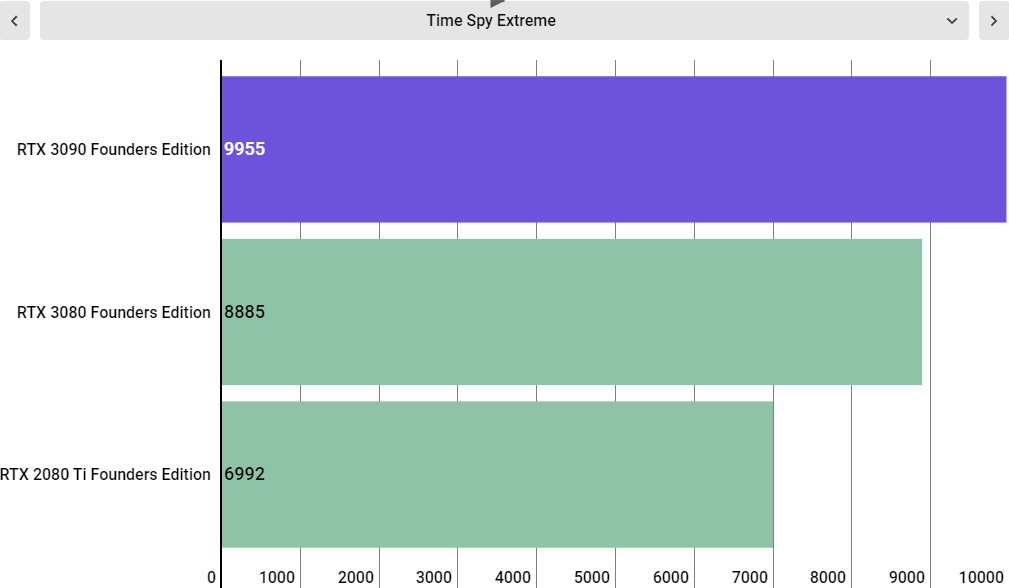

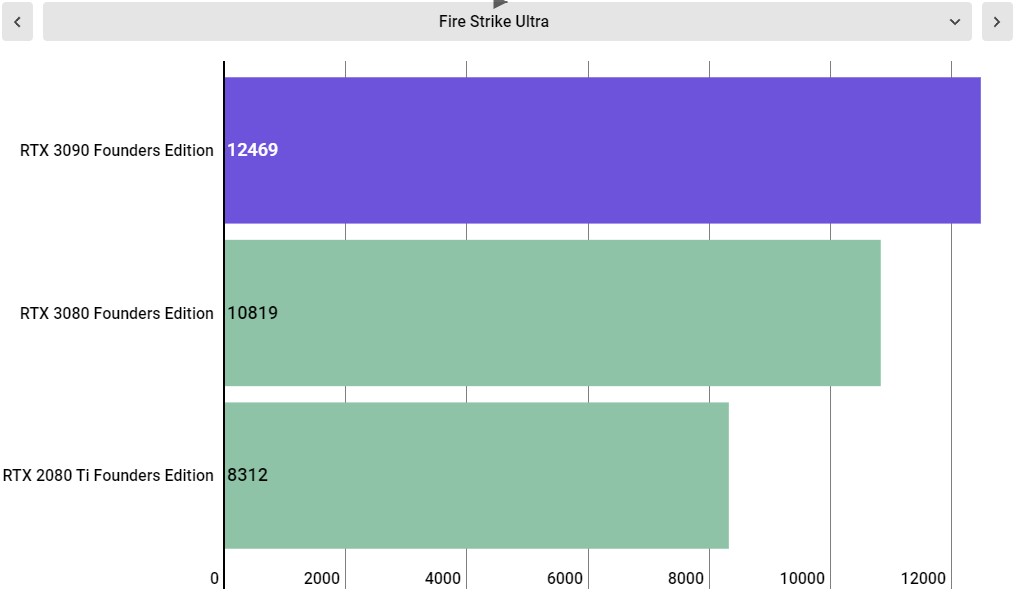

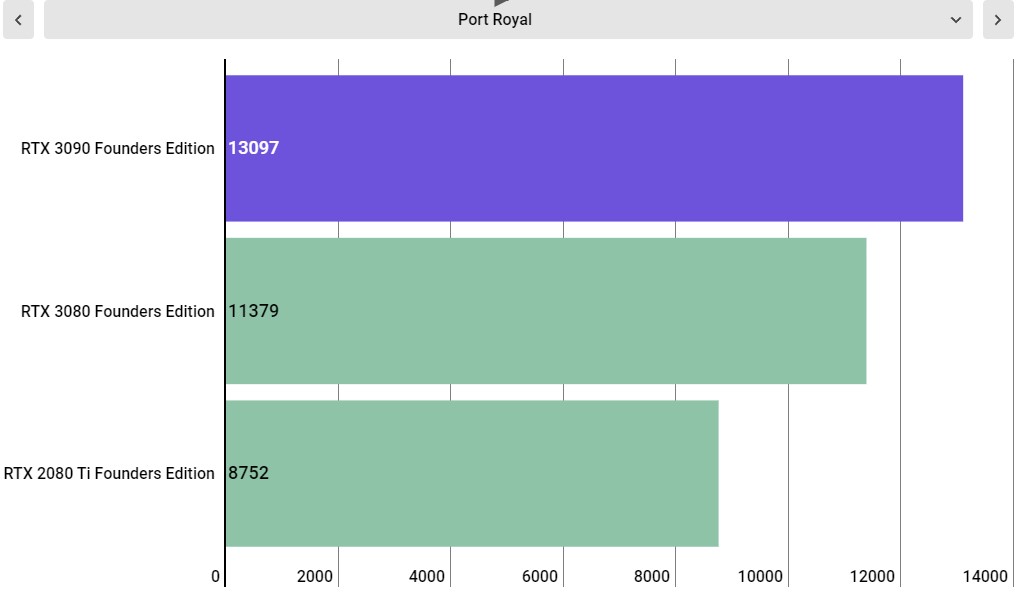

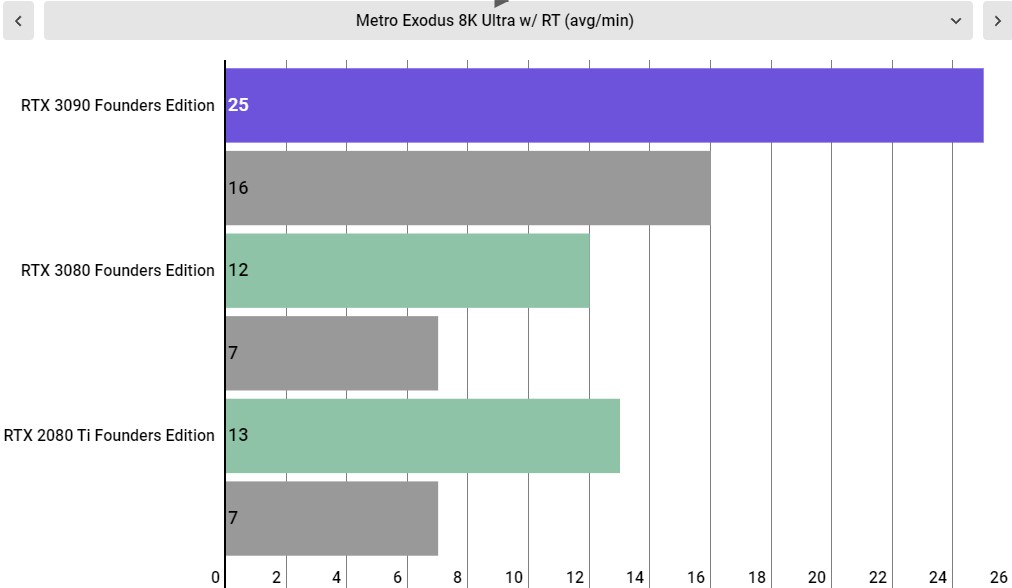

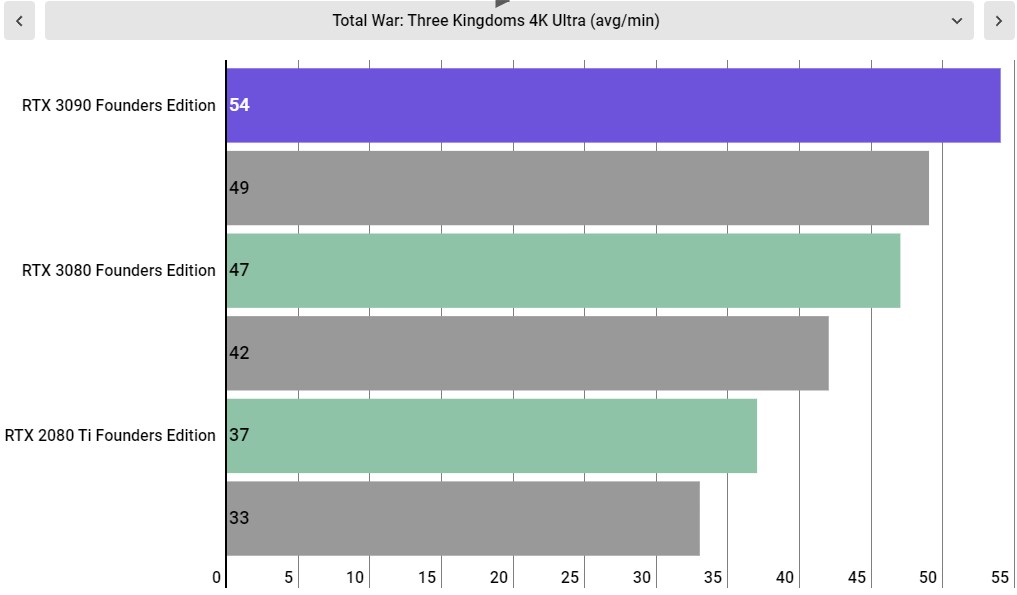

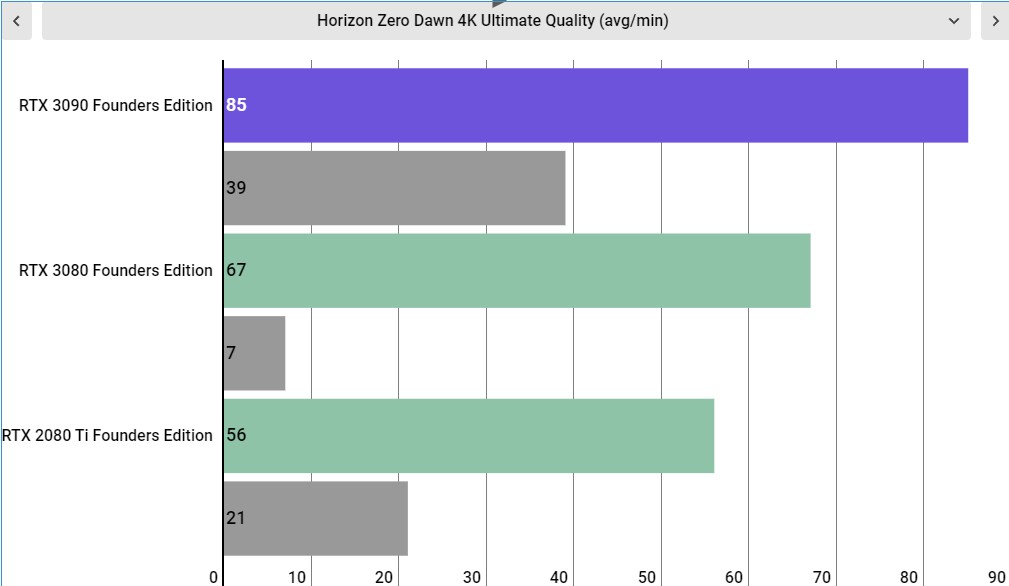

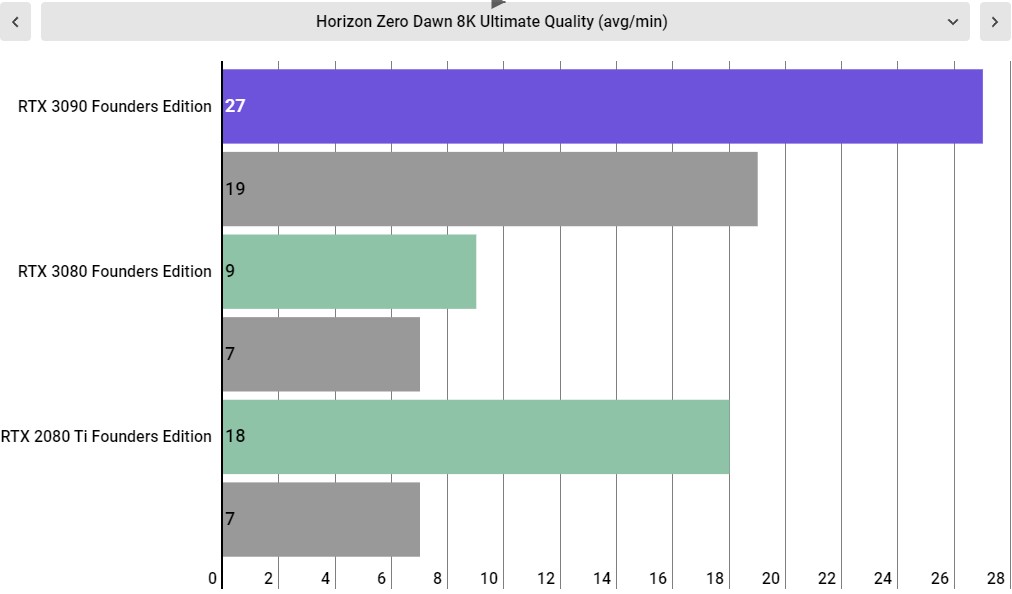

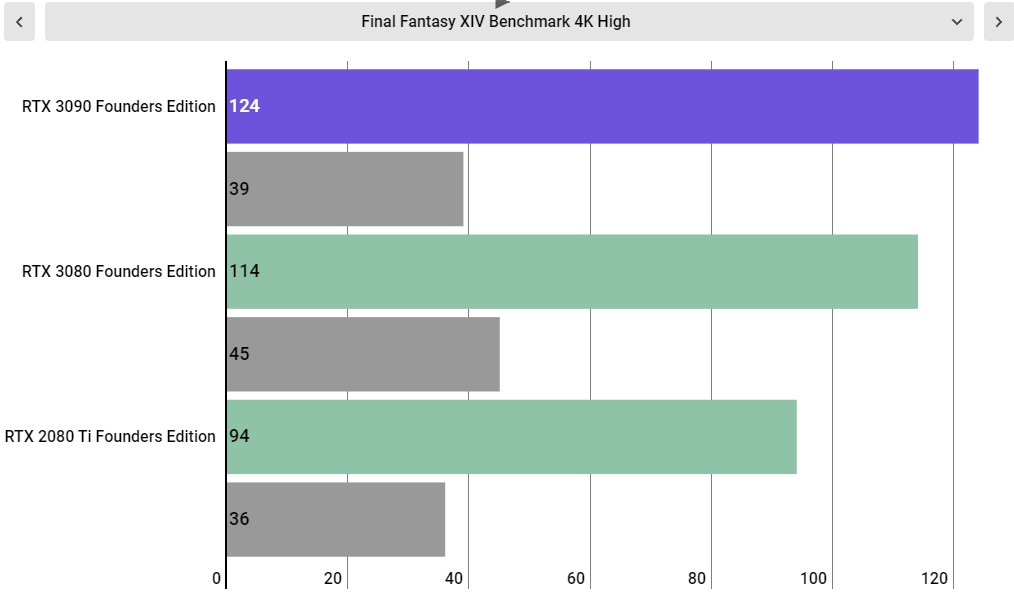

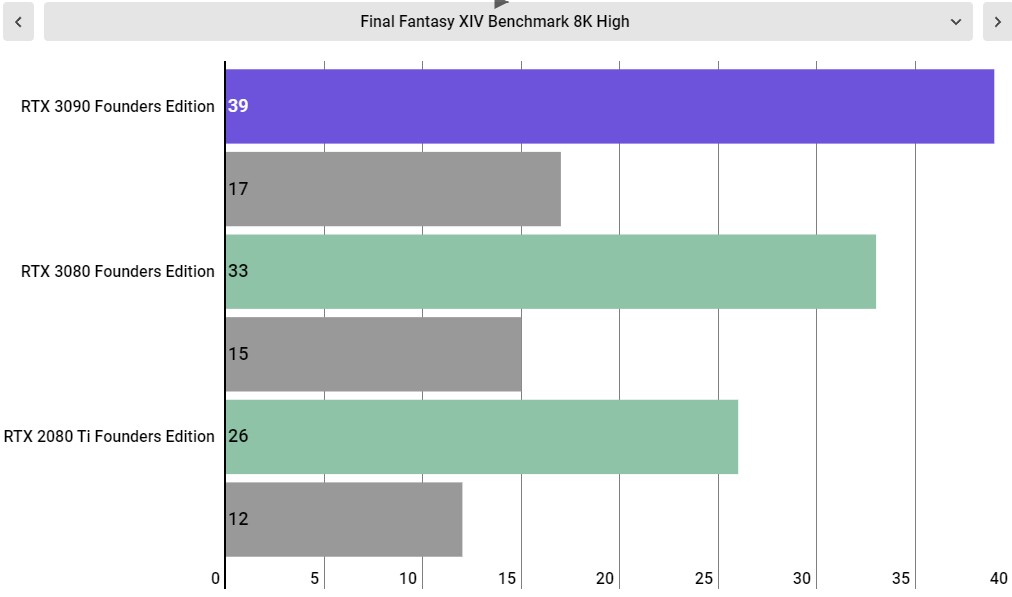

When it comes to gaming performance, we're going to just come out and say it – the Nvidia GeForce RTX 3090 is just 10-20% faster than the RTX 3080 at more than double the price. If all you're looking for is a gaming card, that increase in performance is absolutely not worth it, and that's OK.
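The value math behind that verdict is simple to sketch. The $1,499 price is the Founders Edition figure from this review; the $699 RTX 3080 Founders Edition launch price is our addition, and the calculation treats our measured 10-20% gaming lead as a flat multiplier, which real games won't follow exactly.

```python
RTX_3090_PRICE = 1499  # Founders Edition price quoted in this review
RTX_3080_PRICE = 699   # assumption: RTX 3080 Founders Edition launch MSRP

def relative_perf_per_dollar(perf_uplift: float, price: float, baseline_price: float) -> float:
    """Performance per dollar relative to a baseline card that scores 1.0.
    perf_uplift is the fractional speed advantage (0.15 means 15% faster)."""
    return (1 + perf_uplift) / (price / baseline_price)

for uplift in (0.10, 0.15, 0.20):  # our measured 10-20% gaming lead over the RTX 3080
    value = relative_perf_per_dollar(uplift, RTX_3090_PRICE, RTX_3080_PRICE)
    print(f"{uplift:.0%} faster: {value:.2f}x the RTX 3080's gaming performance per dollar")
```

Even at the top of that range, each dollar buys only a little over half the gaming performance it would on the RTX 3080.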

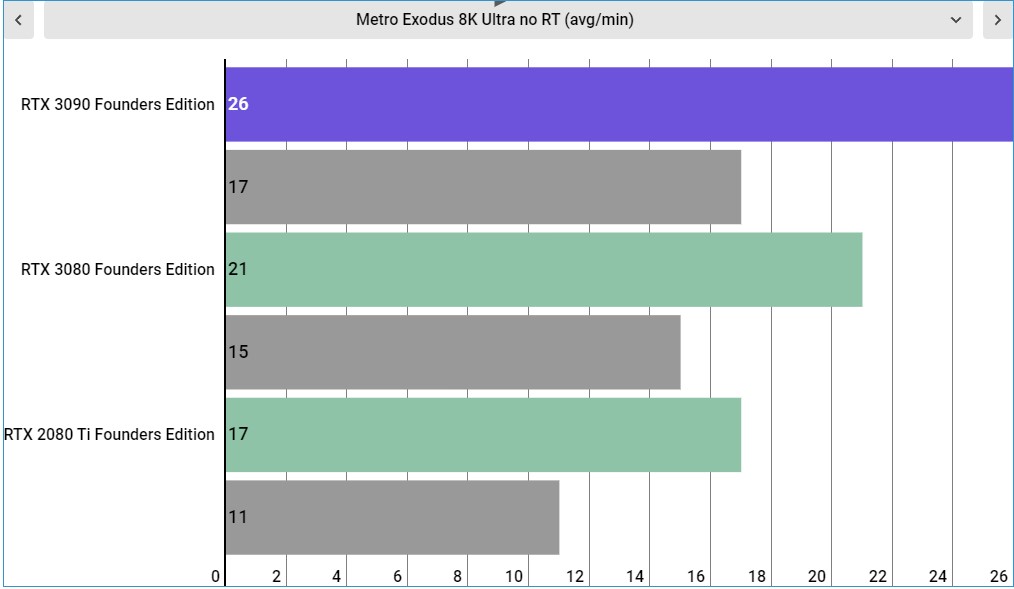

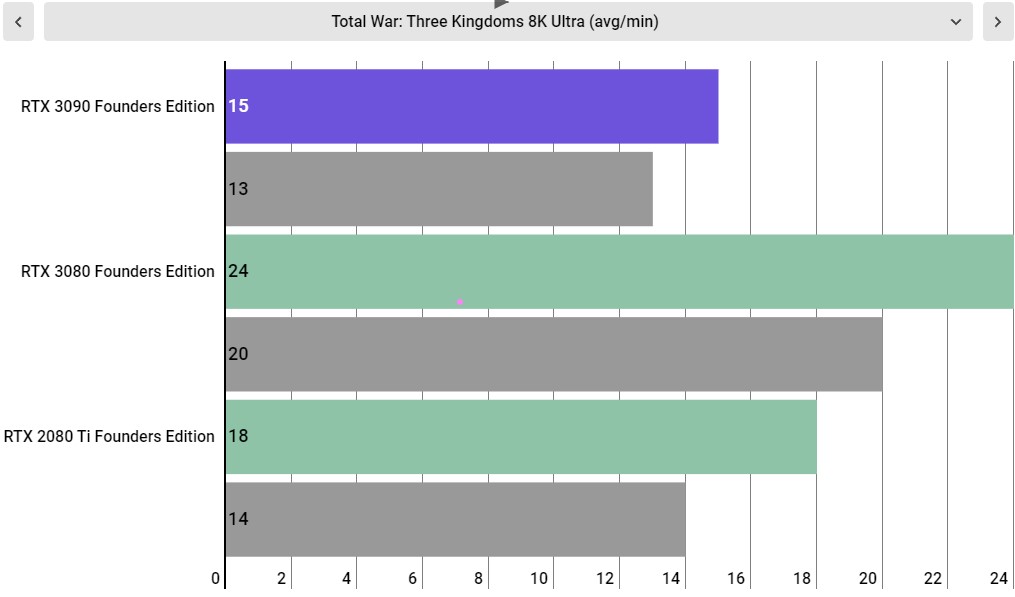

This card is primarily aimed at early adopters of 8K gaming and at professional 3D rendering applications. That being said, we can't really talk about the RTX 3090 without talking about 8K gaming.

This is the system we used to test the Nvidia GeForce RTX 3090 Founders Edition:

CPU: AMD Ryzen 9 3950X (16-core, up to 4.7GHz)

CPU Cooler: Cooler Master MasterLiquid 360P Silver Edition

RAM: 64GB Corsair Dominator Platinum @ 3,600MHz

Motherboard: ASRock X570 Taichi

SSD: ADATA XPG SX8200 Pro @ 1TB

Power Supply: Corsair AX1000

Case: Praxis Wetbench

Right now, 8K pretty much exclusively exists in the realm of high-end TVs, which means the panels aren't exactly widespread. That will almost certainly change in the coming years, but right now there isn't a single gaming monitor that supports the resolution.

We don't have an 8K display handy, either – the display we use for our 8K performance features is on the other side of the Atlantic Ocean from our controlled setup for testing computing components. However, thanks to Nvidia's Dynamic Super Resolution, or DSR, we can test games in 8K and scale them back down to the native 4K resolution of the monitor we use for testing.
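Conceptually, 8K DSR renders four pixels for every one the 4K monitor can show, then filters them back down. Nvidia describes DSR as applying a Gaussian filter with an adjustable smoothness setting; the sketch below swaps in a plain 2×2 box average to show the idea, on a hypothetical grayscale frame stored as nested lists.

```python
from typing import List

Frame = List[List[float]]  # rows of grayscale pixel values

def downsample_2x(frame: Frame) -> Frame:
    """Average each 2x2 block into one pixel, e.g. 7680x4320 (8K) -> 3840x2160 (4K).
    Simplified box filter; DSR itself uses a Gaussian filter."""
    h, w = len(frame), len(frame[0])
    if h % 2 or w % 2:
        raise ValueError("frame dimensions must be even")
    return [
        [
            (frame[y][x] + frame[y][x + 1] + frame[y + 1][x] + frame[y + 1][x + 1]) / 4
            for x in range(0, w, 2)
        ]
        for y in range(0, h, 2)
    ]

tiny = [[0, 1, 2, 3],
        [1, 2, 3, 4]]
print(downsample_2x(tiny))  # [[1.0, 3.0]]
```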

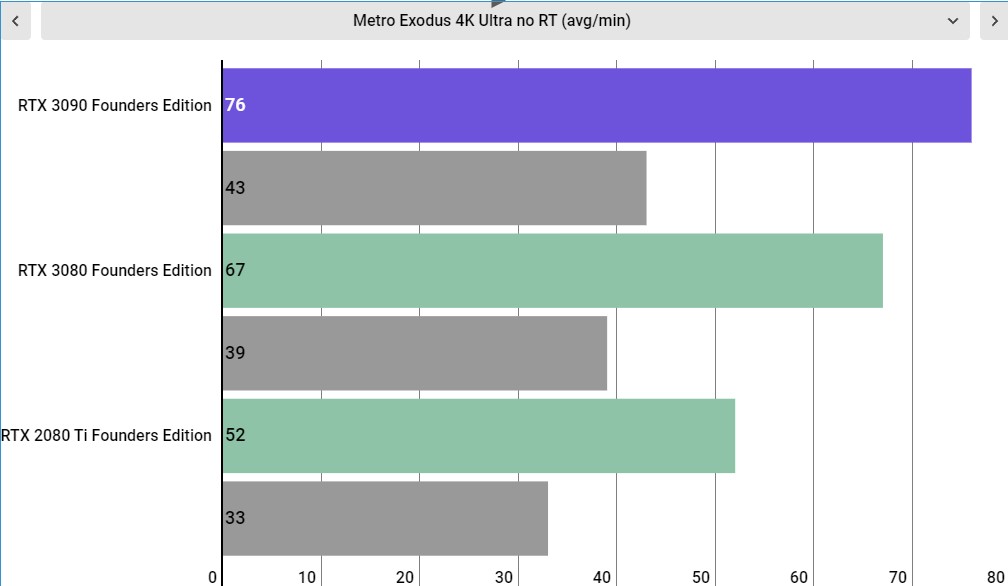

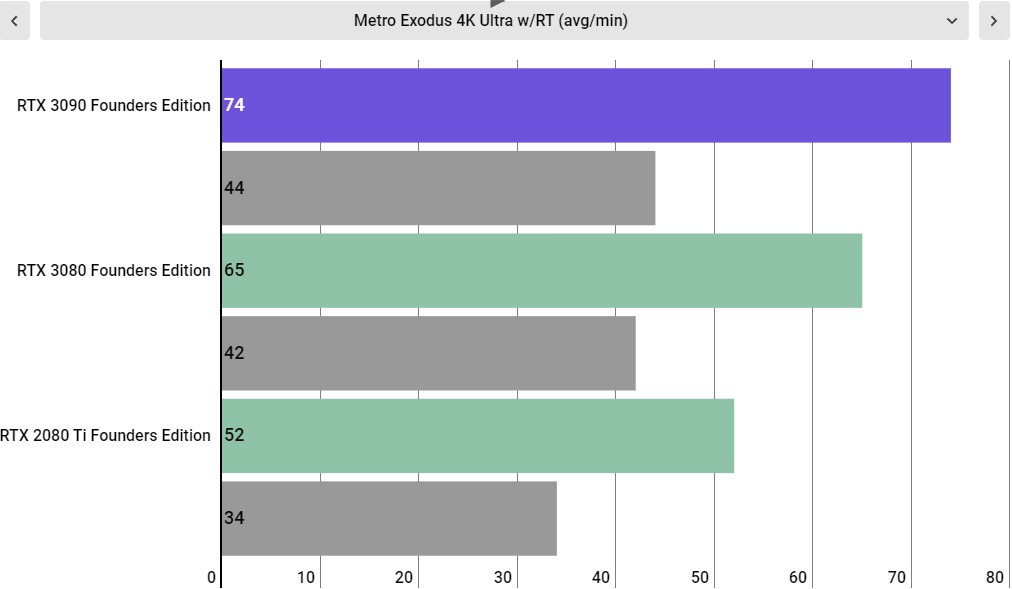

This comes with a sizable 3-5% performance loss, but otherwise provides a good glimpse at what 8K gaming performance looks like. As you may be able to discern from the charts above, the RTX 3090 doesn't provide the straight 8K 60 fps experience in the most demanding games on PC. But like most things, it's complicated.
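Part of why 8K is so punishing is raw pixel count: 7680×4320 is exactly four times 3840×2160. This sketch, assuming the standard 16:9 consumer resolutions, shows how many pixels the GPU has to produce per frame and per second at 60fps.

```python
RESOLUTIONS = {
    "4K": (3840, 2160),
    "8K": (7680, 4320),
}

def pixels(name: str) -> int:
    """Pixels per frame at the named resolution."""
    w, h = RESOLUTIONS[name]
    return w * h

for name in RESOLUTIONS:
    per_frame = pixels(name)
    print(f"{name}: {per_frame / 1e6:.1f}M pixels per frame, "
          f"{per_frame * 60 / 1e9:.2f}G pixels per second at 60fps")

print(f"8K has {pixels('8K') // pixels('4K')}x the pixels of 4K")
```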

The games we selected for testing are there to push the GPU to its limits, so we can compare the performance delta between different graphics cards. They provide easily repeatable benchmark scripts, so we can be sure the exact same workload is applied to each graphics card.

But you can bet your bottom dollar that we threw this graphics card into our personal machine and tried it across a wide spectrum of PC games, both at 4K and 8K DSR to see how it performs.

In games like World of Warcraft: Battle for Azeroth and Destiny 2, we were able to get a steady 60-70 fps at this extremely high resolution, but with quality settings at high, rather than having all the eye candy turned on. This isn't as big a loss as it sounds, as the 24GB of VRAM is enough so that we didn't have to turn down textures.

However, once we start diving into more visually intense single player games like Control and even Dark Souls III, we typically see frame rates in the 40s – which is definitely still playable performance. So, while we probably wouldn't advise people start playing games in 8K yet, this is definitely the first graphics card we've ever tested that provides actual playable gameplay at this resolution – similar to how the Nvidia GeForce GTX 980 Ti and the Titan X broke into 4K gaming back in 2015.

That alone makes the Nvidia GeForce RTX 3090 appealing; however, 8K gaming isn't perfect yet, and it's definitely not at the point where you can just crank up the settings and forget about it. For 8K gaming, the Nvidia GeForce RTX 3090 is early adopter tech in every single way – you have to take into consideration that this is the first graphics card that can provide playable frame rates at this high resolution, so expecting silky smooth gameplay in every game is kind of a pipe dream. It's impressive that the RTX 3090 can handle this resolution at all.

At 4K, however, the RTX 3090 does provide the level of performance where you can crank every setting up to max and forget about it. Every single game we tried to play with this graphics card at 4K ran like a dream. Final Fantasy XV with everything turned up? 70+ fps, with the stutter that game is infamous for almost completely eliminated thanks to the 24GB frame buffer.

Ditto with Metro Exodus and Control, games that constantly reminded us of the limits of 4K gaming on the RTX 2080 Ti just a few months before the RTX 3090 launched, but which now run like an absolute dream with all the eye candy enabled. Yes, the RTX 3090 only provides a 15% improvement over the RTX 3080. But the people who will buy this card for gaming are the type who just want bleeding-edge tech, price be damned. And, well, the experience here is definitely premium, even if the price-to-performance ratio won't make sense for the vast majority of people.

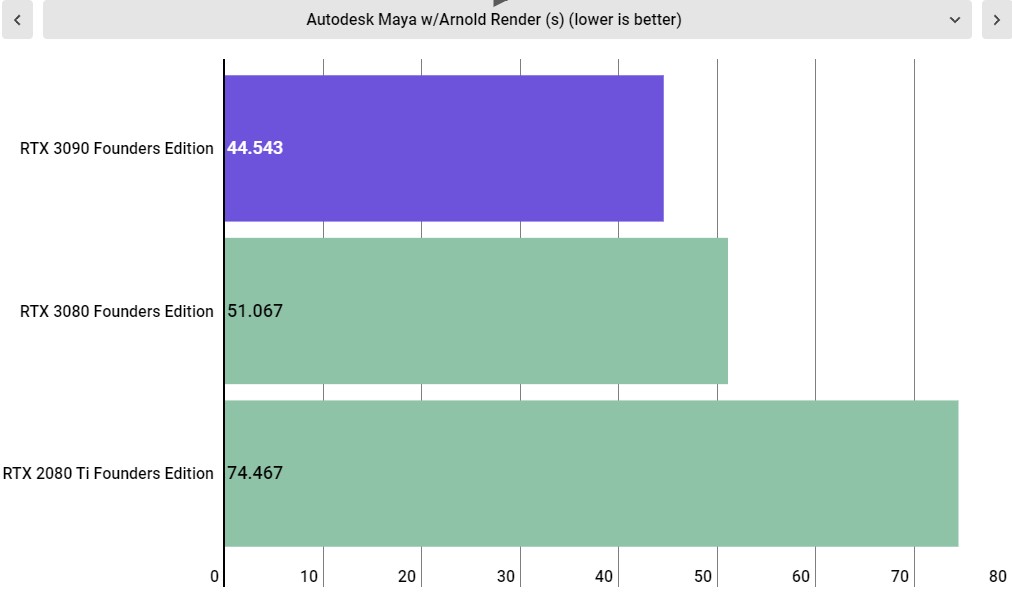

Where the RTX 3090 really shines, however, is in 3D rendering and encoding, making it hugely compelling to creative professionals who need this level of performance without investing thousands of dollars in a Quadro or Tesla card.

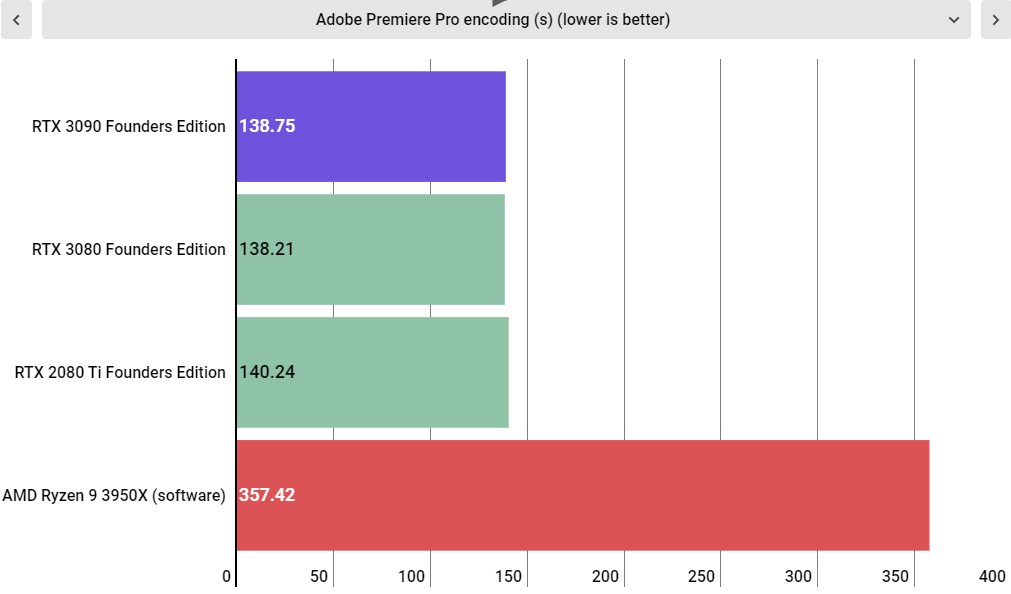

It should be noted that the NVENC encoder here is the same as on the RTX 2080 Ti, which means encoding performance doesn't change much from one generation to the next. However, now that this encoder is supported in Adobe Premiere, nearly the entire video production workflow is accelerated by the GPU, which makes a dedicated GPU incredibly beneficial to video editors. Encoding a video with the NVENC encoder, rather than in software, cuts the time in half, even when we're using the 16-core AMD Ryzen 9 3950X paired with 64GB of RAM running at 3,600MHz.
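Our encoding test ran in Adobe Premiere, but the same hardware-versus-software split can be tried with the free ffmpeg tool, which we've swapped in here. This sketch only builds the command lines: `h264_nvenc` is ffmpeg's NVENC H.264 encoder and `libx264` is its CPU-based software encoder. It assumes an ffmpeg build with NVENC support and an Nvidia driver installed, and the file names are hypothetical.

```python
import shlex
from typing import List

def encode_command(src: str, dst: str, use_nvenc: bool) -> List[str]:
    """Build an ffmpeg command that encodes H.264 video either on the GPU's
    NVENC block (h264_nvenc) or in software on the CPU (libx264)."""
    video_codec = "h264_nvenc" if use_nvenc else "libx264"
    return ["ffmpeg", "-i", src, "-c:v", video_codec, dst]

print(shlex.join(encode_command("timeline_export.mp4", "out_gpu.mp4", use_nvenc=True)))
print(shlex.join(encode_command("timeline_export.mp4", "out_cpu.mp4", use_nvenc=False)))
```

To time the two paths yourself, pass each list to `subprocess.run(cmd, check=True)` and wrap the call with `time.perf_counter()`.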

However, while there isn't really a difference between RTX GPUs in straight encoding performance, as they all use the same NVENC encoder, processing effects do see an improvement depending on the GPU you're using. With an RTX 3090 in your video editing PC, you should have absolutely no problem playing back video in real time while editing.

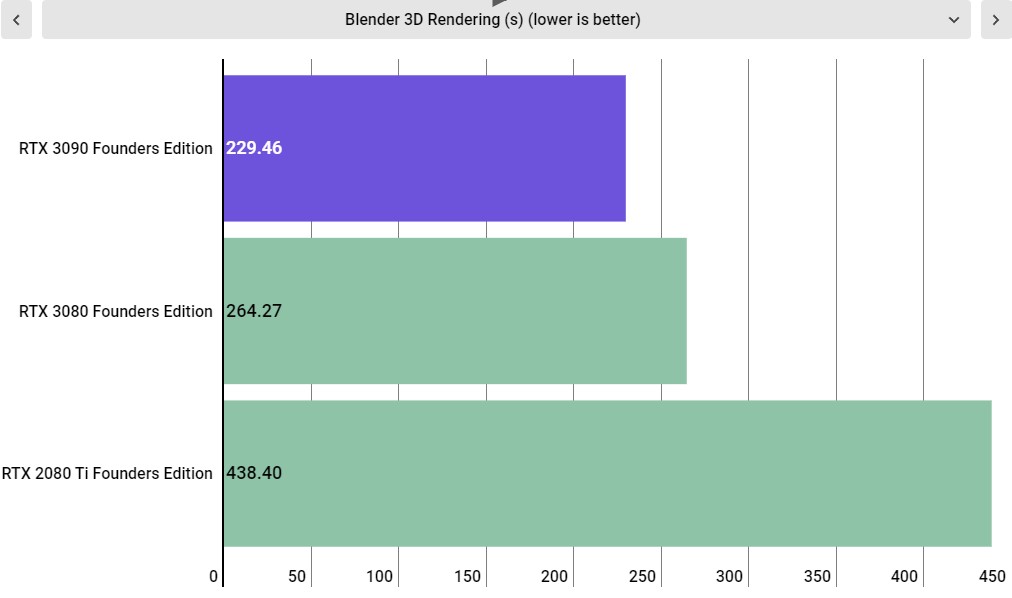

But where the RTX 3090 truly stands apart is in 3D rendering through applications like Blender and Autodesk Maya. In fact, while the RTX 3090 is around 50% faster than the RTX 2080 Ti in games, it's nearly twice as fast as the Turing flagship in Blender, completing the benchmark in just 229 seconds compared with the RTX 2080 Ti's 438 seconds. That's a 91% improvement, thanks in large part to the sheer amount of VRAM and FP32 performance on offer here.
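The Blender result can be expressed two ways, and it's worth being clear which one the 91% figure refers to. This sketch uses only the benchmark times from our testing: 91% is the speedup, while the time saved per render is a little under half.

```python
def speedup(old_seconds: float, new_seconds: float) -> float:
    """How many times faster the new card finishes the same render."""
    return old_seconds / new_seconds

def time_saved(old_seconds: float, new_seconds: float) -> float:
    """Fraction of the original render time that is eliminated."""
    return 1 - new_seconds / old_seconds

rtx_2080_ti, rtx_3090 = 438, 229  # Blender benchmark times in seconds, from our testing

print(f"{speedup(rtx_2080_ti, rtx_3090):.2f}x as fast "
      f"({speedup(rtx_2080_ti, rtx_3090) - 1:.0%} improvement)")
print(f"{time_saved(rtx_2080_ti, rtx_3090):.0%} less time per render")
```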

By pricing this graphics card at $1,499 (£1,399, around AU$2,030), rather than at the price the Titan RTX launched at, Nvidia may be making strides toward democratizing the kind of 3D rendering performance that will let people try their hand at 3D animation without having to invest in extremely expensive hardware. Maybe with Nvidia Lovelace, an RTX 4080 will deliver this kind of rendering performance and make it even easier for the layperson to get into 3D content creation. But, hey, at least it's more accessible now than ever before, and we'll consider that a win.

- Performance: 5 / 5

Should you buy an Nvidia GeForce RTX 3090?

Buy it if...

You want to be an 8K gaming pioneer

The Nvidia GeForce RTX 3090 is the first graphics card to provide playable gaming performance at 8K. It's not perfect, but it's the only card that won't give you a slideshow at that resolution.

You are a creative professional

If a large part of your daily workflow involves 3D Rendering, the massive compute performance and copious amount of VRAM on offer here will be a dream come true.

You don't want to ever worry about 4K gaming performance

With the Nvidia GeForce RTX 3090, we've finally reached the point where you can just max out whatever game you're playing at 4K and forget about it. All the eye candy that's inadvisable for most folks to turn on works like a breeze with the RTX 3090.

Don't buy it if...

You're on any semblance of a budget

The Nvidia GeForce RTX 3090 is the fastest graphics card in the world, yes, but it's also extremely expensive. The gains over the RTX 3080 aren't worth the price increase, so only people that want the very best no matter the price need apply here.

You're playing at any resolution under 4K

The RTX 3090 is a massively powerful graphics card and you simply won't get what you're paying for unless you're playing games at 4K.

You don't need the very best

If you're fine with solid gaming performance, and you don't want to pay twice the price of the RTX 3080 for 15% more gaming performance, you should probably go for the cheaper card – Nvidia itself called the RTX 3080 its flagship, after all.

Also consider

Nvidia GeForce RTX 3080 Ti

The RTX 3090 is an expensive graphics card, without question, so if it is just a little bit too expensive for your wallet to bear this time around, give the RTX 3080 Ti a look. It has comparable specs and performance, with a more accessible price.

Read the full Nvidia GeForce RTX 3080 Ti review

AMD Radeon RX 6950 XT

The AMD Radeon RX 6950 XT isn't competitive against the RTX 3090 when it comes to creative performance, at all. But there are a number of cases where its gaming performance was on par with the RTX 3090, and with a starting MSRP of $1,099 (about £880 / AU$1,420), it's a viable alternative for a gaming rig.

Read the full AMD Radeon RX 6950 XT review

Nvidia GeForce RTX 3080

If what you're looking to do is dive into the wonderful world of 4K gaming, then honestly the RTX 3090 might be overkill, especially for the price. The RTX 3080 gets 4K@60fps pretty easily and consistently for about half the price of the RTX 3090, so if you're just looking to game, you can save yourself some real money by dropping down just a bit to the next tier.

Read the full Nvidia GeForce RTX 3080 review

Nvidia GeForce RTX 3090: Report card

| Attribute | Notes | Rating |
|---|---|---|
| Value | It's strange to give the RTX 3090 credit for its value proposition, given that it's such an expensive card, but considering that this is much more of a prosumer/professional card, this is downright cheap for a workstation-class GPU. | 4 / 5 |
| Features & chipset | Nvidia's impressive Ampere architecture is on full display with the RTX 3090, featuring over 10,000 CUDA cores, second-generation RT cores, and third-generation Tensor cores to accelerate machine learning tasks. | 5 / 5 |
| Design | The RTX 3090 is an impressively huge graphics card, thanks in large part to its incredible cooling solution that keeps this powerful GPU running at a safe temperature, but that does mean that a lot of cases won't be able to fit it. | 4 / 5 |
| Performance | From playable 8K gaming to absolutely jaw-dropping performance in creative workloads, the RTX 3090 can do just about anything with best-in-class performance. | 5 / 5 |
| Total | While there are going to be some elite gamers out there who buy this card more for status than performance, this is much more of a creative professional's graphics card. As such, it does for professional workloads what the RTX 3080 does for 4K gaming at a (relatively) compelling price, making this the best graphics card for creative professionals. | 4.5 / 5 |

First reviewed September 2020

Jackie Thomas is the Hardware and Buying Guides Editor at IGN. Previously, she was TechRadar's US computing editor. She is fat, queer and extremely online. Computers are the devil, but she just happens to be a satanist. If you need to know anything about computing components, PC gaming or the best laptop on the market, don't be afraid to drop her a line on Twitter or through email.