As we continue to fill our homes with ever more wireless-connected devices, it has become essential to have a strong and reliable Wi-Fi network that can reach every corner of the house. Unfortunately, that’s not always the case, and suffering from a poor Wi-Fi signal can be incredibly frustrating as download speeds slow to a crawl, or websites fail to load altogether.

There are many factors that can play a part in weakening your Wi-Fi signal, from the placement of your router to the thick stone walls and reinforced ceilings of your property. This can cause problems for devices that rely on a stable Wi-Fi connection, but the good news is that there are a number of ways to ensure that you get Wi-Fi throughout your home. Here are some top tips for improving your home wireless signal.

Wi-Fi anywhere – for fast web browsing, gaming and streaming

First of all, make sure your wireless router is correctly positioned. Many people hide their routers in cupboards or behind ornaments, but this can weaken the signal.

Instead, you’ll want to put your router out in the open in a central location in your home, ideally as high up as possible. A shelf works well, and many routers can also be wall mounted. If your router has external antennae that can be repositioned, try pointing them in directions that will give your entire house the best possible Wi-Fi coverage.

You may also want to consider buying a new router, especially if you rely on the free one that your ISP gave you. Modern routers come with a range of features that can boost your wireless network. If you do buy a new router, check that it supports the Wi-Fi ac standard (also known as 802.11ac). This ensures that your new router is capable of much faster Wi-Fi speeds, as Wi-Fi ac is substantially faster than the previous Wi-Fi n standard.

A modern router should also be dual band, meaning it can broadcast on both the 2.4GHz and 5GHz bands. Spreading your devices across two bands makes it less likely that your Wi-Fi network will get overloaded with traffic if you have a lot of Wi-Fi devices in your home, which could otherwise degrade your wireless connection.

Wi-Fi repeaters are another way of boosting your Wi-Fi over short distances in your home. These take your existing Wi-Fi signal, then repeat it to areas that your original network may struggle to reach. However, it’s important to note that Wi-Fi repeaters still struggle with thick walls and ceilings, or with larger homes that have multiple floors. If your Wi-Fi signal is already poor, a Wi-Fi repeater may not be adequate, as it will just repeat that already weak signal.

The best, and most robust, solution for boosting your home’s Wi-Fi is using Powerline technology, which is not affected by thick walls and multiple floors.

Strong and reliable Wi-Fi with Powerline

Powerline technology is a clever method of turning your home’s electrical circuit into a wired network. It’s completely safe and easy to set up, and means you don’t have to trail network cables throughout your home.

Because it uses your home’s electrical circuit, it also means that thick walls and floors are not a problem. From your home office to the basement – or even the garden – you can now create Wi-Fi hotspots and internet connections wherever there is a power socket in your home.

All you need to do is plug a Powerline adapter into a power socket near your router and connect the two with an Ethernet cable. Then plug a second Powerline adapter into a power socket in another room to create a new Wi-Fi hotspot for a faster, more reliable internet connection.

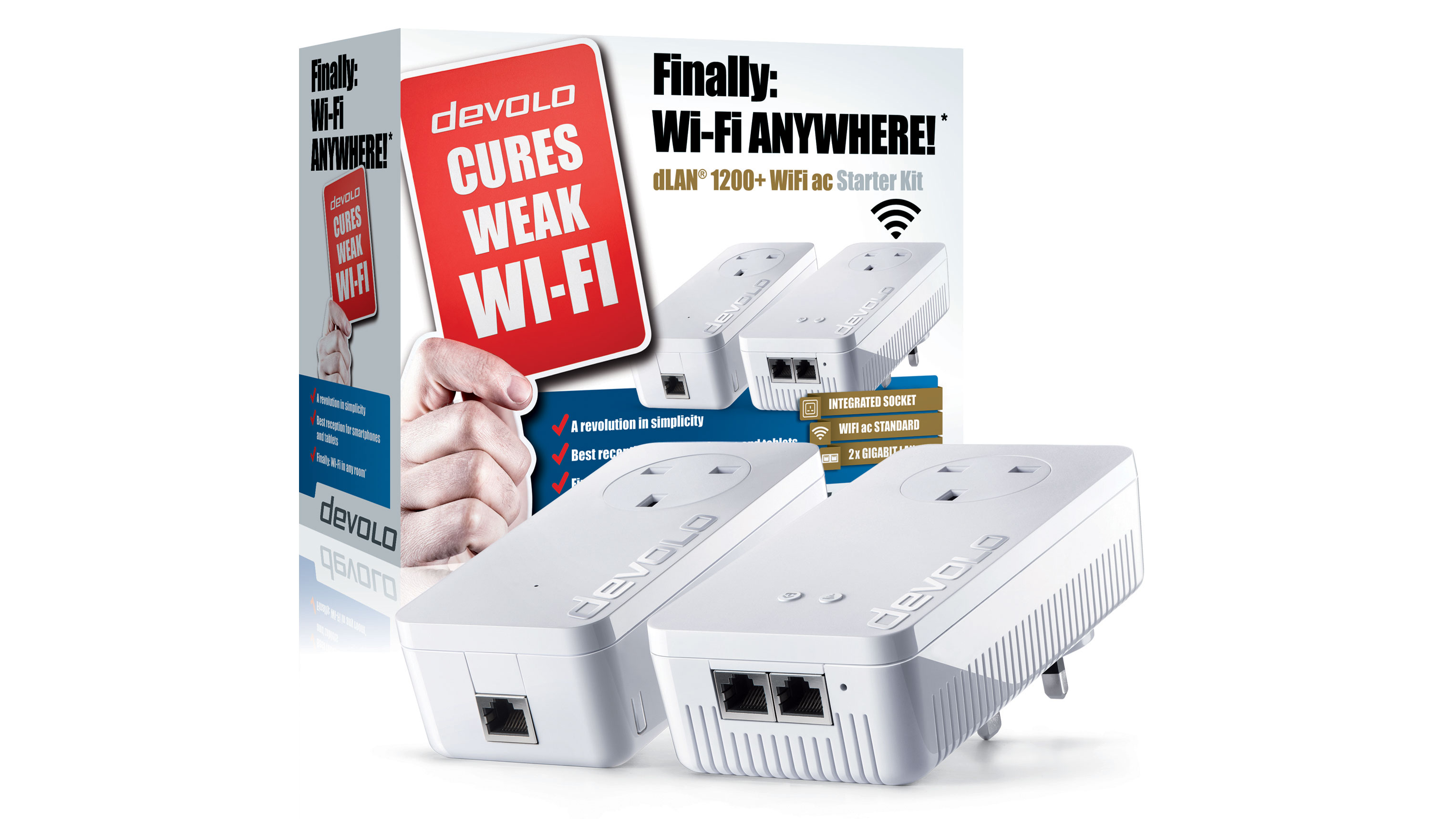

Introducing devolo’s strongest Powerline adapter: The dLAN 1200+ WiFi ac

For the ideal solution for boosting your Wi-Fi signal throughout the home, devolo’s dLAN 1200+ WiFi ac Starter Kit is the perfect choice. devolo specialises in Powerline technology, and this new kit allows you to transmit data through your home’s electrical circuit at incredibly fast speeds.

Two adapters come in the Starter Kit: one for connecting to your router and one for placing at the desired location in your home, where it can broadcast a powerful wireless ac network without struggling with obstacles. Your home will now have strong and reliable Wi-Fi in every room.

You can buy the dLAN 1200+ WiFi ac Powerline Starter Kit for £159.99. Additional adapters are available from £109.99. Find out more here.

devolo also produce GigaGate, a Wi-Fi bridge with 2 Gbps network speed designed to boost internet signal, as well as Home Control, a smart home system designed to improve comfort levels, energy savings and safety.


The TechRadar hive mind. The Megazord. The Voltron. When our powers combine, we become 'TECHRADAR STAFF'. You'll usually see this author name when the entire team has collaborated on a project or an article, whether that's a run-down ranking of our favorite Marvel films, or a round-up of all the coolest things we've collectively seen at annual tech shows like CES and MWC. We are one.
