Facebook's social graph: lock up your datas!

If you think Facebook is annoying and invasive now, wait till Graph Search kicks in

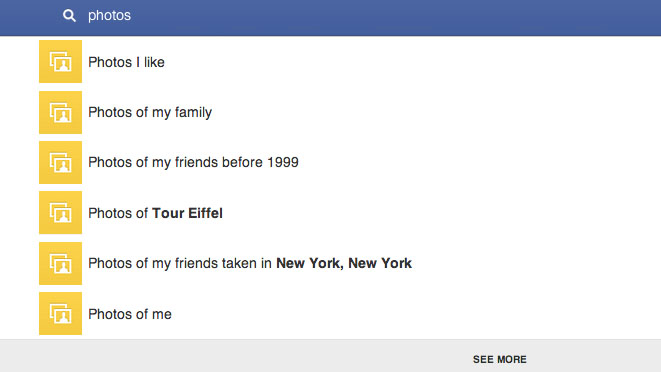

Facebook's big announcement turned out to be very big indeed: Social Graph Search, which will enable you to stalk exes and search for things such as "vulnerable women near me who've been dumped in the last few weeks" and "pictures of my friends' female friends in bikinis".

That's not what Facebook says, of course. According to it, we'll be able to get astonishingly useful search results: not just "find me a fusion restaurant in Manchester", but "Find me a fusion restaurant in Manchester that my Mancunian friends love and that everybody who goes there raves about."

All we need to do is let Facebook monitor us 24/7 and share everything we do with the entire planet.

Private lies

With a straight face, Facebook says that Social Graph Search has been "built with privacy in mind": nothing you don't want shared will be searchable.

There are only two problems with that. One, Facebook. And two, everyone on the internet.

Facebook first. Facebook says that Social Graph Search won't uncover things that aren't already public, and that's true. Unfortunately, though, Facebook is awfully good at the whole 'Oopsie! Did we accidentally bump into your privacy settings and make all your private stuff public again? Man, we're always doing that! We're such klutzes!' thing.

Without fail, whenever Facebook announces an improvement to its privacy tools, it makes stuff I don't want public, public.

For example, about two weeks ago Facebook announced new, simpler privacy tools. I checked my settings, and my friends-only posts were now public. I changed the setting back, and yesterday Facebook announced new, better privacy tools. You'll never guess what my posts' default setting was this morning.

This is not the kind of behaviour that makes you trust a company.

The other concern is that bits of data that don't amount to much can be a very big deal when they're combined: even really innocuous stuff can end up biting you in the backside. The Electronic Frontier Foundation gives the example of liking a Samsung Mobile page in college and then going on to work for Apple. Turning up in a search of "people who like Samsung Mobile and work for Apple" would be embarrassing.

The possibilities can be quite scary, given that Facebook enables and encourages people to post details of their relationship status, religious views, sexuality, political views and so on. Imagine the searches predators, bigots and other fun people might use the Social Graph for - especially if those searches are location-aware. And they will be: writing in Wired, Steven Levy says that "Before long, Zuckerberg says, searching capabilities will be added to Facebook's mobile apps too. Though he won't share the product specs, you can bet that Graph Search on phones will include location, adding a powerful new dimension."

The future might include notifications, too, so Facebook would notify you if someone meeting your particular criteria was nearby, and the indexing will ultimately include not just likes and information people provide in their profiles, but the actual content of their status updates and news feed conversations too.

What could possibly go wrong?

The Book of everything

I've been covering online privacy long enough to know things are rarely as dire as predicted - the future usually turns out to be annoying rather than sinister - but there is a worry here: to make this work, and Facebook really wants it to work, it needs to massively increase the amount of stuff everybody shares on the site and on sites where Facebook has buttons and comments. If it happens elsewhere, Social Graph can't index it.

That means Facebook doesn't just want to be Facebook. It wants to be Yelp, and LinkedIn, and GoodReads, and Spotify, and Flickr, and everything else you do on the internet. It wants to know what you buy, what you listen to, what you like and where you go, and it wants to share that information with everyone.

If you think Facebook is noisy, annoying and invasive now, just wait until Social Graph Search becomes its bread and butter.

Contributor

Writer, broadcaster, musician and kitchen gadget obsessive Carrie Marshall has been writing about tech since 1998, contributing sage advice and odd opinions to all kinds of magazines and websites as well as writing more than twenty books. Her latest, a love letter to music titled Small Town Joy, is on sale now. She is the singer in spectacularly obscure Glaswegian rock band Unquiet Mind.