TechRadar Verdict

An awesome step forward for Intel's latest line of processors. We can't wait for the desktop variants to trickle down to the masses.

Pros

- Awesome memory bandwidth
- QPI function
- Excellent scalability

Cons

- Very expensive

Around 2004, it became clear that Intel's quest for clockspeed had burned out. The final "Prescott" revision of the Pentium 4 processor architecture was struggling to meet ambitious targets for operating frequency. A new paradigm in CPU design was needed.

Since then, Intel has been working to re-architect its entire family of x86 processors. First came the Core Duo mobile processor. It revolutionised notebook performance and gave an early glimpse of the enormous performance-per-watt pay off possible with a relatively low-clocking multi-core processor. Next was the Core 2 Duo processor, which did much the same for desktop computing.

The final piece of the puzzle

This month, Intel is putting the final piece of its multi-core, high efficiency strategy into place. Its latest Nehalem processor architecture is now available in Xeon trim, enabling Intel to make a strong claim to best-in-class across all segments – mobile, desktop and enterprise.

Of course, the Nehalem architecture has already been available for several months in the shape of Intel's enormously powerful Core i7 desktop processor. However, the upgrading of the Xeon workstation and server family of processors to Nehalem specification is much more significant than the arrival of Core i7. Partly, that's because Intel already had a healthy performance advantage on the desktop. Core i7 merely served to cement what was already an extremely solid lead.

But it's also because, more than anything, Nehalem has been designed to be an enterprise-class chip with killer parallel processing performance. Central to that aim is the concept of scalability in multi-core configurations and multi-socket scenarios. Adding extra CPUs and cores to a given platform is all very well. But if those CPUs and cores are not fed with sufficient data, the consequence is idle processing cycles and compromised performance.

Until Nehalem, that's exactly the problem that Intel's multi-socket platforms have suffered from. Intel's multi-socket Xeons relied on an old-fashioned interface in which a front side bus connected each CPU to a secondary chip, which in turn housed the memory controller, system I/O and inter-socket communications. As a result, they were often starved of data. This shared bus was simply too slow and narrow to keep up with the demands of the CPUs, particularly as per-socket core counts increased.

Improved memory controller

But with Nehalem, Intel has moved both the memory controller and I/O onto the CPU itself. The result is a massive leap in available bandwidth. Take memory performance. Intel's outgoing dual-socket Xeon processor based on the Penryn architecture had to make do with at best 6GB/s of memory bandwidth for each CPU.

The new Xeon EPs based on Nehalem enjoy a staggering 16GB/s per socket. Of course, the boost in bandwidth is not only down to the controller's on-die location. Intel has also added an extra channel for a grand total of three and upped memory support to 1,333MHz DDR3. It's by far the most powerful memory controller of any x86 processor.
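As a rough sanity check on those figures, the theoretical peak of a memory controller can be estimated from channel count, transfer rate and bus width. The sketch below assumes standard 64-bit DDR3 channels and takes the 16GB/s and 6GB/s per-socket figures exactly as quoted above. The function and variable names are illustrative only. The review does not say whether its figures are measured or theoretical, so the comparison with the computed peak is indicative at best.

```python
def peak_bandwidth_gb_s(channels: int, mega_transfers_per_sec: float,
                        bus_width_bits: int = 64) -> float:
    """Theoretical peak memory bandwidth in GB/s (1 GB = 10**9 bytes).

    Assumes every channel transfers bus_width_bits per transfer,
    which is 64 bits for a standard DDR3 channel.
    """
    bytes_per_transfer = bus_width_bits / 8
    return channels * mega_transfers_per_sec * 1e6 * bytes_per_transfer / 1e9


# Three channels of DDR3 at 1,333 mega-transfers per second, as described above.
nehalem_peak = peak_bandwidth_gb_s(channels=3, mega_transfers_per_sec=1333)

# Per-socket figures as quoted in the review (not independently verified).
penryn_quoted_gb_s = 6.0
nehalem_quoted_gb_s = 16.0
uplift = nehalem_quoted_gb_s / penryn_quoted_gb_s

print(f"Theoretical three-channel peak: {nehalem_peak:.1f} GB/s")
print(f"Quoted per-socket uplift over Penryn: {uplift:.2f}x")
```

On the quoted numbers alone, each Nehalem socket has a little over two and a half times the memory bandwidth of its predecessor.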

Just as significant is the new QuickPath Interconnect (QPI). This provides a high speed interface both between the CPUs themselves and out to the rest of the system. Each QPI link delivers no less than 32GB/s and each CPU sports two links. For workloads where data must be shared between CPU sockets, it's an enormous boon.

Elsewhere, Nehalem EP benefits from the same detailed enhancements as the Core i7 processor. Thanks to Hyper-Threading, each of the processor's four cores is capable of processing two threads simultaneously, for no fewer than 16 threads overall in a dual-socket EP configuration. As for the overall impact of the effort Intel has put into Nehalem, well, it translates into probably the biggest ever step forward in performance for an enterprise-class x86 processor.

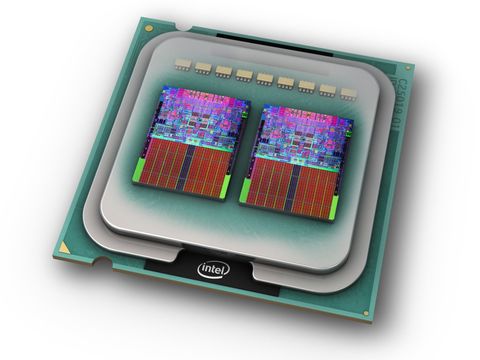

Familiar silicon

The silicon inside Intel's latest dual-socket Xeon processor is actually rather familiar. It's essentially the same CPU die found in the Core i7 desktop CPU and therefore a monolithic quad-core design manufactured using Intel's 45nm production technology. Likewise, each core has a dedicated L2 cache memory pool of 256KB, supplemented by 8MB of shared L3 cache.

Things get much more exciting, however, when you compare this new Nehalem-based Xeon with Intel's outgoing Xeons from the Penryn family, and indeed to AMD's competing Opteron CPU. Thanks to the combination of Nehalem's high bandwidth, low latency architecture and the raw power of its processor cores, it delivers truly monstrous parallel processing performance.

Nearest rival

In our benchmarks, a pair of X5570s running at 2.93GHz are anywhere from 50 to 100 per cent faster than two 2.7GHz "Shanghai" Opterons, the fastest multi-socket AMD processor we have tested. That's right, in some benchmarks, including our computational fluid dynamics test, Nehalem is literally twice as quick. Admittedly, AMD has recently released a slightly higher clocked 2.9GHz Opteron. But suffice it to say the extra 200MHz is unlikely to come close to closing the gap.

Of course, it's not just the Opteron that is left looking a little silly. Intel's previous Xeons also take a brutal beating. In a preliminary run of SPEC CPU2006, arguably the most widely respected CPU performance benchmark in the world, the X5570 is capable of an estimated floating point base score of over 150. A 3.2GHz Xeon based on the old architecture scores just 86. Enough said, really.
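To put those two SPEC scores in perspective, the short sketch below works out the raw speedup and a crude per-clock comparison. It uses only the numbers quoted above. Treating "over 150" as exactly 150 is an assumption that makes the result a lower bound, and dividing a score by clock speed is a rough normalisation rather than anything SPEC itself reports. The variable names are illustrative.

```python
# Estimated SPEC CPU2006 floating point base scores as quoted in the review.
x5570_score = 150.0      # "over 150", so treated here as a lower bound
old_xeon_score = 86.0

# Clock speeds in GHz as quoted in the review.
x5570_ghz = 2.93
old_xeon_ghz = 3.2

# Raw speedup: how many times higher the new score is.
speedup = x5570_score / old_xeon_score

# Crude per-clock comparison: score per GHz for each chip, then the ratio.
per_clock_ratio = (x5570_score / x5570_ghz) / (old_xeon_score / old_xeon_ghz)

print(f"Raw speedup: at least {speedup:.2f}x")
print(f"Score per GHz ratio: at least {per_clock_ratio:.2f}x")
```

Because the older chip runs at a higher clock, the per-clock gap works out wider than the raw scores suggest.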

Contributor

Technology and cars. Increasingly the twain shall meet. Which is handy, because Jeremy is addicted to both. Long-time tech journalist, former editor of iCar magazine and incumbent car guru for T3 magazine, Jeremy reckons in-car technology is about to go thermonuclear. No, not exploding cars. That would be silly. And dangerous. But rather an explosive period of unprecedented innovation. Enjoy the ride.