'Mass surveillance isn’t just viable, it already happens' — AI experts warn the threat is already here

AI use in the military is a big topic. Here’s what’s really happening

A few weeks ago, the Pentagon asked Anthropic, the company behind AI assistant Claude, to modify an existing $200 million contract and remove two key guardrails. These were prohibitions on using its technology for domestic mass surveillance and fully autonomous weapons. Anthropic refused and the contract went to OpenAI instead.

This dispute has thrust into the spotlight a question most of us probably hadn't thought much about: can AI actually do these things? And if so, how worried should we be?

The short answer, according to the experts I spoke to, is that this isn't science fiction. It's already here. But the picture is more complicated, and in some ways more troubling, than the killer robots we are used to seeing on the big screen.

Mass surveillance is already happening

"Mass surveillance isn’t just viable, it already happens," James Wilson, a Global AI Ethicist and author of Artificial Negligence, tells me. "Technologies such as Palantir and CCTV have been making this possible for years. It’s just down to the individual states as to whether they choose to do it."

The US government's PRISM program — exposed by Edward Snowden over a decade ago — was an early example of surveillance at a massive scale.

"The advances in AI have simply made it easier to do this at scale," Wilson says, "and our increasingly connected existence means there are so many more data sources they can access, with or without people's permission."

The recent controversy around Ring doorbell cameras and Flock licence plate readers being used by police after the Super Bowl is just the latest example.


This matters for ordinary people, not just political dissidents. Jeff Watkins, an AI advisor specializing in governance and security, tells me this sort of surveillance points to a pattern already visible in the UK.

"We've seen multiple recent news articles around people being misidentified by supermarket facial recognition systems, with the longstanding concern that these misidentifications can disproportionately affect women and ethnic minorities," Watkins tells me.

The cumulative effect is a shift in how society works. "Being subject to the algorithmic use of surveillance technologies moves the dial towards a 'suspicion by default' society, where innocent parties, going about their everyday lives, could have their rights trampled by AI classification," Watkins says.

Autonomous weapons are already here

The same is true of lethal autonomous weapons. "The first recorded use was by Turkey against a Libyan target using a Kargu drone in 2021," says Wilson. Since then, the technology has moved fast. "The advances in AI have meant that this is now possible at a much larger swarm scale, as well as being incredibly cheap."

But the core problem here is accuracy — and what inaccuracy means when the stakes are life and death. "Computer vision to facially recognize people is only 90% accurate at the best of times, and if the system uses generative AI, it will hallucinate, because it is a feature not a bug in the technology," Wilson says.

The Israel Defense Forces' AI targeting program, Lavender, which was used to identify suspected Hamas members, has since been acknowledged to have been wrong 10% of the time. Even the best large language models still hallucinate at a rate of 5-10%, according to Vectara’s hallucination leaderboard, hosted on Hugging Face. That 10% may still sound small. But at the scale these systems operate, it really isn't.
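To see why a 10% error rate stops sounding small at scale, here is a rough back-of-the-envelope sketch. The decision volumes are hypothetical, picked only to show how the number of mistakes grows; they are not figures from Lavender or any real system, and `expected_errors` is an illustrative helper rather than anything the experts quoted here use.

```python
def expected_errors(decisions: int, error_rate: float) -> int:
    """Expected number of wrong outputs for a given number of decisions."""
    return round(decisions * error_rate)


# Hypothetical volumes, chosen only to illustrate scale (not real data).
for decisions in (1_000, 100_000, 10_000_000):
    errors = expected_errors(decisions, 0.10)
    print(f"{decisions:>10,} decisions at 10% error: about {errors:,} mistakes")
```

The error rate stays the same in every row. What changes is how many people end up on the wrong side of it.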

You may think that the answer is more human oversight. But that's exactly what some military applications are designed to reduce. "Removing human-in-the-loop determination of the target is therefore an ethical minefield," Wilson says. "At a more basic level, removing human determination from the kill chain is removing any form of human dignity."

It also removes responsibility, Watkins says. "If nobody is there to press the 'fire' button, who can be held accountable in the case of a loss of life, justified or otherwise? AI is not a legal person and cannot be held accountable itself."

Should we be worried about Terminators?

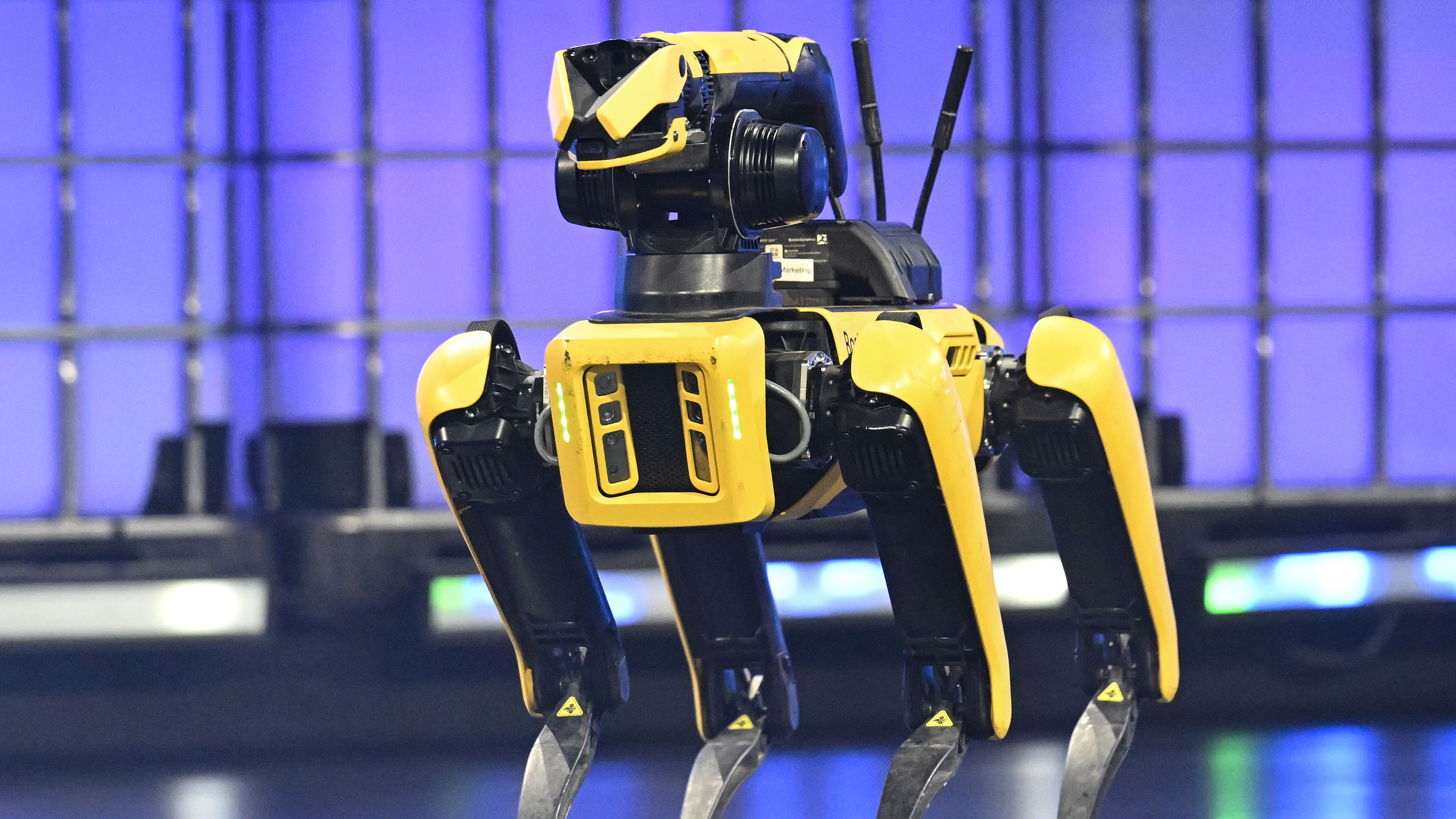

For anyone who grew up watching the Terminator films, recent robot videos from Boston Dynamics and Chinese tech firms like Xpeng probably feel uncomfortably familiar.

But Wilson, who has spent time with similar models, urges perspective. "Despite all of the fancy, and very choreographed, robot videos that come out of China and the US — they are not quite there. They still need a lot of work to get them to a stage where they could fully autonomously interact with our world."

The more pressing concern, he says, isn't humanoid robots. "I am more worried about swarms of drone autonomous weapons. This technology is already there, and it is cheap enough that it can be built today en masse, by literally anyone."

But the broader warning comes from Watkins, and it extends well beyond the military context. "When organizations and governments hand off too much decision-making to flawed and immature systems that are not fully understood or explainable, without robust auditing, it can erode human rights and muddy the waters of accountability."

The Anthropic standoff was less about one company's contract and more about a question we're all going to have to answer across the board: who decides how much we trust these systems — and who's accountable when they're wrong?

Becca is a contributor to TechRadar, a freelance journalist and author. She’s been writing about consumer tech and popular science for more than ten years, covering all kinds of topics, including why robots have eyes and whether we’ll experience the overview effect one day. She’s particularly interested in VR/AR, wearables, digital health, space tech and chatting to experts and academics about the future. She’s contributed to TechRadar, T3, Wired, New Scientist, The Guardian, Inverse and many more. Her first book, Screen Time, came out in January 2021 with Bonnier Books. She loves science-fiction, brutalist architecture, and spending too much time floating through space in virtual reality.
