Why Siri is just the start for natural input

Showing off to non-iPhone-owning friends has never been easier.

Pick up your phone in the pub, confidently say 'Siri, what's the circumference of the Earth divided by the radius of the Moon?' and barely seconds later, you're the only one there who knows the answer is 23.065.

It's a magical experience, and a great toy.

Compared to what we'll have in a couple of generations of phones, though, it's a Speak & Spell. Best of all, voice is just the start of the natural input revolution.

Imagine a world with no keyboards, no tiny buttons, no tutorials and no manuals. You'll just do what comes naturally, and your phone will adapt, using artificial intelligence (AI) to deduce that you're dictating, or that when you say 'Order take-out', you're going to want Thai that day. Or a million other seamless interactions, combining your camera, location, search, databases, music and more, drawing on massive stores of information and probabilities and tuned to your personal tastes and past history. It's going to be glorious.

It's also just on the edge of being science fiction at the moment. But how is this kind of natural input unlocking our world in the here-and-now? That's one question Siri can't answer. Fortunately, we can.

Beep. Request. Respond

VOICE CONTROL: If developers can perfect voice control then it will really open up the power of natural input

Like most magic, Siri works by taking an incredibly complex series of actions and hiding them behind a simple flourish.

At its most basic level, pressing Siri's microphone button records a short audio clip of your instruction, which your phone passes to Apple's online servers as a highly compressed audio file. There, your speech is converted into text and fired back, as a piece of dictation or an instruction for your iPhone.

There is, of course, more to it than this – as part of the conversion process, for instance, the server doesn't just send back what it thinks you said, but how confident it is about every word. Artificial intelligence is also required to keep track of the conversation and to maintain context by understanding what you mean by tricky words like 'it' and 'that', or if you were more likely to have said 'we went to see' or 'we went to sea'.
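
To make that concrete, here is a minimal Python sketch of the two ideas in that paragraph: a result that carries a confidence score for every word, and a crude way of choosing between sound-alikes such as 'see' and 'sea' from the words around them. Everything in it is an assumption for illustration only. The `WordGuess` structure, the 0-to-1 confidence scale, the hint table and both function names are hypothetical, and none of it reflects how Apple's servers actually work.

```python
from dataclasses import dataclass


@dataclass
class WordGuess:
    """One recognised word plus the (hypothetical) server's confidence in it."""
    text: str
    confidence: float  # assumed scale: 0.0 (pure guess) to 1.0 (certain)


# Hypothetical hint table: nearby words that make each sound-alike more likely.
CONTEXT_HINTS = {
    "see": {"film", "movie", "show", "friends"},
    "sea": {"boat", "sailing", "ship", "fishing"},
}


def low_confidence_words(words, threshold=0.6):
    """Return the words the recogniser was unsure about."""
    return [w.text for w in words if w.confidence < threshold]


def pick_homophone(candidates, context):
    """Choose the candidate whose hint words overlap most with the context.

    Ties go to the first candidate listed.
    """
    context_set = {word.lower() for word in context}

    def score(candidate):
        return len(CONTEXT_HINTS.get(candidate, set()) & context_set)

    return max(candidates, key=score)


heard = [
    WordGuess("we", 0.97),
    WordGuess("went", 0.93),
    WordGuess("to", 0.90),
    WordGuess("sea", 0.42),
]
print(low_confidence_words(heard))  # ['sea']
print(pick_homophone(["see", "sea"], ["we", "went", "to", "in", "a", "boat"]))  # sea
```

A real system would weigh far richer evidence than a handful of hint words, but the shape is the same: keep every plausible reading, score each one, and let context break the tie.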

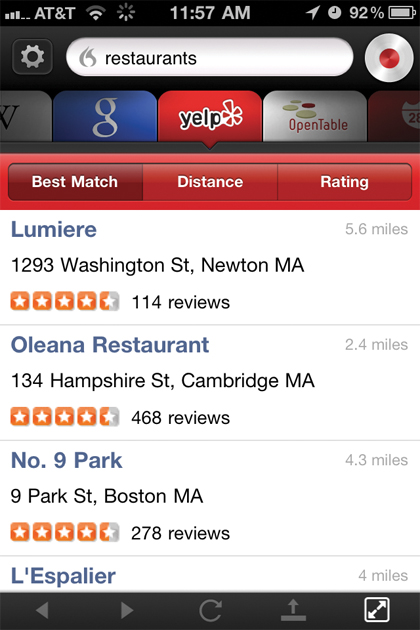

That's the gist, though, and iPhone 4S owners will tell you it often works damn well. At least, it does in the US. One of the few major problems with Siri is that much of the best stuff, like finding a restaurant, has yet to arrive internationally, leaving the rest of the world with the gimmickier features.

WOLFRAM ALPHA: Siri gets a lot of its data from Wolfram Alpha

For now, then, the rest of us will have to just imagine asking it to find lunch, having the map plotted directly, and in some cases, even booking a restaurant with nothing more than the word 'Yes'. But give it time; these things will come.

Siri isn't the only tool capable of this, though, and while it is currently the most efficient, the competition works in the same way. Two examples are Nuance's Dragon Go! and the Android-only Iris from Indian startup Dexetra. With Apple's legendary secrecy in full effect, it's often by looking at these rivals that we can see what's going on under the surface, and where Siri is likely to go in future.

An assistant in the cloud

DRAGON GO!: Dragon Go! pre-dates Siri, but does a similar job – with a wider range of search destinations

Knowing how it works, two questions will probably spring to mind: if all the heavy lifting is happening elsewhere, in the cloud, why do you need an iPhone 4S to use Siri? And why can't it all just work right on the phone?

In truth, the likely answer to the first one is simply 'because Apple wanted a cool selling point for the iPhone 4S'. The original version of Siri was a standalone app that ran on a regular iPhone 4, and on the face of it the latest incarnation isn't doing anything that really requires the more powerful A5 processor. There are future-gazing reasons why Apple might want to restrict it, but precious few non-marketing-related ones as it stands now.

What everyone does agree on is the importance of sparing your phone the technical heavy lifting, for two reasons: efficiency and updating.

"The original Iris 1.0 did not use a server, everything was being processed from the phone," explains Narayan Babu, CEO of Dexetra. "Even on powerful phones with dual-core processors, this was inefficient. Natural language processing (NLP) and voice-to-text require real horsepower. When we tried doing serious NLP on Android phones, it almost always crashed. It is also easy to add features seamlessly when processing happens in the cloud, without having to update the actual app."

Those features aren't simply a question of plugging in more information sources for searches, either. The more people who use a tool like Siri, the more powerful it's capable of becoming.

Vlad Sejnoha, chief technology officer of Dragon Go! creator Nuance, one of the most highly regarded companies in the field, told us: "10 years ago, speech recognition systems were trained on a few thousand hours of user speech; today we train on hundreds of thousands. Our systems are [also] adaptive in that they learn about each individual user and get better over time."

To put this into context, speech-to-text tools have been available for many years, but traditionally had to be trained to your voice by having you painstakingly read out long stretches of prose. Modern equivalents still struggle with strong accents, but now failure isn't forever. Over time, their understanding of, for example, a Geordie 'reet' versus a careful Received Pronunciation 'right' can only improve.
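
As a toy illustration of 'failure isn't forever', the Python sketch below remembers what one user corrected a misheard word to, and starts applying that fix once it has seen it often enough. It is a deliberately simplified stand-in: real recognisers adapt their underlying speech models rather than keeping a lookup table, and the `UserAdaptation` class, its method names and the `min_count` threshold are all hypothetical, not anything Nuance or Apple has described.

```python
from collections import Counter, defaultdict


class UserAdaptation:
    """Hypothetical per-user memory of corrections to misheard words."""

    def __init__(self):
        # Maps a heard word to a tally of what the user actually meant.
        self._corrections = defaultdict(Counter)

    def record_correction(self, heard, meant):
        """Note that this user fixed `heard` to `meant`."""
        self._corrections[heard][meant] += 1

    def best_guess(self, heard, min_count=2):
        """Return the learned replacement once it has been seen often enough."""
        options = self._corrections.get(heard)
        if not options:
            return heard
        meant, count = options.most_common(1)[0]
        return meant if count >= min_count else heard


geordie = UserAdaptation()
geordie.record_correction("reet", "right")
print(geordie.best_guess("reet"))  # reet (one correction is not enough yet)
geordie.record_correction("reet", "right")
print(geordie.best_guess("reet"))  # right
```

The threshold is there so that a single slip of the tongue doesn't rewrite everything the user says afterwards; only a repeated pattern earns a permanent fix.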