Latest about Internet

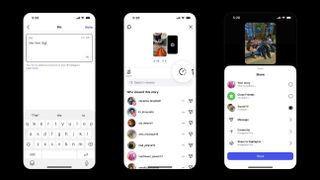

Instagram Plus launches globally with new subscription features

By James Rogerson published

Instagram Plus bulks up the app with numerous new and improved features for those prepared to pay, and early reactions haven't been positive.

Past Wordle answers — every solution so far, alphabetical and by date

By Marc McLaren last updated

Knowing past Wordle answers can help with today's game. Here's the full list so far.

NYT Wordle today — answer and my hints for game #1812, Friday, June 5

By Marc McLaren last updated

Looking for Wordle hints? I can help. Plus get the answers to Wordle today and yesterday.

Quordle hints and answers for Friday, June 5 (game #1593)

By Johnny Dee published

Looking for Quordle clues? We can help. Plus get the answers to Quordle today and past solutions.

NYT Strands hints and answers for Friday, June 5 (game #824)

By Johnny Dee published

Looking for NYT Strands answers and hints? Here's all you need to know to solve today's game, including the spangram.

The best Wi-Fi extenders in 2026: top devices for boosting your Wi-Fi network

By Matt Hanson last updated

Buying Guide: The best Wi-Fi extenders will make sure you can get online anywhere in your house.

NYT Strands hints and answers for Thursday, June 4 (game #823)

By Johnny Dee published

Looking for NYT Strands answers and hints? Here's all you need to know to solve today's game, including the spangram.

Quordle hints and answers for Thursday, June 4 (game #1592)

By Johnny Dee published

Looking for Quordle clues? We can help. Plus get the answers to Quordle today and past solutions.
