Computer scientists call for internet expansion to be limited

To control future energy consumption

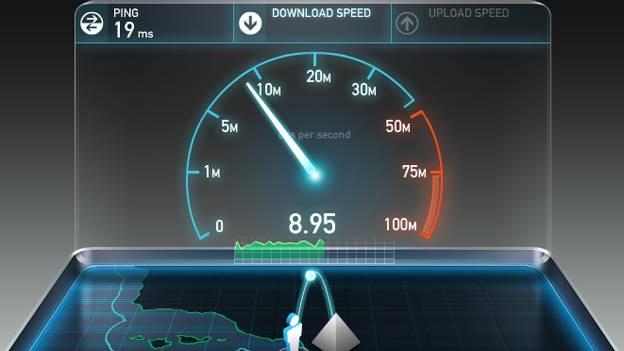

Between 2011 and 2015, the average amount of data used per month in a British home rocketed from 17GB to 82GB. Mobile data usage shows a similar pattern. More of us are using more data than ever before.

That fact is troubling computer scientists at Lancaster University, who've written a research paper titled "Are there limits to growth in data traffic?" It argues that the increase in data use has accompanied a huge increase in energy use, despite improvements in efficiency. This energy use comes with a massive carbon footprint.

Right now, the internet accounts for about five percent of global electricity use - but it's growing at about seven percent per year. Some predictions, the researchers say, find that IT could account for as much as 20 percent of total energy use by 2030.

Natural Limits

There are some natural limits to how much data humans can use. There are a finite number of people on the planet, and they need to sleep sometimes. But a significant chunk of the growth in data usage doesn't come from humans - it comes from machines.

From smart thermostats and other household gadgets, to connected cars and industrial equipment, more and more technology has an internet connection. These objects don't sleep and are proliferating very rapidly. There are currently 6.4 billion connected Internet of Things devices and it is estimated this could reach 21 billion by 2020.

"The nature of internet use is changing and forms of growth, such as the Internet of Things, are more disconnected from human activity and time-use," explains Mike Hazas, who co-authored the paper. "Communication with these devices occurs without observation, interaction and potentially without limit."

Volume Quotas

The researchers conclude that the world should examine whether limits should be applied to data growth, ideally before that growth occurs. Ways this could be accomplished include volume quotas and different pricing for different internet services - though differential pricing would fall foul of network neutrality laws.

"The Internet of Things is still in the making and it is important to consider existing ideas for a 'speed limit' to the system, especially in comparison to having to retrospectively reduce internet traffic in the future," said Hazas.

- Duncan Geere is TechRadar's science writer. Every day he finds the most interesting science news and explains why you should care. You can read more of his stories here, and you can find him on Twitter under the handle @duncangeere.
