Scientists make cameras work more like human eyes and this could be good news for future smartphones

Always on the move

A little-known quirk of the human eye might pave the way for better self-driving car cameras and even more effective smartphone photography.

As you read this, your eyes are most likely scanning slowly from left to right. But even when you're not reading, or when you're looking at a fixed object, your eyes are constantly on the move. This, it turns out, is key to the quality of human vision, and to how robots, self-driving cars, and maybe even smartphones could see more clearly.

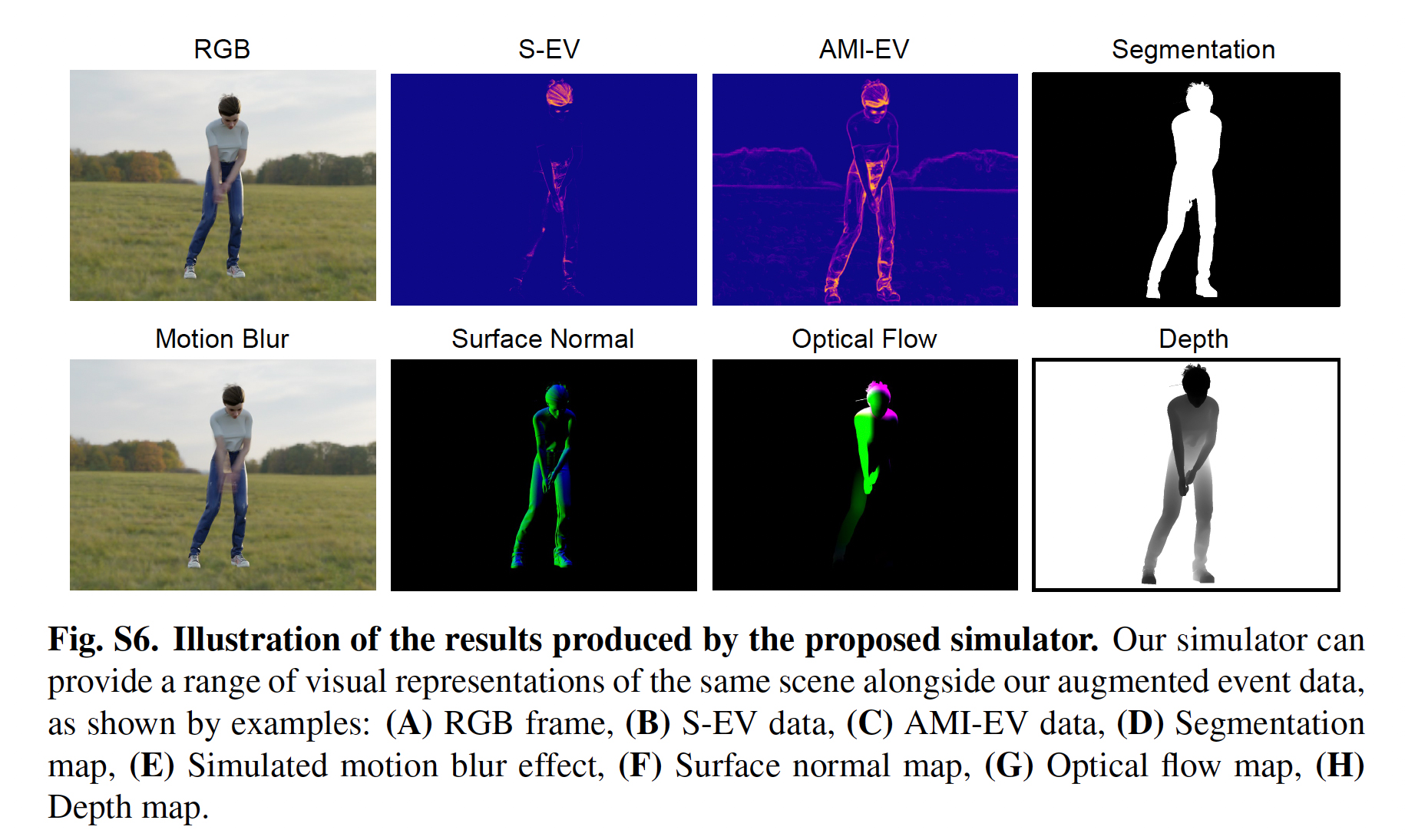

A team of University of Maryland researchers created a camera that mimics human eye movements. Called the Artificial Microsaccade-Enhanced Event Camera (AMI-EV), it uses a round wedge prism (round, but with one face sharply angled) rotating in front of an event camera, in this case an Intel RealSense D435, to move the images around.

Even though the movements are small, they're meant to mimic the saccades of the human eye. The eye makes three kinds of these small movements: rapid, small tremors; slower drift; and microsaccades, which happen multiple times per second and are too small for us to notice.

This last movement may help us see more clearly, especially when objects are moving: the eye shifts to put the image against the best part of the retina, replacing blur with shape and color.

With the understanding of how these micro-movements help human perception, the team equipped its camera with a rotating prism.

According to the paper's abstract, "Inspired by microsaccades, we designed an event-based perception system capable of simultaneously maintaining low reaction time and stable texture. In this design, a rotating wedge prism was mounted in front of the aperture of an event camera to redirect light and trigger events."

Researchers paired the hardware solution with software that could compensate for the movement and combine captured images for a stable and clear image.
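To make the idea of compensating for the movement concrete, here is a minimal Python sketch. It is not the researchers' software. It assumes, purely for illustration, that the spinning prism shifts the image along a circle of known radius at a known rotation rate, so the shift at any moment can be computed and subtracted. The names `Event`, `prism_offset`, `compensate`, `radius_px`, and `rev_per_sec` are all hypothetical.

```python
import math
from typing import NamedTuple


class Event(NamedTuple):
    """One hypothetical event-camera reading."""
    x: float  # pixel column where the event fired
    y: float  # pixel row where the event fired
    t: float  # timestamp in seconds


def prism_offset(t, radius_px, rev_per_sec, phase=0.0):
    """Image shift (dx, dy) at time t, assuming the prism moves the image in a circle."""
    angle = 2.0 * math.pi * rev_per_sec * t + phase
    return radius_px * math.cos(angle), radius_px * math.sin(angle)


def compensate(events, radius_px, rev_per_sec, phase=0.0):
    """Subtract the assumed prism-induced shift from each event's coordinates."""
    stabilized = []
    for e in events:
        dx, dy = prism_offset(e.t, radius_px, rev_per_sec, phase)
        stabilized.append(Event(e.x - dx, e.y - dy, e.t))
    return stabilized
```

Under these assumptions, a motionless point that the prism smears into a circle on the sensor maps back to a single location once the shift is subtracted, which is the sense in which software can turn deliberately shaken input into a stable image.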

According to a report in Science Daily, the experiments were so successful that AMI-EV-equipped cameras detected everything from quickly moving objects to the human pulse. That's some precise vision.

Making robotic eyes see more like humans offers the potential of not only robots that can share our vision skills but also, for instance, self-driving cars that could finally distinguish between people and other objects. There is already evidence that self-driving cars struggle to identify some humans. A self-driving Tesla equipped with an AMI-EV camera might be able to tell the difference between a bag blowing by and a child running into the street.

Equipped with AMI-EV cameras, mixed-reality headsets, which use cameras to combine real and virtual worlds, might do a better job of blending them for a more realistic experience.

"...it has many applications that much of the general public already interacts with, like autonomous driving systems or even smartphone cameras. We believe that our novel camera system is paving the way for more advanced and capable systems to come," Yiannis Aloimonos, a professor of computer science at UMD and the study's co-author, told Science Daily.

These are early days, and the hardware looks more like something you'd put in an engine than the ultra-tiny and thin camera you might need for the best smartphone.

Still, it's a significant step. The realization that something we can't see happening is responsible for what we can see, and that this small but critical capability can be replicated in robotic cameras, moves us closer to a future where robots match human visual perception.

A 38-year industry veteran and award-winning journalist, Lance has covered technology since PCs were the size of suitcases and “on line” meant “waiting.” He’s a former Lifewire Editor-in-Chief, Mashable Editor-in-Chief, and, before that, Editor in Chief of PCMag.com and Senior Vice President of Content for Ziff Davis, Inc. He also wrote a popular, weekly tech column for Medium called The Upgrade.

Lance Ulanoff makes frequent appearances on national, international, and local news programs including Live with Kelly and Mark, the Today Show, Good Morning America, CNBC, CNN, and the BBC.
