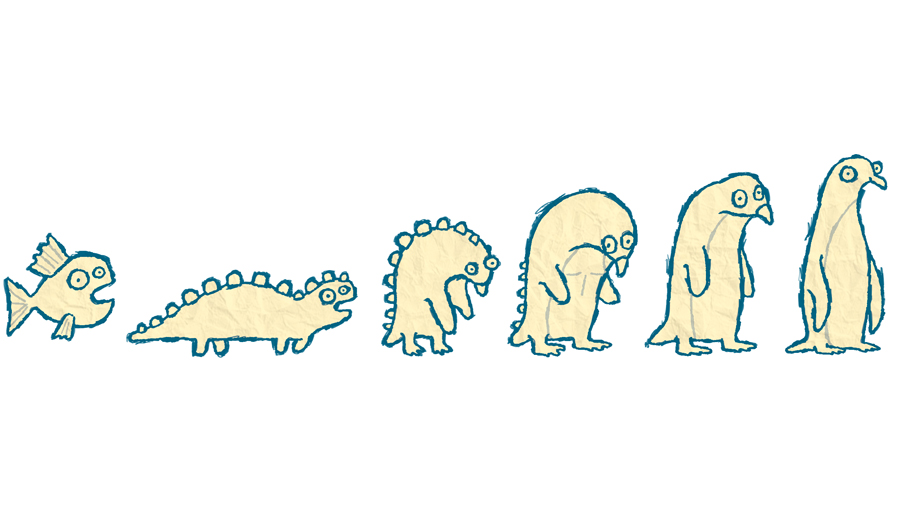

When you teach robots to be 'ethical', they turn into penguins

They huddle together for warmth


A Welsh roboticist who programmed a group of robots to act 'ethically' has found that the resulting behaviour bears a striking resemblance to groups of animals.

Chris Headleand from Bangor University built a simulation using digital 'agents' with extremely simple programming. He set it up so that the robots had to reach a power source before they ran out of energy and 'died', and sure enough, the automatons immediately clustered around the energy source like penguins huddling together for warmth.
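The article doesn't publish Headleand's code, but the basic rule it describes (head for the power source, spend energy every tick, recharge when close enough, 'die' at zero) can be sketched as a toy Python model. Everything in it, including `SOURCE`, `RECHARGE_RADIUS`, `STEP`, `DRAIN`, `RECHARGE` and their values, is a hypothetical assumption for illustration, not taken from the Bangor simulation.

```python
import math
from dataclasses import dataclass

# Hypothetical parameters, NOT taken from Headleand's simulation.
SOURCE = (0.0, 0.0)      # location of the power source
RECHARGE_RADIUS = 1.0    # agents this close to the source regain energy
STEP = 0.5               # maximum distance an agent moves per tick
DRAIN = 1.0              # energy spent per tick
RECHARGE = 3.0           # energy regained per tick while in range
MAX_ENERGY = 10.0        # energy cap


@dataclass
class Agent:
    x: float
    y: float
    energy: float
    alive: bool = True


def tick(agents):
    """Advance every living agent by one time step."""
    for a in agents:
        if not a.alive:
            continue
        dx, dy = SOURCE[0] - a.x, SOURCE[1] - a.y
        dist = math.hypot(dx, dy)
        if dist > RECHARGE_RADIUS:
            # Move straight towards the source, at most STEP per tick.
            move = min(STEP, dist)
            a.x += dx / dist * move
            a.y += dy / dist * move
        a.energy -= DRAIN
        # Recharge (up to the cap) if the agent is now close enough.
        if math.hypot(SOURCE[0] - a.x, SOURCE[1] - a.y) <= RECHARGE_RADIUS:
            a.energy = min(MAX_ENERGY, a.energy + RECHARGE)
        if a.energy <= 0:
            a.alive = False  # the agent 'dies'


def run(agents, ticks):
    """Run the toy simulation for a fixed number of ticks."""
    for _ in range(ticks):
        tick(agents)
    return agents


agents = run([Agent(2.0, 0.0, 5.0), Agent(10.0, 0.0, 3.0)], 10)
print([a.alive for a in agents])
```

Run for ten ticks, the agent that starts close to the source reaches it and survives, while the distant one runs out of energy on the way. This sketch only models the reach-the-source-or-die rule; it has no crowding or competition for space, so it would not reproduce the calm inner ring and panicked outer ring described below.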

But he also saw emergent behaviour, including a split of the group into different social classes. "Agents who were closest to the resources were really calm," he explained in an interview with the BBC, "But what was interesting, [those on] the outer circle, on the outer edges of the resources, were panicked and swerving around and constantly trying to dive in."

Altruism?

Most interestingly, some were even exhibiting what appeared to be altruistic behaviour. "We saw some agents that were sacrificing themselves to save others," said Headleand. "If you start trying to describe this using language from psychology rather than engineering, that's the point where it becomes quite interesting."

The eventual goal is to build ethical behaviour into robots that work with humans at the lowest possible level, but that is still a long way off.

"We are not saying these robots are ethical but in some situations they can behave in a way which appears to an observer as ethical," explained Headleand. "If it looks like a duck, and quacks like a duck, for the purposes of a simulation, I'm willing to accept it's a duck."
