19 graphics cards that shaped the future of gaming

Nvidia, ATi and AMD classics

Introduction

The graphics card is the most important part of any gaming PC. It's also one of the most expensive, which is why gamers today often remain loyal to one side - Nvidia or AMD.

The two companies have been battling it out to win hearts (and wallets) for the past 15 years. From Crossfire to CUDA, the ever-changing nature of the GPU has made for an epic (and often closely fought) battle.

Nvidia and AMD haven't always been the industry's frontrunners - 3dfx, Matrox, Imagination, Intel and even lesser-known players (such as S3) played a large part in advancing GPU tech - but that's a story for another time.

With Nvidia's GeForce GTX 980 Ti and AMD's Fury X having entered the arena to do battle at the enthusiast end of the market, we're focusing on the graphics cards that helped the top two powerhouses get to where they are today. How many have you owned?

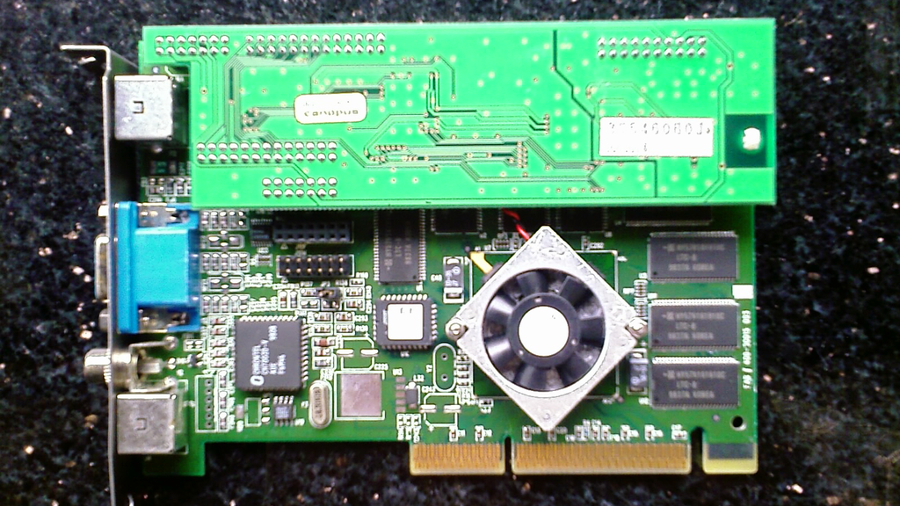

1. Nvidia Riva TNT (1998)

Codenamed NV4, the TNT came with the first branded driver (called "The Detonator"). Riva is an acronym for Real-Time Interactive Video and Animation accelerator. The "TNT" (TwiN Texel) suffix refers to the chip's ability to work on two texels at once. The Riva TNT was one of the first cards with 32-bit colour support and positioned Nvidia as a serious competitor to the top dog of the time, 3dfx, and its popular line of Voodoo graphics cards.

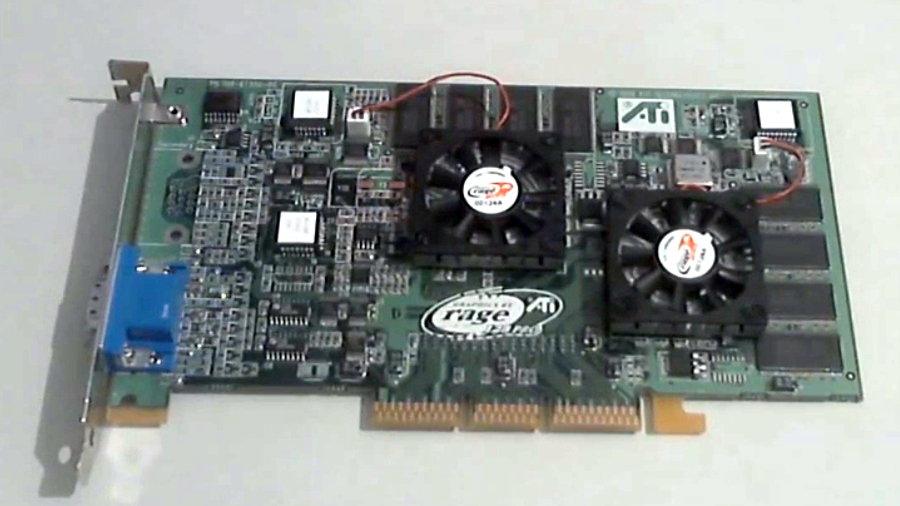

2. ATi Rage Fury Maxx (1999)

The Rage Fury Maxx emerged as a rival to Nvidia's Riva TNT and 3dfx's Voodoo 5. It arrived with two processing engines and used alternate frame rendering (AFR), in which the two graphics processors take turns rendering successive frames, to almost double performance. The Fury Maxx supported OpenGL and Direct3D and came with a (for the time) massive 64MB of onboard memory, but was held back by a lack of hardware-based transform and lighting, which featured in Nvidia's GeForce 256.
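For the curious, the idea behind alternate frame rendering can be sketched in a few lines of Python. This is only an illustration of the round-robin principle, not ATi's actual driver logic: the function names and the 20ms frame time are made up for the example, and it assumes every frame takes the same time to render with no synchronisation overhead.

```python
def assign_frames_afr(num_frames, num_gpus=2):
    """Round-robin: frame 0 goes to GPU 0, frame 1 to GPU 1, frame 2 to GPU 0..."""
    return [frame % num_gpus for frame in range(num_frames)]


def afr_total_time_ms(num_frames, frame_time_ms, num_gpus=2):
    """Idealised time to render all frames when the GPUs work in parallel.

    Assumes a constant per-frame cost and zero overhead (hypothetical best case).
    """
    assignment = assign_frames_afr(num_frames, num_gpus)
    # Each GPU works through its own frames one after another;
    # the busiest GPU determines when the whole batch is finished.
    frames_per_gpu = [assignment.count(gpu) for gpu in range(num_gpus)]
    return max(frames_per_gpu) * frame_time_ms


if __name__ == "__main__":
    print(assign_frames_afr(6))                     # which GPU renders each frame
    print(afr_total_time_ms(6, 20.0, num_gpus=1))   # single GPU
    print(afr_total_time_ms(6, 20.0, num_gpus=2))   # two GPUs taking turns
```

In this idealised model two processors halve the total render time; real-world overheads are why the gain is "almost" double rather than exactly double.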

3. Nvidia GeForce 256 (1999)

With the GeForce 256, Nvidia beat its rivals to integrating transform, lighting, setup and rendering on a single GPU. The company called it "the world's first GPU (Graphics Processing Unit)", defined as "a single-chip processor with integrated lighting/triangle setup/clipping, and rendering engines that is capable of processing a minimum of 10 million polygons per second." Cube Environment Mapping, a technology that enables developers to create real-time reflections and lighting effects, was another key feature.

4. ATi Radeon 9700 Pro (2002)

The Radeon 9700 Pro was the fastest card in town upon arrival by some stretch, and the first to come with a full DirectX 9 feature set. Adept at FSAA and anisotropic filtering, the 9700 Pro supported Pixel Shader 2.0 and had eight pixel pipelines capable of laying down additional textures through individual rendering pipes. It launched clocked at 325MHz and was one of the largest and most complex GPUs of the time thanks to its 110 million transistors.

5. Nvidia GeForce 6800 Ultra (2004)

The GeForce 6800 supported Shader Model 3 at a time when its rivals were stuck on Shader Model 2. It was also the first card to support Nvidia's Scalable Link Interface (SLI) technology, allowing two compatible cards to be used in tandem to deliver increased graphical performance. With up to 512MB of VRAM, a superscalar 16-pipe GPU architecture and 64-bit texture filtering and blending, the 6800 was the card to beat.

6. ATi Radeon R520 (2005)

Codenamed Fudo, the ATi Radeon R520 laid claim to a number of firsts for the company. It was ATi's first GPU produced using a 90nm fabrication process and introduced a redesigned memory bus architecture (with a new cache design) that helped ATi increase memory clock speeds while saving processing power. The benefit? Improved performance when running games at resolutions above 1,600 x 1,200 with the details dialled up. Highly optimised for Shader Model 3.0, the R520 took the fight to Nvidia's GeForce 7 series and continued to keep its rival on its toes.

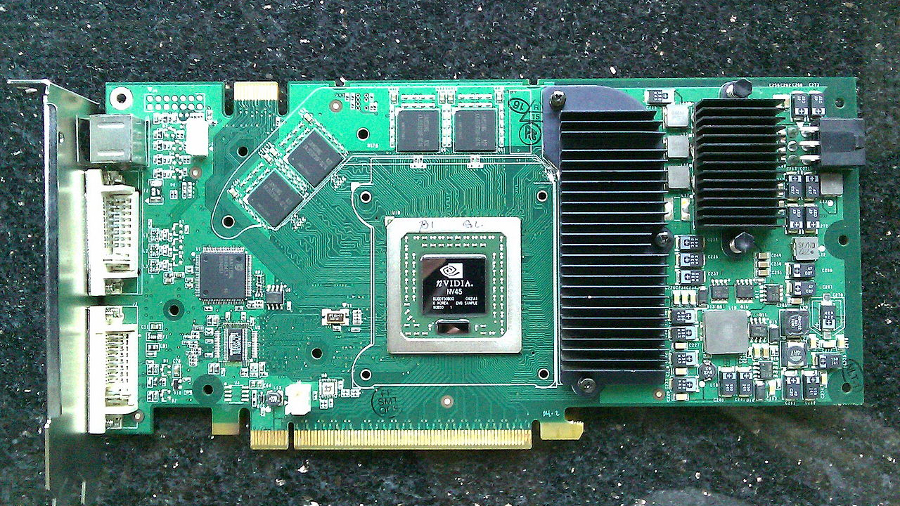

7. Nvidia GeForce 8800 GTX (2006)

Nvidia's 8800 GTX has an enduring legacy and enjoyed staying power like no other card on our list. One of the first GPUs to support CUDA (Compute Unified Device Architecture), it offered DirectX 10 compatibility out of the box and brought a huge leap in performance (double in some cases) over the DirectX 9 cards of the time. Although expensive at £475 (around US$741, or AU$1,004), it offered 128 CUDA cores, 768MB of GDDR3 memory and a 384-bit bus. Crysis and Battlefield 3 were often used to push the 8800 GTX to the limit.

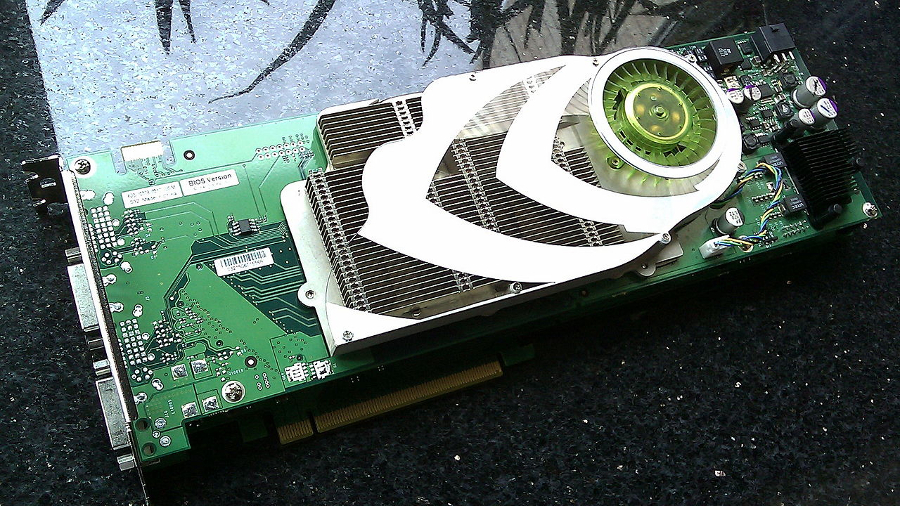

8. Nvidia GeForce 7900 GX2 (2006)

Also known as the 7900 GTX Duo, the 7900 GX2 was hugely ambitious (and huge in size). It was primed for multi-monitor experiences and (for the time) big resolutions - up to 2,560 x 1,600. It was the first card to feature two video cards stacked to fit as a dual-slot solution, enabling quad-SLI on two PCI Express x16 slots. However, placing two GPUs on a single video card would ultimately go on to be the preferred way of doing things. With the 7900 GX2 requiring a monster 1,000-watt PSU and extremely well-designed airflow to function, it's not hard to see why.

9. ATi Radeon HD 4870 X2 (2008)

Hot on the heels of Nvidia's 9800 GX2, which also launched in 2008, AMD's 4870 X2 was a powerhouse, instantly becoming the single fastest card on the market. Like the HD 3870 X2 before it, the 4870 X2 placed two GPUs on the same card and used Crossfire to make them work together. It had a 750MHz core and 2 x 1GB of 900MHz RAM. Hot, expensive and curiously heavy, the 4870 X2's drawbacks were soon forgotten once you saw what it could do.

10. Asus EAH 3850x3 "Trinity" (2008)

Two GPUs on a card can lead to big performance gains, but what happens when you have three? Asus set out to answer the question with the EAH 3850x3 Trinity, which was launched as a technical proof of concept rather than something you could buy - only 10 made it into production. The card consisted of three Radeon 3850 notebook GPUs soldered to a PCB, and Asus claimed a 139% performance improvement over single-GPU solutions. Those lucky enough to get their hands on a Trinity said that it was massive, ran very hot and was held back in performance by CrossFire's scaling limitations. Nobody has followed suit with a triple-GPU card since, so we can probably thank Asus for that.

11. ATi Radeon 5970 (2009)

Unveiled as the world's fastest graphics card in 2009, the ATi Radeon 5970 turned the tide in ATi's favour. Targeting multi-monitor and high-resolution setups, it packed 4640 GFLOPS of raw computing power. In addition to supporting CrossFireX, it was the first card to support AMD's Eyefinity setup, which uses three independent display controllers to drive three displays simultaneously with independent resolutions, refresh rates, colour controls and video overlays.

12. AMD Radeon 7970 (2011)

AMD's 7970 was powered by a Tahiti XT GPU manufactured using TSMC's 28nm fabrication process. Pitted against Nvidia's GTX 680 in TechRadar's own benchmarks, the 7970 seriously outpaced that card in games that use compute-focused anti-aliasing, such as Sniper Elite V2. The 7970 was the first PCI-E 3.0 card, and came with 3,072MB of GDDR5 memory on a 384-bit memory interface.

13. Nvidia GTX 680 (2012)

Nvidia didn't have an answer to AMD's 7970 for three months, so when it finally launched the GTX 680, there was every chance that it could've fallen behind. But, as TechRadar's Dave James noted in his review, Nvidia was merely waiting for the best time to strike. The new flagship card, which landed in March 2012 as the fastest GPU in the world, marked a more-than-successful debut of Nvidia's brand-new Kepler architecture. Its numerous features included Nvidia GPU boost, adaptive vertical sync, Nvidia Surround (support for up to four monitors) and the debut of two new anti-aliasing modes: FXAA and TXAA.

14. Nvidia GTX Titan (2013)

Graphics cards tend to have odd, nondescript names, something that Nvidia's Titan can't be accused of. Simply put, it made every other GPU at the time look like small fry. If there was a feeling that Nvidia's 680 didn't show off what the Kepler architecture could really do, few doubts remained after the Titan. Stomping into view at $1,000 (around £800 in the UK), it was easily the fastest single-GPU card on the planet, boasting 2688 CUDA cores and 6GB of GDDR5 RAM. An aspirational product for anyone with lots of money, it provided the powerful performance of dual-GPU gaming with none of its associated vagaries.

15. Nvidia GTX 980 (2014)

The GTX 980 wasn't the company's first card to use Nvidia's Maxwell architecture (unusually, Maxwell debuted in the low-end GTX 750 and GTX 750 Ti), but it was the first real head-turner to feature it. Cranking out top-notch performance while carrying a price tag that positioned it just under top-end Kepler cards, it came with 2048 CUDA cores and 4GB of 7.0Gbps GDDR5 memory while remaining on the 28nm manufacturing process. VR-ready and capable of playing the latest titles in 4K, it also featured a new Nvidia technology called DSR that increased the graphical fidelity of games running on 1080p monitors.

16. AMD R9-295x2 (2014)

Developed as Project Hydra, the AMD R9-295x2 was (and still is) completely nuts. Coming in a silver carry case protected by a combination code lock, it's best described as a card for the gamer who has everything. Insanely powerful with a heavyweight price tag to match, the R9-295x2 totes a 500W TDP and built-in liquid cooling loop. Powered by not one, but two Hawaii XT graphics processors, each paired with 4GB of GDDR5 graphics memory, it can crank out a fearsome 11.5 TFLOPS of compute performance. AMD's multi-headed monster ushered in the Ultra HD era in style.

17. Nvidia GTX Titan X (2015)

The Titan X maxed out Nvidia's Maxwell architecture and possessed some mighty fine overclocking chops. Something of a headline-grabber, it arrived primed for 4K gaming and, like the Titan before it, comfortably took the crown of the world's fastest single-GPU graphics card. That GPU is the GM200, which packed in 50% more processing tech than the GM204 silicon in the GTX 980 launched the previous year. Featuring a whopping 3,072 CUDA cores, 192 texture units and a transistor count of 8 billion, the GTX Titan X also toted an enormous 12GB GDDR5 frame buffer (versus the Titan's 6GB). Once again launching at around $1,050 (around £850), it cost more than some pre-built mid-range gaming PCs. RIP wallets everywhere.

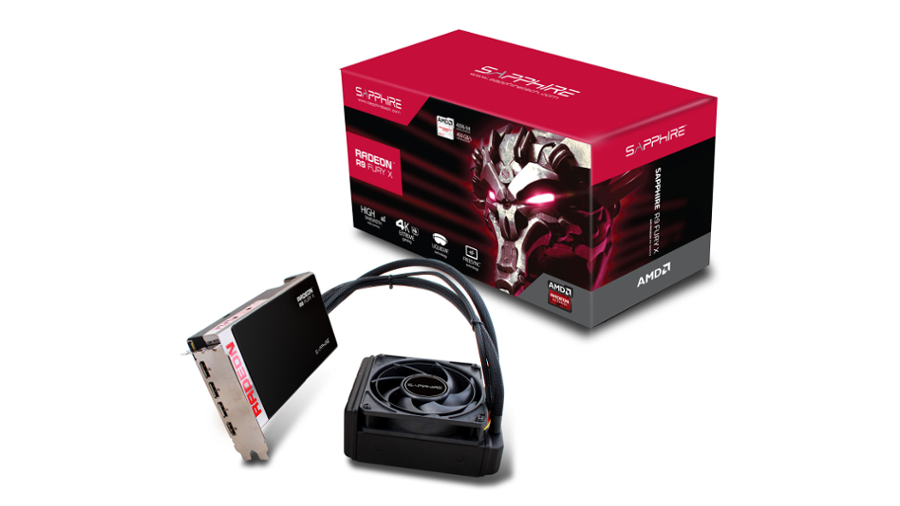

18. AMD Radeon R9 Fury X (2015)

The AMD Radeon R9 Fury X is the world's first GPU with AMD-pioneered High-Bandwidth Memory (HBM) on-chip, which focuses on driving performance in two areas of gaming: 4K and VR. If the latter is yet to arrive, the former definitely has, and it's an area where the R9 Fury X is looking to knock Nvidia's similarly priced 980 Ti out of the park. The new HBM replaces the more common GDDR5 variant from the past few years and can deliver a theoretical throughput of 512GB/s, versus the 336GB/s offered by Nvidia's card. The R9 Fury X also has almost one billion more transistors than the 980 Ti. But forget stats for a second and look at the bigger picture: the R9 Fury X shows that AMD still has what it takes to compete with Nvidia at the high end after a few wobbly years, and that is excellent news for gamers and the industry.
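Those theoretical throughput figures come from simple arithmetic: bus width multiplied by the per-pin data rate, divided by eight to convert bits to bytes. The rough Python sketch below reproduces both numbers. Note that the bus widths (4,096-bit for the Fury X's HBM, 384-bit for the 980 Ti) and the 1 Gbps per-pin HBM rate are commonly quoted specs rather than figures from this article, so treat them as assumptions; the function name is our own.

```python
def peak_bandwidth_gb_s(bus_width_bits, data_rate_gbps_per_pin):
    """Theoretical peak memory bandwidth in GB/s.

    bus_width_bits: total width of the memory interface in bits.
    data_rate_gbps_per_pin: effective data rate of each pin in Gbps.
    """
    # bits per second across the whole bus, divided by 8 to get bytes
    return bus_width_bits * data_rate_gbps_per_pin / 8


if __name__ == "__main__":
    # Assumed specs: very wide but slow HBM vs narrow but fast GDDR5.
    fury_x = peak_bandwidth_gb_s(4096, 1.0)   # HBM
    gtx_980_ti = peak_bandwidth_gb_s(384, 7.0)  # GDDR5 at 7 Gbps
    print(f"R9 Fury X:  {fury_x:.0f} GB/s")
    print(f"GTX 980 Ti: {gtx_980_ti:.0f} GB/s")
```

The takeaway is that HBM gets its advantage from an extremely wide interface rather than from high clock speeds.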

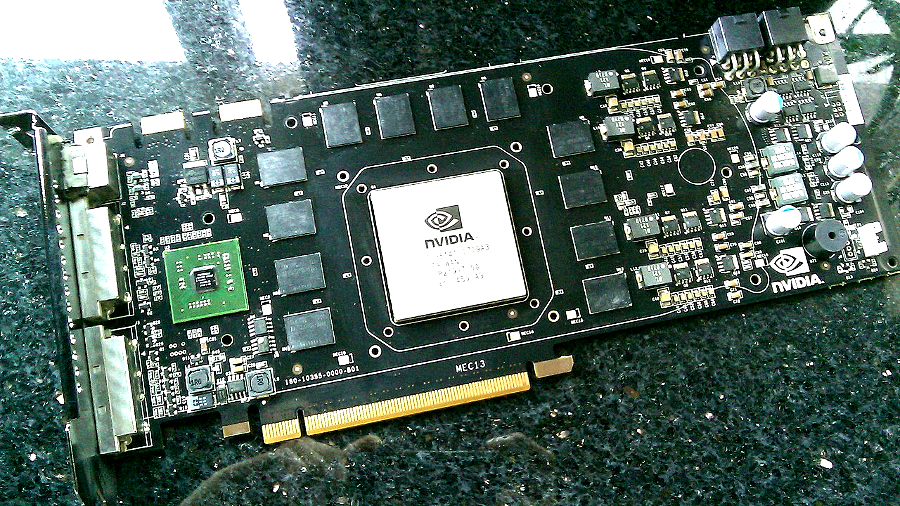

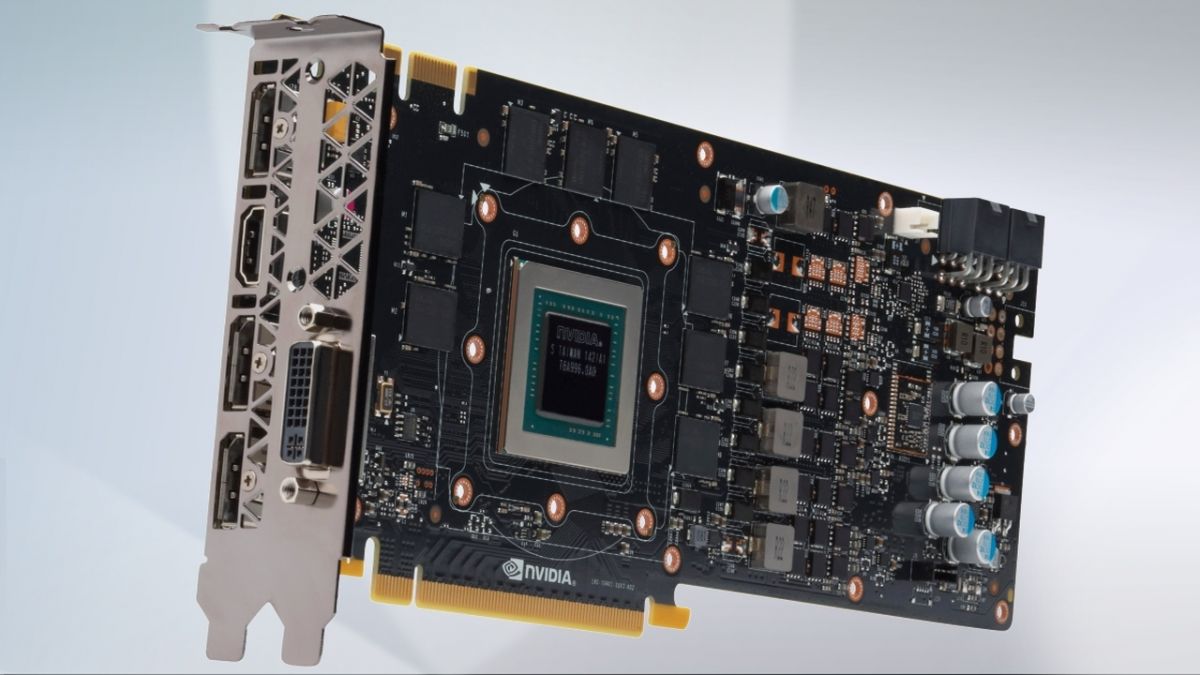

19. Nvidia GTX 980 Ti (2015)

Realising that not everybody can afford a Titan X, Nvidia launched the GTX 980 Ti for $173 (£100) less. That may not represent a huge saving over the Titan X, but its lower price places it in the realms of affordability at the top end of world-beating cards. More importantly, it packs almost as much of a punch as Nvidia's leading Maxwell card. The 980 Ti features 2,816 CUDA cores (versus the Titan X's 3,072), 176 texture units (versus the Titan X's 192) and the same number of transistors - all 8 billion of them. While it has half the Titan X's memory, the 980 Ti's 6GB, which is clocked at 7 Gbps, is the fastest GDDR5 memory around.