Nvidia drivers: how to update and install the latest Nvidia graphics drivers

Performance optimizations? That’s free real estate

Having the latest Nvidia drivers installed on your PC will ensure that you’re making the most of your Nvidia graphics card (or Nvidia-powered gaming laptop) and that your GPU is running as intended. These drivers offer optimizations and features for both the graphics cards and the most popular games.

The Nvidia drivers will not only fix current issues but also optimize your GPU’s performance with new games so they’ll run smoother at launch. In fact, new drivers may occasionally be required to access specific graphics features in games, like Nvidia’s RTX ray tracing or Deep Learning Super Sampling (DLSS). They’ll also offer support for features like screen recording, Ansel, and Freestyle on compatible cards with Nvidia’s GeForce Experience installed.

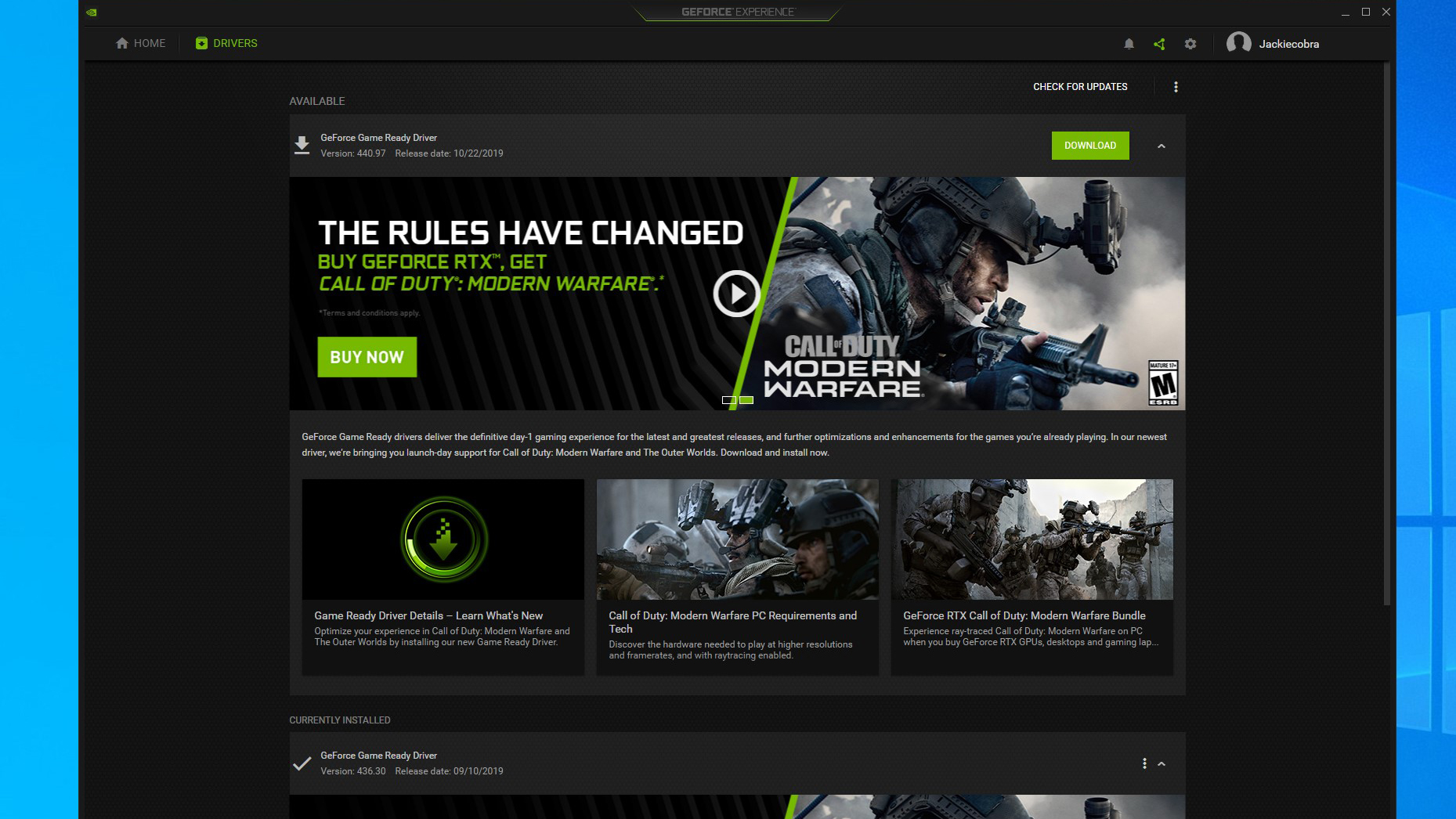

So, while you may be able to use that GPU straight out of the box, you could be missing out on the best experience without the latest Nvidia drivers. If you haven’t done so already, download them from Nvidia directly, which doesn’t require any sign-in or account, or check for updates through Nvidia GeForce Experience, which is available from Nvidia’s website.

GeForce Experience is the companion application to your GeForce GTX GPU, and it helps ensure you download the appropriate Nvidia drivers. It also comes with extra features and can help you optimize your games’ settings for the best performance or quality on your machine. Just bear in mind that it will require you to have an account to obtain drivers.
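If you're not sure which driver version is already installed, a short script can check before you download anything. The following is a minimal Python sketch, assuming the `nvidia-smi` command-line tool that ships with the Nvidia driver is on your PATH. The helper names are illustrative rather than part of any Nvidia tooling, and the target version is just an example number taken from this article.

```python
import shutil
import subprocess


def parse_version(text):
    """Turn a driver version string such as '456.55' into a tuple of ints."""
    return tuple(int(part) for part in text.strip().split("."))


def is_older(installed, target):
    """Return True if the installed driver version is older than the target."""
    return parse_version(installed) < parse_version(target)


def installed_driver_version():
    """Ask nvidia-smi for the driver version; return None if it is unavailable."""
    if shutil.which("nvidia-smi") is None:
        return None
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=driver_version", "--format=csv,noheader"],
        capture_output=True,
        text=True,
        check=False,
    )
    lines = result.stdout.strip().splitlines()
    if result.returncode != 0 or not lines:
        return None
    return lines[0].strip()


if __name__ == "__main__":
    current = installed_driver_version()
    target = "456.55"  # example version number, not necessarily the newest
    if current is None:
        print("Could not detect an Nvidia driver via nvidia-smi.")
    elif is_older(current, target):
        print(f"Driver {current} is older than {target}; consider updating.")
    else:
        print(f"Driver {current} is at least {target}.")
```

Comparing the versions as tuples of integers rather than as plain strings keeps the check correct even when the two numbers have a different count of digits.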

How often do Nvidia drivers come out?

New Nvidia GeForce Game Ready Drivers usually come out about once a month, though they can occasionally come out even more frequently than that. New drivers often coincide with the launch of popular new games, as the drivers can offer specific optimizations for performance and features in those games.

New Nvidia Studio Driver releases are somewhat less frequent, and don’t seem to follow a regular schedule. But, over the past year, they have generally come out within two months of the preceding driver.

There's also a whole host of other Nvidia drivers available for its GPUs outside of the GeForce branding, such as its enthusiast Titan, pro-grade Quadro and data-center Tesla graphics processors.

What’s in the latest Nvidia drivers?

The latest version of the Nvidia Game Ready Driver, released on March 30, 2021, adds Nvidia Reflex support for Rainbow Six: Siege, lowering system latency by up to 30%. It also brings performance optimizations and Nvidia DLSS support for Outriders at its launch on April 1.

An earlier GeForce graphics driver, 456.55, enables support for Nvidia Reflex in Call of Duty: Modern Warfare and Call of Duty: Warzone, and offers the best experience in Star Wars: Squadrons. It also improves stability in certain titles when gaming with RTX 30 Series GPUs.

Prior to that, 456.38 rolled out on September 17, just in time to support major new releases like the Nvidia GeForce RTX 3080 and RTX 3090. This driver also provides support for Fortnite’s recent update, which brings in new ray-traced effects, a custom RTX map and Nvidia Reflex, as well as optimal day-one support for Halo 3: ODST and Mafia: The Definitive Edition.

Then there was 452.06, which delivers support for ray tracing to World of Warcraft: Shadowlands, jazzing up its visuals. It also brings in optimizations for Microsoft Flight Simulator and Total War Saga: Troy, as well as support for eight new G-Sync compatible monitors, mainly models from Acer, but also Asus and Lenovo.

Then there’s the Game Ready driver (451.67 WHQL) from Nvidia, specifically optimized for Death Stranding, Horizon Zero Dawn, and F1 2020. So, whether you’re exploring the wastelands as Aloy, speeding around tight turns in F1, or trying to reconnect the world as Sam, you’ll enjoy improved performance and reliability with these latest Nvidia drivers.

For Death Stranding, this driver also includes support for Nvidia’s DLSS 2.0 technology on compatible graphics cards. DLSS 2.0 can help your system achieve higher frame rates at increased resolutions, as it effectively runs the game at a lower resolution and then uses AI-powered upscaling to deliver a clear image that’s nearly indistinguishable from the native resolution.
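As a rough illustration of why rendering at a lower resolution frees up performance, the Python sketch below counts how many pixels the GPU has to render per frame at an internal resolution compared with the output resolution. The 2560x1440 internal resolution for a 3840x2160 output is an assumed example chosen for the arithmetic, not a figure from Nvidia or from this article, and the function names are hypothetical.

```python
def pixel_count(width, height):
    """Number of pixels in a single frame at the given resolution."""
    return width * height


def shaded_fraction(internal, output):
    """Fraction of the output's pixels that are rendered before upscaling."""
    return pixel_count(*internal) / pixel_count(*output)


output_res = (3840, 2160)    # 4K display resolution
internal_res = (2560, 1440)  # assumed internal render resolution

fraction = shaded_fraction(internal_res, output_res)
print(f"Pixels rendered per frame: {pixel_count(*internal_res):,} "
      f"of {pixel_count(*output_res):,} ({fraction:.0%})")
```

In this assumed case the GPU renders fewer than half the pixels of a native 4K frame, and the upscaling step fills in the rest. Actual frame-rate gains depend on the game and the card.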

Nvidia is also supporting one-click optimization through GeForce Experience for these three titles, making it easier to dial in in-game settings for performance or visual quality. This driver also adds support for one-click optimization for the following titles: Darksburg, Disintegration, Fishing Planet, House Flipper, Justice RTX, Outer Wilds, Persona 4 Golden, Pistol Whip, SpongeBob SquarePants: Battle for Bikini Bottom – Rehydrated, and Trackmania.

And, finally, it enables G-Sync support for the Dell S2721HGF, Dell S2721DGF, and Lenovo G25-10 gaming monitors.

What's in the latest Nvidia GeForce driver?

The latest Nvidia Game Ready Driver, released on March 30, 2021, adds a patch for Tom Clancy’s Rainbow Six: Siege, giving it Nvidia Reflex support that is designed to reduce system latency by up to 30%. As a result, shots fire faster and it’s easier to target enemies.

In addition, this latest Nvidia driver will also have performance optimization and Nvidia DLSS support for Outriders when it launches on April 1, delivering performance headroom to maximize image quality settings.

Finally, it comes with optimizations and enhancements for Dirt 5’s new ray tracing update, Evil Genius 2: World Domination and the Kingdom Hearts series, as well as Resizable BAR support on all RTX 30 Series GPUs and beta support for virtualization on GeForce GPUs.

Over the last several years, Mark has been tasked as a writer, an editor, and a manager, interacting with published content from all angles. He is intimately familiar with the editorial process from the inception of an article idea, through the iterative process, past publishing, and down the road into performance analysis.
