Kubernetes - taming the cloud

Tiger Computing’s Keith Edmunds reveals how Kubernetes can be used to build a secure, resilient and scalable Linux infrastructure

When you want to use Linux to provide services to a business, those services will need to be secure, resilient and scalable. Nice words, but what do we mean by them?

‘Secure’ means that users can access the data they require, be that read-only access or write access. At the same time, no data is exposed to any party that’s not authorised to see it. Security is deceptive: you can think you have everything protected only to find out later that there are holes. Designing in security from the start of a project is far easier than trying to retrofit it later.

‘Resilient’ means your services tolerate failures within the infrastructure. A failure might be a server disk controller that can no longer access any disks, rendering the data unreachable. Or the failure might be a network switch that no longer enables two or more systems to communicate. In this context, a “single point of failure” or SPOF is any single component whose failure adversely affects service availability. A resilient infrastructure is one with no SPOFs.

‘Scalable’ describes the ability of systems to handle spikes of demand gracefully. It also dictates how easily changes may be made to systems: for example, adding a new user, increasing the storage capacity or moving an infrastructure from Amazon Web Services to Google Cloud – or even moving it in-house.

As soon as your infrastructure expands beyond one server, there are lots of options for increasing the security, resilience and scalability. We’ll look at how these problems have been solved traditionally, and what new technology is available that changes the face of big application computing.

Enjoying what you're reading? Want more Linux and open source? We can deliver, literally! Subscribe to Linux Format today at a bargain price. You can get print issues, digital editions or why not both? We deliver to your door worldwide for a simple yearly fee. So make your life better and easier, subscribe now!

To understand what’s possible today, it’s helpful to look at how technology projects have been traditionally implemented. Back in the olden days – that is, more than 10 years ago – businesses would buy or lease hardware to run all the components of their applications. Even relatively simple applications, such as a WordPress website, have multiple components. In the case of WordPress, a MySQL database is needed along with a web server, such as Apache, and a way of handling PHP code. So, they’d build a server, set up Apache, PHP and MySQL, install WordPress and off they’d go.

By and large, that worked. It worked well enough that there are still a huge number of servers configured in exactly that way today. But it wasn’t perfect, and two of the bigger problems were resilience and scalability.

Lack of resilience meant that any significant issue on the server would result in a loss of service. Clearly a catastrophic failure would mean no website, but there was also no room to carry out scheduled maintenance without impacting the website. Even installing and activating a routine security update for Apache would necessitate a few seconds’ outage for the website.

The resilience problem was largely solved by building ‘high availability clusters’. The principle was to have two servers running the website, configured such that the failure of either one didn’t result in the website being down. The service being provided was resilient even if the individual servers were not.

Part of the power of Kubernetes is the abstraction it offers. From a developer’s perspective, they develop the application to run in a Docker container. Docker doesn’t care whether it’s running on Windows, Linux or some other operating system. That same Docker container can be taken from the developer’s MacBook and run under Kubernetes without any modification.

The Kubernetes installation itself can be a single machine. Of course, a lot of the benefits of Kubernetes won’t be available: there will be no auto-scaling, there’s an obvious single point of failure, and so on. As a proof of concept in a test environment, though, it works.

Once you’re ready for production, you can run in-house or on a Cloud provider such as AWS or Google Cloud. The Cloud providers have some built-in services that assist in running Kubernetes, but none of them are hard requirements. If you want to move between Google, Amazon and your own infrastructure, you set up Kubernetes and move across. None of your applications have to change in any way.

And where is Linux? Kubernetes runs on Linux, but the operating system is invisible to the applications. This is a significant step in the maturity and usability of IT infrastructures.

The Slashdot effect

The scalability problem is a bit trickier. Let’s say your WordPress site gets 1,000 visitors a month. One day, your business is mentioned on Radio 4 or breakfast TV. Suddenly, you get more than a month’s worth of visitors in 20 minutes. We’ve all heard stories of websites ‘crashing’, and that’s typically why: a lack of scalability.

The two servers that helped with resilience could manage a higher workload than one server alone could, but that’s still limited. You’d be paying for two servers 100 per cent of the time, yet most of the time both would be working perfectly and one alone could probably run your site. Then John Humphrys mentions your business on Today and you’d need 10 servers to handle the load – but only for a few hours.

The better solution to both the resilience and scalability problem was cloud computing. Set up a server instance or two – the little servers that run your applications – on Amazon Web Services (AWS) or Google Cloud, and if one of the instances failed for some reason, it would automatically be restarted. Set up auto-scaling correctly and when Mr Humphrys causes the workload on your web server instances to rapidly rise, additional server instances are automatically started to share the workload. Later, as interest dies down, those additional instances are stopped, and you only pay for what you use. Perfect… or is it?

Whilst the cloud solution is much more flexible than the traditional standalone server, there are still issues. Updating all the running cloud instances isn’t straightforward. Developing for the cloud has challenges too: the laptop your developers are using may be similar to the cloud instance, but it’s not the same. If you commit to AWS, migrating to Google Cloud is a complex undertaking. And suppose, for whatever reason, you simply don’t want to hand over your computing to Amazon, Google or Microsoft?

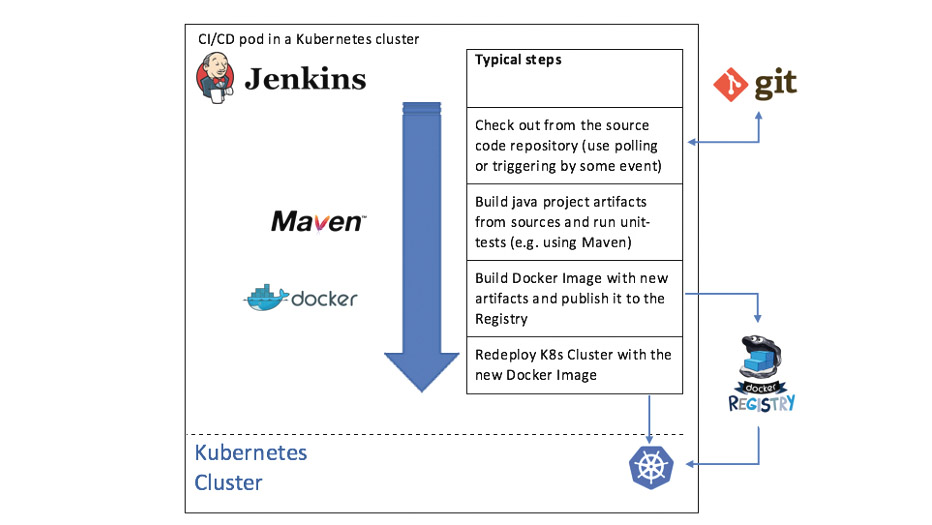

Containers have emerged as a means to wrap applications with all of their dependencies up into a single package that can be run anywhere. Containers, such as Docker, can run on your developers’ laptops in the same way as they run on your cloud instances, but managing a fleet of containers becomes increasingly challenging as the number of containers grows.

The answer is container orchestration. This is a significant shift in focus. Before, we made sure we had enough servers, be they physical or virtual, to ensure we could service the workload. Using the cloud providers’ autoscaling helped, but we were still dealing with instances. We had to configure load balancers, firewalls, data storage and more manually. With container orchestration, all of that (and much more) is taken care of. We specify the results we require and our container orchestration tools fulfil our requirements. We specify what we want done, rather than how we want it done.

Become a Kubernete

Kubernetes (ku-ber-net-eez) is the leading container orchestration tool today, and it came from Google. If anyone knows how to run huge-scale IT infrastructures, Google does. The origin of Kubernetes is Borg, an internal Google project that’s still used to run most of Google’s applications including its search engine, Gmail, Google Maps and more. Borg was a secret until Google published a paper about it in 2015, but the paper made it very apparent that Borg was the principal inspiration behind Kubernetes.

Borg is a system that manages computational resources in Google’s data centres and keeps Google’s applications, both production and otherwise, running despite hardware failure, resource exhaustion or other issues occurring that might otherwise have caused an outage. It does this by carefully monitoring the thousands of nodes that make up a Borg “cell” and the containers running on them, and starting or stopping containers as required in response to problems or fluctuations in load.

Kubernetes itself was born out of Google’s GIFEE (‘Google’s Infrastructure For Everyone Else’) initiative, and was designed to be a friendlier version of Borg that could be useful outside Google. It was donated to the Linux Foundation in 2015 through the formation of the Cloud Native Computing Foundation (CNCF).

Kubernetes provides a system whereby you “declare” your containerised applications and services, and it makes sure your applications run according to those declarations. If your programs require external resources, such as storage or load balancers, Kubernetes can provision those automatically. It can scale your applications up or down to keep up with changes in load, and can even scale your whole cluster when required. Your program’s components don’t even need to know where they’re running: Kubernetes provides internal naming services to applications so that they can connect to “wp_mysql” and be automatically connected to the correct resource.
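To give a flavour of what such a declaration looks like, here is a minimal sketch of an autoscaling rule. It assumes a Deployment called wordpress already exists; the object name, the replica limits and the 70 per cent CPU target are all illustrative values of ours rather than anything prescribed by Kubernetes.

```yaml
# Sketch only: scale an assumed "wordpress" Deployment on CPU load.
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: wordpress-hpa          # hypothetical name
spec:
  scaleTargetRef:              # the workload to be scaled
    apiVersion: apps/v1
    kind: Deployment
    name: wordpress            # assumed to exist already
  minReplicas: 2               # never drop below two pods
  maxReplicas: 10              # cap the spike response at ten pods
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 70   # add pods when average CPU passes 70%
```

Note that we state the outcome (between two and ten pods, at roughly 70 per cent CPU) and leave the starting and stopping of pods to Kubernetes. A rule like this relies on the cluster having a metrics source, such as metrics-server, and on the pods declaring CPU requests.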

The end result is a platform that can be used to run your applications on any infrastructure, from a single machine through an on-premise rack of systems to cloud-based fleets of virtual machines running on any major cloud provider, all using the same containers and configuration. Kubernetes is provider-agnostic: run it wherever you want.

Kubernetes is a powerful tool, and is necessarily complex. Before we get into an overview, we need to introduce some terms used within Kubernetes. Containers run single applications, as discussed above, and are grouped into pods. A pod is a group of closely linked containers that are deployed together on the same host and share some resources. The containers within a pod work as a team, performing related functions: for example, an application container alongside a logging container with specific settings for the application.
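As a hedged illustration of the application-plus-logging pairing, the sketch below declares one pod with two containers that share a scratch volume. The pod name, container names and images are our own choices for the example; any web server that writes its logs to a directory would do.

```yaml
# Sketch only: two containers in one pod sharing a log directory.
apiVersion: v1
kind: Pod
metadata:
  name: web-with-logging       # hypothetical name
spec:
  volumes:
    - name: logs
      emptyDir: {}             # scratch space that lives as long as the pod
  containers:
    - name: web                # the application container
      image: nginx:1.25        # illustrative image choice
      volumeMounts:
        - name: logs
          mountPath: /var/log/nginx
    - name: log-tailer         # the helper container
      image: busybox:1.36      # illustrative image choice
      # Follow the access log written by the web container.
      command: ["sh", "-c", "tail -n+1 -F /var/log/nginx/access.log"]
      volumeMounts:
        - name: logs
          mountPath: /var/log/nginx
          readOnly: true
```

Both containers are always scheduled onto the same node and are started and stopped together, which is what makes the shared volume possible.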

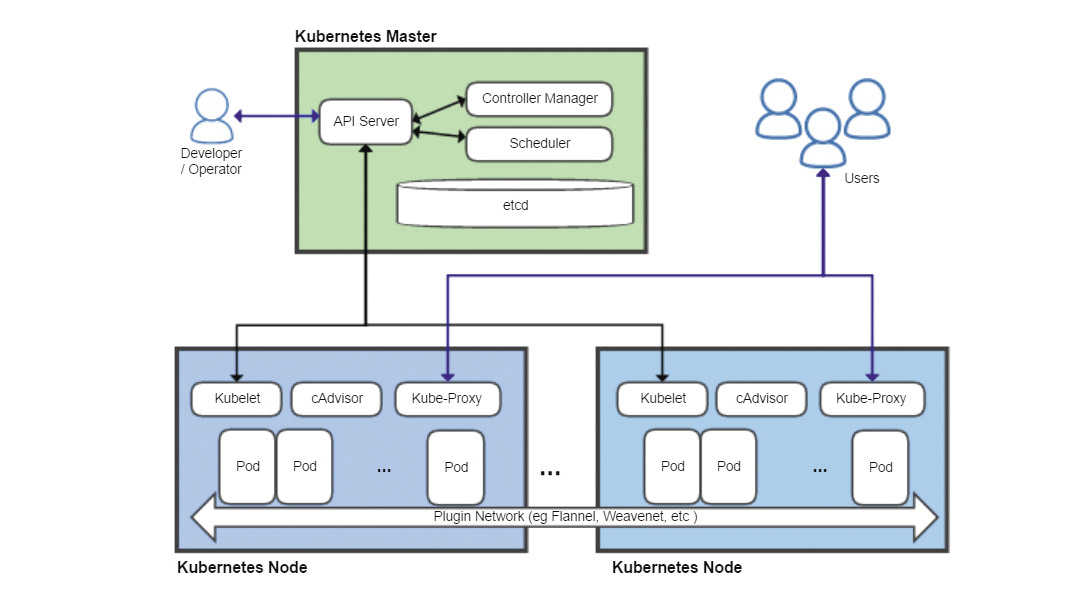

Four key Kubernetes components are the API Server, the Scheduler, the Controller Manager and a distributed configuration database called etcd. The API Server is at the heart of Kubernetes, and acts as the primary endpoint for all management requests. These may be generated by a variety of sources including other Kubernetes components, such as the scheduler, administrators via command-line or web-based dashboards, and containerised applications themselves. It validates requests and updates data stored in etcd.

The Scheduler determines which nodes the various pods will run on, taking into account constraints such as resource requirements, any hardware or software constraints, workload, deadlines and more.
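The constraints the Scheduler works with are themselves declared on the pod. A minimal sketch, assuming some nodes in the cluster carry a label disktype=ssd (a label we have made up for the example), might look like this:

```yaml
# Sketch only: a pod that states its scheduling constraints.
apiVersion: v1
kind: Pod
metadata:
  name: scheduling-demo        # hypothetical name
spec:
  nodeSelector:
    disktype: ssd              # hypothetical node label: only matching nodes qualify
  containers:
    - name: app
      image: nginx:1.25        # illustrative image choice
      resources:
        requests:              # what the Scheduler reserves on the chosen node
          cpu: "500m"          # half a CPU core
          memory: "256Mi"
        limits:
          memory: "512Mi"      # hard ceiling enforced at run time
```

The Scheduler will only place this pod on a node that carries the label and still has half a core and 256MiB of memory unreserved; if no such node exists, the pod waits in the Pending state.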

The Controller Manager monitors the state of the cluster, and will try to start or stop pods as necessary, via the API Server, to bring the cluster to the desired state. It also manages some internal connections and security features.

Each node runs a Kubelet process, which communicates with the API server and manages containers – generally using Docker – and Kube-Proxy, which handles network proxying and load balancing within the cluster.

The etcd distributed database system derives its name from the /etc folder on Linux systems, which is used to hold system configuration information, plus the suffix ‘d’, often used to denote a daemon process. The goals of etcd are to store key-value data in a distributed, consistent and fault-tolerant way.

The API server keeps all its state data in etcd and can run many instances concurrently. The scheduler and controller manager can each have only one active instance, but they use a lease system to determine which running instance is the master. All this means that Kubernetes can run as a Highly Available system with no single points of failure.
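In recent Kubernetes releases, to the best of our knowledge, that lease is itself an ordinary API object. The sketch below shows roughly what one might look like when read back from the cluster; the holder identity, timestamp and transition count are invented values for illustration, and this is something Kubernetes maintains rather than a file you would write yourself.

```yaml
# Illustrative only: the kind of Lease object used for leader election.
apiVersion: coordination.k8s.io/v1
kind: Lease
metadata:
  name: kube-scheduler
  namespace: kube-system
spec:
  holderIdentity: control-plane-1_example-id   # invented: the instance currently in charge
  leaseDurationSeconds: 15                     # how long the claim stays valid without renewal
  renewTime: "2024-01-01T12:00:00.000000Z"     # invented: last time the holder renewed it
  leaseTransitions: 3                          # invented: how often leadership has changed hands
```

The active instance keeps renewing the lease. If it stops doing so, a standby instance can claim the lease once it expires and take over.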

Putting it all together

So how do we use those components in practice? What follows is an example of setting up a WordPress website using Kubernetes. If you wanted to do this for real, then you’d probably use a predefined recipe called a Helm chart. Helm charts are available for a number of common applications, but here we’ll look at some of the steps necessary to get a WordPress site up and running on Kubernetes.

The first task is to define a password for MySQL:

kubectl create secret generic mysql-pass --from-literal=password=YOUR_PASSWORD
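As an aside, the same secret could be declared in a YAML file rather than on the command line. This is a minimal sketch of the equivalent declaration; YOUR_PASSWORD is the same placeholder as in the command above.

```yaml
# Sketch only: declarative equivalent of the kubectl command above.
apiVersion: v1
kind: Secret
metadata:
  name: mysql-pass
type: Opaque
stringData:                    # plain-text input, stored base64-encoded under "data"
  password: YOUR_PASSWORD      # placeholder, as in the command above
```

A file like this would be loaded with kubectl apply -f, but keep it out of version control if it contains a real password.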

kubectl will talk to the API Server, which will validate the command and then store the password in etcd. Our services are defined in YAML files, and now we need some persistent storage for the MySQL database.

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: mysql-pv-claim
      labels:
        app: wordpress
    spec:
      accessModes:
        - ReadWriteOnce
      resources:
        requests:
          storage: 20Gi

The specification should be mostly self-explanatory. The name and labels fields are used to refer to this storage from other parts of Kubernetes, in this case our WordPress container.

Once we’ve defined the storage, we can define a MySQL instance, pointing it to the predefined storage. That’s followed by defining the database itself. We give that database a name and label for easy reference within Kubernetes.
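The article doesn’t reproduce the MySQL declarations, so what follows is only a sketch of how they might look. The names mysql-pass and mysql-pv-claim come from the steps above. The service name wordpress-mysql, the tier: mysql label, the mysql:8.0 image and the database and user names are assumptions of ours for the example.

```yaml
# Sketch only: an internal service plus a single MySQL instance.
apiVersion: v1
kind: Service
metadata:
  name: wordpress-mysql        # assumed name: other pods connect to this
  labels:
    app: wordpress
spec:
  ports:
    - port: 3306
  selector:                    # route traffic to pods carrying these labels
    app: wordpress
    tier: mysql                # assumed label
  clusterIP: None              # internal only, resolved through cluster DNS
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: wordpress-mysql
  labels:
    app: wordpress
spec:
  selector:
    matchLabels:
      app: wordpress
      tier: mysql
  strategy:
    type: Recreate             # stop the old pod before starting a new one
  template:
    metadata:
      labels:
        app: wordpress
        tier: mysql
    spec:
      containers:
        - name: mysql
          image: mysql:8.0     # illustrative image tag
          env:
            - name: MYSQL_ROOT_PASSWORD
              valueFrom:
                secretKeyRef:  # read from the secret created earlier
                  name: mysql-pass
                  key: password
            - name: MYSQL_DATABASE
              value: wordpress # assumed database name
            - name: MYSQL_USER
              value: wordpress # assumed database user
            - name: MYSQL_PASSWORD
              valueFrom:
                secretKeyRef:
                  name: mysql-pass
                  key: password
          ports:
            - containerPort: 3306
              name: mysql
          volumeMounts:
            - name: mysql-persistent-storage
              mountPath: /var/lib/mysql
      volumes:
        - name: mysql-persistent-storage
          persistentVolumeClaim:
            claimName: mysql-pv-claim   # the storage claimed above
```

Reusing one password for both the root and application users keeps the sketch short; a real deployment would more likely use separate secrets.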

Now we need another container to run WordPress. Part of the container deployment specification is:

    kind: Deployment
    metadata:
      name: wordpress
      labels:
        app: wordpress
    spec:
      strategy:
        type: Recreate
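That fragment leaves out the parts of a Deployment that say which container to run. A fuller sketch might look like the following. The tier: frontend label matches the selector used by the WordPress service in this walk-through, and mysql-pass is the secret created earlier. The image tag, the database host name wordpress-mysql and the database user are assumptions on our part.

```yaml
# Sketch only: a fuller version of the WordPress Deployment.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: wordpress
  labels:
    app: wordpress
spec:
  selector:
    matchLabels:               # must match the pod template labels below
      app: wordpress
      tier: frontend
  strategy:
    type: Recreate
  template:
    metadata:
      labels:
        app: wordpress
        tier: frontend
    spec:
      containers:
        - name: wordpress
          image: wordpress:apache        # illustrative image tag (PHP plus Apache)
          env:
            - name: WORDPRESS_DB_HOST
              value: wordpress-mysql     # assumed name of the MySQL service
            - name: WORDPRESS_DB_USER
              value: wordpress           # assumed database user
            - name: WORDPRESS_DB_PASSWORD
              valueFrom:
                secretKeyRef:            # read from the secret created earlier
                  name: mysql-pass
                  key: password
          ports:
            - containerPort: 80
              name: wordpress
```

A production version would normally also mount persistent storage for uploaded files, which we have omitted here for brevity.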

The strategy type “Recreate” means that if any of the code comprising the application changes, then running instances will be deleted and recreated. Other options include being able to cycle new instances in and removing existing instances, one by one, enabling the service to continue running during deployment of an update. Finally, we declare a service for WordPress itself, comprising the PHP code and Apache. Part of the YAML file declaring this is:

    metadata:
      name: wordpress
      labels:
        app: wordpress
    spec:
      ports:
        - port: 80
      selector:
        app: wordpress
        tier: frontend
      type: LoadBalancer

Note the last line, defining service type as LoadBalancer. That instructs Kubernetes to make the service available outside of Kubernetes. Without that line, this would merely be an internal “Kubernetes only” service. And that’s it. Kubernetes will now use those YAML files as a declaration of what is required, and will set up pods, connections, storage and so on as required to get the cluster into the “desired” state.
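One variation is worth sketching. The Deployment above uses the Recreate strategy, but we also mentioned the option of cycling new instances in one by one. The following is a hedged sketch of that rolling approach for a generic stateless web application; the names, image and replica count are invented for the example.

```yaml
# Sketch only: replace pods one at a time during an update.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: rolling-demo           # hypothetical name
spec:
  replicas: 3
  selector:
    matchLabels:
      app: rolling-demo
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 0        # never drop below the desired replica count
      maxSurge: 1              # allow one extra pod while the update runs
  template:
    metadata:
      labels:
        app: rolling-demo
    spec:
      containers:
        - name: web
          image: nginx:1.25    # illustrative image choice
          ports:
            - containerPort: 80
```

With these settings Kubernetes starts one new pod, waits for it to become ready, removes one old pod and repeats, so the service stays available throughout. This suits stateless front ends; a pod that holds a ReadWriteOnce volume, such as the MySQL instance, is usually better served by Recreate.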

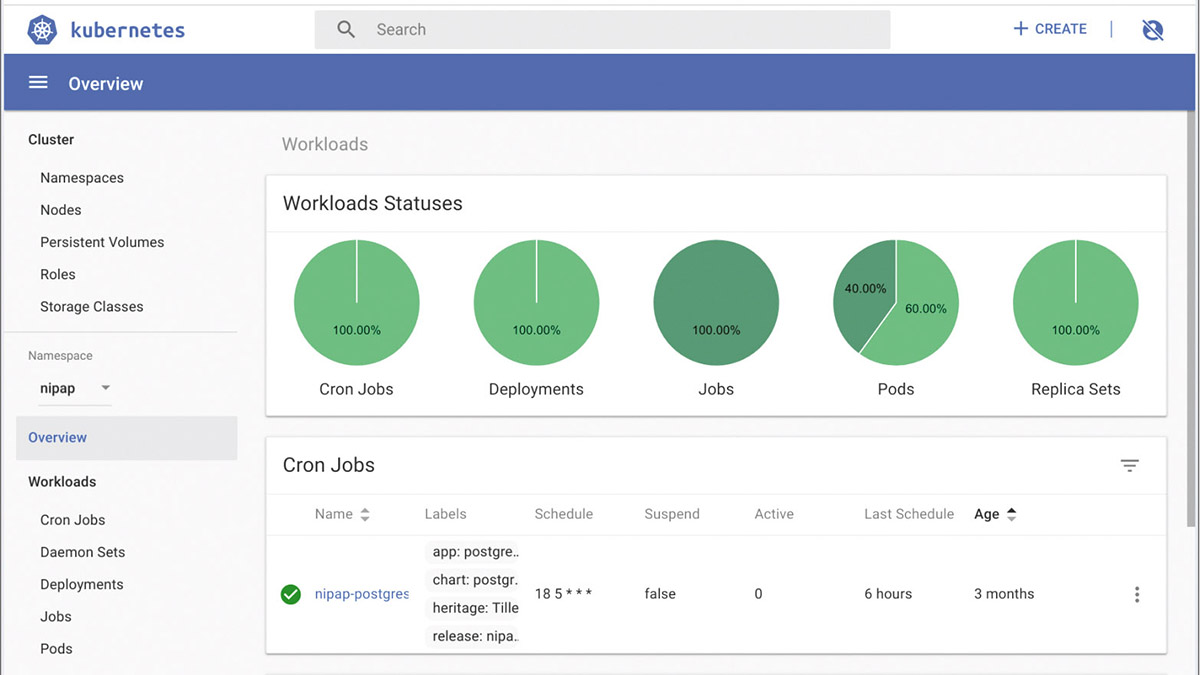

This has necessarily been only a high-level overview of Kubernetes, and many details and features of the system have been omitted. We’ve glossed over autoscaling (both pods and the nodes that make up a cluster), cron jobs (starting containers according to a schedule), Ingress (HTTP load balancing, rewriting and SSL offloading), RBAC (role-based access controls), network policies (firewalling), and much more. Kubernetes is extremely flexible and extremely powerful: for any new IT infrastructure, it must be a serious contender.

Resources

If you’re not familiar with Docker, start here: https://docs.docker.com/get-started.

There’s an interactive tutorial on deploying and scaling an app here: https://kubernetes.io/docs/tutorials/kubernetes-basics.

And see https://kubernetes.io/docs/setup/scratch for how to build a cluster.

You can play with a free Kubernetes cluster at https://tryk8s.com.

Finally, you can pore over a long, technical paper with an excellent overview of Google’s use of Borg and how that influenced the design of Kubernetes here: https://storage.googleapis.com/pub-tools-public-publication-data/pdf/43438.pdf.

Find out more about Tiger Computing.
