How Microsoft is building a machine learning future

Redmond is very bullish about the potential for machine learning

We had the opportunity to interview Roger Barga, one of the architects of Azure ML, Microsoft's machine learning cloud service, to discuss various aspects of the system.

"The ranking algorithm that's in our regression module, the same one running Bing search and serving up ranked results," Barga told TechRadar Pro. "It's our implementation on Azure but all the heuristics and know-how came from the years of experience running it. The same recommendation module we have in Azure ML is the same recommendation module that serves up in Xbox what player to play against next."

Azure ML can look at a document, work out what it's about and look those topics up on Bing. "We can say this is a company, this is a person, this is a product," explains Barga. "That's the same way Delve will find documents and discussions and messages that you'll want to see."

For Azure ML, Microsoft mined the expertise of dozens of researchers and product teams. "Many of these algorithms, these guys have implemented them dozens of times. And you just can't find that kind of expertise in a book, you can't buy it. We are sitting on a wealth of experience and expertise."

With existing machine learning tools, the same algorithm can give different results from one system to another. "You're searching all possible configurations of parameters and so you have to use heuristics to find the best model with the data you have available to you," says Barga. "Over the years of applying these to numerous applications our colleagues in MSR and product groups figured out the optimal heuristics. We know what are the best practices, what are the heuristics, what should we do to ensure this will be robust, scalable and performant."
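To make the idea of searching parameter configurations concrete, here is a minimal sketch in Python. It is not Azure ML code: the one-variable ridge model, the toy data and the function names (`fit_ridge_1d`, `search`) are all assumptions chosen for illustration. The "heuristic" is simply to try a handful of log-spaced penalty values rather than every possible one, and keep whichever scores best on held-out data.

```python
def fit_ridge_1d(xs, ys, lam):
    """Closed-form fit of y ~ w * x with an L2 penalty `lam` on w."""
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + lam)


def mse(w, xs, ys):
    """Mean squared error of the line y = w * x on the given points."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def search(train, valid, candidates):
    """Try each candidate penalty and keep the one with the lowest validation error."""
    best = None
    for lam in candidates:
        w = fit_ridge_1d(train[0], train[1], lam)
        err = mse(w, valid[0], valid[1])
        if best is None or err < best[0]:
            best = (err, lam, w)
    return best


# Toy data (hypothetical): roughly y = 2x with a little noise.
train = ([1.0, 2.0, 3.0], [2.1, 3.9, 6.2])
valid = ([4.0, 5.0], [8.0, 10.1])

# Heuristic: a coarse, log-spaced grid instead of an exhaustive sweep.
candidates = [10.0 ** k for k in range(-3, 3)]

best_err, best_lam, best_w = search(train, valid, candidates)
print(best_lam, best_w, best_err)
```

Two teams using the same algorithm but different candidate grids, or a different split of the data, can end up with different models, which is the effect Barga describes.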

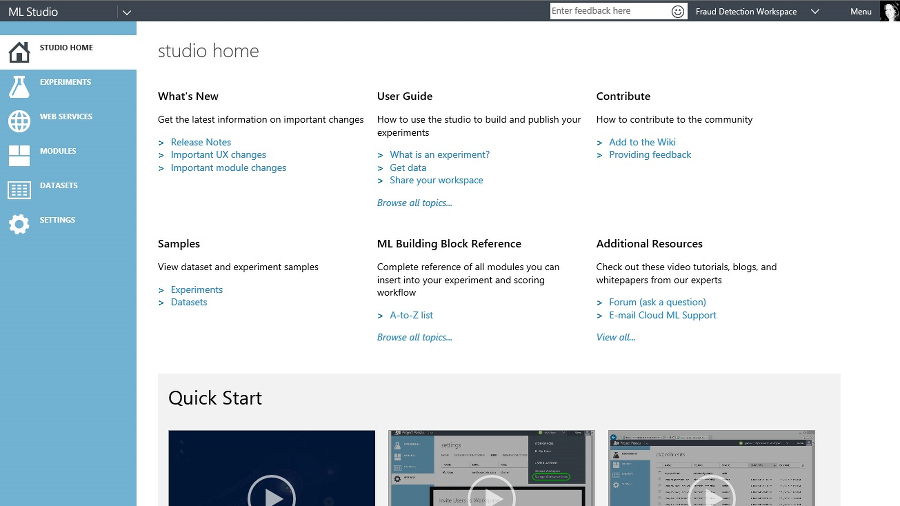

Lego building blocks

Any one of those systems would have made a useful machine learning service for Microsoft to offer. But instead, the Azure ML team sat down with Microsoft Research (MSR) and looked at all the different machine learning systems they had and built one platform they could all plug into, so users can mix and match them like Lego blocks.

"Whatever your mind's eye can see, you can start combining the pieces in creative ways and make what you want to make, as opposed to saying we're just going to give you one thing, we're just going to give you machine learning as a service à la Google Prediction API. We said no, we assume you have some creativity. We have given you the right building blocks.

"We took what was a monolithic piece of code, the MSR ML libraries, and pulled out meaningful pieces that are useful by themselves and that have consistent interfaces. We could combine any two pieces of Lego together to start to make this arbitrarily complex model that our user wants to create. We've given them the composable pieces, we've made sure that the data will flow, that the interfaces are all compatible and when they click run, they get a finished model at the end."
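The "consistent interfaces" idea can be sketched in a few lines of Python. This is an illustration only, not how Azure ML is implemented: the `Block` type, the `compose` helper and the three example blocks are hypothetical names, and the data is made up. The point is that when every piece accepts and returns the same shape of data, any two pieces can be snapped together.

```python
from typing import Callable, Dict, List

Row = Dict[str, object]
# Every block has the same interface: a list of rows in, a list of rows out.
Block = Callable[[List[Row]], List[Row]]


def compose(*blocks: Block) -> Block:
    """Chain blocks so that each one's output flows into the next."""
    def pipeline(rows: List[Row]) -> List[Row]:
        for block in blocks:
            rows = block(rows)
        return rows
    return pipeline


def drop_missing(rows: List[Row]) -> List[Row]:
    """Remove rows that contain any missing value."""
    return [r for r in rows if all(v is not None for v in r.values())]


def add_ratio(rows: List[Row]) -> List[Row]:
    """Add a click-through ratio column to each row."""
    return [{**r, "ratio": r["clicks"] / r["views"]} for r in rows]


def rank_by_ratio(rows: List[Row]) -> List[Row]:
    """Order rows from highest to lowest ratio."""
    return sorted(rows, key=lambda r: r["ratio"], reverse=True)


# Snap three independent pieces together into one runnable pipeline.
model = compose(drop_missing, add_ratio, rank_by_ratio)

rows = [
    {"id": "a", "clicks": 1, "views": 10},
    {"id": "b", "clicks": None, "views": 5},
    {"id": "c", "clicks": 3, "views": 6},
]
ranked = model(rows)
print([r["id"] for r in ranked])
```

Because each block is useful on its own, swapping `rank_by_ratio` for a different ranking step, or adding a new block later, does not require touching the others.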

And connecting all those pieces together in a standard way means that as MSR comes up with new machine learning systems – like the Project Adam system that can recognise the breed of a dog or tell you if a plant is poisonous – it will be easy to plug them into Azure ML as new building blocks.

"There are new developments going on in MSR and we'll watch that," continues Barga. "Once we're confident that they're scalable, performant and accurate we'll then bring that over into our service. What we're bringing to market today is an engine for tech transfer and knowledge transfer for years to come."

Mary started her career at Future Publishing, saw the AOL meltdown first hand the first time around when she ran the AOL UK computing channel, and she's been a freelance tech writer for over a decade. She's used every version of Windows and Office released, and every smartphone too, but she's still looking for the perfect tablet. Yes, she really does have USB earrings.
