Best cloud storage of 2026: tested, reviewed and rated by experts

Looking for the best cloud storage? These are my top picks

The best cloud storage software is perfect for storing your data on a secure and safe server - which will free up space on your device and keep your files safe from system crashes and hardware issues.

Our top recommendation is IDrive. It's reasonably priced (and often has a great sale on), and it's user-friendly.

It's not the only good option though, and services like Sync.com and pCloud are also great options for cloud storage.

If you need something a bit cheaper, or you're on a tight budget, I've got you. Check out our picks for best free cloud storage for some equally great options.

If you're a photographer or, like me, just take too many photos of your cat, we've also ranked the best cloud storage for photos. For more professional storage needs, we've also listed the best cloud storage for business.

I have been using cloud storage throughout my career, especially while working in media, sports journalism, and videography. I understand the need for reliable cloud storage services that are easy to use, quick to transfer files, and cost-effective.

Best cloud storage: deals

1. IDrive: 10TB of storage for $4.98

Cloud storage veteran IDrive offers an incredible amount of online space for a small outlay across a wide range of platforms. 10TB of storage for $4.98 for the first year is unmatched, as is the support for unlimited devices and the extensive file versioning system available.

2. pCloud: Save on lifetime cloud storage

pCloud is more expensive than the competition, but the one-off payment means that you won't have to worry about renewal fees that can be horrendously expensive. 2TB for life is $399 while the 10TB plan costs only $1,190.

3. Reader Offer: 50% off Sync Pro 200GB

Sync delivers outstanding value for anyone looking for terabytes of cloud storage space, and the secure file sharing and collaboration features are an added bonus. Get unlimited storage for $15 per user, per month with this exclusive offer from us.

The best cloud storage 2026:

Best cloud storage overall

✔️ You want a cloud storage service that is feature-rich: IDrive boasts a significant number of features, including Snapshots, which lets you store up to 30 different versions of your files and a physical recovery option.

✔️ Flexible backup options: With IDrive, users can back up an unlimited number of devices – computers, mobile phones, servers – to a single account.

✔️ You need cloud storage you can trust with your life: With IDrive’s end-to-end encryption protocols, you can be confident that any files you store are safe from prying eyes.

❌ You're on a strict budget: IDrive isn't the cheapest cloud storage on the market, with some of the more appealing price points only available for a “limited time only”.

❌ You've got ambitious growth plans: There are no unlimited storage options, which could be an issue for some enterprises.

❌ You want a slick interface: The user interface of the desktop client does leave a little to be desired.

IDrive is a terrific cloud storage solution - a fantastic all-rounder with an easy-to-use backup option. It offers a great balance between security, usability and performance.

IDrive tops my best cloud storage list with its appealing mix of easy-to-use desktop and mobile apps, excellent backup features, strong security and great value.

Signing up gets you allowances up to 10TB (personal) all the way up to 100TB (business), but that's not all. Sync support keeps files up-to-date across all your hardware, and backup features enable protecting everything from individual files and folders to full SQL, Exchange, SharePoint and other servers.

I particularly like IDrive's extras, such as an option to transfer your data to IDrive on a physical device - ideal if you've a slow internet connection and 50TB to protect. IDrive sends you the drive and pays return postage (in the US), and it's still free once a year for personal users (three times for business plans).

Its data transfer uses 256-bit AES encryption with the user key not stored on any IDrive servers, adding an extra layer of security for your files even while they're being transferred.

IDrive also offers backup support for Microsoft Office 365, Google Workspace, Dropbox, and Box, enabling you to recover important files and data from automated daily backups. As well as a Cloud Drive integration with Microsoft Office, IDrive also offers compatibility with all PCs, Macs, and Linux computers, alongside apps for iOS and Android.

You can also access regular snapshots and versions of your data at specific points in time, helping you to monitor changes up to 30 file versions in the past.

▶ Read more: IDrive cloud storage review.

Performance:

This is an area where IDrive really shines. It boasts fast upload speeds and a file recovery feature that is especially handy if you think you’ve lost some important data. IDrive also provides block-level syncing when you are syncing files from a device, such as a smartphone or tablet, to the cloud. Our tests revealed IDrive's performance was a close match to Google Drive and the other top contenders.
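To give a feel for what block-level syncing means in practice, here is a minimal Python sketch. It is not IDrive's actual implementation: the tiny block size and the function names `block_hashes` and `changed_blocks` are assumptions made purely for illustration. The idea is to hash a file in fixed-size blocks and flag only the blocks whose hashes differ, so only those would need uploading.

```python
import hashlib

BLOCK_SIZE = 4  # deliberately tiny for the demo; real tools would use far larger blocks


def block_hashes(data: bytes, block_size: int = BLOCK_SIZE) -> list[str]:
    """Return a SHA-256 digest for each fixed-size block of data."""
    return [
        hashlib.sha256(data[i:i + block_size]).hexdigest()
        for i in range(0, len(data), block_size)
    ]


def changed_blocks(old: bytes, new: bytes, block_size: int = BLOCK_SIZE) -> list[int]:
    """Return indexes of blocks in `new` that differ from, or are missing in, `old`."""
    old_hashes = block_hashes(old, block_size)
    new_hashes = block_hashes(new, block_size)
    return [
        i for i, digest in enumerate(new_hashes)
        if i >= len(old_hashes) or old_hashes[i] != digest
    ]


old_file = b"AAAABBBBCCCCDDDD"
new_file = b"AAAABBBBXXXXDDDDEEEE"
print(changed_blocks(old_file, new_file))  # [2, 4]
```

In this toy example only two of the five blocks would be re-uploaded, which is why block-level syncing tends to be quicker for large files that change a little at a time.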

Other backup features, including disk image backup, NAS backup and server backup, are useful additions depending on what you’re looking for from your cloud storage.

Security:

IDrive is strong on the security fundamentals, with end-to-end encryption and two-factor authentication to protect you from attack. This works via a private key that is unique to you, so look after it.

There’s also the offer of standard encryption, which although not as secure as end-to-end encryption, still provides protection against most potential breaches. It also means a bit less responsibility for the end user as IDrive keeps hold of the encryption key, so you can restore your data whenever you need to, without having to remember a private key.

Customer Support:

IDrive has live chat functionality, as well as the option of contacting support via either telephone or email, so you should be able to contact the company should an issue arise. As with many companies, some users have complained that response times vary, but we’ve been pleasantly surprised on this front whenever something's come up.

Features:

IDrive offers users the possibility of backing up their files across an unlimited number of devices, from computers to mobile devices, as well as NAS drives.

Real-time syncing and backup scheduling are both great for ensuring you never forget to back up the latest version of your files. You can also opt to receive desktop or email notifications informing you when your backups are completed.

IDrive's link-sharing functionality is super robust. You can configure links to expire after a set period - up to a maximum of 30 days - or a number of downloads. All of your active share links are also visible through the IDrive dashboard, so there’s complete clarity over what you’re sharing with external partners.

IDrive's Express service lets you put your data on hard drives and post them off - for when you want the added protection that comes with physical storage.

User Interface:

IDrive is easy to use on the whole, although a revamp of its design and interface in certain areas could improve things further. Menu and setting screens are pretty clear, so you shouldn’t have too much trouble configuring IDrive exactly to your liking.

There are some differences here depending on the type of device you are accessing IDrive through. For instance, there are fewer options if you’re using the iOS version.

While the Android app lets you backup SMS messages, this isn’t possible with the iPhone and iPad versions, which are limited to contact, calendar, photos and videos. While this may be more of an Apple issue than an IDrive one, it will still affect usability for certain users.

Price

When I last checked in February 2025, IDrive offered 10GB of free storage. After that, you’ll probably want to look at the Personal plan, which costs $69.65 a year for 5TB, and you can upgrade this plan to 10TB ($104.65), 20TB ($174.65), 50TB ($349.65), and 100TB ($699.65).

The Business plan is more likely to suit your needs if you need to provide access across an unlimited number of users and devices. This is available from $69.65 a year for 250GB, increasing to $8119.65 for 50TB of space per user.

Alternatively, there’s the Team plan, which comes in several versions. Here, the cheapest plan starts at $69.65 for five computers, five users, 5TB total, for one year. This plan can be upgraded all the way up to 500 computers, with 500 users and 500TB of storage at $6999.65 per year.

TechRadar readers can get 10TB of cloud storage from IDrive for $4.98 for the first year. You can grab this exclusive deal by clicking here.

| Attributes | Notes | Rating |
|---|---|---|
| Design | A bit lacking in places - especially outside the desktop version | ⭐⭐⭐ |
| Ease of use | Not always the most intuitive, but worth the effort. | ⭐⭐⭐⭐ |
| Performance | Fast upload speed and great feature set. | ⭐⭐⭐⭐⭐ |
| Security and privacy | IDrive offers end-to-end encryption | ⭐⭐⭐⭐⭐ |
| Customer support | 24/7 live chat, telephone and email support | ⭐⭐⭐⭐⭐ |
| Additional features | Single sign-on, server cloud backup, remote computer management | ⭐⭐⭐⭐ |
| Time to upload 1GB | 4m22s - very fast | ⭐⭐⭐⭐ |
| Time to download 1GB | 2m27s - still very respectable | ⭐⭐⭐ |
| Platforms | PC, Mac, iOS, Android, Linux | ⭐⭐⭐⭐ |
| Versions kept | 30 | ⭐⭐⭐⭐ |
| Price | Not the cheapest but a great free plan | ⭐⭐⭐ |

Best cloud storage for lifetime value

✔️ You want a free plan without restrictions: pCloud implements zero limitations on file size or speeds for their plans.

✔️ You need social media integration: You can back up images and videos on your accounts, like Facebook and Instagram, directly within pCloud.

✔️ You want block-level syncing: This means upload speeds will be much quicker as only the parts of your files that have changed will be synced.

❌ You need a built-in document editor: This isn't included in pCloud, so if you need one you might be better off going with rivals like Google Drive or Microsoft OneDrive.

❌ You use a lot of devices: With pCloud, it's recommended that no more than five devices are connected to a single account - this may not be enough for individuals with a lot of devices on the go at once.

❌ The user interface is important to you: This can feel a little old-fashioned but there's a six-step wizard to help you get started.

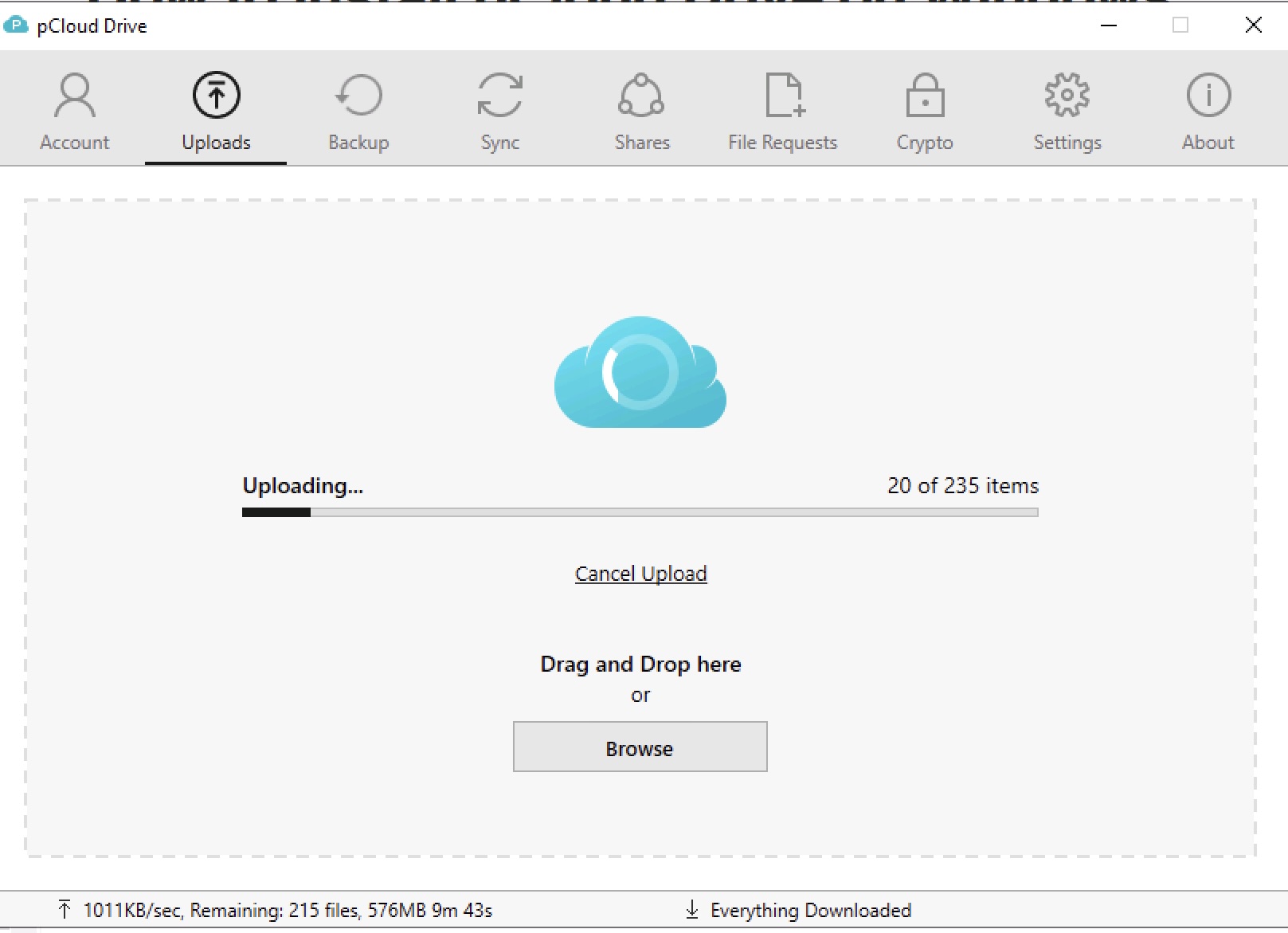

pCloud is a great value cloud storage service with a fantastic free plan and the kind of social media integration that few of its rivals can match.

The Swiss-based pCloud is a standout cloud storage service - especially thanks to its hugely advanced features for file sharing. Regular downloads are great, but pCloud lets you create specific download pages - complete with custom messages, as well as build slideshows of shared images, and even stream video or audio files straight from your storage.

The free plan comes with a generous 10GB - to get this full amount, you'll need to do the usual hoop jumping like installing the app and signing up friends - you know, the classic stuff.

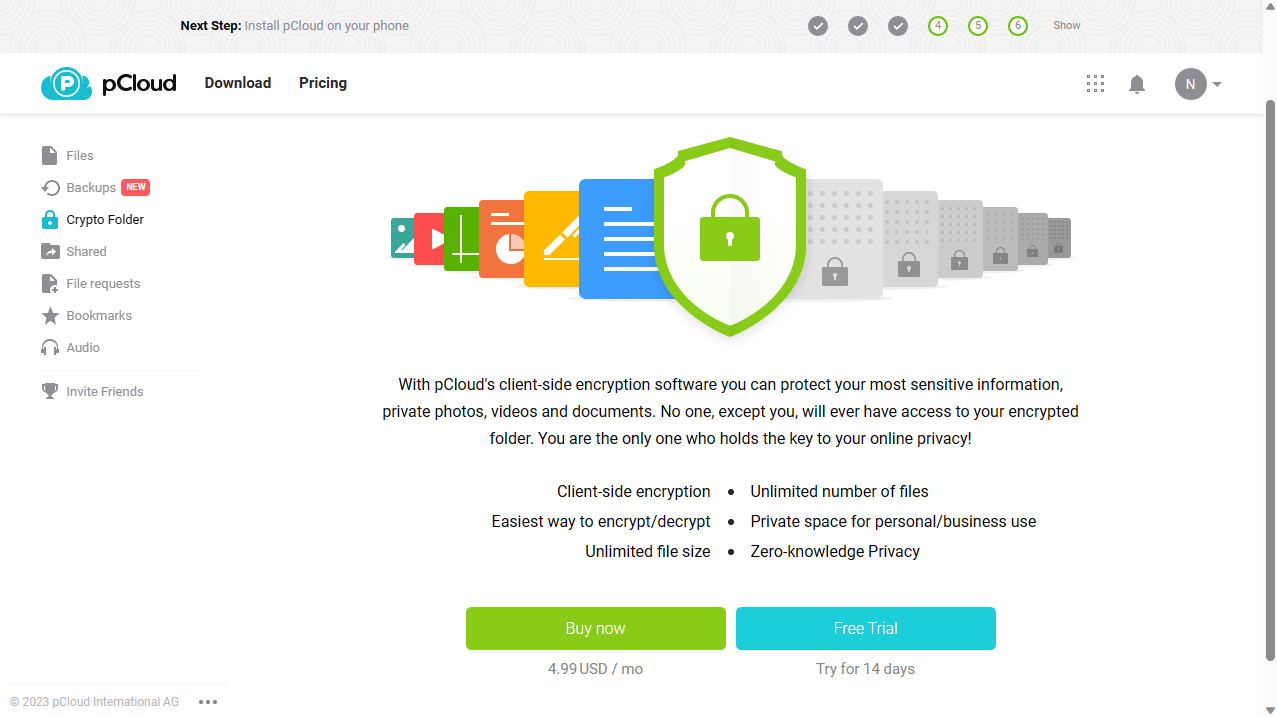

Try not to be too put off by that though, because there's a lot to like. Being based in Switzerland, which offers some of the world's strongest data protection regulations, is one of them. Your private encryption key is protected by a 4096-bit RSA algorithm, while your files and folders are encrypted with per-file and per-folder 256-bit AES keys.

If data protection is important to you, which in this day and age it probably should be, then pCloud also lets you choose from five different servers. This makes your files and backups extra secure - not to mention the option to add an additional layer of encryption to your file storage through pCloud Encryption.

That being said, the desktop interface isn't quite as user friendly as some of its competitors, and 2TB isn't that much in business terms - IDrive, for example, lets you store up to 20TB for just a bit more.

▶ Read more: pCloud Cloud Storage review.

Performance

Our tests found that pCloud’s upload speeds fared well against competitors like IDrive, Internxt, and Apple iCloud.

File recovery also performed well, but it’s worth keeping in mind that the free version of pCloud only stores file revisions for up to 15 days. If you need longer than this, you’ll have to select a paid plan.

Security

All file transfers involving pCloud use a TLS/SSL encrypted channel for additional protection, and files are stored across five different server locations as a safeguard against data loss. In fact, pCloud is so confident in its security credentials that it offered a $100,000 reward for anyone who could crack its defenses as part of the 'pCloud Encryption Challenge'. No one was successful.

Customer Support

Response times can vary a lot and if you want to contact pCloud directly, you have to pick up the phone. Head office is in Switzerland, so US customers have to battle against the time difference. You can also send an email, but the addition of a live chat option would help speed up their customer service.

Features

Sharing files is straightforward with pCloud and there are a couple of additional features worth talking about too.

One of these is Extended History, which extends the time that pCloud retains deleted files to 360 days. Another is pCloud Crypto, which adds client-side encryption to your account.

These features come at an additional cost, however: $80 a year and $50 respectively.

The desktop app adds a Dropbox-like virtual drive to your file manager, simplifying operations, but pCloud's mobile app can make life even easier by (optionally) automatically uploading pictures and videos direct from your phone.

There's also offline functionality, so you can access your shared files via your computer or mobile even if you don't have a working connection. Then, as soon as the connection is restored, there'll be an automatic sync.

Browser extensions are also available for Firefox, Chrome, and Opera, allowing you to save audio, video and pictures directly from a web page to your pCloud account.

User Interface

We found the service very easy to set up and use. Sample folders and files helped us immediately try out its powerful file management and sharing features. We had no issues connecting our account to Facebook, Instagram and other social media accounts, and backing up their content.

Some users have complained pCloud’s software is not short of a bug or two, which diminishes its usability significantly. When things are working well, however, the desktop client is pretty intuitive.

Price

A strong point for pCloud is its competitive price points. There’s a generous free plan offering 10GB of storage (although you will have to complete a few simple tasks to unlock the full amount, such as recommending friends).

In terms of paid plans, there's no monthly pricing model, but annual plans are fair value at $49.99 for 500GB, and $99.99 for 2TB when we last checked in February 2025.

Where I found pCloud really shines is in its lifetime plans. You can get 500GB forever for a one-off $199, 2TB for $399, or 10TB for $1190. Despite mooted savings of up to 37%, we check this plan every month and the prices remain the same.

Interestingly, pCloud also offers not just these 'lifetime' payment plans, but the ability to stack one lifetime allowance on top of others. That's certainly an investment, but we like that the option is there, because it could pay off over time with enough use.
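As a rough check on when a lifetime plan pays off, the arithmetic can be sketched in a few lines of Python. The figures are the annual and lifetime prices quoted in this review; the helper name `break_even_years` is made up for illustration, and the calculation assumes annual prices stay flat, which may not hold.

```python
# Prices quoted in this review (checked February 2025); treat them as a snapshot.
PLANS = {
    "500GB": {"annual": 49.99, "lifetime": 199.00},
    "2TB": {"annual": 99.99, "lifetime": 399.00},
}


def break_even_years(annual: float, lifetime: float) -> float:
    """Years of annual billing needed to match the one-off lifetime price."""
    return lifetime / annual


for name, price in PLANS.items():
    years = break_even_years(price["annual"], price["lifetime"])
    print(f"{name}: lifetime plan pays for itself after about {years:.1f} years")
```

On these numbers, both lifetime plans break even after roughly four years of use.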

| Attributes | Notes | Rating |
|---|---|---|
| Design | Some bugs aside, pretty intuitive | ⭐⭐⭐ |
| Ease of use | Clear, and a set-up wizard to get you started | ⭐⭐⭐⭐ |
| Performance | Holds up well against competitors, but versioning is restricted somewhat | ⭐⭐⭐ |
| Security and privacy | Good security protocols and confident about its safeguards | ⭐⭐⭐⭐⭐ |
| Customer support | Somewhat lacking. A live chat option would be handy. | ⭐⭐⭐ |
| Additional features | Extended History, crypto drive, file manager | ⭐⭐⭐ |
| Time to upload 1GB | 4m4s - very fast | ⭐⭐⭐⭐⭐ |
| Time to download 1GB | 1m15s - rapid | ⭐⭐⭐⭐⭐ |
| Platforms | All | ⭐⭐⭐⭐⭐ |
| Versions kept | - | |
| Price | Reasonable and the lifetime-options are a bonus. | ⭐⭐⭐⭐⭐ |

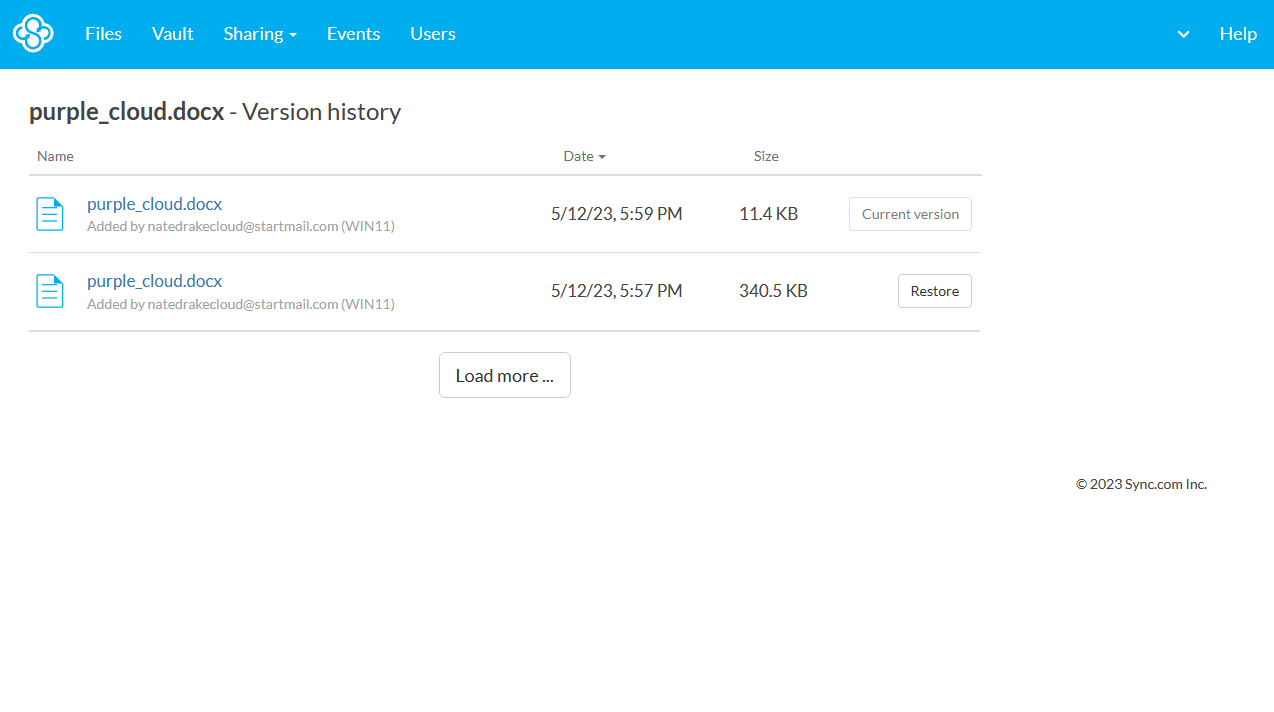

Best cloud storage for syncing

✔️ You need a team-friendly platform: Microsoft 365 integration allows for live editing and there's strong control regarding who can see your files.

✔️ You need strong security protections: End-to-end encryption and two-factor authentication are both on offer with Sync.

✔️ You want a cloud platform that's easy to set up: Recently revamped desktop clients and mobile apps make it straightforward to get going with Sync.

❌ You want more advanced interface options: Aside from progress indicators and a recent changes list, there's not much going on with Sync's desktop dashboard.

❌ Single folder syncing is an issue: Some competitors provide block-level syncing but not Sync.

❌ You need reliable customer support: While there is a user forum and knowledgebase to help with troubleshooting, the only way to directly contact Sync on some plans is via email.

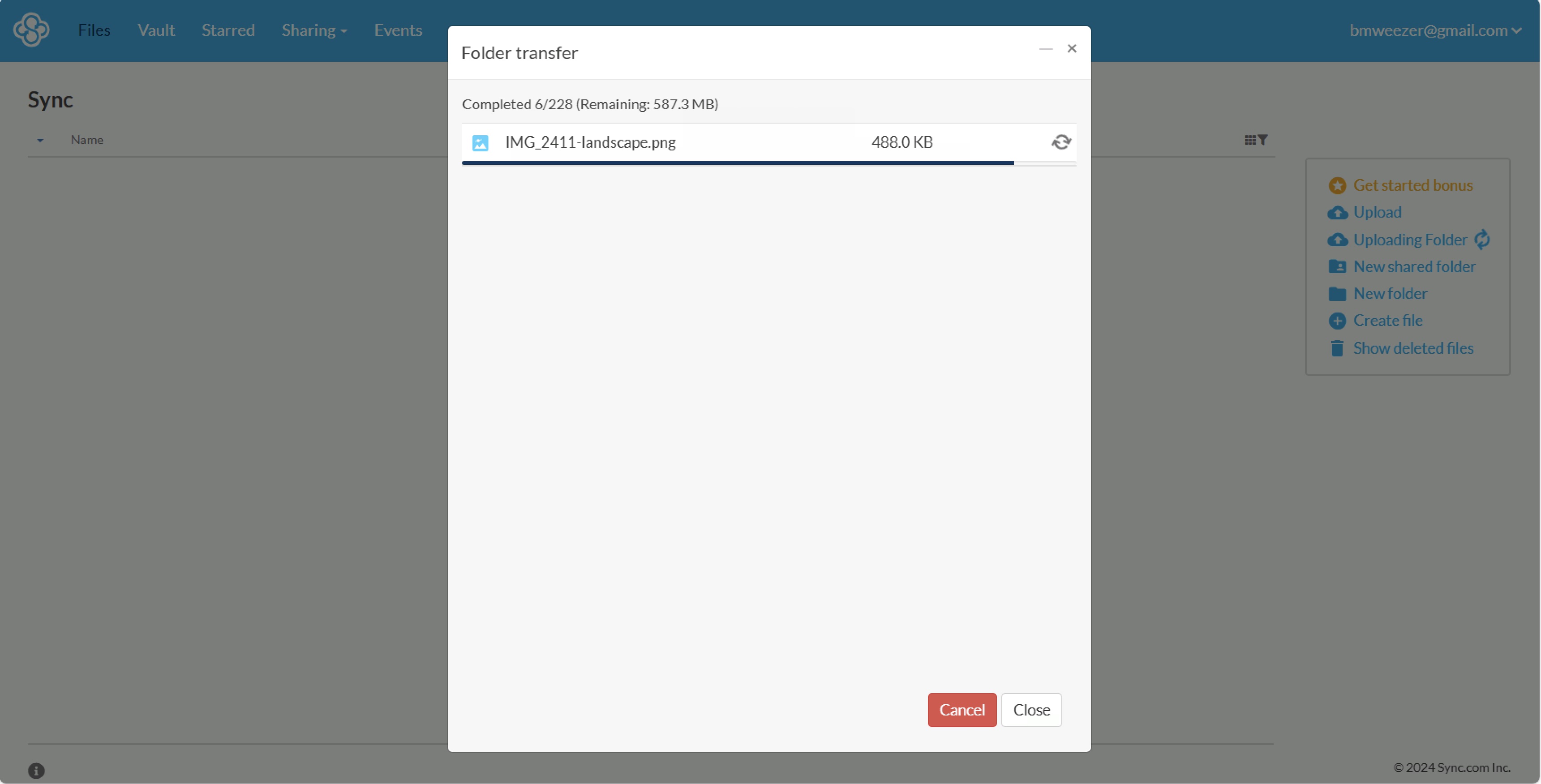

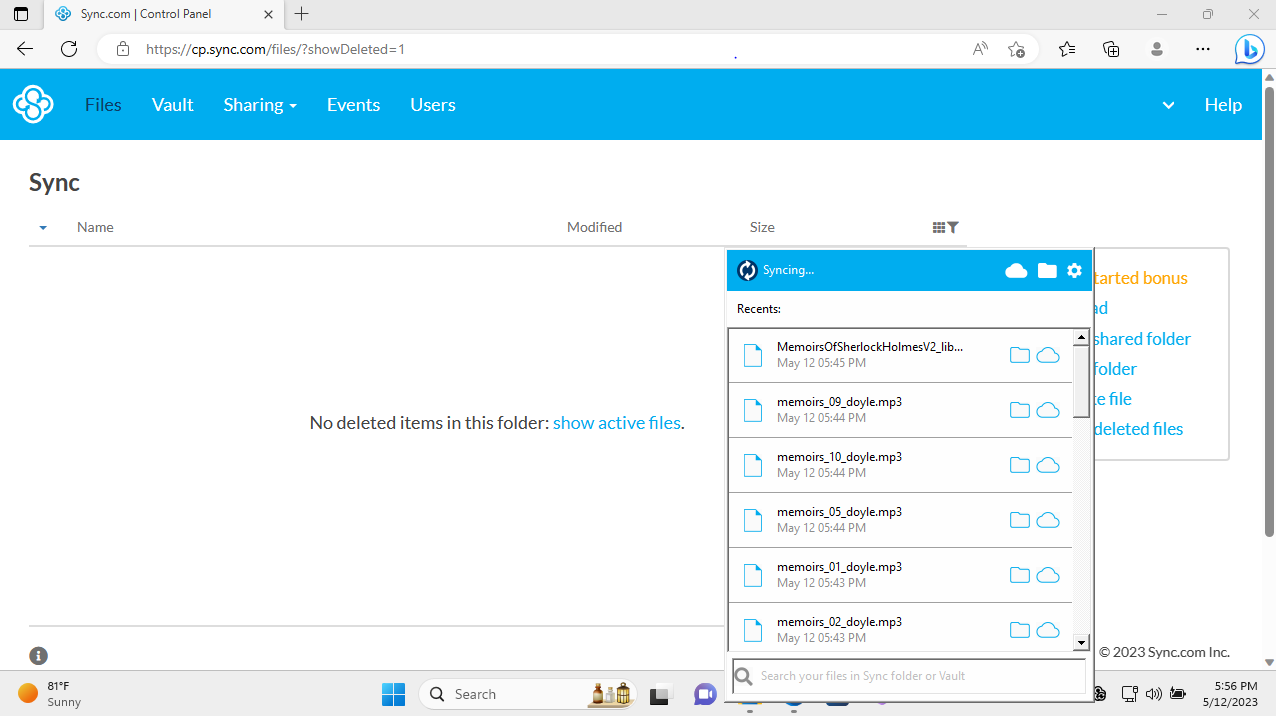

Sync is a competitively priced cloud storage platform that has some solid features. It's only let down by limited customer support and the absence of block-level syncing.

Sync.com is, unsurprisingly, focused on one thing - syncing. To be fair, it doesn't have a whole load of other sparkly features or tools - but it is really good at syncing.

That being said, it's a sophisticated service. It allows you to create read-only versions, download limits, expiring links, and password protected files should you need to. The versioning supports file restoration from the last 180 days, which is really generous (most providers give 30).

It has a simple, easy to use interface (as well as a handy mobile app which automatically syncs photos and videos as they're taken). In our testing, we were particularly impressed with the upload and download speeds - among the best we've seen!

Security features like end-to-end encryption and two-factor authentication are key protections in securing your data.

If you work in an industry with particularly strict laws, all of Sync.com's Pro plans come with GDPR and PIPEDA compliance, and all Pro plans outside of Solo Basic feature HIPAA compliance by default.

If you have a larger team, I would point you towards the Solo Professional and Teams+ Unlimited plans which offer a staggering 365 day version history and recovery.

You can also get access to Microsoft Office 365 integration, and in-app compatibility for Windows File Explorer and Mac Finder.

▶ Read more: Sync cloud storage review.

Performance

One thing I was really impressed by with this platform is the upload and download speeds - but it should be noted that this is of course just for a single folder.

Users can also throttle these speeds where needed, which is handy: if you're running more pressing computing tasks or don't have a particularly good connection, you can make sure Sync doesn't affect this.

Keeping with the theme, Sync also offers advanced sharing controls, including the option of adding password protection and expiry dates on any links you do share.

I was particularly surprised (in a good way) by the team-oriented feature set, including the ability to add comments to file-sharing links, share folders with groups of people, open and edit Office documents direct from your Sync space (if you subscribe to Office 365) and manage all your users from a central console.

If you subscribe to a team account, you'll find that your files get added protection through compliance with regulations like HIPAA, GDPR, and PIPEDA. PIN code locks are also provided for the Sync mobile apps.

Security

Cloud security is an important feature at Sync. The service isn't interoperable with third-party apps (like collaboration tools) and doesn’t make its API available for others to use externally.

Now, this is a bit of a pain for anyone who wants to use some useful integrations, but it reduces the possible attack vectors for outside actors to exploit, which, in this day and age, is pretty important.

Sync has even published a white paper explaining how it uses 2048-bit RSA encryption keys to keep your data safe. Two-factor authentication is also easily enabled for your account.

Customer Support

There is a pretty extensive Help Center which can help with a whole range of things like account setup, creating shared folders, and more. But, if you want VIP support, you'll have to pay for some of the more expensive plans.

I found getting help on the cheaper plans a bit of a task: there's no community forum, and you can only get directly in touch with Sync via an online email form. We did receive a helpful response pretty quickly, though.

Features

Sync's main function is in letting users maintain one single folder on their device (or devices) which is automatically synced with the cloud.

There is versioning offered, but the length of time files are kept for varies depending on which subscription you get.

The lack of more advanced features may disappoint some but Sync has chosen to focus on its core functionality instead.

User Interface

What's fantastic about Sync is the ease of use across its web and mobile versions (ok, they're not particularly stylish, but they work well).

There’s also an option to store files online only, without taking up space on any of your devices.

Price

Sync’s free plan comes with 5GB, but does include the option of raising this to 25GB by inviting friends and completing other tasks.

Personal users can access 200GB of storage for $5 per month, 2TB of storage for $8 per month, or 6TB for $20 per month (when paid annually).

For businesses, there’s a Teams Standard plan that offers 1TB at a cost of $6 per user per month. Enterprises may need the Teams Unlimited plan, however, which delivers unlimited cloud storage for $15 per user per month.

Best cloud storage for security

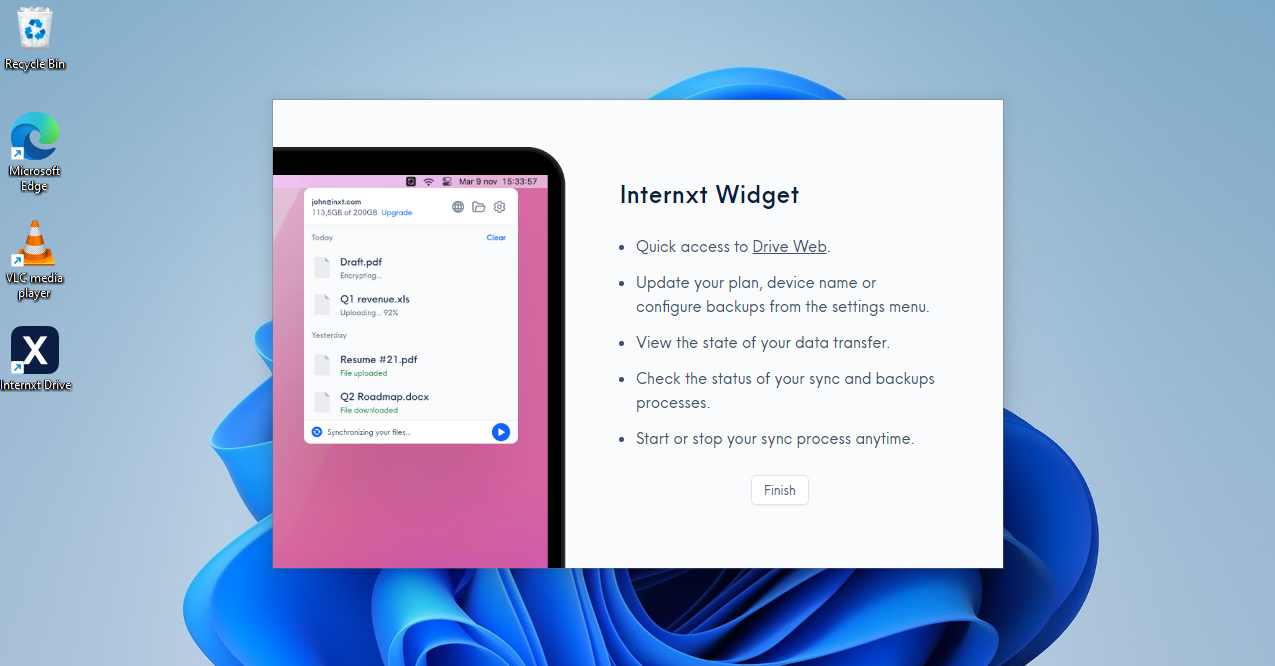

✔️ You want advanced security features: Internxt is a zero-knowledge file storage service focused on absolute privacy

✔️ You need reliable customer support: Chat support is responsive and the Help Center is extensive.

✔️ You want an easy-to-use interface: An intuitive interface most users will be instantly familiar with.

❌ You want free storage without limits: The download limit on shared files, for example, isn't included in the free plan.

❌ You need more advanced features: Features like file versioning, offered by some rivals, aren't available here.

❌ If collaboration is important to you: In-app collaboration isn't on offer.

Internxt has a clean interface and first-rate security credentials. Although some advanced features are lacking, reliable support means this is a cloud storage platform well worth a look.

Check out our in-depth Internxt review for a closer look at this cloud storage platform's excellent security credentials.

Internxt is security-focused at its core, providing cloud storage with multiple security features aimed at making sure your data stays safe.

Paid plans start from just €4.99 a month for 200GB of storage, so worth a look if you're looking to extricate yourself from a big tech provider like Google Drive.

Of course, end-to-end encryption is deployed to protect your data from any snoopers, and the platform stores your files in bits spread all around its networks, which protects from hardware failures. You also get a password generator to save you the hassle of coming up with a new, secure password to protect your accounts, a virus scanner to protect from malware, and a temporary email.

In our testing, we liked the well-designed web view and apps, and found it did a good job of helping us find and manage files.

That being said, Internxt isn't quite the powerhouse of advanced features you find with Dropbox and OneDrive - and the free plan is quite a hassle.

In my opinion, Internxt lacks some of the integrations that its competitors offer, such as Microsoft Office 365 or Google Workspace support.

Internxt's premium customer support for all paid plans is fantastic, and makes a refreshing change from the VIP customer support offered by Sync.com.

For the security conscious, I would highly recommend Internxt thanks to its zero-knowledge and quantum-safe end-to-end encryption, two-factor authentication, password protections, and open-source platform, which is regularly audited by Securitum.

▶ Read more: Internxt cloud storage review.

Performance

Internxt held up pretty well during our speed tests, and it was particularly impressive that the encryption process didn't have a detrimental effect on speed at all.

It took just 1 minute and 55 seconds to upload 625MB of data - which is pretty similar to other cloud storage services we've reviewed without encryption.
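For context, that timing can be turned into an average transfer rate. The short Python sketch below assumes 1MB means one million bytes (so 8 megabits); the function name `throughput_mbps` is just an illustrative helper, and the result depends heavily on the connection used for the test.

```python
def throughput_mbps(megabytes: float, seconds: float) -> float:
    """Average transfer rate in megabits per second (1 MB = 8 megabits)."""
    return megabytes * 8 / seconds


upload_seconds = 1 * 60 + 55  # 1 minute 55 seconds
rate = throughput_mbps(625, upload_seconds)
print(f"{rate:.1f} Mbps")  # about 43.5 Mbps
```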

Security

Now, security is where Internxt really shines. First of all, your data is instantly encrypted when transferred from your device, and is only decrypted once it's downloaded back to the device in question.

You don't have to just take Internxt's word for it either, as the platform is committed to open-source, meaning the app's source code is available on GitHub for any developers to check and inspect.

Your data isn't just stored in a single location - it's broken up and spread across multiple servers, meaning a data breach is unlikely to result in all your files being compromised - mitigating the damage.

Internxt has also passed an independent security audit by Securitum.

Customer Support

All paid Internxt users have access to premium customer support through live chat or e-mail. The Internxt Help Center is also on hand with numerous support articles.

Features

Internxt is available on desktop, tablet, or mobile - with most of the basic features you'd expect from a cloud storage service. You can also stream your video and audio files without downloading them to your computer.

There’s automatic syncing functionality and offline access, both of which will be much appreciated by business users who can’t afford to be without access to their files. Of course, security should also be mentioned here too as it’s one of Internxt’s features that received the most praise.

Windows Explorer and Mac Finder integration makes uploading as simple as a drag and drop. Sync support keeps files up-to-date across all your devices, and you can share files with others via custom links.

User Interface

It's really simple to set up, and we found it was one of the easiest of all the cloud providers we tested.

They even provide an introductory guide to make the process as hassle-free as possible.

It has a clean and well-designed dashboard, and the drag-and-drop process for uploads means there’s little opportunity for any issues to arise.

Price

The free plan now offers just 1GB of storage - which, to be honest, isn't a whole lot. You're best to use this as a sort of free-trial to see how you get on with the service.

When paid annually, individual users can get 1TB for €120, 2TB for €240, or 5TB for €3600. Lifetime plans are also available, priced at $1900 for 2TB, $2900 for 5TB, or $3900 for 10TB.

That being said, as of March 2026 there's a pretty hefty discount on all plans, which will get you roughly 85% off on average, and this sale runs a fair bit.

There are also two business plans available: the Standard and the Pro. The first offers 1TB of encrypted storage for €80 (although currently on sale for €10) and the Pro offers 2TB of storage for €100 (currently on sale for €13).

You can also get their popular 5TB Lifetime Plan at an 80% discount.

Internxt also offers up to 50% off of its lifetime plans occasionally - so the website's worth checking every now and then if you think you'll get value from the long term investment.

| Attributes | Notes | Rating |
|---|---|---|
| Design | An intuitive interface that gives the user plenty of support | ⭐⭐⭐⭐⭐ |
| Ease of use | An introductory guide offered straight away | ⭐⭐⭐⭐ |
| Performance | Good speeds even with encryption enabled | ⭐⭐⭐⭐⭐ |
| Security and privacy | A real strong point. Robust encryption offered here. | ⭐⭐⭐⭐⭐ |
| Customer support | Email support on-hand 24/7 | ⭐⭐⭐⭐ |
| Additional features | Nothing of note, really | ⭐ |
| Time to upload 1GB | 4m24s - very fast | ⭐⭐⭐⭐ |
| Time to download 1GB | 2m29s - very respectable | ⭐⭐⭐ |
| Platforms | PC, Mac, Linux, iOS, Android | ⭐⭐⭐⭐ |
| Versions kept | 1 - "working on making that more competitive" | ⭐⭐ |
| Price | Free plan may be a little misleading, but lots of options here | ⭐⭐⭐⭐ |

Best cloud storage for backups

✔️ You want unlimited cloud backup: You can get unlimited cloud backup for just $9 per month, so you can keep all the files you need, forever.

✔️ You need straightforward operation: Everything is simple and clear - even if you need to recover your entire computer.

✔️ Affordable pricing is important to you: Even before you commit, you can try Backblaze for free for 15 days without giving up any payment card information.

❌ You need fast backups: Backblaze isn’t particularly fast - although it does intentionally throttle its speeds to avoid user disruption - but this could be a problem for some.

❌ You need more than one device backed up: Backblaze works on a one-device-per-license approach. You can purchase multiple licenses, of course, but you won't receive a discount for doing so.

❌ You want support for network drives: These aren't included, although you can back up external hard drives and portable SSDs.

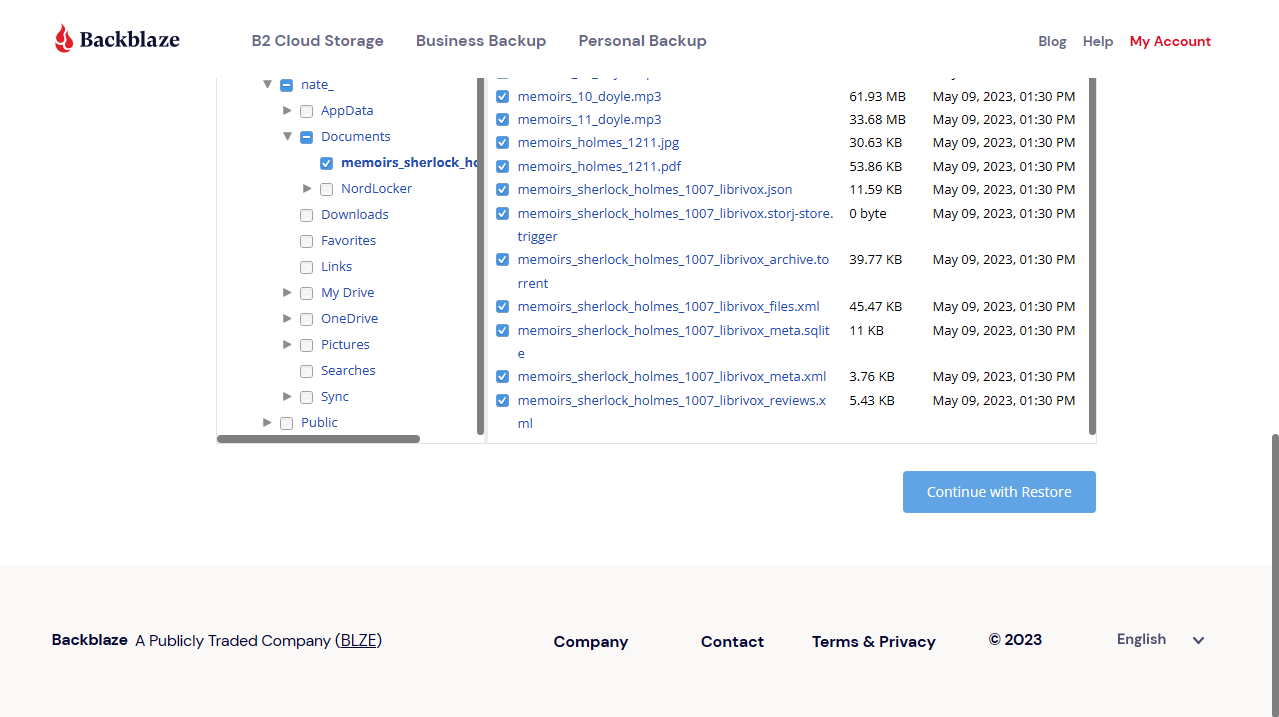

Backblaze is a great backup tool that is comprehensive and secure, working just as well for personal or business devices.

Backblaze is a high-powered cloud backup service that provides unlimited storage with no file size limits for a very fair price.

Install the Backblaze desktop app and it immediately scans for important files, and begins uploading them. You're able to take manual control, if you like, but we found it simpler to let Backblaze handle everything automatically.

The initial transfer of data to the web can take a long time, but our tests found impressive upload speeds kept delays to a minimum. And on the download front, if you need your data in a hurry, Backblaze will ship up to 8TB to your door on a USB drive.

The focus on backups means there's no file syncing, no clever collaboration tools, and only the simplest of file-sharing options. You can only protect one computer per account, too, and network drives aren't supported.

As you might have guessed from the name, Backblaze is my recommendation for those looking for a robust cloud backup option, rather than dedicated cloud storage. Backblaze does offer a B2 cloud storage service, but this is more aimed at enterprises than individual users.

Backblaze takes particular pride in boasting about its data center security, noting that it has 24-hour staff, biometric security, and redundant power to keep your data safe.

▶ Read more: Backblaze review.

Price


For the Backblaze personal plan, you can access unlimited cloud storage for $9 per month, or grab a 13% discount by paying $189 for 2 years. Alternatively, you can pay per year at $99.

There's also a free 15-day trial, which you can access without having to hand over your financial details. After that, there's no free plan, but you can get three months free on an annual plan.
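To compare the three billing options quoted above on equal terms, here is a minimal Python sketch. It uses only the prices stated in this section; the function and variable names are illustrative assumptions, and the two-year saving works out at 12.5%, which is presumably where the rounded 13% figure comes from.

```python
# Prices quoted above for the Backblaze personal plan (USD).
MONTHLY = 9.00     # billed every month
YEARLY = 99.00     # billed once a year
TWO_YEAR = 189.00  # billed once every two years


def effective_monthly(total_price: float, months: int) -> float:
    """Average cost per month over the billing period."""
    return total_price / months


def discount_vs_monthly(total_price: float, months: int) -> float:
    """Percentage saved compared with paying the monthly rate for the same period."""
    full_price = MONTHLY * months
    return (full_price - total_price) / full_price * 100


plans = {"monthly": (MONTHLY, 1), "yearly": (YEARLY, 12), "two-year": (TWO_YEAR, 24)}
for name, (price, months) in plans.items():
    per_month = effective_monthly(price, months)
    saving = discount_vs_monthly(price, months)
    print(f"{name:>8}: ${per_month:.2f}/month, {saving:.1f}% saving")
```

The same two helpers can be reused for any other provider in this guide by swapping in its monthly and prepaid prices.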

As for Backblaze's business offerings, you can get unlimited endpoint backup for $99 per year with a 365-day version history and no restrictions on the number of workstations.

Backblaze also offers an enterprise plan, but you will have to contact sales to get a price.

User Interface

The Backblaze interface is another strength of the platform. While the options are somewhat limited, the user experience has a clear focus.

This includes a useful file manager feature, which makes it easy to search and sort through the files you have stored in the platform. The interface is minimal, which is both a strength and a weakness - especially if you are used to some of Backblaze’s competitors that offer more advanced functionality.

Features

If you also need a VPN to protect yourself online, you can alternatively get Backblaze completely free for a year when you sign up for our #1 favorite, ExpressVPN (and you get three extra months of ExpressVPN protection, too).

| Attributes | Notes | Rating |
|---|---|---|
| Design | A straightforward design with clear navigation. | ⭐⭐⭐⭐ |
| Ease of use | Simple and clear. | ⭐⭐⭐⭐⭐ |
| Performance | Not the quickest, possibly due to deliberate throttling. | ⭐⭐⭐ |
| Security and privacy | Encryption and 2FA make this a strong point. | ⭐⭐⭐⭐⭐ |
| Customer support | Backblaze operates a ticket system, with business-hour live chat, and additional paid support options. | ⭐⭐⭐⭐ |
| Additional features | Choose storage location (US and EU) | ⭐⭐⭐ |
| Time to upload 1GB | 7m21s - a little slow | ⭐⭐ |
| Time to download 1GB | 2m52s - not bad | ⭐⭐⭐ |
| Platforms | PC, Mac, iOS, Android, Linux | ⭐⭐⭐⭐ |
| Versions kept | Unlimited | ⭐⭐⭐⭐⭐ |
| Price | Competitive and zero discrepancy between personal and business users. | ⭐⭐⭐⭐ |
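The two transfer times in the table are easier to compare with your own connection when expressed as average throughput. The sketch below is a rough conversion only: it assumes 1GB means 1,000MB, and the helper names are illustrative rather than part of any Backblaze tool.

```python
def parse_duration(text: str) -> int:
    """Convert a string such as '7m21s' into a number of seconds."""
    minutes, seconds = text.rstrip("s").split("m")
    return int(minutes) * 60 + int(seconds)


def throughput_mbps(size_gb: float, duration: str) -> float:
    """Average throughput in megabits per second (assumes 1GB = 1,000MB)."""
    megabits = size_gb * 1000 * 8
    return megabits / parse_duration(duration)


# Times measured for a 1GB transfer, taken from the table above.
print(f"Upload:   {throughput_mbps(1, '7m21s'):.1f} Mbps")
print(f"Download: {throughput_mbps(1, '2m52s'):.1f} Mbps")
```

If the result is well below your line speed, the bottleneck is more likely the service (or its deliberate throttling) than your connection.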

Best cloud storage for Windows file management


✔️ You want reasonable pricing plans: IceDrive's pricing is attractive, with a 10GB free plan, in addition to three subscription tiers: Lite, Pro, and Pro+.

✔️ You need a virtual drive: Assigning itself the letter 'I:' in Windows, IceDrive can be used as a virtual drive, with all operations feeling as fast as they would for files on your own hard drive.

❌ You're a Mac or Linux user: IceDrive's virtual drive offering, one of its standout features, is only available with the Windows version.

❌ You need more collaboration features: IceDrive has little to offer in the way of collaboration, outside of setting passwords and expiry dates for shared content.

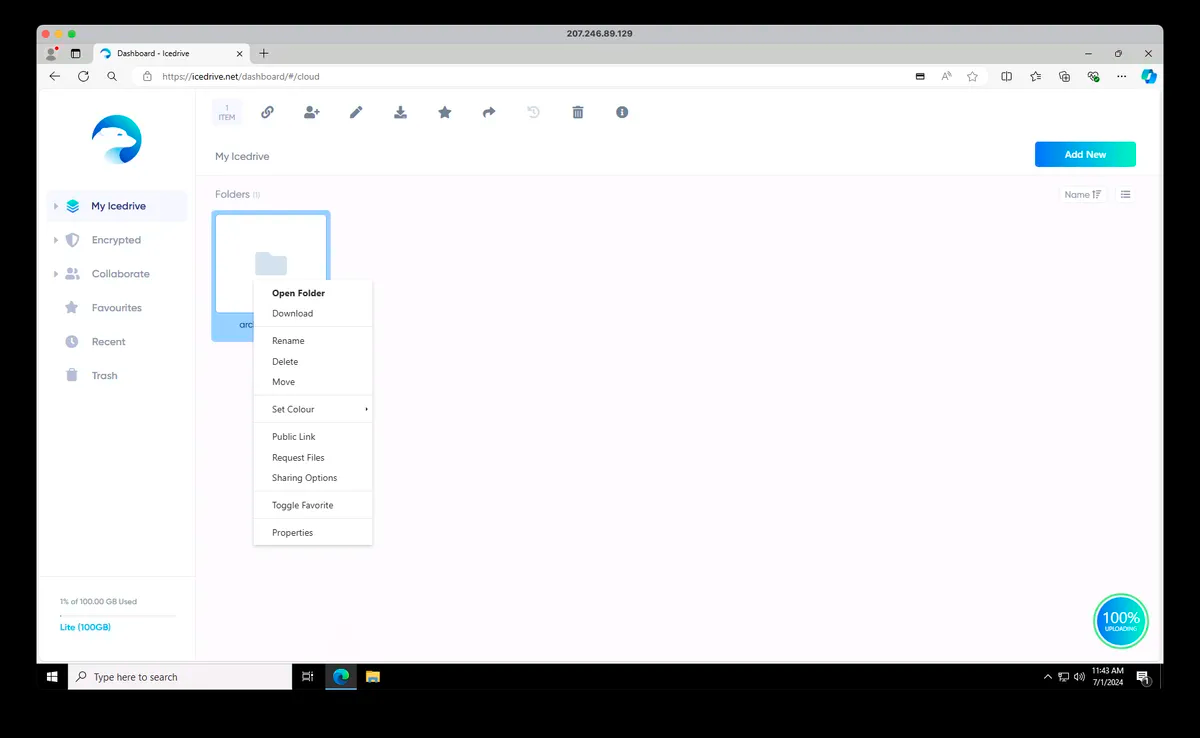

IceDrive is a decent cloud storage platform, boasting a virtual drive for Windows users to employ.

UK-based IceDrive has only been in the cloud storage business for a few years, but its groundbreaking file management features more than justify its place in our 'Best...' list.

Windows users can browse their storage space from Explorer, for example, moving, renaming, opening, and even editing files, just like working on a local drive. Install the Windows, Mac or Linux app and you can also preview documents and stream media files without having to download them first.

The clean and easy-to-navigate browser interface is so simple that even newbies will figure it out in moments. The desktop and mobile apps have similar functionality, but we found inconsistencies in design and layout made them a little more awkward to use.

You also won't be restricted by the number of devices you use, as IceDrive offers unlimited device access. The one limit you may run into is the bandwidth cap.

▶ Read more: IceDrive review

Security


IceDrive encrypts data on your device before it's transferred, and its zero-knowledge approach ensures that only you can decrypt and view your data. IceDrive is also the only cloud storage provider to use the Twofish encryption algorithm, which matches the security of other 256-bit algorithms.

Two-factor authentication is available to all, while SMS authentication is only available to premium customers. We don't recommend using the latter, as text messages are far easier to intercept than one-time passwords generated by reliable authenticator apps.
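For readers curious how authenticator apps produce those one-time passwords, here is a minimal Python sketch of the standard time-based one-time password (TOTP) scheme from RFC 6238, using only the standard library. It is a generic illustration, not IceDrive's implementation, and the function name and defaults are assumptions chosen for the example.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, timestamp: Optional[float] = None,
         step: int = 30, digits: int = 6) -> str:
    """Generate a time-based one-time password (RFC 6238, HMAC-SHA1)."""
    if timestamp is None:
        timestamp = time.time()
    # The moving factor is the number of whole time steps since the Unix epoch.
    counter = int(timestamp // step)
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    # Dynamic truncation: the low 4 bits of the last byte pick a 4-byte window.
    offset = digest[-1] & 0x0F
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


# RFC 6238 test secret; the code changes every 30 seconds.
print(totp(b"12345678901234567890"))
```

Because the code is derived from a secret stored on your device and the current time, nothing is sent over the phone network, which is why it is harder to intercept than an SMS.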

Price:

A 10GB free plan gives you an easy way to get started despite restrictions (no client-side encryption, plus a 50GB/month bandwidth limit). If you need more, annual plans cost $4.99 a month for 100GB, $7.99 a month for 1TB, and $14.99 for 3TB.

That was the pricing when we last checked in February 2025, and these prices have remained fairly consistent for the past few months. There are savings to be had when buying in for a year or two, but the value of that depends on your use case.

However, IceDrive is now once again offering lifetime plans after revising its pricing structure. Right now, $299 will get you 512GB of lifetime storage, 2TB is $479, and 10TB is $1199.

Those prices are okay, but what we really like about this structure is that additional data allowances can be stacked on top with subsequent payments, currently $79 for an additional 128GB, 512GB for $199, and 2TB for $399.

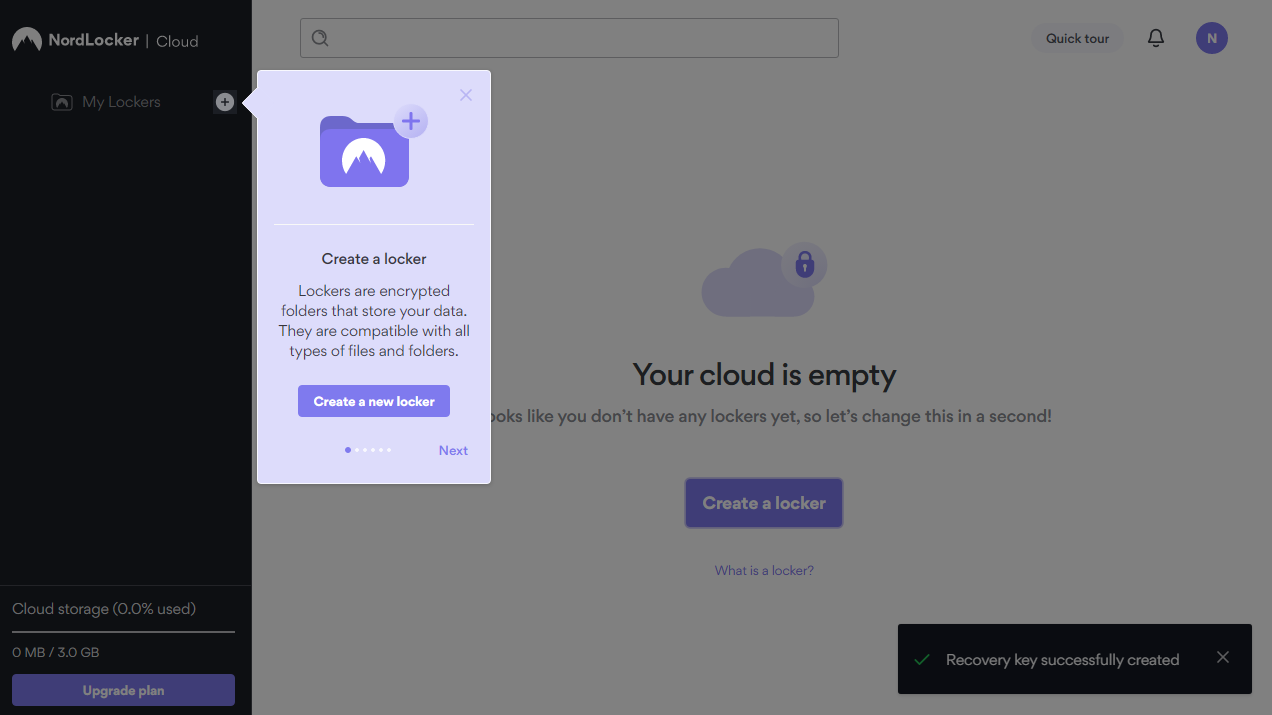

Best cloud storage for ease of use


✔️ You want a slick interface: One of the simplest cloud storage platforms to use, NordLocker's drag-and-drop functionality makes this software extremely straightforward for novice or experienced users.

✔️ You like free storage: 3GB of storage is offered completely free of charge. And subscription plans are reasonable too.

❌ File recovery is important to you: There's no trash folder with NordLocker, so when files are deleted, they are gone for good.

❌ You need mobile access: Although there are now Android and iOS apps, these are somewhat misleading. In fact, these apps simply redirect users to a Safari-based browser portal. Users may find the lack of a dedicated mobile app disappointing.

NordLocker is a reliable, feature-rich cloud storage platform that business owners will particularly enjoy.

NordLocker is hard to beat for privacy in the cloud storage space: it comes from the people behind NordVPN, one of the best VPNs around.

The service scores well on the fundamentals. We found NordLocker's leading-edge encryption extremely quick, upload times were reasonable, and the addition of multi-factor authentication protects your account from attackers.

▶ Read more: NordLocker review.

Usability: NordLocker is one of the easiest services to use. The desktop apps enable uploading, downloading, and managing your files with little more than a drag and drop.

The mobile apps are just a mobile browser running inside an application, but that's not an obstacle to functionality.

Security: With its zero-knowledge encryption system, not even a NordLocker security worker can decrypt your stored files. But that shouldn't be a problem - such is NordLocker's confidence in its security protocols. In fact, the vendor even offered a $10,000 reward to any individual that could break into its encrypted locker.

Price: NordLocker is offering 53% off its 2TB plan when billed annually, coming to $6.99 a month, or 40% off 500GB billed annually for $2.99 a month.

A free plan gives you 3GB of storage to play with, but that's disappointing compared with the 5-10GB (or Google Drive's comparatively mammoth 15GB) allocated elsewhere.

Best cloud storage for users in Microsoft's ecosystem


✔️ You're a big Windows user: Microsoft OneDrive offers close integration with Windows, Microsoft 365, and many other apps.

✔️ You value collaboration: That Microsoft 365 integration makes working on documents live, remotely, and with the help of its artificially intelligent (AI) Copilot tool a cinch.

✔️ Design is important to you: An excellent interface from the people that know a thing or two about what tech users like and what they don't.

❌ You're a macOS user: Although OneDrive does work with Apple's operating system, it's simply not as seamless.

❌ Free storage is important to you: Although the 5GB offered here for free isn't meager by any means, it's beaten out by the flat rates offered by a good number of other cloud storage platforms.

Microsoft OneDrive doesn't have the features of some of the cloud storage competition, but its Windows and Microsoft 365 integration, Xbox support, and simple interface make this a great solution for anyone committed or open to the Microsoft ecosystem.

OneDrive comes built into Windows 10/11, for instance, so there's nothing to install. It shows up in Explorer, and you can drag and drop files to sync them to the cloud and your other devices.

Features: Microsoft 365 support includes auto-saving to the cloud, and collaboration options include the ability to work on documents simultaneously with others, or share them via OneDrive-generated links.

Usability: Microsoft hasn't forgotten other platforms, but we found they delivered mixed results on the usability front. The web interface covers the basics, for instance, but doesn't have the simplicity or style of Google Drive or Dropbox.

The Mac client is more straightforward, though we found some conflicts with iCloud. The elegant and intuitive mobile apps are the real highlight, though, with strong photo and video syncing tools and solid security.

Price: OneDrive's free plan offers only 5GB, half the allowance you'll get with some providers. You can pay $19.99 a year to upgrade, but that still only gets you 100GB. Microsoft 365 users get the best value, with Outlook, Word, Excel, and PowerPoint, and 1TB of OneDrive storage from $70 a year.

▶ Read more: Microsoft OneDrive review.

Best budget option


✔️ You want a free online office suite: the likes of Google Docs and Sheets have become commonplace for firms that don't want to pay for Microsoft's proprietary software.

✔️ You want a large amount of free storage: Google Drive allows for 15GB of free storage - for some businesses, that may be enough.

✔️ You're looking for value per gigabyte: These, for our money, are some of the best price plans available for cloud storage right now. Given that Google is an absolute behemoth and able to sustain itself, this is unlikely to change.

❌ You don't want to be tied into Google's ecosystem: If you're more of a Windows or macOS user, this may not be the right cloud storage platform for you.

❌ You want to try out a paid plan: Google Drive plans are non-refundable.

Google Drive comes with a great pedigree and a name you can trust. Its generous free plan is another huge draw.

Whatever platform you choose, we found Google Drive to be intuitive and easy to use. The Android and iOS apps are a close match for the browser view, ensuring smooth operations even when you're regularly switching devices.

We've been a little disappointed by previous versions of the desktop apps, but the latest edition is just as easy to operate as Drive apps on other platforms.

Google Drive's Android and Google Workspace integration make it an ideal cloud storage platform for staunch Google users, but a generous 15GB free tier, and a host of desktop, mobile and Drive-supporting third-party apps, ensure it carries plenty of appeal for newcomers.

▶ Read more: Google Drive review.

Price: Business Workspace plans are billed per user per month, require a one-year commitment and, in offering far more than cloud storage, cost anywhere between around $8 and $25.

These provide up to 5TB of storage per user, and allow for large scale business meetings up to 1000 users, as well as in-domain live-streaming. It's comprehensive, but probably only worth it for large companies. Enterprises are encouraged to contact Google's sales team privately for trials and custom quotes.

In terms of personal pricing, you can choose between 100GB or 2TB personal plans, which are, as of February 2025, priced at $1.99 and $9.99 per user per month respectively, with up to 16% saved when billed annually. An 'AI Premium' plan offers 2TB of storage alongside access to Google's Gemini AI service, but it costs $19.99.

Features: Small team management is also on the cards, as you can share your allowance with up to five people. The service more than delivers on the cloud storage essentials, with versioning support, offline access, syncing, and file sharing all here.

Business users will love the Google Sheets, Docs and Slides integration, for instance, where you can create, edit and share cloud files with others, without ever downloading anything.

Security: Drive doesn't have end-to-end encryption, which means that, in theory, Google could see your files. So, if you're not a Google fan, tying yourself so tightly into the company's ecosystem may not appeal.

But for everyone else, it offers a slick and powerful free service, and plenty of benefits if you upgrade.

Best cloud storage comparison table

| Cloud storage services | Free plan | Best plan | Storage capacity | Online editing and collaboration | Offline access | Device backup | File versioning | Platforms available |
|---|---|---|---|---|---|---|---|---|
| IDrive | 10GB | $4.98 for one year of 10TB storage | Up to 100TB | Only through Microsoft Office | Yes | Yes | Yes | Web, Windows, Linux, iOS, Android |
| pCloud | 10GB | $399 for 2TB for life | 500GB - 10TB | Yes | Yes | Yes | Yes | Web, Windows, Mac, Linux, iOS, Android |
| Sync.com | 5 - 25GB | Teams Unlimited ($15 per user a month), Solo Basic ($6 a month for one user) | 2TB-6TB for individuals, potentially unlimited for businesses | Yes | Yes | Yes | Yes | Web, Windows (64 and 32 bit), Mac, iOS, Android |
| | 10GB | Lifetime €135 for 2TB | 20GB-20TB per user | Yes | Yes | Yes | Yes | Web, Windows, Mac, Linux, iOS, Android |
| Backblaze | None | Free unlimited storage for a year with ExpressVPN | Potentially unlimited | Yes | Yes | Yes | Yes | Web, Windows, Mac, Linux, iOS, Android |
| IceDrive | 10GB | $7.99 a month for 1TB | 100GB-10TB | Yes - local changes are synced | Yes | Yes | Yes | Web, Windows, Mac, Linux, iOS, Android |
| NordLocker | 3GB (personal plan), 2 week free trials for business plans | $6.99 a month for 2TB | 150GB-5TB - custom plans also available | No | Yes | Yes | Yes | Web, Windows, Mac, iOS, Android |
| Microsoft OneDrive | 5GB | A Microsoft 365 Personal subscription, 1TB for $70 a year | 100GB-6TB | Yes - with Microsoft Office files | Yes | Yes | Yes | Windows, Mac, iOS, Android |
| Google Drive | 15GB | 100GB for $15.99 a year for individuals and teams of up to 5 | 200GB-2TB, Unlimited Business Plans available | Yes | Yes | Yes | Yes | Web, Windows, Mac, iOS, Android |

Cloud storage services: Honourable mentions

There are some providers that, for one reason or another, aren't on this list. Some are on our radar and have impressed us, but we haven't had a chance to review them yet and so we don't feel qualified to comment with an expert opinion.

There are also some providers that are a little too specialised for this guide. CloudSpot offers storage as part of a store system for freelance photography businesses, while Filepass prides itself on supporting collaboration between creatives and clients.

Meanwhile, for free cloud storage, and a generous 20GB starter allowance that bests even Google Drive's offering, I like Blomp.

I will, of course, keep adding to this list as I gather more info on newer cloud storage services that go up against the larger companies: in the short term, they can often offer more features for less money.

Frequently asked questions

What is cloud storage?

The saying 'There is no cloud storage, it's just someone else's computer' does have an element of truth to it. Cloud storage is a remote virtual space, usually in a data center, which you can access to save or retrieve files.

Trusting your cloud storage is important, so most providers will go to lengths to prove that their service is safe, by using secure encrypted connections, for example.

Maximum security data centers ensure no unauthorized person gets access to their servers, and even if someone did break in, leading-edge encryption prevents an attacker viewing your data.

There are dozens of services which are powered by some form of cloud storage. You might see them described as online backup, cloud backup, online drives, file hosting and more, but essentially they’re still cloud storage with custom apps or web consoles to add some extra features.

Free vs Paid cloud storage: which is right for you?

If your backup budget is low (or non-existent) then opting for free cloud storage might appeal, but is it the right choice for you?

Capacities are often very low (NordLocker's free plan has just 3GB, for instance), which is likely to rule out free plans for any heavy-duty tasks. Some free options may have other limits, or leave out important features from the paid plans. IceDrive's 10GB plan looks generous, for instance, but you can only use 3GB bandwidth a day, and there's no client-side encryption.

These may not be deal-breaking issues, at least if your needs are simple, and you can do better with a little work. Signing up with one provider doesn't mean you can't use another, for instance: set up IDrive for one task, Google and OneDrive for a couple of others, and suddenly you've 30GB to play with.

Free plans aren't only useful for bargain hunters, though. However much you've got to spend, the real advantage of a free plan is it gives you time to try out different platforms before you commit.

Cloud storage glossary

Baffled by cloud storage babble? We've got all the key terms you need to know.

AES-256: one of the strongest encryption algorithms around, AES-256 is often used to protect cloud storage and ensure no-one else can access your data.

At-rest encryption: encrypts your data while stored on a device, protecting it from snoopers. (See In-transit encryption.)

Cloud: servers that are accessed over the internet, along with the software, databases, computing resources and services they offer.

Continuous data backup: a clever technology which automates backups by looking out for new and changed files, and uploading them as soon as they appear.

Data center: one or more physical facilities which house networked computers and the resources necessary to run, access and manage them: storage systems, routers, firewalls and more.

Egress: the transfer of data from a network to an external location, such as downloading a file from a cloud storage account. (See Ingress.)

End-to-end encryption: a method of communicating data which ensures no-one but the sender and receiver can read or modify it. In cloud storage terms, it means your files can't be intercepted and accessed by anyone, even your provider.

Ingress: the transfer of data into a network, such as uploading a file to a cloud storage account. (See Egress.)

In-transit encryption: encrypts your data before transmission to another computer, and decrypts it at the destination. Even if an attacker can intercept your communications, they won't be able to read your files. (See At-rest encryption.)

Private cloud: a cloud computing environment which offers services to a single business only. A business might use its own private cloud storage to ensure no other company gets to handle its data, for instance, improving security. (See Public cloud.)

Private encryption key: a method of encryption which means only you can access your encrypted files. This guarantees your security, but is also a little risky, because if you forget your password, the provider can't help you recover it, and your data is effectively lost.

Public cloud: a network of computers which offers cloud services to the public via the internet. Google and Microsoft are examples of public cloud providers. (See Private cloud.)

S3: a fast, flexible cloud storage type invented by Amazon and used by major companies like Netflix, Reddit, Ancestry and more.

Sync: the process of keeping a set of files up-to-date across two or more devices. Edit a file on one of your devices, for instance, and a cloud storage service which supports syncing will quickly upload the new version to all the others.

Two-factor authentication: a technology which requires you to enter an extra piece of information (beyond just a username and password) when logging into a web account: a pin sent by email or SMS, a fingerprint, a response to an authenticator app. It's an extra login hassle, but also makes it much more difficult for anyone to hack your account. Sometimes called 2FA or two-step authentication.

Versioning: the ability to keep multiple versions of a file in your cloud storage area. Accidentally delete something important in a document last Tuesday, and even if you've updated the file several times, you may still be able to recover the previous version.

Zero-knowledge encryption: a guarantee that no-one else, not even your cloud provider, has the password necessary to decrypt your data. That's great for security, but beware: if you forget your password, the provider can't remind you, and your files will be locked away forever.
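To make the 'Continuous data backup' and 'Sync' entries more concrete, the Python sketch below shows one simple way a client could spot new and changed files: hash every file, then compare against the previous snapshot. Real services use their own (usually more efficient) methods, so treat the function names and approach here as illustrative assumptions only.

```python
import hashlib
from pathlib import Path


def snapshot(folder: Path) -> dict[str, str]:
    """Map each file's relative path to a SHA-256 digest of its contents."""
    return {
        str(path.relative_to(folder)): hashlib.sha256(path.read_bytes()).hexdigest()
        for path in sorted(folder.rglob("*"))
        if path.is_file()
    }


def files_to_upload(previous: dict[str, str], current: dict[str, str]) -> list[str]:
    """Return paths that are new, or whose contents changed, since the last snapshot."""
    return sorted(p for p, digest in current.items() if previous.get(p) != digest)
```

A backup client following this idea would take a snapshot on each pass, upload whatever `files_to_upload` returns, and store the new snapshot for the next comparison.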

How do we test cloud storage services?

There are a number of factors that I look for when considering best-in-class cloud storage services.

When I ask our testers to try out a product, I remind them to look at the upload and download speeds of file transfers. This is a minor component of the overall rating, though, as scores of other factors that cannot be easily mitigated affect your upload and download speeds (contention rate, time of day, and server load, for instance).

The testers also check whether the storage provider can recover deleted files, and whether it keeps multiple versions of files in case users need to undo changes. That's super helpful for the clumsier among us!

They also check, of course, the cost of a service. It's not usually a particularly high-cost investment at first, but these things add up quickly, especially when you factor in additional storage requirements and premium features such as artificial intelligence support on a service that also offers collaboration tools.

Security standards and credentials are also key. Look for a cloud storage provider that can boast the certifications that promise an SLA you can rely on, and safeguards that protect your data.

Last but definitely not least is the level of customer support that a cloud storage service provides, whether it's 24-7 over the phone or web-based only. Your files are important, and knowing that your provider cares too can make a real difference.

▶ Read more detailed coverage: Cloud storage reviews: how we tested them

How to choose the best cloud storage service

Finding the perfect cloud storage solution starts by thinking about your needs. What are you hoping the service will do?

If your goal is to protect all your data from harm, then look for a service with solid backup tools and the ability to access your files from anywhere.

If you'd like to share a group of files - photos, say - across multiple devices, then you'll need quick and easy syncing abilities.

If you're more interested in sharing individual files or folders with others, look for a platform that supports password-protected or time-limited links, anything that helps you stay more secure. Businesses will benefit from collaboration tools, too, allowing users to work on files together, add comments and more.

Pay attention to the figures. Most cloud storage keeps previous versions of your files for up to 30 days, for instance, but that's not always the case. If your provider says it supports 'versioning', that's good, but check the details and see how it really compares to the competition.

You'll want to consider a provider's cost and capacity, too, but be careful. Don't simply opt for a high-capacity plan just because it seems better value: think about whether you really need that much space.

And when it comes to price, browse the small print, look out for hidden charges or fees which jump on renewal, and make sure you know exactly what you're getting.

Credentials around service and security standards are also key. Look for a cloud storage provider that can boast the certifications that promise an SLA you can rely on - and safeguards that protect your data.

In addition, make sure your storage provider offers the scalability you need should you grow - and a flexible pricing model to accompany it. Perhaps the best thing to do when choosing a cloud storage provider is simply to shop around. There’s bound to be a solution that suits your needs - but don’t simply go with the first cloud provider you find.

Tested by:

Dave is a freelance tech journalist who has been writing about gadgets, apps and the web for more than two decades.

Jonas has been working with technology since childhood in the 1970s, starting with BASIC programming on a TRS-80. Today, you'll find him testing security, data, and cloud products alongside Wi-Fi routers and business software.

With several years’ experience freelancing in tech and automotive circles, Craig’s specific interests lie in technology that is designed to better our lives, including AI and ML, productivity aids, and smart fitness.

Mike is a lead security reviewer at Future, where he stress-tests VPNs, antivirus and more to find out which services are sure to keep you safe, and which are best avoided.

Want to learn more about cloud storage?

To learn more about cloud storage, we have plenty of resources covering what it is and how we test it. Aside from free or lifetime deal offerings, TechRadar Pro also covers unlimited cloud storage deals, and the best cloud document storage.

Cloud storage experts recommend using a '3-2-1' backup strategy in order to keep your data safe, so I also recommend taking a look at our guide to the best cloud backup for more information.


Benedict has been with TechRadar Pro for over two years, and has specialized in writing about cybersecurity, threat intelligence, and B2B security solutions. His coverage explores the critical areas of national security, including state-sponsored threat actors, APT groups, critical infrastructure, and social engineering.

Benedict holds an MA (Distinction) in Security, Intelligence, and Diplomacy from the Centre for Security and Intelligence Studies at the University of Buckingham, providing him with a strong academic foundation for his reporting on geopolitics, threat intelligence, and cyber-warfare.

Prior to his postgraduate studies, Benedict earned a BA in Politics with Journalism, providing him with the skills to translate complex political and security issues into comprehensible copy.

- Barclay Ballard

- Ellen Jennings-Trace, Staff Writer