I've gamed on the best graphics cards for the past two years. Here's what I've learned.

If we're being honest, you don't need this much power

As we get closer to the end of this graphics card generation, there is a lot of excitement around what comes next for Nvidia and AMD. I'm certainly one of those people who is eager to see what Team Green and Team Red have in store, especially if they can do more to prioritize energy efficiency and value for the customer rather than go all in on power and performance that no one, not even the planet, can afford.

That said, I've been in a rather privileged position relative to most people: I've been able to game on pretty much every current-gen graphics card for work. Along the way, I've learned a thing or two about the current state of the market for the best graphics cards, and where the technology needs to go in the next generation.

Ray tracing is still a work in progress right now

Ray tracing is a fascinating technology that has huge potential to create stunning life-like scenes by mimicking the way our eyes actually perceive light, but boy howdy is it computationally expensive.

The number of calculations required to realistically light a scene in real time is enormous, which is why real-time ray tracing was long considered practically impossible on consumer-grade hardware. That is, of course, until Nvidia released its Turing architecture with the GeForce RTX 2000-series graphics cards.
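To get a rough sense of that scale, here is a back-of-the-envelope sketch. The function name and the rays-per-pixel figure are illustrative assumptions, not measurements from any game or GPU, and real renderers vary widely in how many rays they cast per pixel.

```python
def rays_per_second(width, height, fps, rays_per_pixel):
    """Rough count of rays traced per second (illustrative only)."""
    return width * height * fps * rays_per_pixel

# Hypothetical workload: 60 fps with 4 rays per pixel
# (say, one primary ray plus a few shadow or reflection rays).
budget_4k = rays_per_second(3840, 2160, 60, 4)
budget_1080p = rays_per_second(1920, 1080, 60, 4)

print(f"4K:    {budget_4k:,} rays/s")
print(f"1080p: {budget_1080p:,} rays/s")
print(f"4K needs {budget_4k / budget_1080p:.0f}x the rays of 1080p")
```

Even under these modest assumptions the count runs into the billions per second at 4K, and each of those rays still has to be tested against the scene's geometry.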

Since these were the first consumer graphics cards with real-time ray tracing, it's understandable that the feature was more of a neat experiment: you really couldn't do much with it while playing without absolutely cratering your frame rate. This is still true, even as we wrap up the Nvidia Ampere generation of cards.

These cards are better able to handle real-time ray tracing, especially at lower resolutions, but you will still need to make a compromise between resolution and ray tracing. For example, no graphics card can ray trace a scene at native 4K resolution without turning it into a complete slideshow, other than the RTX 3090 Ti, which manages about 24 fps in Cyberpunk 2077 with ray tracing turned on.

AMD, meanwhile, is on its first generation of graphics card hardware with real-time ray tracing, and its performance is more or less where Nvidia's Turing cards were when it came to ray tracing, which is to say not awful, but still definitely first-gen tech.

Upscaling the future

So how does anyone effectively play any of the best PC games at high resolutions with ray tracing turned on anyway if even the best gaming PC possible nowadays is going to struggle?

I'm glad you asked, because the truly revolutionary development of the past few years hasn't been ray tracing, but graphics upscaling. Nvidia Deep Learning Super Sampling (DLSS) and AMD FidelityFX Super Resolution (as well as AMD Radeon Super Resolution) have made playing PC games at high resolutions and settings with ray tracing possible.

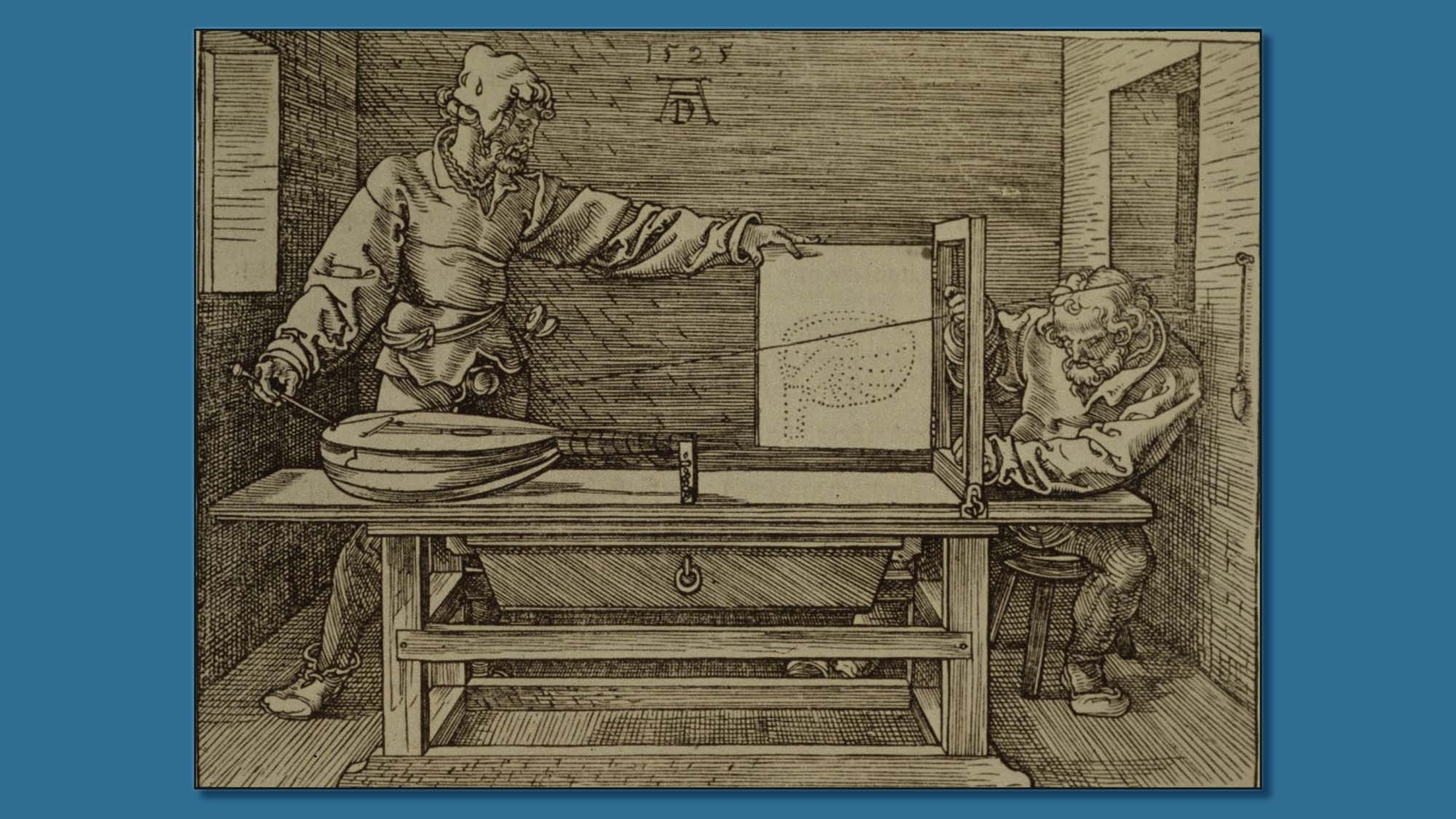

In our slideshow above, you can see how the game looks at 4K with all settings and ray tracing turned up to their ultra presets: without DLSS, with DLSS set to quality, and with DLSS set to performance. I can tell you that the difference isn't really apparent while running the benchmark or playing the game, either.
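For a sense of why upscaling helps so much, the sketch below estimates how many pixels the GPU actually renders before the upscaler reconstructs a 4K image. The per-axis scale factors are assumptions based on commonly cited figures for DLSS presets (roughly two-thirds for quality and one-half for performance); individual games can deviate, and the function name is hypothetical.

```python
def internal_resolution(out_w, out_h, scale):
    """Return the (width, height) rendered before upscaling."""
    return round(out_w * scale), round(out_h * scale)

# Assumed per-axis render scales; not taken from any official API.
PRESETS = {"native": 1.0, "quality": 2 / 3, "performance": 0.5}

for name, scale in PRESETS.items():
    w, h = internal_resolution(3840, 2160, scale)
    share = (w * h) / (3840 * 2160)
    print(f"{name:12s} {w}x{h}  ({share:.0%} of native pixels)")
```

Under these assumptions, performance mode shades only a quarter of the pixels of native 4K, which is where most of the frame rate gain comes from.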

Without upscaling, those with Nvidia GTX 1060s and AMD RX 5700 XTs would have very little reason to upgrade to a new graphics card, honestly.

Some of the best games don't take advantage of this hardware, and those that do can still suck

The thing about games is that they are rarely about the incredible graphics, but are about the experience. The kind of hardware we're seeing now makes for some great looking games, but if they are poorly optimized, what's the point? You end up with a Cyberpunk 2077, a game that launched so broken on PCs that it took a substantial amount of market value off the studio that made it, CD Projekt Red.

Meanwhile, something like Vampire Survivors can pretty much take over Steam even though it looks like it can run on an NES doped up on Adderall, largely because it hits at the core of what makes us want to play games in the first place: we want them to be fun. And the fact is, you don't need an RTX 3090 Ti to have fun, and I think way too many of us forget that.

If Nvidia and AMD were smart, they would focus less on making cutting-edge graphical improvements and more on efficiency and value, so that those gamers who do want to get the best graphics and performance out of a game can do that without having to spend a fortune. Gamers are going to be less and less able to pay for the best Nvidia GeForce graphics cards and the best AMD graphics cards in the years ahead, and it would honestly suck to keep seeing an already expensive hobby get even more inaccessible.

John (He/Him) is the Components Editor here at TechRadar and he is also a programmer, gamer, activist, and Brooklyn College alum currently living in Brooklyn, NY.

Named by the CTA as a CES 2020 Media Trailblazer for his science and technology reporting, John specializes in all areas of computer science, including industry news, hardware reviews, PC gaming, as well as general science writing and the social impact of the tech industry.

You can find him online on Bluesky @johnloeffler.bsky.social
