Samsung denies that its S23 Ultra Space Zoom moon photos are fake

Samsung responds to its latest lunar controversy

Samsung's Space Zoom feature for Galaxy phones has come under the microscope (or perhaps the telescope) again this week, after a Reddit post claimed that the software process involves the fabrication of additional detail. Samsung has now responded to the allegations, disputing the claims in an official quote and a new blog post.

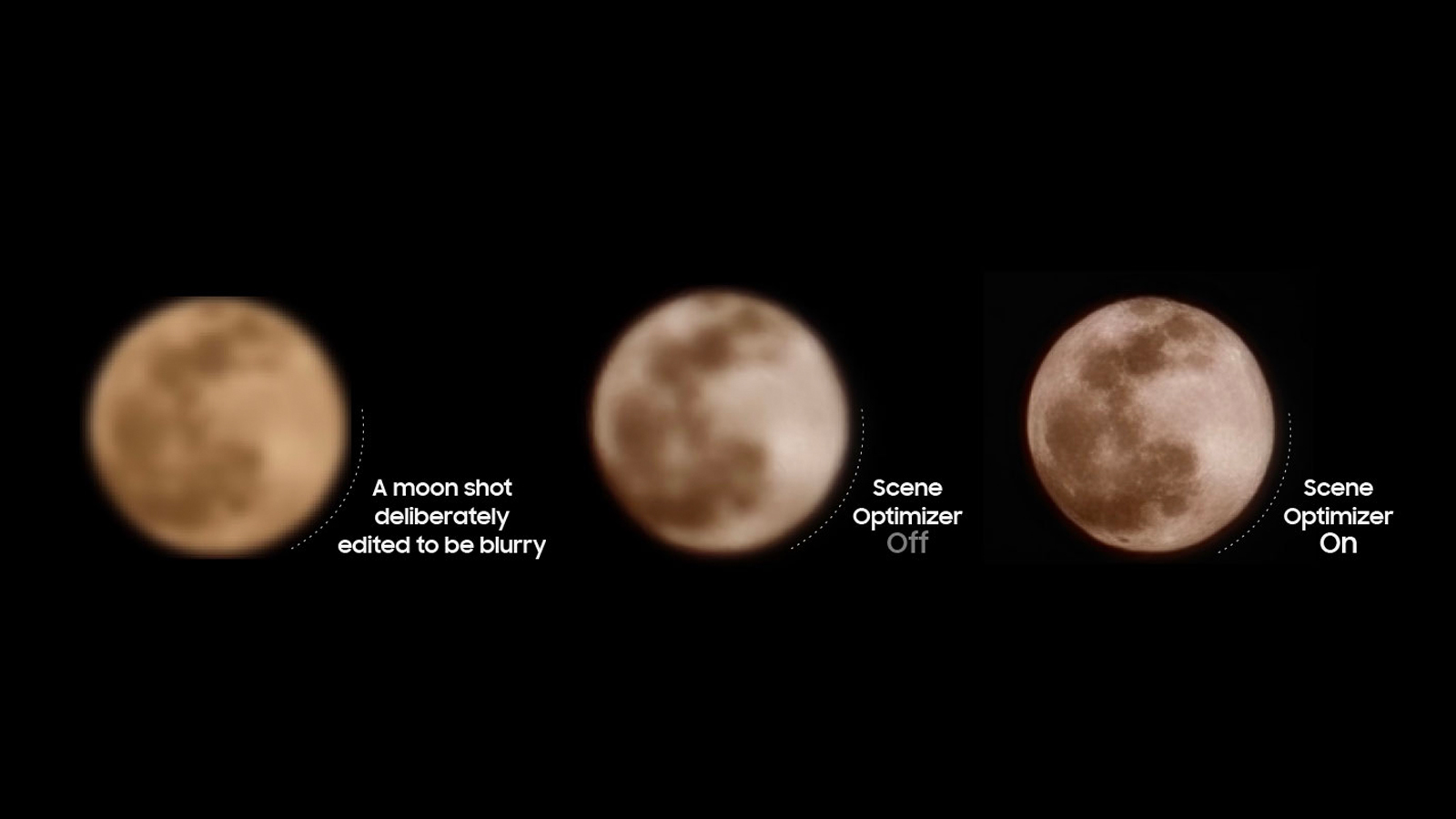

We asked Samsung directly if moon photos taken using phones such as the Samsung Galaxy S23 Ultra involve the overlaying of additional detail or texture that isn't present in the original photos. In an official statement, Samsung said: "When a user takes a photo of the Moon, the AI-based scene optimization technology recognizes the Moon as the main object and takes multiple shots for multi-frame composition, after which AI enhances the details of the image quality and colors. It does not apply any image overlaying to the photo".

Samsung added that this process isn't compulsory, stating that "users can deactivate the AI-based Scene Optimizer, which will disable automatic detail enhancements to any photos taken." However, switching it off also rules out the kind of results that are possible with Scene Optimizer enabled, as the feature goes far beyond adjusting exposure.

These comments echo what Samsung has previously said about its Space Zoom moon shots, which have been reformatted into a new blog post that is similar to the one we saw previously on a Samsung Community board.

The post reiterates that the moon shots are based on "deep learning-based AI technology", but says this is used to "eliminate remaining noise and enhance the image details" rather than to copy and paste extra detail that the camera never captured. Interestingly, Samsung repeatedly refers to the results as "pictures of the moon" rather than photos, which hints at the artificial nature of the processing.

Just another insane moon shot with the Samsung Galaxy S23 Ultra. No tripod, not even full-strength Space Zoom. #SamsungGalaxyS23Ultra pic.twitter.com/PFngh8vcBE (March 5, 2023)

So where does this leave us? The boring answer is that all photography is on a sliding scale between the so-called 'real' kind – photons hitting a camera sensor and being converted into an electrical signal – and the 'fake' kind that Samsung has again been accused of in this latest controversy.

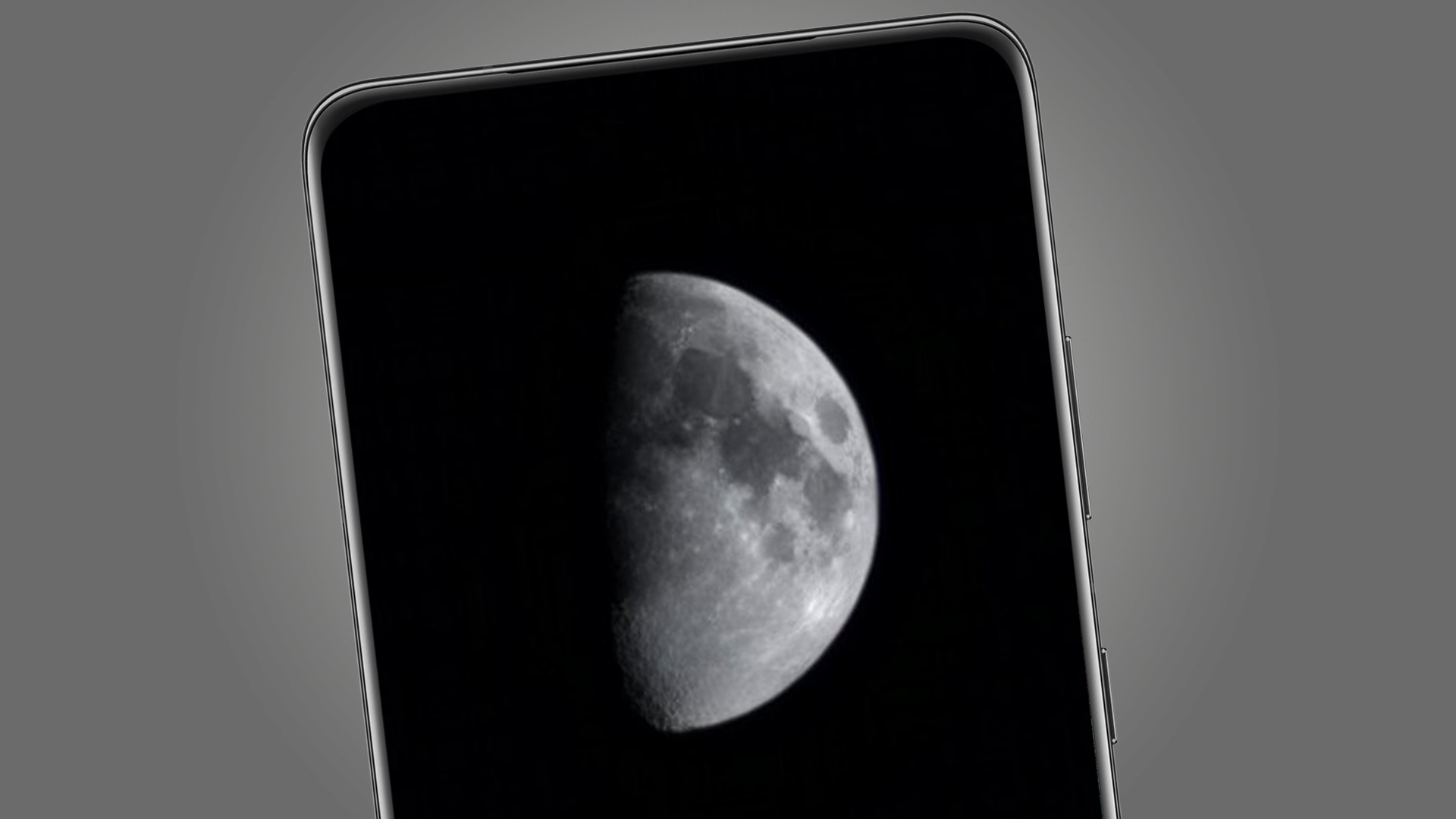

AI-powered modes like Samsung's Scene Optimizer, which has been producing moon shots like the one above since the Samsung Galaxy S21, undoubtedly push photography towards the more artificial end of that scale. That's because it uses multi-frame synthesis, deep learning and what Samsung mysteriously calls a "detail improvement engine" to produce its impressive final results.
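Samsung hasn't published how its multi-frame synthesis works, so the following is only a generic sketch of the underlying idea: averaging several aligned exposures of the same static scene to cancel out random noise. It assumes perfectly aligned frames and simple Gaussian sensor noise, and the scene values and function names (`capture_frame`, `stack_frames`, `rms_error`) are hypothetical. It does not model deep learning or the "detail improvement engine".

```python
import random


def capture_frame(scene, noise_sigma, rng):
    """Simulate one exposure: the true scene values plus Gaussian sensor noise."""
    return [value + rng.gauss(0.0, noise_sigma) for value in scene]


def stack_frames(frames):
    """Average already-aligned frames pixel by pixel (simple mean stacking)."""
    count = len(frames)
    return [sum(pixels) / count for pixels in zip(*frames)]


def rms_error(estimate, truth):
    """Root-mean-square difference between an estimate and the true scene."""
    return (sum((e - t) ** 2 for e, t in zip(estimate, truth)) / len(truth)) ** 0.5


rng = random.Random(42)
scene = [0.2, 0.5, 0.8, 0.5] * 250  # hypothetical 1,000-pixel brightness profile
frames = [capture_frame(scene, 0.1, rng) for _ in range(16)]

single = rms_error(frames[0], scene)
stacked = rms_error(stack_frames(frames), scene)
print(f"single frame RMS error:   {single:.3f}")
print(f"16-frame stack RMS error: {stacked:.3f}")
```

Because independent random noise partly cancels out when frames are averaged, the error of the stacked result shrinks roughly with the square root of the number of frames (around four times lower for 16 frames). Simple stacking like this can only recover detail that is genuinely present in the captured frames; it cannot add detail the sensor never recorded.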


We still don't know exactly what is happening in that engine, and it's fair to say that extra moon detail is conjured from the very limited information captured by your Galaxy's camera. But Samsung still disputes that this detail is simply applied to or overlaid on Space Zoom moon photos.

Tellingly, Samsung gives a nod to the Reddit controversy at the end of its blog post, where it says that "it continues to improve Scene Optimizer to reduce any potential confusion that may occur between the act of taking a picture of the real moon and an image of the moon".

Analysis: a debate with blurry edges

Samsung's response isn't detailed enough to settle the debate about whether or not its Space Zoom photos are 'fake', as that's really a matter of opinion. But it does counter the suggestion that it's simply slapping extra detail and texture over your shots en masse.

The issue with the debate is that every digital photo – even a raw file – is a fabrication of some kind. Each photosite on a typical sensor records only one color, so during the demosaicing process, when full red, green and blue values are created for every pixel, a process called interpolation estimates each pixel's missing values from its neighbors.
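To illustrate that guesswork, here is a minimal sketch of the simplest textbook approach, bilinear interpolation, applied only to the green channel. It assumes an RGGB Bayer layout and a mosaic of at least 2x2 pixels; real cameras and phone pipelines use more sophisticated, edge-aware demosaicing, and the function names here are hypothetical.

```python
def is_green(row, col):
    """In an RGGB Bayer layout, green photosites sit where row + col is odd."""
    return (row + col) % 2 == 1


def interpolate_green(mosaic):
    """Estimate a full green channel from a single-channel RGGB mosaic.

    Green photosites keep their measured value. At red and blue sites the
    green value was never measured, so it is guessed as the mean of the
    up/down/left/right neighbours, which are all green in an RGGB layout.
    """
    height, width = len(mosaic), len(mosaic[0])
    green = [[0.0] * width for _ in range(height)]
    for r in range(height):
        for c in range(width):
            if is_green(r, c):
                green[r][c] = float(mosaic[r][c])
                continue
            neighbours = [
                mosaic[nr][nc]
                for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1))
                if 0 <= nr < height and 0 <= nc < width
            ]
            green[r][c] = sum(neighbours) / len(neighbours)
    return green


# Hypothetical 3x3 mosaic: green samples are 2, 4, 6 and 8; the rest are red/blue.
mosaic = [
    [0, 2, 0],
    [4, 0, 6],
    [0, 8, 0],
]
green = interpolate_green(mosaic)
print(green[1][1])  # 5.0, the mean of the four green neighbours 2, 4, 6 and 8
```

Every value produced at a red or blue site is an estimate rather than a measurement, which is the sense in which even a conventionally processed photo contains "guessed" data.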

Once you add multi-frame processing and AI sharpening into the mix, it's clear that every photo is artificial (and based on processing guesswork) to a large extent. But the question raised by this debate is whether Samsung's phones have moved to the point where some of their photos – in particular, their moon shots – have become completely detached from the act of capturing photons.

That's a debate that will likely never be settled. AI algorithms fill in details based on patterns they see when trained on a vast dataset of similar photos, but Samsung says that what it isn't doing is retrieving a previous image of the moon to overlay on top of your blurry shot. Whether or not that process is acceptable to you is something for you to decide when you next see a glorious full moon and only have your Galaxy smartphone to hand.

Mark is TechRadar's Senior news editor. Having worked in tech journalism for a ludicrous 17 years, Mark is now attempting to break the world record for the number of camera bags hoarded by one person. He was previously Cameras Editor at both TechRadar and Trusted Reviews, Acting editor on Stuff.tv, as well as Features editor and Reviews editor on Stuff magazine. As a freelancer, he's contributed to titles including The Sunday Times, FourFourTwo and Arena. And in a former life, he also won The Daily Telegraph's Young Sportswriter of the Year. But that was before he discovered the strange joys of getting up at 4am for a photo shoot in London's Square Mile.
