Pixel's Visual Core for third-party apps tested: Here's what we found

Does this thing really work?

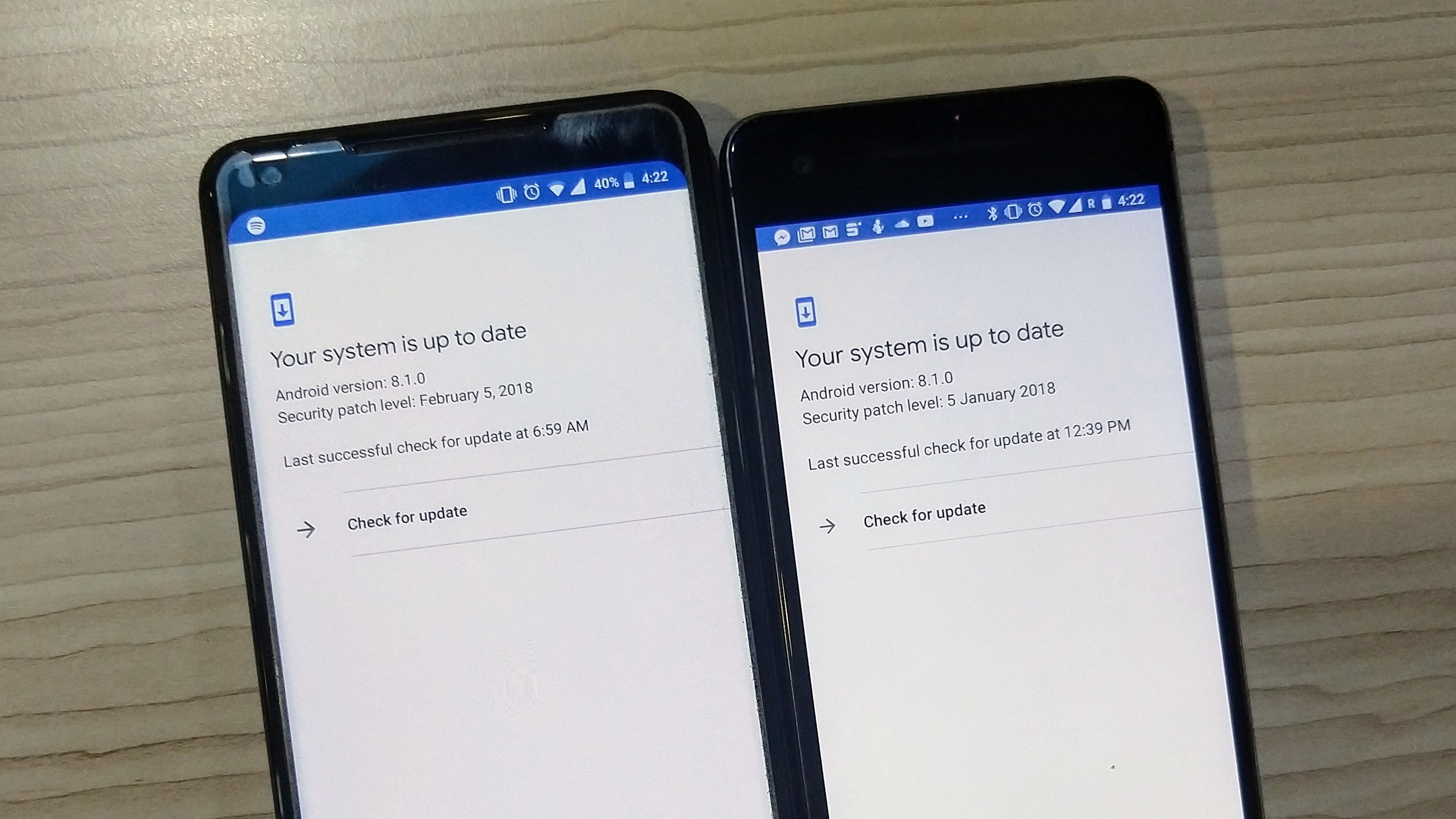

The camera on the Google Pixel 2 owes much of its quality to a key ingredient called the Visual Core. The Visual Core was announced in October 2017, but it was previously restricted to developers in Android 8.1 Oreo. With its February 2018 security patch, Google rolled out Visual Core support for third-party apps like Instagram, WhatsApp and Snapchat.

The Visual Core is a custom physical chip (Image Processing Unit) with eight cores dedicated to HDR+ image processing, which uses machine learning to enhance your photos without depending on the SoC.

Until now, the camera didn’t fully use its computational photography skills in these apps because the phones relied on the image signal processor (ISP) built into the Snapdragon 835 for image processing.

But that’s no longer the case now that Google has rolled out HDR+ support for third-party apps, thanks to the combination of the Neural Networks API (for machine learning) in Android 8.1 and the Camera2 API added in Android 5.0 Lollipop.

We compared samples from a Pixel 2 XL running the Visual Core update against one without it, and found something really interesting.

Instagram tells a different story

We started with Instagram.

Google has claimed that "the co-processor takes the detail and balance of contrast to a whole new level", which we found to be true to an extent.


The picture below was taken indoors in artificial light, and HDR+ did a good job of bringing out more detail. The exposure also looks more even, but when we compared colours, the image shot on the Pixel 2 without HDR+ was closer to the source.

In the picture above, the Instagram camera has tried to balance the exposure, but the image looks washed out compared to the one without HDR+. Even the colours were captured better on the Pixel 2 with Visual Core disabled.

The last picture sums up the whole story. The details are undoubtedly better with HDR+ enabled, but the image loses a little colour as HDR+ tones down the excess light from the source.

The Pixel 2 with no HDR+ produces punchier colours but the pictures look overexposed and lack detail.

WhatsApp camera is the differentiator

In the very first picture we clicked using the WhatsApp camera, the difference was clear. The picture using HDR+ shows better dynamic range, colours and exposure. The colour is close to the source and it also judges the scene better.

For example, check the window on the right side of the picture above. The one using Visual Core has handled the light pretty well. Also, the picture looks less shadowed, which automatically enriches the highlights of the photo.

The picture in natural light was shot through a glass window. Both pictures have good detail, but the one using HDR+ has a much better dynamic range, despite losing colour vibrancy.

While the colours of the trees and buildings seem better in the picture with HDR+ disabled, the colour of the sky says it all. HDR+ doesn't just enhance the dynamic range, but it has also toned down the extra amount of warmth as seen in the picture without HDR+.

The earlier pictures tell us enough about dynamic range and exposure control. The last picture here shows how HDR+ eliminates chroma noise from the picture (look at the background).

From the skin colour to the details of the hair, there's a very obvious difference visible here.

Verdict

There was never any doubt that the Visual Core was doing its job. The question was how well it was doing it.

The enhancement is pretty evident in the pictures we've clicked using WhatsApp. But with Instagram, things were a little confusing.

Since the update is still quite fresh, we might see some improvement in future updates from app makers like Instagram, which could make their in-app cameras better suited to HDR+.

We must note that the Pixel Visual Core works with every app that has camera integration, but only if the app targets API level 26 (Android 8.0 Oreo).

Sudhanshu Singh has been working in tech journalism as a reporter, writer, editor, and reviewer for over 5 years. He has reviewed hundreds of products ranging across categories and has also written opinions, guides, feature articles, news, and analysis. Ditching the norm of armchair journalism in tech media, Sudhanshu dug deep into how emerging products and services affect actual users, and what marks they leave on our cultural landscape.

Along with writing and editing, his areas of expertise include content strategy, daily operations, and product and team management.
