Facebook is learning when to use Safety Check for disasters - and it's not easy

Deciding when to flip the switch

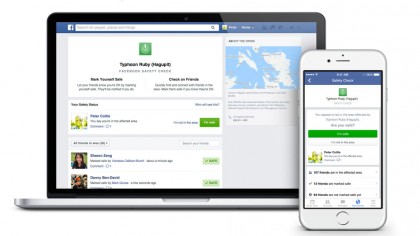

The Orlando shootings of June 2016 marked the very first time Facebook decided to turn on its Safety Check feature for users in the United States. But deciding when to activate it is never easy for the company, and Facebook is even experimenting with a way to turn the feature on automatically.

Safety Check lets people near an incident tell their loved ones that they're safe and sound. Although it has been used 28 times around the world for natural disasters and acts of terror, Orlando was the first time Facebook saw fit to turn on the feature in the US.

Katherine Woo, Product Lead of the Social Good project at Facebook, explained: "By making the decision to go beyond natural disasters, we knew that unfortunately there were so many more human-related intentional or accidental events.


"It used to be that we had to wake up an engineer in the middle of the night to break the code and fix the tool. Now we have a much easier tool for people who are trained to do it."

Safety Check debuted in 2014, but Facebook only began using the feature for human-created disasters, both intentional and accidental, after the 2015 Paris terrorist attacks.

Good intentions

Although the Paris attacks marked the first time Facebook activated the feature for a human-created disaster, some criticised the company for not doing the same a day earlier, after a double suicide bombing in Beirut.

Facebook CEO Mark Zuckerberg wrote on his profile at the time: "Until yesterday, our policy was only to activate Safety Check for natural disasters. We just changed this and now plan to activate Safety Check for more human disasters going forward as well."


Facebook followed through, activating the feature again after the March 2016 Brussels attacks and the Orlando shooting in June. But how does Facebook decide whether a disaster is significant enough to warrant Safety Check, while avoiding further controversy?

When deciding whether to activate Safety Check, Facebook weighs three key factors. The first is scope: simply, how many people could be affected by the event.

The second is scale, which is a little more complicated. As Woo explains: "Is it threatening to health and/or life? Is it threatening to the infrastructure of the region? Additionally, could it destabilize to economic stability or even political stability in that area?"

Finally, there's duration: how long does the event last, and will it have a lasting effect on the region? Timing a Safety Check is more complicated than you might expect. Does a person really know they are safe at the moment they press the button to tell their friends they're okay?

"Say there was a hurricane passing through an area," Woo said. "We want to make sure we get the timing right. We want to make sure the hurricane has passed through the area such that if you mark yourself as safe, that safety is stable."

Automated future

Facebook is succeeding in covering more disasters around the world. Across 2014 and 2015, it activated the feature only 11 times, but less than six months into 2016, it has already used Safety Check 18 times.

In the future, Facebook may stop relying on these criteria to decide when Safety Check is turned on.

"We really are doing our best to expand and touch more people. We've started to test a system where we can use community's signals to let us know when this will be relevant before an event gets big enough," Woo said.

"What we are doing is letting the community tell us when it's most relevant for them to mark themselves safe and which of their friends it would be most relevant to ask as well."

Safety Check may one day be triggered automatically by a Facebook algorithm that detects a run of posts on the same topic coming from a particular area.

But testing only began on June 2, so for now it's likely that Facebook employees will continue to be the ones pressing the button, guided by scope, scale and duration.

Safety Check is a useful feature when you're near an incident, but it's the one thing you never want to see appear in your News Feed.

James is the Editor-in-Chief at Android Police. Previously, he was Senior Phones Editor for TechRadar, and he has covered smartphones and the mobile space for the best part of a decade bringing you news on all the big announcements from top manufacturers making mobile phones and other portable gadgets. James is often testing out and reviewing the latest and greatest mobile phones, smartwatches, tablets, virtual reality headsets, fitness trackers and more. He once fell over.
