Don't panic: Google is building a 'kill switch' for dangerous AI

Humans keep the upper hand for now

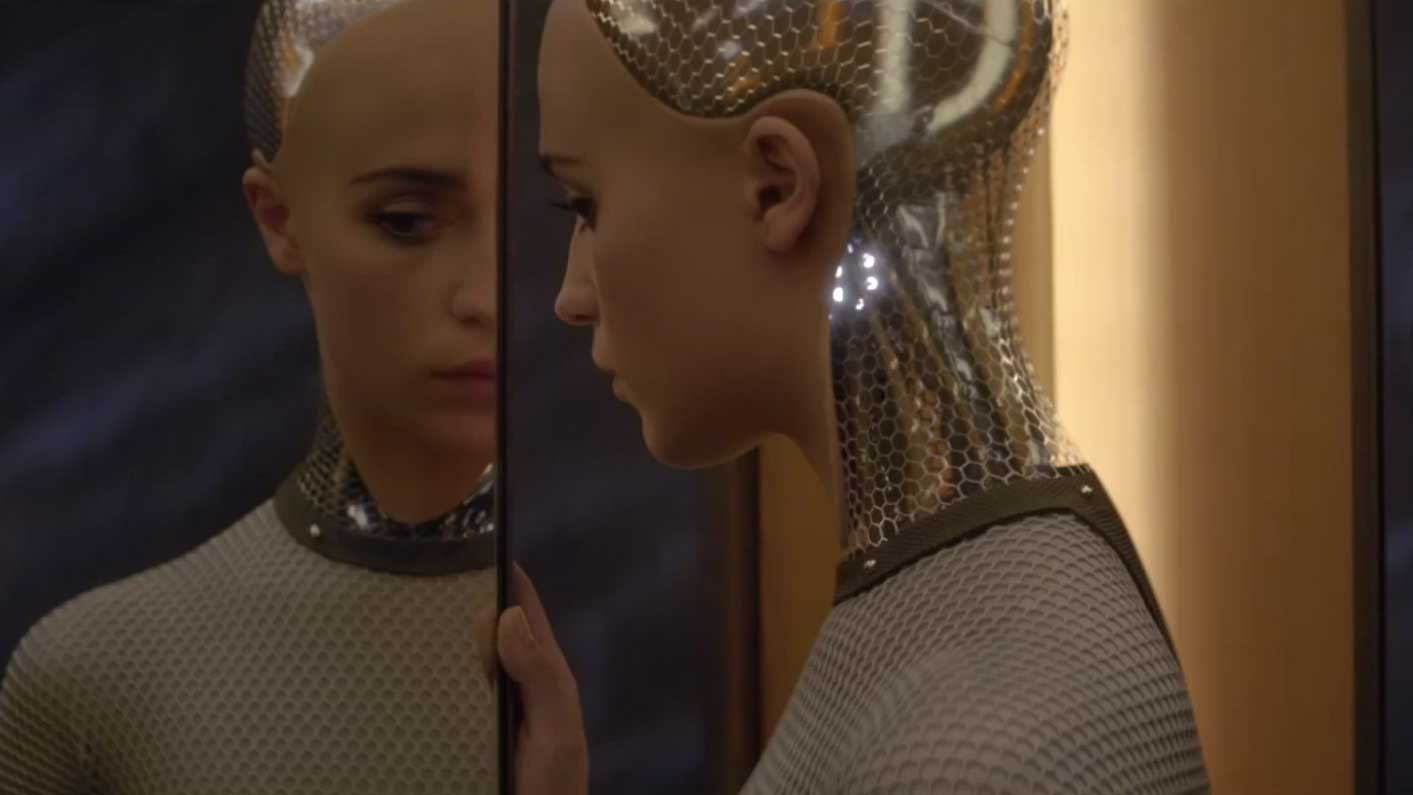

Ever since the creation of artificial intelligence, we've worried about what the consequences would be if AI suddenly decided it knew better than we did and started making decisions of its own. This hypothetical scenario hardly ever ends well.

It's comforting, then, to know that Google is working on an AI 'kill switch' that allows human operators to turn off superintelligent systems no matter how big their egos get. It's called "safe interruptibility" and it's being developed at DeepMind, the Google-owned lab whose AlphaGo system recently proved its prowess at Go.

Researchers at DeepMind have published a paper on the topic and set out a basic framework for a kill switch (or "big red button") that will wind down whatever robot army is currently marching on the major capitals of the world.

I'll open the pod bay doors myself, HAL

"Now and then it may be necessary for a human operator to press the big red button to prevent the agent from continuing a harmful sequence of actions - harmful either for the agent or for the environment - and lead the agent into a safer situation," explain Laurent Orseau and Stuart Armstrong, the paper's authors.

Not only should AI systems be prevented from disabling the big red button, they should be prevented from wanting to disable it, the researchers say. They also admit that scheduled interruptions, which machines can anticipate, may be more difficult to impose.

At the heart of the issue is the idea of rewards: the goals that the software has been programmed to aim for. As AI gets cleverer, it may develop its own rewards that we haven't anticipated (it starts making its own judgements, in other words). Don't panic though, because the brightest minds at Google are on the case.

Via Engadget

Dave is a freelance tech journalist who has been writing about gadgets, apps and the web for more than two decades. He is based in Stockport, England, and on TechRadar you'll find him covering news, features and reviews, particularly for phones, tablets and wearables. He also works to ensure our breaking news coverage is the best in the business over weekends. Dave has bylines at Gizmodo, T3, PopSci and a few other places besides, and spent many years editing the likes of PC Explorer and The Hardware Handbook.