Move aside CPU, today is the day the GPU takes over your kingdom

GTC shows that AI is more than just a buzzword, and Nvidia is its new champion

It is often easy to forget that GPU stands for Graphics Processing Unit, but in yesterday’s busy GTC 2023 (GPU Technology Conference) sessions, Nvidia announced no fewer than four new processors (the company's own word) as it looks to cement its position as the AI hardware champion with barely any competition in sight.

The extraordinary success of ChatGPT has helped shape the narrative, boosting demand for bigger, better and more powerful inference computing platforms.

“The number of applications for generative AI is infinite, limited only by human imagination. Arming developers with the most powerful and flexible inference computing platform will accelerate the creation of new services that will improve our lives in ways not yet imaginable,” said Jensen Huang, founder and CEO of Nvidia.

Because Nvidia has a much smaller set of customers to service, it can deliver fine-tuned products for specific tasks. The new L4 for AI video delivers 120x more video performance than CPUs, while the L40 for image generation is 7x better at Stable Diffusion inference than the previous generation.

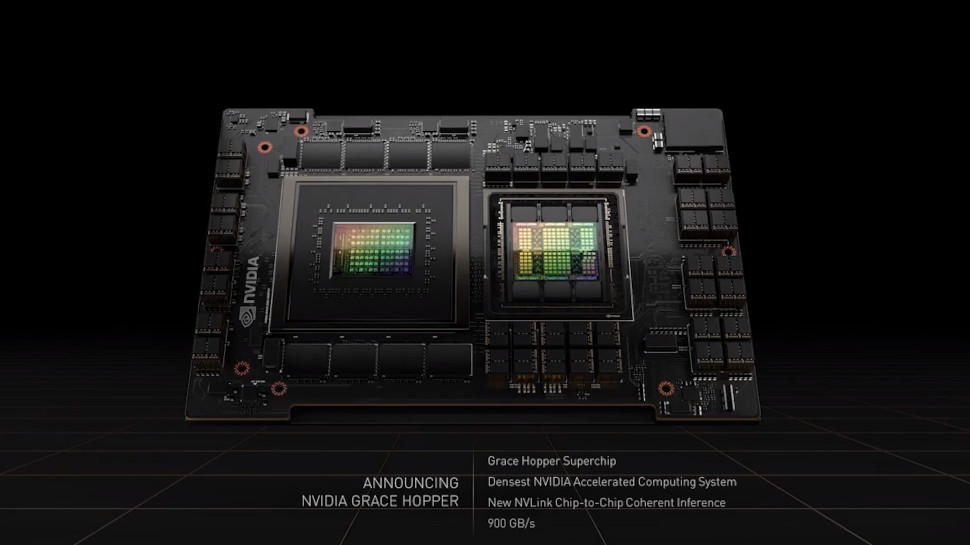

The H100 NVL has been built to tackle massive LLM (Large Language Model) deployments with a whopping 94GB of memory, helping it deliver 12x faster inference performance on the already-obsolete GPT-3 compared to the previous A100 generation. Last on the list is the Nvidia Grace Hopper superchip for recommendation models, which brings Nvidia’s Arm-based CPU and its GPU closer together and is expected later this year.

What about Intel and AMD?

For 40 years, the CPU (Central Processing Unit) has been at the core of computing, but we’re witnessing, live, a leadership coup. Nvidia single-handedly tilted the balance of power; Intel has been the biggest loser, with its share price halved since last year, while AMD continues to play second fiddle in the land of GPUs (less so when it comes to CPUs).

The CPU was always in danger of being supplanted due to its generic nature; it is a jack of all trades after all. The GPU (and other more exotic processing units) leveraged its ability to bring hardware and software closer (through Nvidia’s CUDA API, for example) to excel in specialized workloads.


Nvidia’s long-lasting impact is illustrated by a new service called cuLitho (short for computational lithography), on which it collaborated with ASML, TSMC and Synopsys to help design and manufacture the next generation of chips, regardless of whether it's a CPU, GPU, NAND or any other silicon-based product. Here we have GPUs accelerating the development of the next generation of GPUs, with Nvidia predicting a performance improvement of 40x compared to CPUs.

With a market capitalization of almost $640 billion, Nvidia is worth more than Qualcomm ($137bn), Intel ($117bn), AMD ($153bn) and Arm (est. $50bn) put together. With Nvidia having seemingly built an insurmountable lead in AI hardware, all bets are off as to who will be second best.

Désiré has been musing and writing about technology during a career spanning four decades. He dabbled in website builders and web hosting when DHTML and frames were in vogue and started writing about the impact of technology on society just before the start of the Y2K hysteria at the turn of the last millennium.
