There are two schools of thought as to why you can, or would even want to, overclock most CPUs and GPUs.

One of them takes the peace, love and understanding route, namely that the manufacturing process is never 100 per cent reliable, so not every chip that rolls off the same production line is born equal. Those with the most lustrous coats and shiniest eyes (bred on Pedigree Chum, presumably) are ready to be high-end components, but those with a bit of a squint and a runny nose may have a funny turn if they exert themselves too much.

Hence, some chips are slapped with a lower official clockspeed and sold for fewer groats than their beefier brethren. The potential for their intended glory remains, however. Overclocking techniques can unlock at least some of that potential, albeit at the risk of frying the chip completely.

The tinfoil hat/Angry Internet Men theory is based on the same concept but chucks in a pint of paranoia. In this scenario, every same-series processor is born equal, but The Man artificially neuters most of them and slaps different badges on what are fundamentally the same chips. Overclocking, then, is simply a way of taking back what's rightfully yours.

The truth about overclocking

The truth likely lies somewhere between the two. Mass production certainly makes more financial sense than dozens of separate lines, and it's true that a low-end CPU or GPU can be made to punch far above its weight, but its stability isn't as guaranteed as that of a chip that's officially rated to run at a higher speed. No manufacturer wants to deal with a steady trickle of returned parts, after all. But it does mean home overclocking is almost always productive – and seemingly more so with every new hardware generation.

It's also increasingly easy. The earliest overclocking, on the 4 to 10MHz 8088-based CPUs of 1983, involved desoldering a clock crystal from the mobo and replacing it with a third-party one, with only partially successful results. Ouch. Still, the precedent was set: a dedicated bloke-at-home could exceed his chip's official spec. IBM, then very much the top dog of PCland, wasn't entirely happy about this, so follow-up hardware included hard-wired overclock blocks. More soldering, this time of a BIOS chip, managed to get around this.

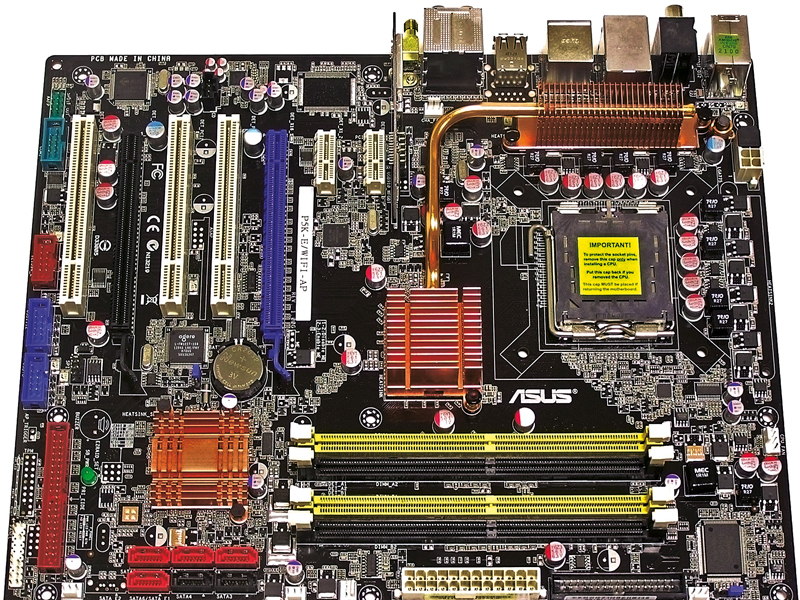

By 1986 IBM's stranglehold had been broken, resulting in a raft of 'clone' systems – and a wealth of choice. Intel's 286 and 386 processors became the de facto standard chips, and bus speed and voltage controls began to shift from physical switches and jumpers to BIOS options and settings.

The 486

It was the 486 that really changed everything, however. It's telling that this was the chip most prevalent during the era that birthed the first-person shooter as we know it: 1993's Doom very much popularised performance PCs for gaming, driving system upgrades in the same way a Half-Life 2 or Crysis does these days.

At the same time, the 486 introduced two concepts absolutely crucial to overclocking both then and now. Firstly, it popularised split product lines; no longer was it a matter of simply buying a processor, but rather of choosing which processor. The 486SX and DX offered a serious performance differential, and notably the SXs were hobbled/failed DXs, giving rise to the ongoing practice of assigning different speeds and names to what were fundamentally the same chips.

For a while too, the 25MHz SXes could be overclocked to 33MHz by adjusting a jumper on the motherboard; something less salubrious retailers took full advantage of.

Secondly, it introduced the multiplier: the processor performs several clock cycles for every one mustered by the system's front side bus. With the 486's 2x multiplier, the chip thus effectively ran at double the bus frequency. This was something overclockers would make the best of for successive processor generations – bumping up the multiplier was the simplest and often most effective way of increasing CPU speed. Nowadays (since the Pentium II, in fact), the multiplier is locked to prevent this, save for high-end chips, such as Intel's Extreme Edition series. For a while, there were complicated ways of defeating the multiplier lock: soldering on a PCB for earlier chips, third-party add-ons and the infamous practice of drawing a line onto certain AMD CPUs with a pencil. No CPU manufacturer's likely to make that mistake again.
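For the arithmetically inclined, the multiplier sums boil down to one line: core speed is roughly bus speed times multiplier. Here's a minimal Python sketch of that relationship. The function names are made up for illustration, and the 33MHz bus and 3x multiplier figures are assumed examples rather than numbers quoted above; only the 25MHz-to-33MHz jumper trick and the 2x multiplier come from the text.

```python
def core_clock_mhz(bus_mhz: float, multiplier: float) -> float:
    """Effective core clock: bus frequency times the clock multiplier."""
    return bus_mhz * multiplier


def percent_gain(old_mhz: float, new_mhz: float) -> float:
    """Relative speed increase, as a percentage of the original clock."""
    return (new_mhz - old_mhz) / old_mhz * 100.0


# Route one: raise the bus speed (the 486SX jumper trick, multiplier of 1).
sx_stock = core_clock_mhz(25.0, 1.0)
sx_overclocked = core_clock_mhz(33.0, 1.0)

# Route two: raise the multiplier on an assumed 33MHz bus (illustrative only).
doubled = core_clock_mhz(33.0, 2.0)
tripled = core_clock_mhz(33.0, 3.0)

print(f"Bus overclock: {sx_stock:.0f}MHz -> {sx_overclocked:.0f}MHz "
      f"({percent_gain(sx_stock, sx_overclocked):.0f}% faster)")
print(f"Multiplier bump: {doubled:.0f}MHz -> {tripled:.0f}MHz "
      f"({percent_gain(doubled, tripled):.0f}% faster)")
```

The sketch only covers the clock arithmetic. Whether a given chip stays stable at the higher figure is another matter entirely, which is rather the point of the whole exercise.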
