Don’t be fooled: AI-powered tech still needs to prove its intelligence

Sorting the artificial intelligence fact from fiction


If you had wandered through the halls of this year's Consumer Electronics Show (CES 2018), chances are that one of the phrases you would have heard most often is artificial intelligence (AI). AI is, it appears, this year's IoT or cloud: the hot buzzword that every company wants to associate itself with.

Welcome to the inaccurate age of AI

The term has been plastered on marketing material for hundreds of disparate gadgets. Samsung's massive 8K TVs apparently use AI to upscale lower-resolution images for the big screen. Sony has created a new version of the Aibo robot dog, which this time promises more artificial intelligence. Travelmate's robot suitcase will use AI to drive around and follow its owner wherever they go. Oh, and Kohler has invented Numi, a toilet with Amazon's Alexa voice assistant built in, though mercifully it doesn't appear to be doing any deep-learning analysis of your, umm, data.

There does appear to be something real at the heart of all this marketing copy: it's clearly an exciting time in the tech industry, as entire product categories are being invented or transformed using these sorts of smart technologies. Products like Amazon's Alexa, with its accurate voice recognition, would have been virtually unimaginable a decade earlier, at least outside the realms of science fiction. And Google's ability to pick out objects in photos would have seemed like witchcraft to companies that previously paid humans to do the tedious business of adding metadata to images.

But despite all this, it does leave me wondering: is artificial intelligence really what we should be calling this revolution? Because, well, these technologies aren't all that intelligent.

Sorting AI fact from AI fiction

It's essentially a definitional problem: for some reason, the industry is hellbent on saying AI when what it actually means is machine learning (ML). This is a much narrower term, referring to what is essentially using trial and error to build a model that can guess the answers to discrete questions very accurately.

For example, take image recognition: say you want to build a system that separates pictures of cats from pictures of dogs. All you have to do is feed an ML algorithm enough pictures of cats, telling the system they are cats, and then enough pictures of dogs, telling it they are dogs. It will then build a model of which patterns to look for, and eventually, after enough training, you should be able to feed it an unlabelled image and it will make a fairly accurate guess as to which of the two animals is in the picture.
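To make that "trial and error" idea a little more concrete, here is a minimal Python sketch. It is an illustration under loud assumptions, not how Samsung, Google or anyone else mentioned here actually does it: instead of real pixels, each "picture" is reduced to two made-up features (a hypothetical ear-pointiness score and snout-length score, both on a 0 to 1 scale), and the learner is a classic perceptron, about the simplest trainable classifier there is. The model guesses a label for each labelled example, and whenever it guesses wrong it nudges its weights towards the right answer.

```python
from typing import List, Tuple

# Hypothetical toy features: (ear_pointiness, snout_length), both on a 0-1 scale.
# These stand in for the pixel patterns a real image model would learn from.
Features = Tuple[float, float]
Model = Tuple[List[float], float]

TRAINING_DATA: List[Tuple[Features, str]] = [
    ((0.90, 0.20), "cat"),
    ((0.80, 0.30), "cat"),
    ((0.85, 0.25), "cat"),
    ((0.30, 0.80), "dog"),
    ((0.20, 0.90), "dog"),
    ((0.40, 0.70), "dog"),
]


def train_perceptron(data: List[Tuple[Features, str]],
                     epochs: int = 20, lr: float = 0.1) -> Model:
    """Learn weights by trial and error: guess, check the label, adjust on mistakes."""
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(epochs):
        for (x1, x2), label in data:
            target = 1 if label == "cat" else -1
            guess = 1 if w[0] * x1 + w[1] * x2 + b > 0 else -1
            if guess != target:
                # Wrong guess: shift the decision boundary towards the correct answer.
                w[0] += lr * target * x1
                w[1] += lr * target * x2
                b += lr * target
    return w, b


def classify(model: Model, features: Features) -> str:
    """Guess the label of an unlabelled example using the learned weights."""
    w, b = model
    x1, x2 = features
    return "cat" if w[0] * x1 + w[1] * x2 + b > 0 else "dog"


model = train_perceptron(TRAINING_DATA)
print(classify(model, (0.75, 0.35)))  # prints: cat
```

Note what the sketch does not do: it has no idea what a cat is. It has simply found a line that separates two clusters of numbers, which is exactly the narrow, question-specific skill the article is describing.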

The trouble is that, though this is very impressive, and has only been possible at scale over the last few years thanks to the collapsing cost and growing availability of processing power, it isn't exactly 'intelligence', is it?


Beyond the buzzword

Intelligence, of the sort that humans have, is very different and more broadly defined. We’re capable of a wider set of skills. A great example of this comes from an industry-wide group called the AI Index, which is attempting to measure and benchmark progress in AI. In its 2017 report, it says:

“[A] human who can read Chinese characters would likely understand Chinese speech, know something about Chinese culture and even make good recommendations at Chinese restaurants. In contrast, very different AI systems would be needed for each of these tasks.”

In other words, we’re a long way from the sort of generalised artificial intelligence that would be able to do these very different tasks. And we’re even further away from such an intelligence being able to not just carry out those tasks, but also wonder to itself why it is doing them.

Follow the money

So, given the obvious limitations of current technology, why is an entire industry obsessing over the term AI? Why is it suddenly so important? And why is every tech start-up at every major trade show touting its AI capabilities?

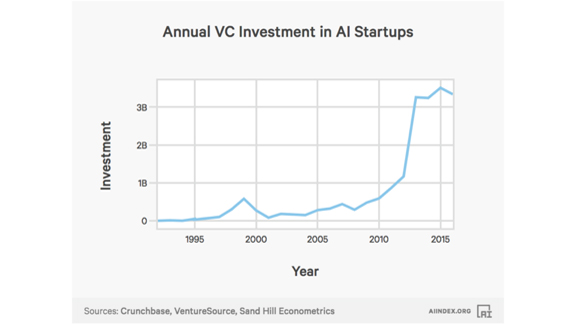

Perhaps the answer lies in this one chart (see below), which is again from the AI Index.

Ah yes, that would explain it. If you can frame your start-up as a company that is dabbling in artificial intelligence, it appears the investment cash will come flooding in. Following an enormous increase around 2013, roughly $3bn a year has been invested in AI start-ups.

But this doesn't explain why we're mislabelling it: why we're referring to artificial intelligence rather than machine learning. My guess is simply that AI sounds a whole lot sexier. Think about it: if your competitors are conjuring up images of Tony Stark's Jarvis or Data from Star Trek, you don't want to be caught talking about boring old, harder-to-market machine learning instead.

(Machine) learning to walk before we can run

In any case, I think it's time we exercised more caution when throwing around the label artificial intelligence. We should save it for when we truly have systems approaching a more generalised, human-like form of intelligence, so that we don't end up with false or misleading expectations.

Given that this real milestone is at least several years away, in the meantime I'm going to get back to work on building a machine learning system that can figure out how to easily separate AI fact from AI fiction.