This AI robot can recognize a ball from a baby

Robots are getting smarter ... and scarier


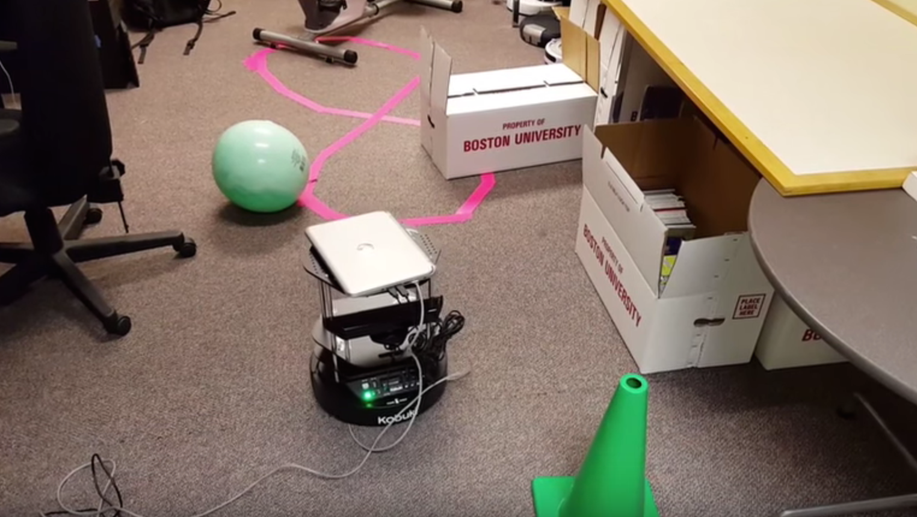

An undergraduate at Boston University has developed an AI-powered robot that can navigate without human direction and, most impressively, recognize the obstacle in front of it before steering around it.

The engineering student, Emily Fitzgerald, built the two-foot-tall robot from a stack of hors d'oeuvre trays mounted on wheels. Its smarts come from a camera and a laptop sitting on top of the trays; the laptop communicates with a desktop computer.

The system lets the robot approach an object and identify exactly what it is, whether a ball, a book, or a cone.

Deep learning

Fitzgerald explains that the robot uses the camera to capture a series of images of the object in front of it, and the laptop collects those images and sends them to the desktop computer.

The computer then runs them through a deep neural network, an artificial intelligence model loosely inspired by the way our brains solve problems, to essentially say, "Oh, let me think about it." It then finds a matching picture to use as a reference, after which it can say something along the lines of, "This is a ball."

Fitzgerald's robot is smart enough to get around on its own and name the objects it encounters, but it isn't perfect; it was only a summer project for the university student.

Still, she hopes to pursue a career in bioimaging and envisions a future where robotic surgical devices use neural networks to detect objects inside human patients. Fitzgerald didn't elaborate on what those objects might be, but we assume she means things like tumors. Robots like this one could also find uses in other fields, such as space exploration.


You can check out the rolling robot in the video below.