Google announced a suite of AI-powered upgrades to its Search, Maps and Lens services during its recent Paris showcase, with Lens set to benefit from a particularly useful new feature in the coming months.

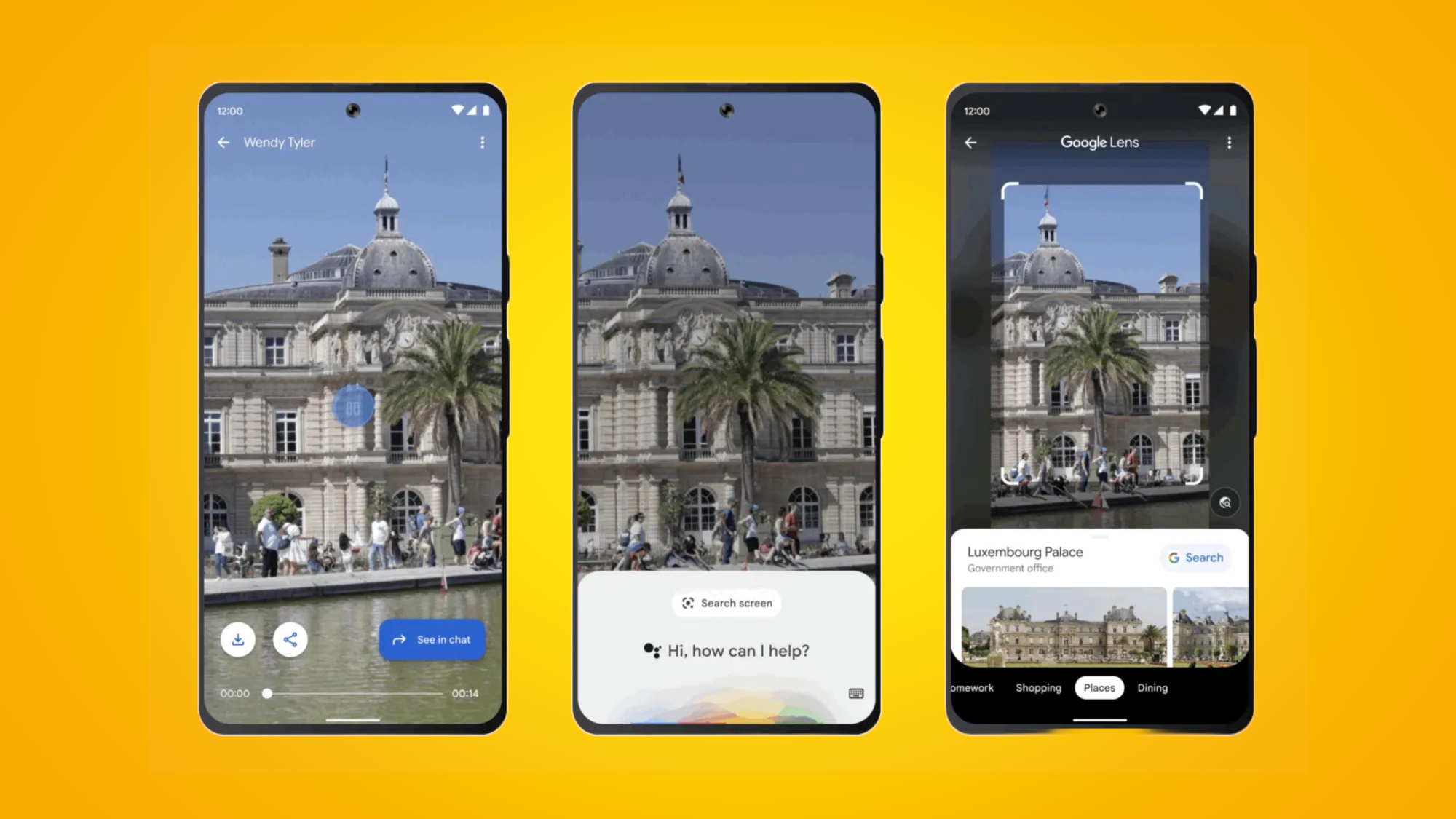

Soon, Google Lens users on Android will be able to search for what they see in photos and videos through Google Assistant alone. The integration will work across myriad websites and apps, and allow people to learn more about information contained within images – think building names, food recipes or car models – without having to navigate away from those images. As Google explained in its Paris presentation, "if you can see it, you can search it".

Confused? Check out the latest Google Lens update in action via the tweet below, which shows a user identifying Luxembourg Palace through a friend’s video of the landmark.

In the coming months, we’re introducing a ✨ major update ✨ to help you search what’s on your mobile screen. You’ll soon be able to use Lens through Assistant to search what you see in photos or videos across websites and apps on Android. #googlelivefromparis pic.twitter.com/UePB421wRY (February 8, 2023)

Google hasn’t yet offered a date for the new feature’s arrival, though the company has promised to roll out the upgrade “in the coming months” (which, for our money, likely means February or March 2023).

Significant improvements are heading to Google’s Multisearch feature, too. The ability to add a text query to Lens searches is now available globally in all supported languages and countries, and Google is also introducing the ability to find different variations (for example, shape and color) of objects captured through Lens.

As Google explained in Paris: “For example, you might be searching for ‘modern living room ideas’ and see a coffee table that you love, but you’d prefer it in another shape – say, a rectangle instead of a circle. You’ll be able to use Multisearch to add the text ‘rectangle’ to find the style you’re looking for.” See the feature in action below:

Multisearch is now live globally! Try out this new way to search with images and text at the same time. 🤯 So if you see something you like, but want it in a different style, colour or fit, just snap or upload a photo with Lens then add text to find it. 🔎 #googlelivefromparis pic.twitter.com/4yT6voiJkn (February 8, 2023)

A new era of search?

Elsewhere during Google’s recent showcase, the company announced a host of AI-powered updates for Google Search and Google Maps.

For instance, Google will soon be integrating its “experimental conversational AI service”, Bard, into Search to offer users more accurate and convenient search results. As Google explained in Paris, you’ll soon be able to ask questions like “what are the best constellations to look for when star-gazing?”, and then dig deeper into what time of year is best to see them through helpful AI suggestions.

The move follows Microsoft’s announcement of a redesigned, AI-powered Bing search engine that uses the same technology as ChatGPT.

As for Google Maps, the service’s Immersive View feature – which lets you virtually tour landmarks – is getting a significant upgrade in five major cities across the globe, while its Live View feature – which uses your phone’s camera to help you explore a city through a neat AR overlay – is set for similar expansion.

We’ll be testing all of the above features for ourselves in the coming months, but for a whistle-stop rundown of everything else announced at Google’s Paris showcase, head over to our Google 'Live from Paris' liveblog.

Axel is TechRadar's Phones Editor, reporting on everything from the latest Apple developments to the newest AI breakthroughs as part of the site's Mobile Computing vertical. Having previously written for publications including Esquire and FourFourTwo, Axel is well-versed in the applications of technology beyond the desktop, and his coverage extends from general reporting and analysis to in-depth interviews and opinion.

Axel studied for a degree in English Literature at the University of Warwick before joining TechRadar in 2020, where he earned an NCTJ qualification as part of the company’s inaugural digital training scheme.
