Google 'Live from Paris' event recap: AI updates to Maps, Search, Lens and more

Google has revealed some new AI-powered treats

Microsoft struck first yesterday with its new ChatGPT-flavored version of Bing – and now we've just seen Google counter-punch with its AI-themed 'Live from Paris' event.

Google's event wasn't quite as grand as Microsoft's, partly because its own AI chatbot – called Bard – was announced two days ago. Instead, Google chose to showcase the many ways it's baking AI tech into its apps and services, including Maps, Lens and Translate.

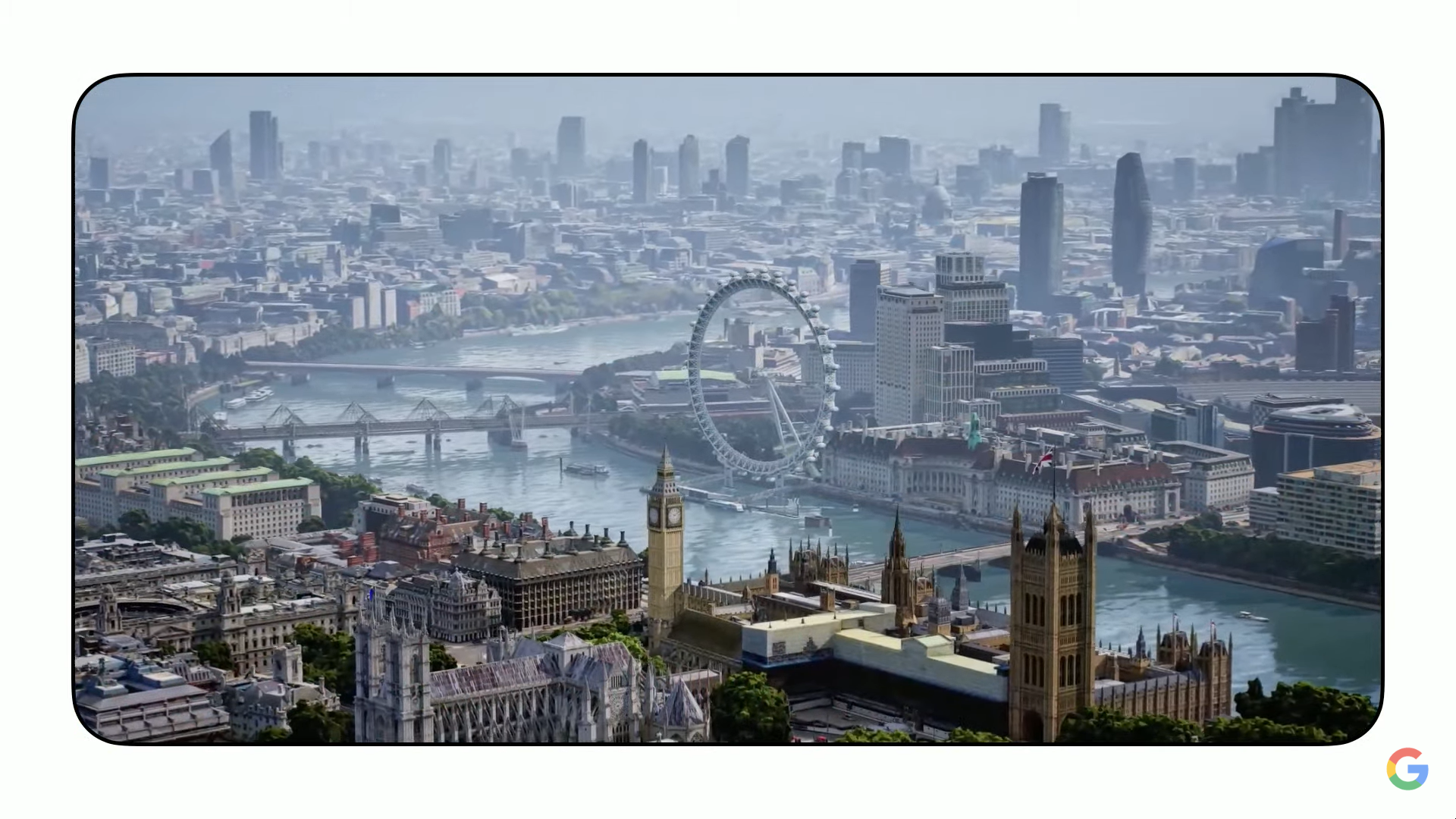

While we've seen many of the features before at events like Google I/O, a few are now actually being rolled out. For example, Google Maps' Immersive View – which gives you Superman-style flyovers of tourist hotspots, complete with simulated weather for different times of the day – is launching in more cities (including London, New York and LA) today and others in the "coming months".

Elsewhere, we also saw a preview of a new Google Lens feature that'll let Android users call on its AI powers in any context with a long-press of their power button. Electric car owners will also be pleased to see some new EV-specific features coming to Google Maps, including suggested routes with the best charging stops.

If you missed the event, you can re-watch the video below, scan through our commentary for the main announcements, or check out our quick recap. The Microsoft Bing vs Google Bard scrap just got very interesting.

What was announced at Google's 'Live from Paris' event?

- Google's "experimental" Bard chatbot is heading to Search within weeks

- Google Maps 'Immersive View' is being rolled out to five major cities

- Google Lens expands on Android phones with new home button feature

- Google Maps Indoor Live View comes to 1,000 new destinations worldwide

- Electric car owners get EV-focused routes and features on Google Maps

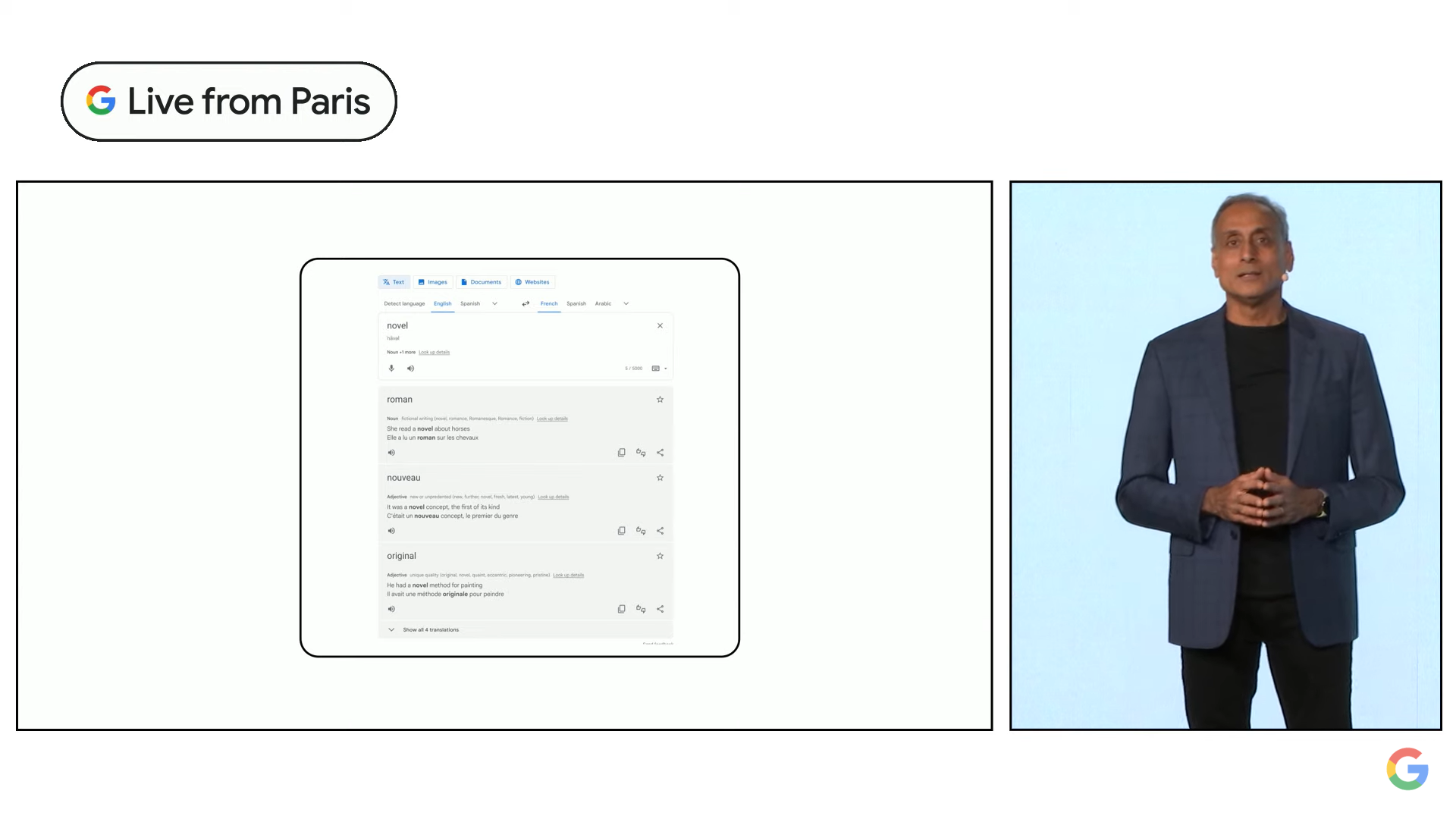

- The Google Translate app is getting a fresh redesign with AR features soon

Mark is TechRadar's Senior news editor, and formerly its Cameras editor. He's been reporting on tech since the pre-iPhone days, when the Sony Ericsson T630 was considered an exciting flagship. He's based in London and has tested phones since his very first published review of the original Nokia N-Gage.

Good morning and welcome to our Google 'Live from Paris' liveblog.

It's another exciting day in AI-land, as Google prepares to counter-punch Microsoft's big announcements for Bing and Edge yesterday. This week's tussle reminds me of the big heavyweight tech battles of the early 2010s, when Microsoft and Google traded petty attacks over mobile and desktop software.

But this is a new era and the squared circle is now AI and machine learning. Microsoft seems to think it can steal a march on Google Search – and against all odds, it might actually do it. I'll reserve judgement until we see what Google announces today.

So what exactly are we expecting to see from Google today? Clearly, Search is going to be the big theme, as we start to get more detail on exactly how Google's conversational AI is going to be baked into Search.

Any big change to Search would clearly be a huge deal, as Google has barely changed the external UI of the minimalist bar most of us type into without thinking.

But we're more likely to see baby steps today – Google has called Bard an "experimental" feature and it's only based on a "lightweight model version" of LaMDA AI tech (which is short for Language Model for Dialogue Applications, if you were wondering).

Like Microsoft's new Bing, any integration of Bard in search will likely be presented as an optional extra rather than a replacement for the classic search bar – but even that would be huge news for a search engine that has 84% market share (for now, at least).

4/ As people turn to Google for deeper insights and understanding, AI can help us get to the heart of what they're looking for. We're starting with AI-powered features in Search that distill complex info into easy-to-digest formats so you can see the big picture then explore more pic.twitter.com/BxSsoTZsrp – February 6, 2023

Just 15 minutes to go until Google's 'Live from Paris' event kicks off. One of the big questions for me is how interactive Google's conversational AI is going to be – in the new version of Microsoft Bing, the chat results gradually expand into more detail.

That's a big change from traditional search, because it means your first result can be the start of a longer conversation. Also, will Google be citing its sources in the same way as the new Bing? The early screenshots were unclear on this, but we'll find out more very soon.

Right then, just two minutes to go until Google's livestream kicks off. It's unclear why it's chosen Paris as the location for the event – but perhaps it has something to do with those hinted Maps features...

Senior vice president Prabhakar Raghavan is on stage talking about the "next frontier of our information products and how AI is powering that future".

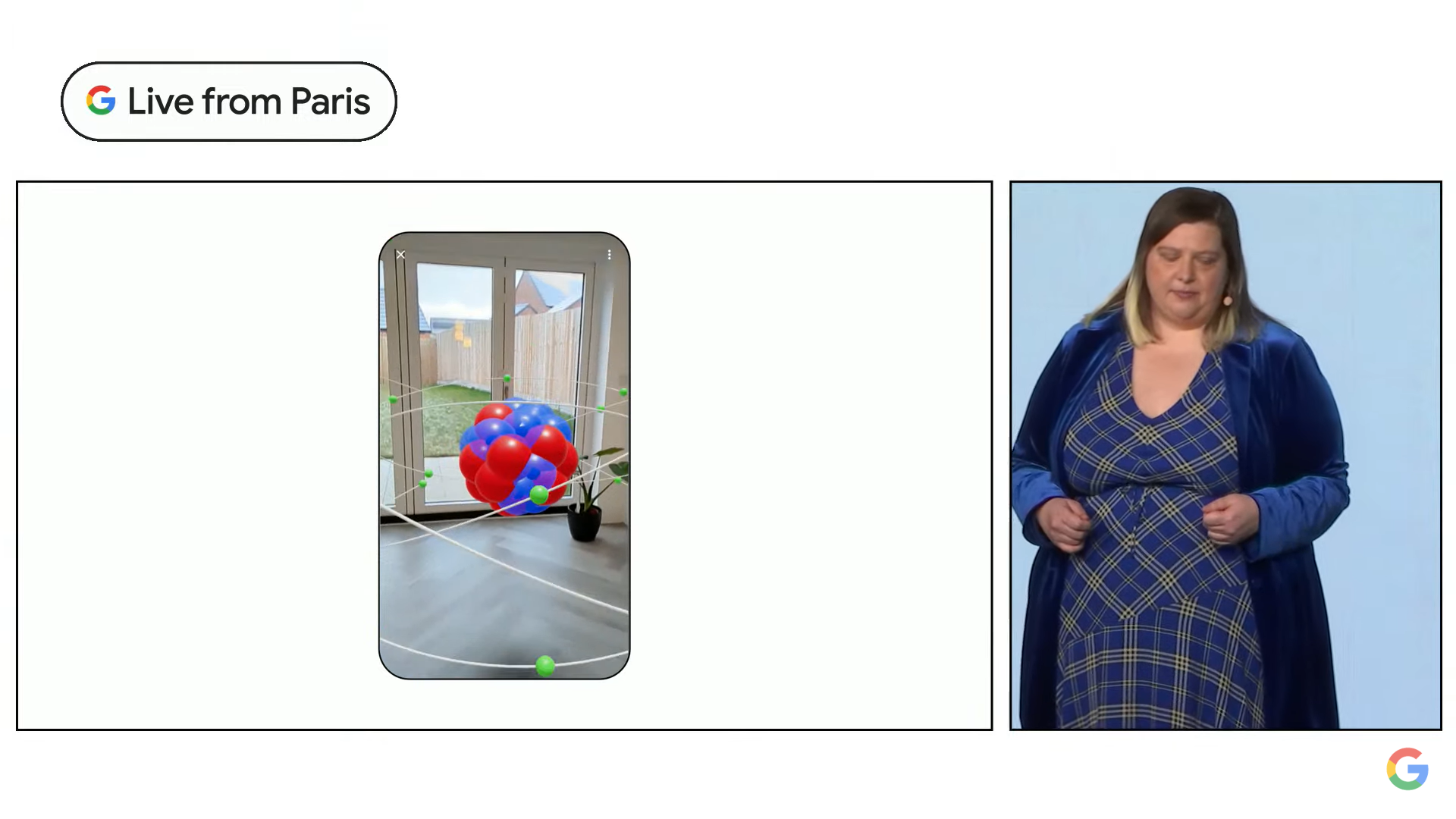

He points to Google Lens going "beyond the traditional notion of search" to help you shop, and to place a virtual armchair you're looking to buy in your living room. But, as he says, "search is never solved", and it remains Google's moonshot product.

The message right now is that Google has been using AI technologies for a while.

One billion people use Google Translate. Google says many Ukrainians seeking refuge have used it to help them navigate new environments.

A new 'Zero-shot Machine Translation' technique learns to translate into another language without needing traditional training data (example translations) for that language. Google has added 24 new languages to Translate using this method.

Google Lens has also reached a major milestone – people now use Lens more than 10 billion times per month. It's no longer a novelty.

Google Lens is getting a big boost. In the coming months, you’ll be able to use Lens to search your phone screen.

For example, you can long-press the power button on an Android phone to search a photo. As Google says, "if you can see it, you can search it".

Multisearch also lets you find real-world objects in different colors – for example, a shirt or chair – and is being rolled out globally for image search results.

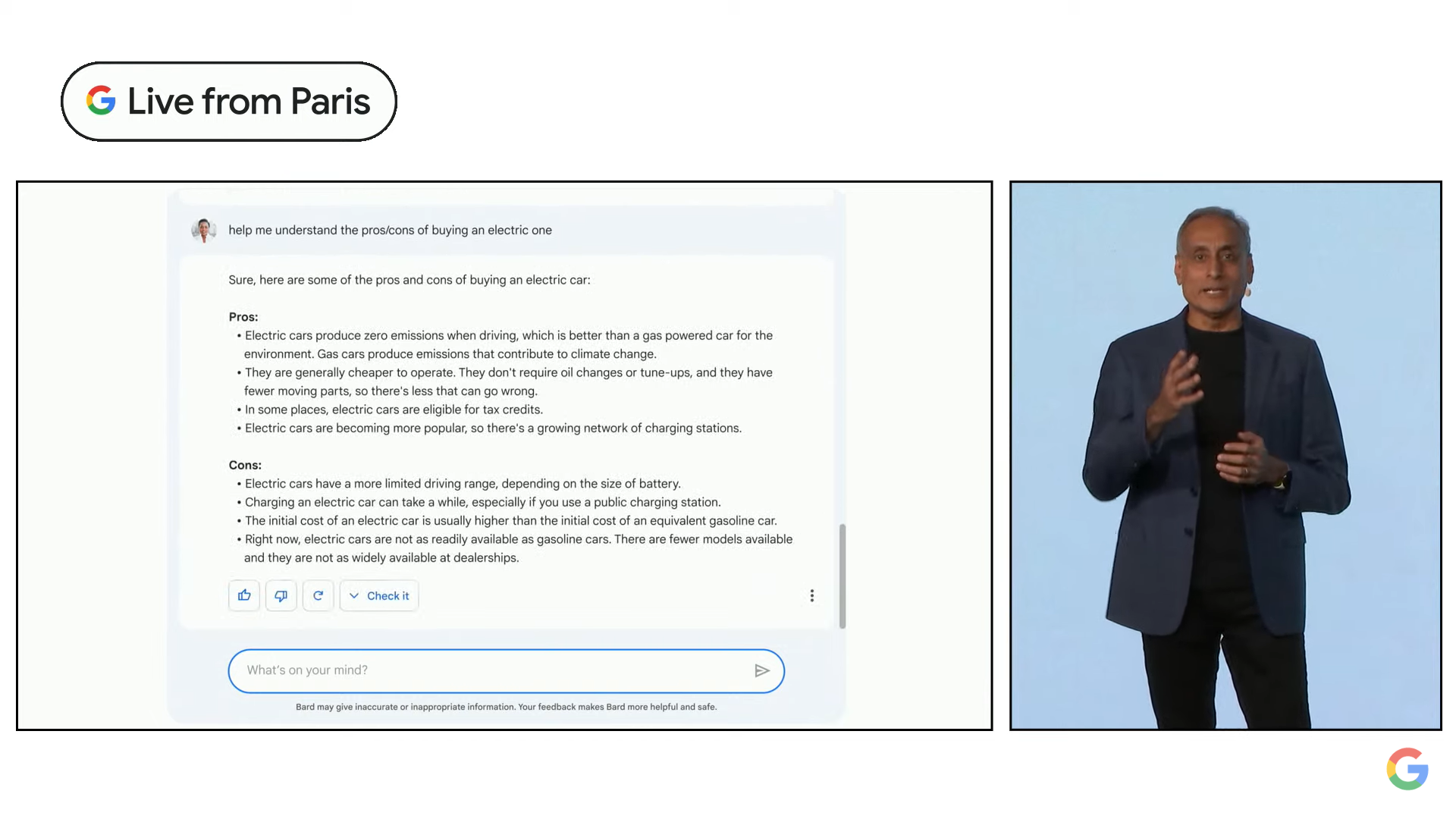

Okay, we're onto ‘large language models’, like LaMDA. It's the power behind Google's new AI chat service 'Bard', which it calls "experimental".

As Google previously announced, it's releasing a lightweight model of LaMDA to "trusted testers" this week. No news yet on when it's rolling out publicly, beyond the "coming weeks" Google mentioned earlier this week.

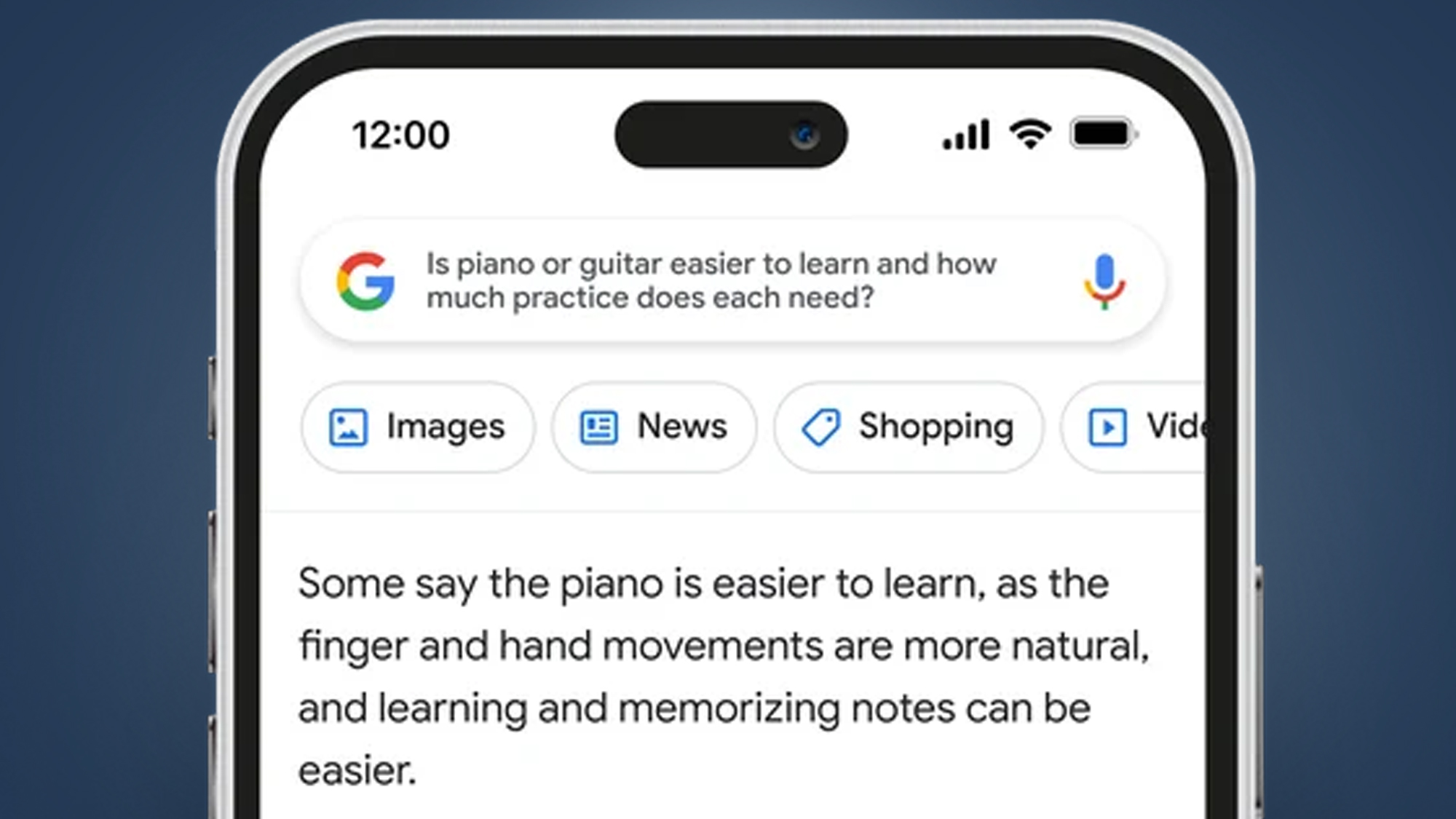

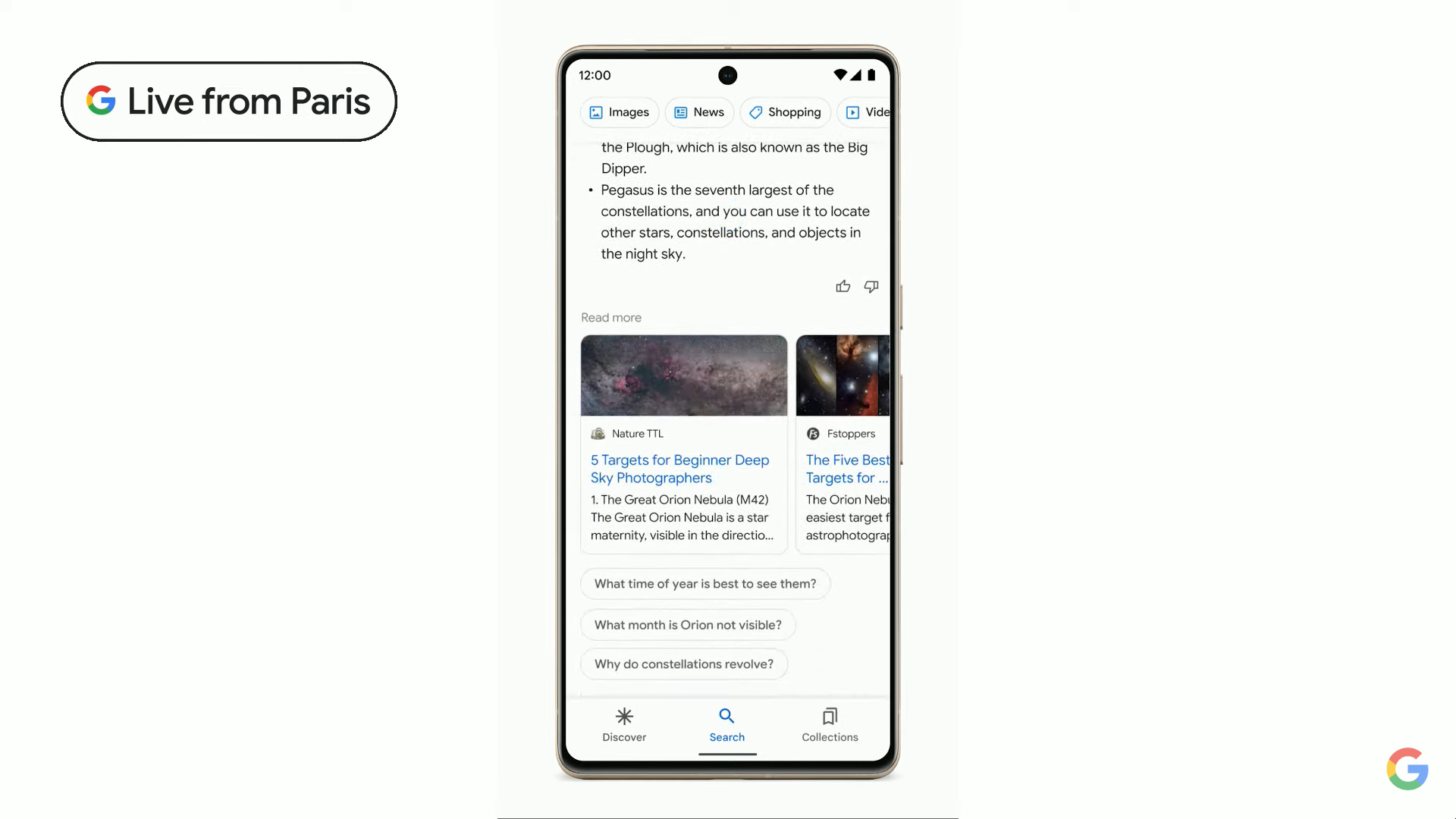

Generative AI is coming to Google Search and Google is giving more examples of how it’ll work.

For example, you'll be able to ask "what are the best constellations to look for when star-gazing", and then dig deeper into what time of year is best to see them. All very similar to Microsoft's new Bing chat.

Google is also talking about generative imagery, which can create 3D images from stills. It says we'll be able to use them to design products, or find the perfect pocket square for a new blazer. No specifics on how that'll roll out, though.

Developers will also be getting a big suite of tools and APIs to make AI-powered apps.

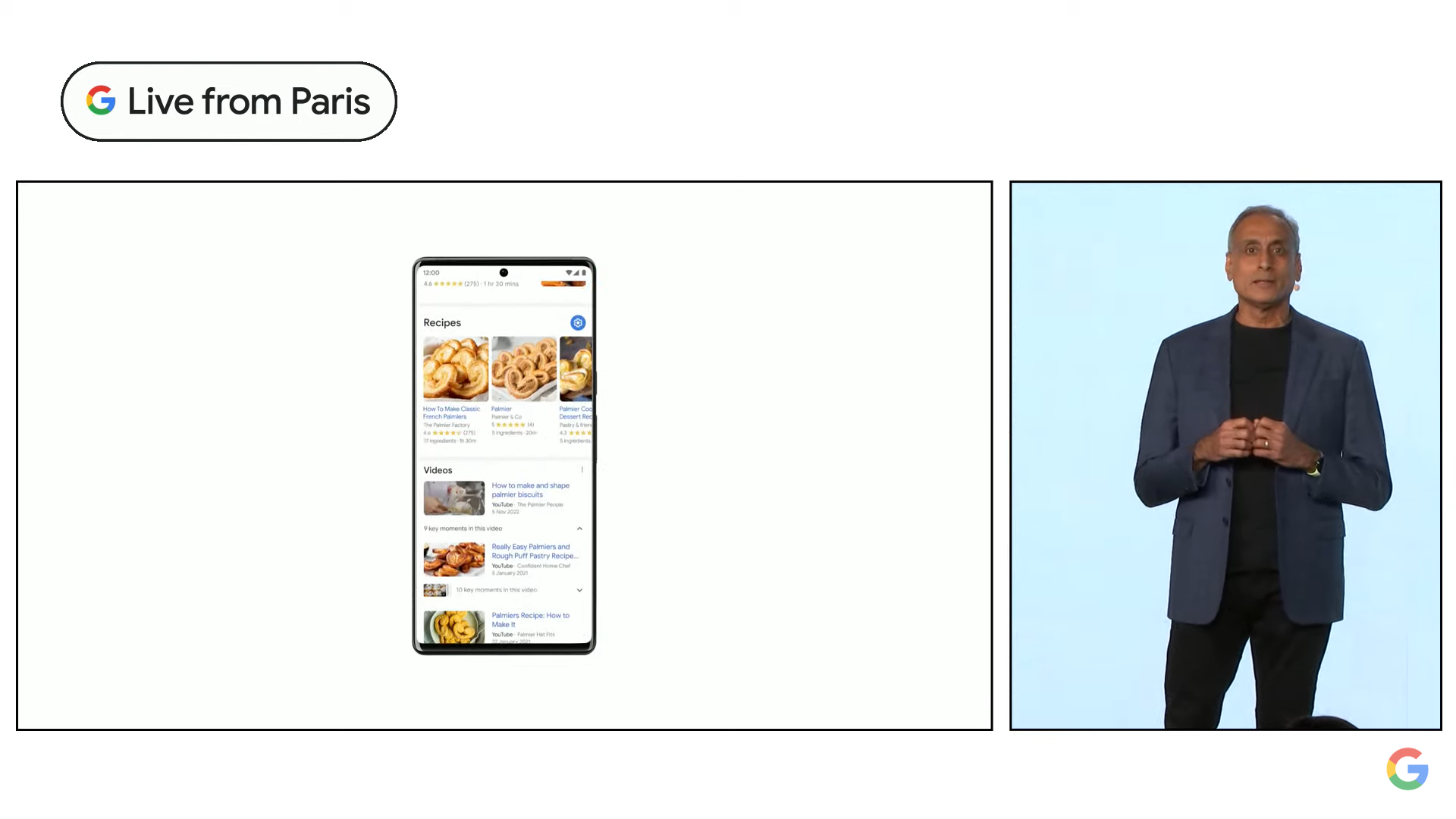

Google VP & GM Chris Phillips is now on stage talking about Google Maps. "We’re transforming Google Maps again," he says.

The very impressive 'Immersive View' is being demoed, which we've seen before. It uses AI to fuse billions of Street View images to give you Superman flyovers of big landmarks, and indoor views of restaurants.

The good news: Immersive View is finally rolling out today to several cities, including London, Los Angeles, New York and San Francisco, with other cities to follow in the next few months. Now we're looking at 'Search with Live View'...

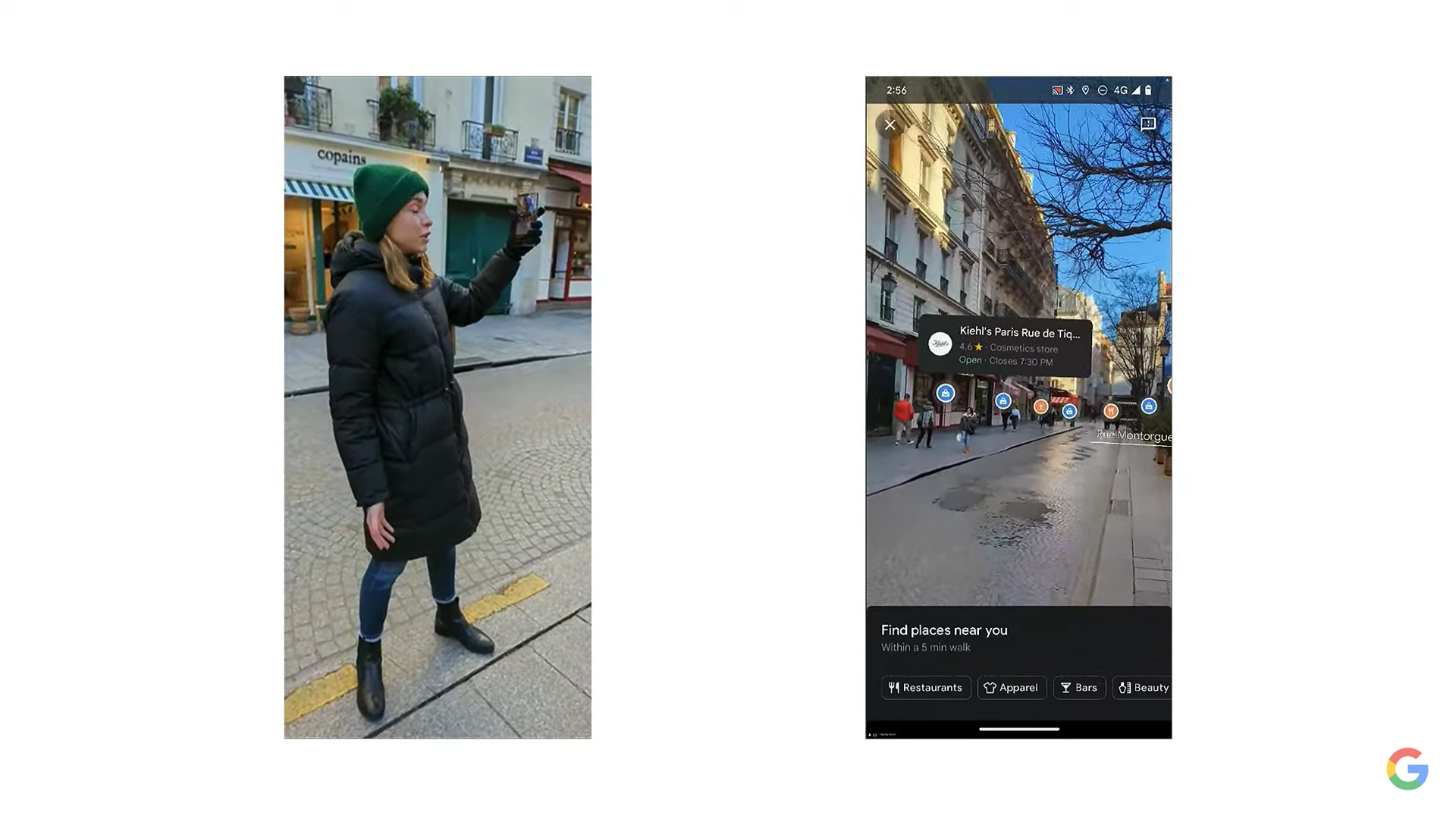

Google Maps' 'Search with Live View' combines AI with AR to help you visually find things nearby, like restaurants, ATMs and transit hubs, by looking through your phone's camera.

It's already live in five cities on Android and iOS, and "in the coming months" it'll be expanded to Barcelona, Dublin and Madrid. Now we're getting an outdoor demo – you tap the camera icon in Google Maps and it shows you real places overlaid on the camera view, including ones that are out of view.

You can see whether they're currently busy and how highly they're rated. Not a brand new feature, but definitely a useful one that's being rolled out more widely.

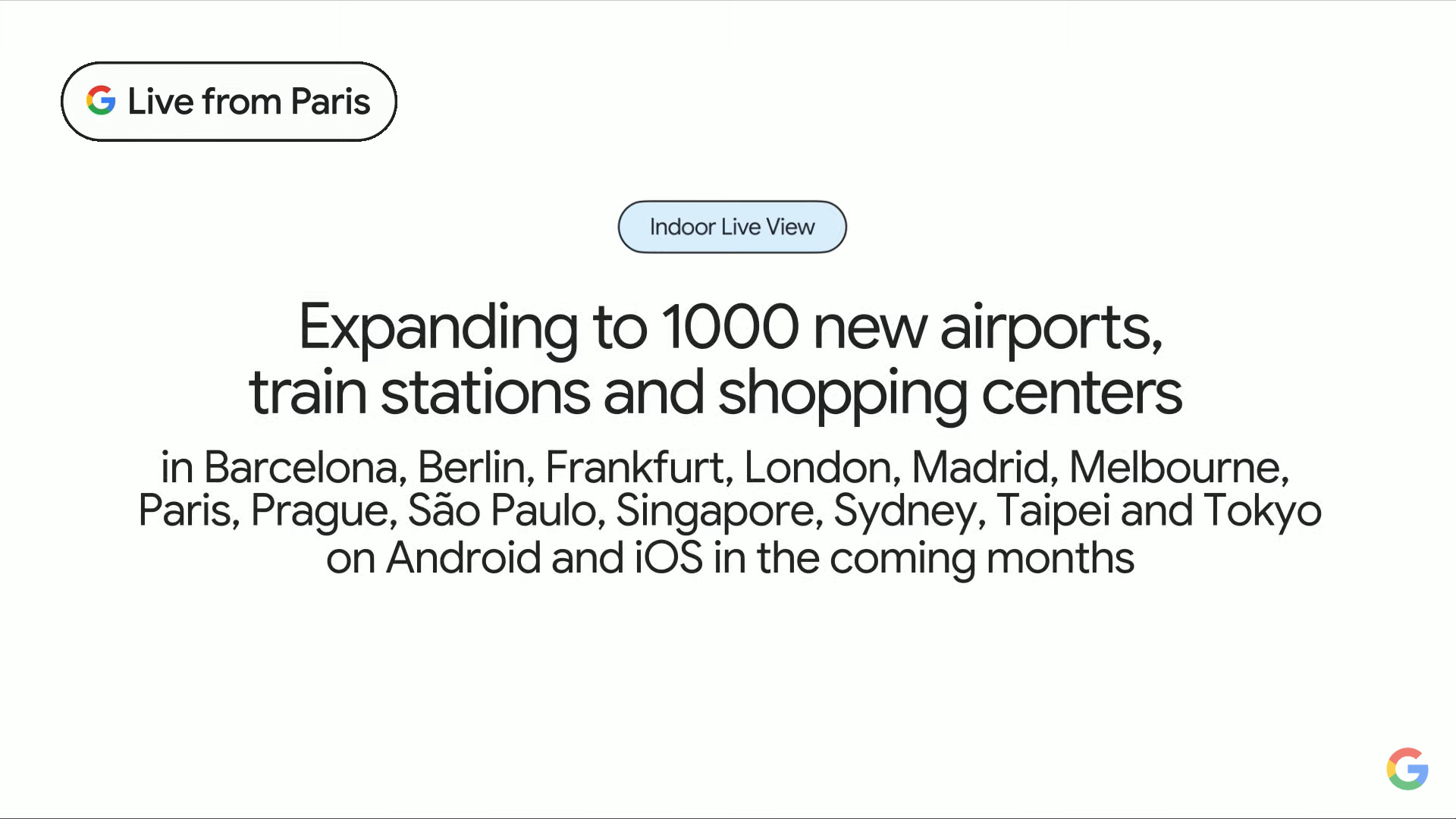

Google is now talking up the less well-known 'Indoor Live View' for airports, train stations and shopping centres. In a few select cities and locations, this uses AR arrows to show you where things like elevators and baggage claim are – pretty darn useful, if a bit limited right now.

Fortunately, Google is rolling it out more widely with its "largest expansion of Indoor Live View to date", bringing it to 1,000 new venues at airports, train stations and shopping centres in London, Tokyo and Paris "in the coming months".

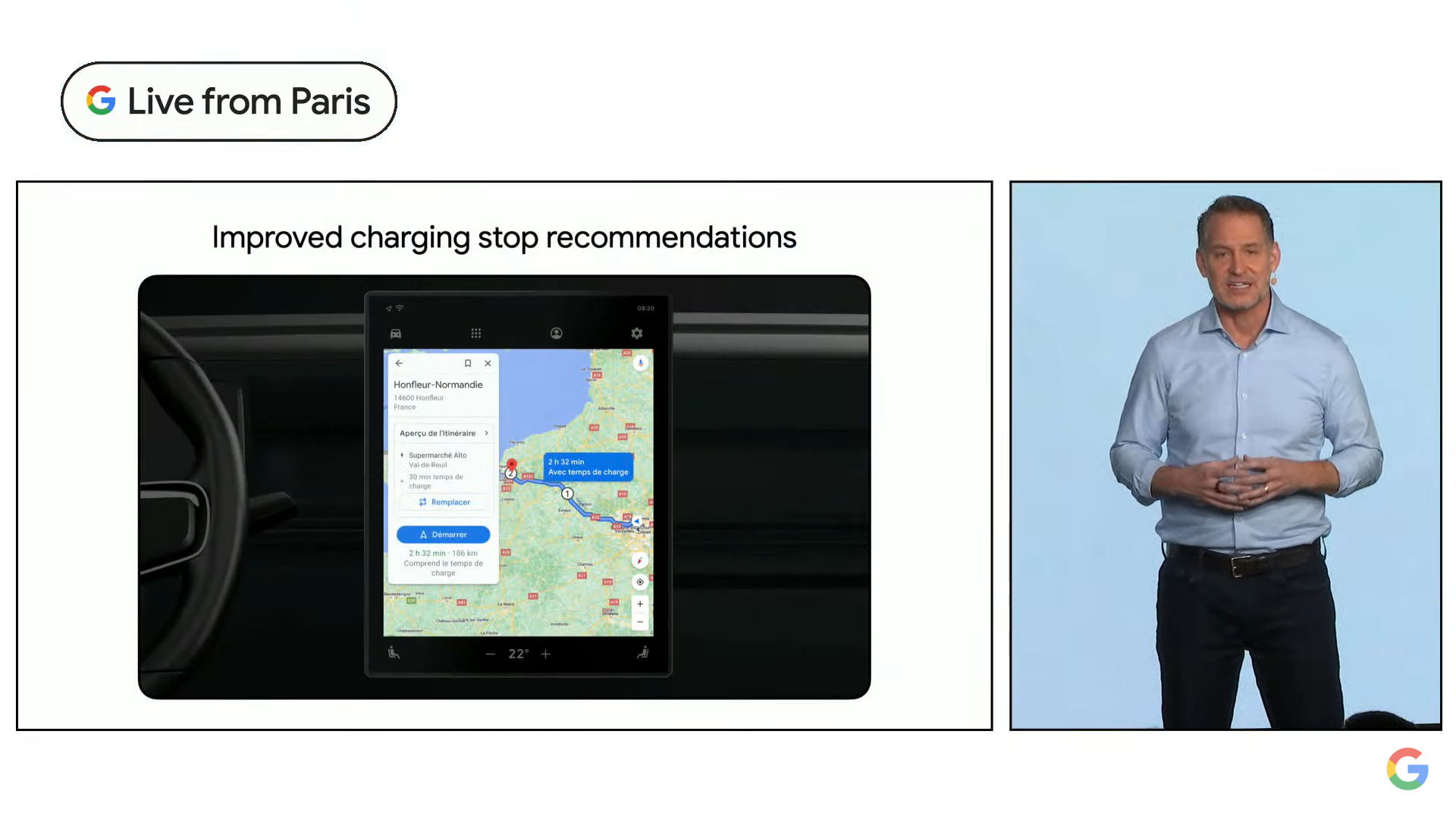

Good news for electric car owners. Google is also launching new Maps features for EV owners to make sure you have enough charge.

“To help eliminate range anxiety, we’ll use AI to suggest the best charging stop, whether you’re taking a road trip or just running errands nearby”.

It’ll factor in traffic, charge level and the energy consumption of your trip. It’ll also show ‘very fast charging’ stops for a quick boost.

Hmm, the livestream now appears to be down – maybe Microsoft's Clippy has been up to some nefarious tricks. We'll hopefully be back shortly folks.

There were a couple more minor announcements to finish off Google's presentation, along with an appearance from the Blob Opera.

Google has also announced a suite of new AI-powered Arts and Culture features. Among the new tools is the ability to search for – and study in impressive detail – famous paintings by thousands of artists.

The company says it's making a conscious effort to preserve endangered languages, too, by leveraging AI and Google Lens to intuitively translate words for common household items.

So that’s it from Google today – with a slightly abrupt pulling of the livestream plug to mark the end of the event.

Overall, it was pretty underwhelming and felt like a defensive ploy to pop Microsoft’s AI hype balloon. While there were a few mini announcements – an expansion of Indoor Live View for Google Maps, a 'search screen' feature for Google Lens, and a demo of Google Bard in Search – there certainly wasn't anything new on the scale of Microsoft's new chat function for Bing and Edge.

Google slightly undermined its own AI event by rushing to publish a Google Bard preview a couple of days ago – and we didn't really get any new information about exactly how it'll work when it rolls out to the public in the "coming weeks".

Right, we're off to see if Google Maps' Immersive View is working yet in London – thanks for tuning in, and keep an eye out for fresh updates here as we get some more official post-event info from Google.

It's the day after Google's slightly underwhelming AI event in Paris – and it seems that AI chatbots aren't quite ready for prime-time in search engines. Reuters has spotted that one of Bard's answers in Google's demo was inaccurate.

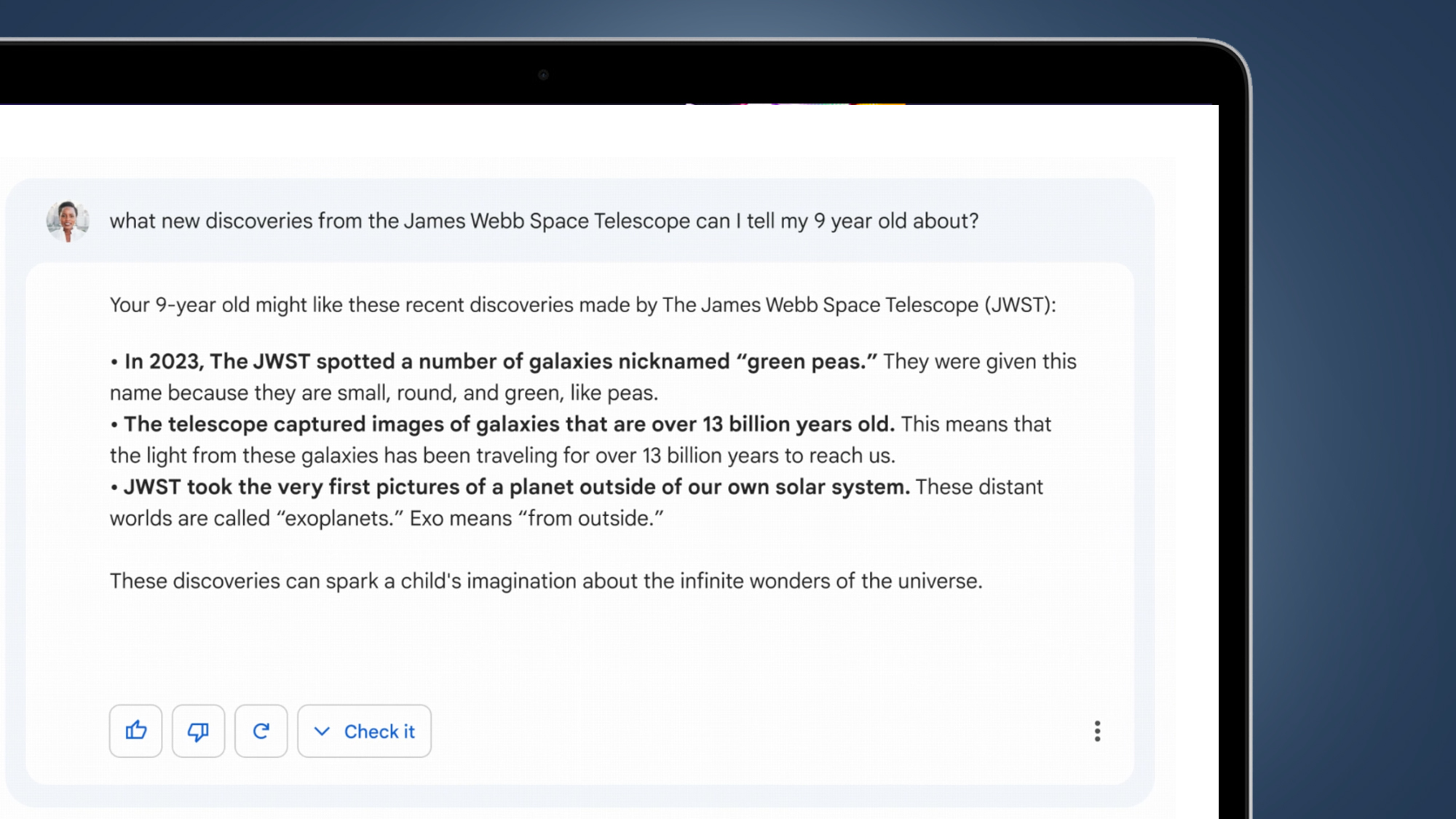

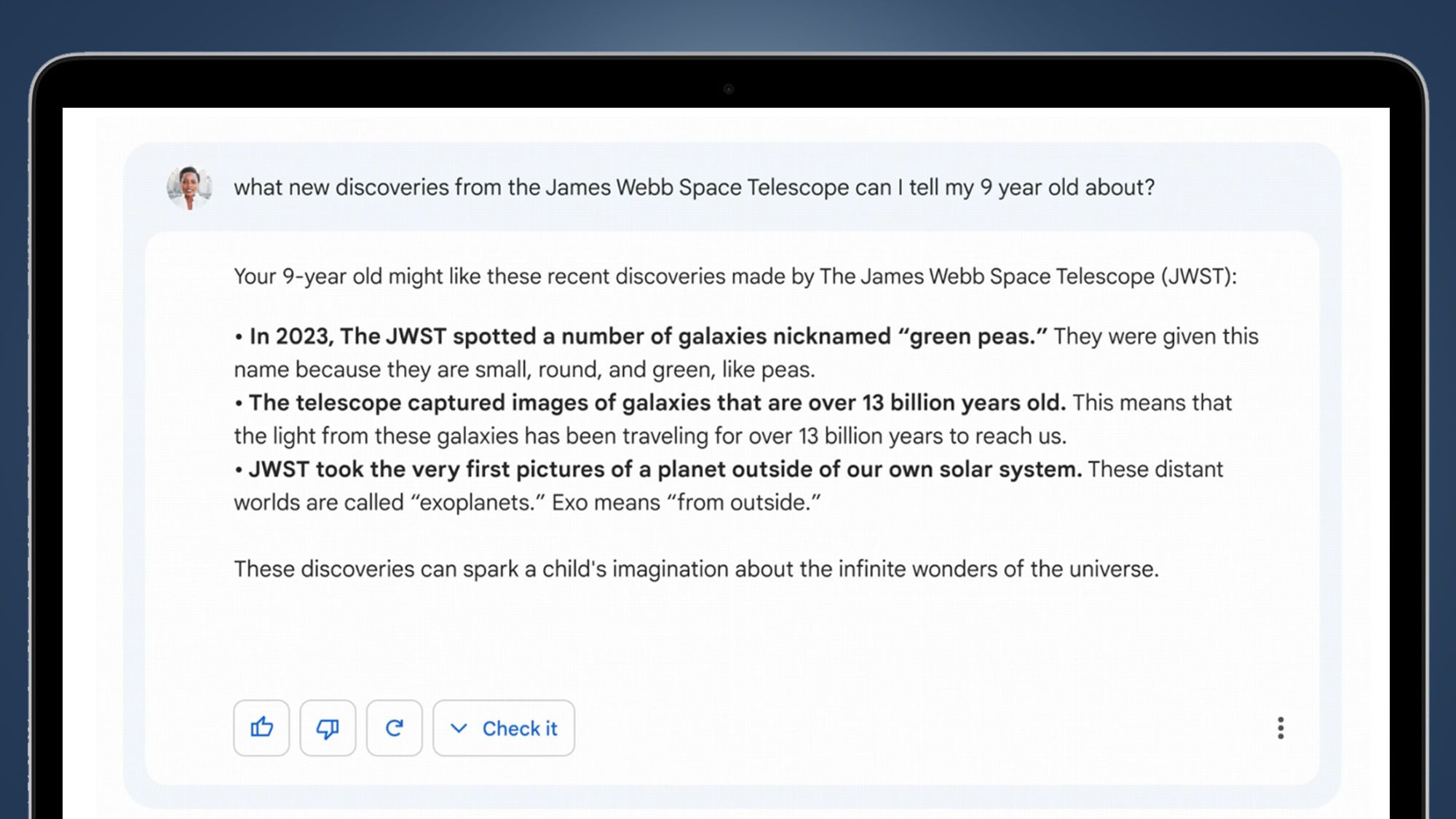

After being asked to provide some examples of discoveries from the James Webb Space Telescope, the AI chatbot suggested that the JWST "took the very first pictures of a planet out of our own solar system" – when that actually happened in 2004 thanks to the European Southern Observatory's Very Large Telescope.

A Google spokesperson admitted that “this highlights the importance of a rigorous testing process, something that we’re kicking off this week with our Trusted Tester program." The spokesperson added that “we’ll combine external feedback with our own internal testing to make sure Bard’s responses meet a high bar for quality, safety and groundedness in real-world information.”

Clearly, Microsoft has pushed Google into making Bard public a little earlier than it's comfortable with. And Google has now admitted as much in some leaked emails that suggest we're on a "long journey" to reliable chatbots.

Google has stressed that the AI chatbot is "experimental" and a disclaimer beneath the search box says that "Bard may give inaccurate or inappropriate information".

Still, that doesn't mean we aren't very much looking forward to taking Bard for a spin when it's available "in the coming weeks".