Intel Arc A770 somehow beats the Nvidia RTX 4090 in 8K video playback tests

AV1 video decoding is one area where Intel’s cheaper GPU seemingly has the edge

Intel’s Arc GPUs are finally here, and initial reception has been, well, mixed. The broad consensus seems to be that the flagship Arc A770 16GB is a decent mainstream gaming graphics card with a stellar price-to-performance ratio, but current driver and power consumption issues mean the Arc designs need a little refining.

One area where the reviews have been almost universally positive is media performance, particularly AV1 video encoding and decoding. For the uninitiated, smooth playback of high-resolution video relies on a dedicated media engine on the GPU, and the performance of that unit does not depend on the card's overall graphics horsepower.

This is how, somewhat bewilderingly, the $349 Arc A770 has been able to beat the $1,599 (if you’re lucky) RTX 4090 in a benchmark test. The creator of the capture and analysis tool CapFrameX has posted the results of an AV1 video decoding comparison between four recent GPUs, and the winner is Intel’s humble A770.

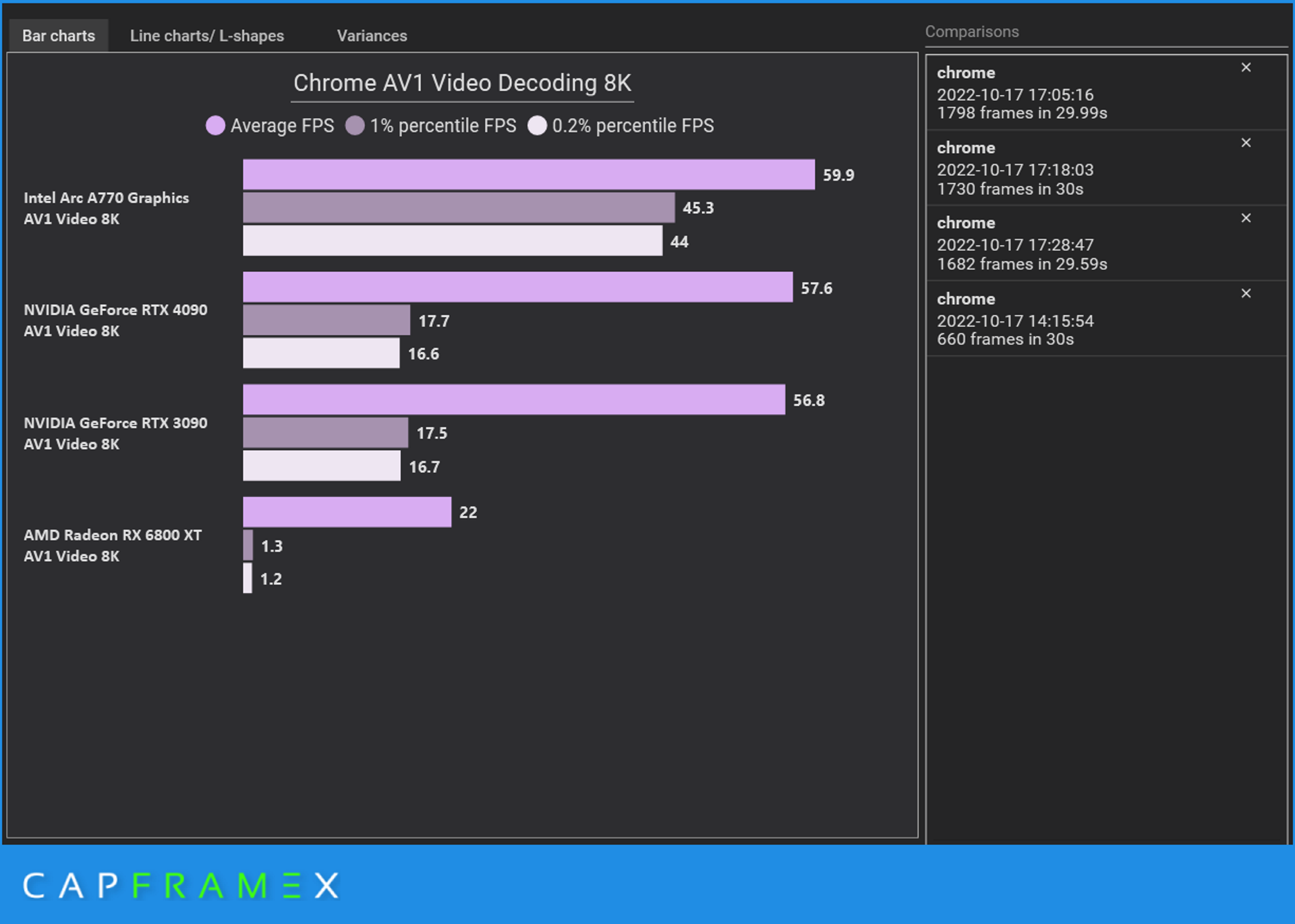

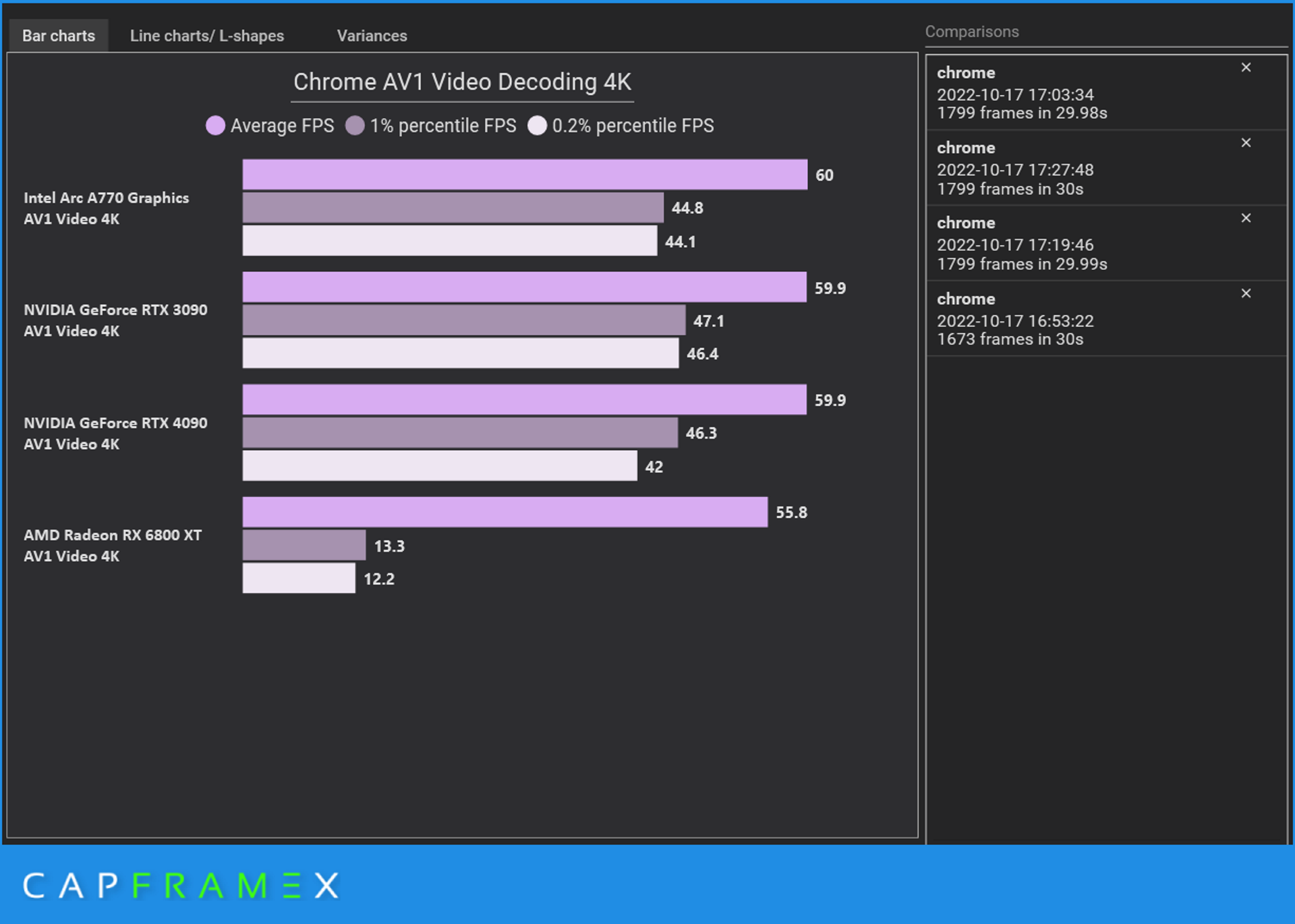

The other cards tested were the RTX 4090, the previous-gen RTX 3090, and AMD’s RX 6800 XT. The test involved playing back an AV1 video from YouTube (Japan in 8K 60fps) at both 8K and 4K resolution in the Google Chrome browser. To be ‘successful’ in the test, a GPU needed to sustain an average of 60 frames per second.

As you can see from the results above, only the Arc A770 delivered a perfect 60fps average at 4K, along with 59.9fps at 8K. Sure, the RTX cards aren’t far behind, managing 59.9fps at 4K, but they drop off more noticeably at 8K, with far lower frame rates in the bottom percentiles too. The poor Radeon RX 6800 XT is completely blown away, though in its defense, AMD has always marketed it as more of a gaming GPU.
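To make the difference between an average frame rate and a ‘bottom percentile’ frame rate concrete, here is a minimal Python sketch. It assumes a list of per-frame times in milliseconds (the kind of data a capture tool like CapFrameX records) and uses a simple nearest-rank percentile. The function names and the frame times are hypothetical and made up for illustration, and CapFrameX’s own formulas may differ.

```python
import math


def average_fps(frame_times_ms):
    """Average frame rate: frames shown divided by total elapsed seconds."""
    total_seconds = sum(frame_times_ms) / 1000.0
    return len(frame_times_ms) / total_seconds


def percentile_fps(frame_times_ms, pct):
    """Low-percentile frame rate, e.g. pct=1 gives a 'P1' figure.

    A low fps percentile corresponds to a high frame-time percentile,
    so we take the (100 - pct)th percentile of frame times using the
    nearest-rank method and convert it back to frames per second.
    """
    ordered = sorted(frame_times_ms)
    rank = math.ceil((100 - pct) * len(ordered) / 100)
    frame_time = ordered[max(rank, 1) - 1]
    return 1000.0 / frame_time


# Hypothetical trace: 98 smooth frames at 16 ms plus 2 stutters at 40 ms.
frame_times = [16.0] * 98 + [40.0] * 2
print(f"average: {average_fps(frame_times):.1f} fps")  # average: 60.7 fps
print(f"P1: {percentile_fps(frame_times, 1):.1f} fps")  # P1: 25.0 fps
```

In this made-up trace, two slow frames barely dent the average, which still clears 60fps, yet they drag the P1 figure down to 25fps. That is why weaker bottom-percentile numbers can show visible stutter even when two cards post near-identical averages.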

Analysis: Intel’s focus on video encoding is good news for content creators

As the dust settles after a slew of new releases, it’s starting to look like the big players in the GPU market are settling into their own specific niches. AMD, for example, is going all-out on the gaming angle, backing this up with its amazing 3D V-Cache CPU tech and great-value processors like the Ryzen 7 7700X.

Nvidia, meanwhile, is doubling down on its deep learning technology, positioning itself as the choice of GPU for people who need absolutely tons of raw graphical power. That could be enthusiast gamers looking to play at 8K, or it could be professionals who need high-end hardware for running 3D rendering or scientific analysis software.


Intel was incredibly late to the party, but it looks like Team Blue has its sights set on the budget GPU space. Aggressive pricing even on its flagship cards, combined with solid gaming performance and excellent video encoding hardware, makes the Arc A7 series a great choice for streamers and content creators who don’t want to drop thousands on Nvidia’s high-end GPUs.

With no cheaper RTX 4000 cards in sight yet, and AMD’s next-gen RDNA 3 GPUs still not here, Intel has a real chance to squeeze itself into the budget space and make life difficult for its competitors. But Nvidia and AMD’s loss is our gain; competition like this is good for the consumer, and with any luck we'll see Nvidia rethinking its currently brutal pricing.

Christian is TechRadar’s UK-based Computing Editor. He came to us from Maximum PC magazine, where he fell in love with computer hardware and building PCs. He was a regular fixture amongst our freelance review team before making the jump to TechRadar, and can usually be found drooling over the latest high-end graphics card or gaming laptop before looking at his bank account balance and crying.

Christian is a keen campaigner for LGBTQ+ rights and the owner of a charming rescue dog named Lucy, having adopted her after he beat cancer in 2021. She keeps him fit and healthy through a combination of face-licking and long walks, and only occasionally barks at him to demand treats when he’s trying to work from home.
