DeepMind's new AI learns like a human

It doesn't forget how to solve a problem

We have plenty of AIs that are better than humans at specific tasks, such as playing chess, but none with the breadth of ability of the human mind. An AI with that breadth is what researchers call 'artificial general intelligence', or AGI.

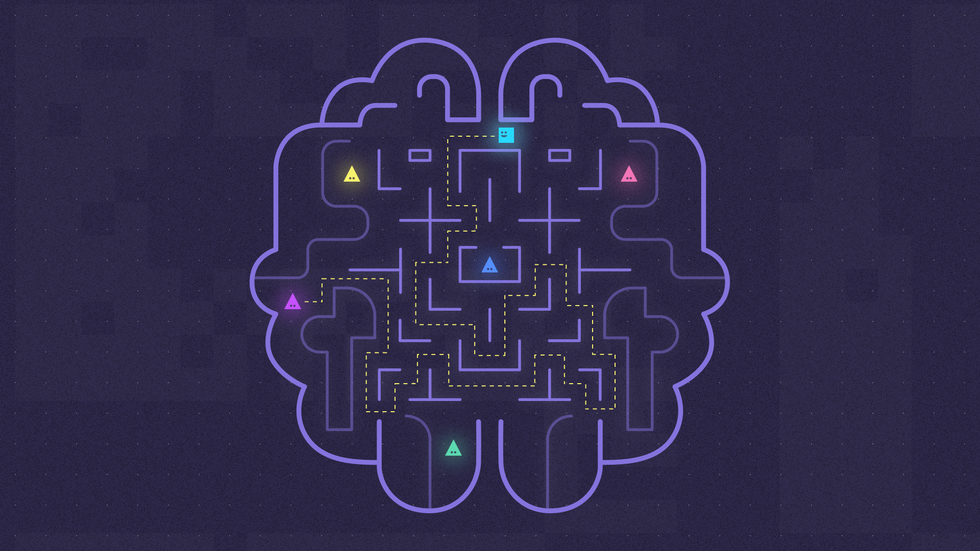

AGI has long been the holy grail of artificial intelligence research, and now Google's AI subsidiary, DeepMind, has developed an AI that takes a step towards it: it can recall previously learnt skills and apply them to new tasks.

Normally, neural networks suffer from 'catastrophic forgetting' – they can only learn a new task by overwriting their existing skills. But a team at DeepMind was able to avoid this by teaching the AI to preserve virtual brain connections that have proven useful.

When faced with a new task, the AI works out which connections in its neural network have been important for the tasks it has learnt so far, and makes them harder to change as it learns the new skill. “If the network can reuse what it has learned then it will do,” James Kirkpatrick from DeepMind told the Guardian.
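
For readers curious about the mechanics, here is a minimal toy sketch in Python of the idea Kirkpatrick describes, which the PNAS paper calls elastic weight consolidation. It is not DeepMind's code: the two-weight "network", the quadratic task losses, the targets and the penalty strength `lam` are all hypothetical, and task A's curvature `h_a` stands in for the Fisher information the paper uses to score how important each weight is. Training on task B alone drags weight 0 away from where task A needs it; adding a penalty proportional to each weight's importance keeps it close.

```python
# Toy illustration of catastrophic forgetting and an EWC-style fix.
# Hypothetical assumptions (not from the article): each "task" is a quadratic
# loss 0.5 * sum_i h[i] * (w[i] - target[i])**2 over two weights, and task A's
# curvature h_a stands in for the Fisher information used in the real method.


def task_loss(w, h, target):
    """Quadratic loss; h[i] says how much weight i matters to the task."""
    return 0.5 * sum(hi * (wi - ti) ** 2 for wi, hi, ti in zip(w, h, target))


def train_on_task_b(w_start, h_b, target_b, anchor=None, importance=None,
                    lam=0.0, lr=0.05, steps=500):
    """Gradient descent on task B. If an anchor is given, also add the penalty
    (lam / 2) * sum_i importance[i] * (w[i] - anchor[i])**2, which makes weights
    that mattered for the earlier task harder to move."""
    w = list(w_start)
    for _ in range(steps):
        for i in range(len(w)):
            grad = h_b[i] * (w[i] - target_b[i])
            if anchor is not None:
                grad += lam * importance[i] * (w[i] - anchor[i])
            w[i] -= lr * grad
    return w


# Task A depends only on weight 0; task B depends mostly on weight 1.
h_a, target_a = [10.0, 0.0], [2.0, 0.0]
h_b, target_b = [1.0, 10.0], [0.0, -1.0]

w_after_a = [2.0, 0.0]  # assume training on task A already reached its optimum

plain = train_on_task_b(w_after_a, h_b, target_b)
ewc = train_on_task_b(w_after_a, h_b, target_b,
                      anchor=w_after_a, importance=h_a, lam=1.0)

for name, w in [("plain", plain), ("ewc-style", ewc)]:
    print(f"{name:9s} w={[round(x, 3) for x in w]} "
          f"loss_A={task_loss(w, h_a, target_a):.3f} "
          f"loss_B={task_loss(w, h_b, target_b):.3f}")
```

Run as-is, plain training pushes task A's loss from zero to about 20, while the penalised version keeps it at about 0.17 and still learns task B's main weight (weight 1 ends at -1 in both runs). The trade-off is visible too: task B's loss ends a little higher with the penalty (about 1.65 instead of near zero), because weight 0 is held near the value task A needs.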

As good as a human

In testing, the research team gave the AI 10 different Atari games to play in a random order. After several days of play on each game, the AI was as good as a human player at an average of seven of them.

But, interestingly, the AI performed worse than a traditional neural network when confronted with just a single game.

"We have demonstrated that it can learn tasks sequentially, but we haven’t shown that it learns them better because it learns them sequentially," Kirkpatrick added. "There’s still room for improvement."

Learning new tasks without forgetting old ones is a crucial problem to solve on the path to artificial general intelligence. AGI is still a long way off, but research like this is an early step in the right direction. "There are many research challenges left to solve," said Kirkpatrick.

The details of DeepMind's research were published in the Proceedings of the National Academy of Sciences.
