Standing on the shiny shoulders of giants

The inventions that made all of today’s tech possible


Stories about time travel like to play with the idea of unfortunate consequences: you might travel back and accidentally kill one of your ancestors, erasing your whole family tree in the process (although if you did that, how could you go back if you never existed? Answers on a postcard to…).

But here we’re less worried about failing to exist than about the gadgets we all rely on today. Many of our modern devices owe their existence to technological breakthroughs made decades ago, and if you were to accidentally stop just one or two of these inventions from being created, the world of tech as we know it simply wouldn’t exist. It would be a very different – and probably less fun – place.

So when you’re holding your shiny smartphone in your hand, marvelling at how thin it is, how speedily it works, the advanced camera in the back and how it can tell where you are at all times, take a moment to thank the following inventions, and the inventors, that made it a reality.

1. Transistor

Bell Labs’ William Shockley, John Bardeen and Walter Brattain shared the 1956 Nobel Prize in Physics for the transistor, the building block of virtually all modern electronics.

Transistors could amplify electrical signals as well as turn them on or off, and their invention transformed everything from radios to space rockets.

The transistor was designed to solve a major problem for Bell Labs’ parent company, the telephone giant AT&T, in the middle of the 20th century: its network used vacuum tubes to amplify signals, and those tubes were unreliable, expensive and generated lots of heat.


By creating amplifiers that were dependable, relatively cheap and considerably cooler, the researchers fired the starting pistol for the information age.

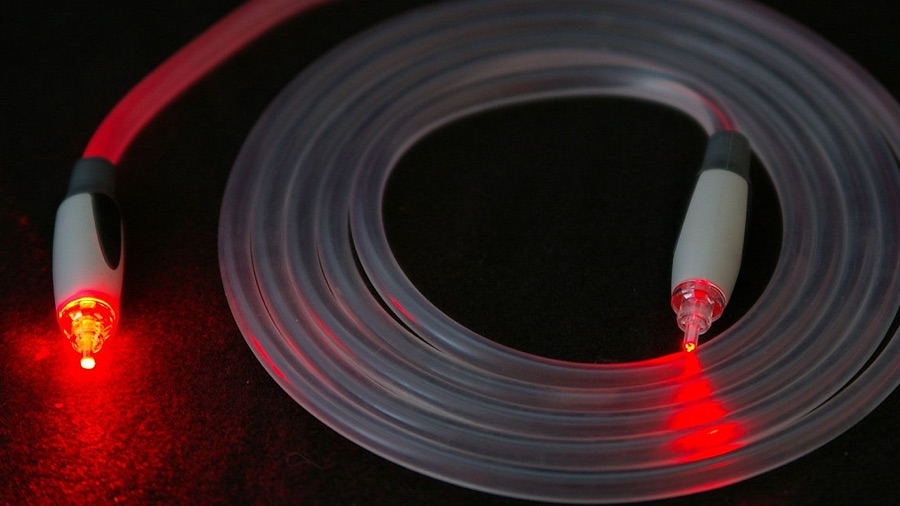

2. Fiber optics

In 1952, UK-based physicist Narinder Singh Kapany began the research that would earn him the title ‘the father of fiber optics’.

He didn’t invent optical fibers, but he found a way to deliver much better image quality through them than had been managed before.

Optical fiber transmits light, not electricity, and as a result it can carry data at much higher speeds and over much longer distances than traditional copper cabling – and it doesn’t suffer from the electromagnetic interference that plagues metal cables.

Without fiber, modern super-fast broadband wouldn’t be possible – and we haven’t reached its full potential yet. In 2012, Nippon Telegraph and Telephone Corporation (NTT) transmitted data at one petabit per second – that’s a billion megabits per second – over 50km of a single fiber.

3. Cellular networks

![Cellular network](CELLULAR.PNG)

You’re probably familiar with the term cellular network – but do you actually know what it is?

The idea of a cellular network came into being in the middle of the last century. It’s a communications network that’s wired all the way up to the final link, where a base station uses radio waves to connect to devices wirelessly.

The area each base station covers is called a cell, and each cell’s coverage slightly overlaps that of its neighbors to deliver continuous coverage. As you move out of one cell’s range you move into another’s, and your connection should be handed over from cell to cell as you travel around.

Cellular networking made the mobile phone possible, and as technology improved it became possible to deliver data as well as voice. Today’s phone networks are mainly digital – voice is just another kind of data.

4. Wi-Fi

Before Wi-Fi, computing meant cabling. Lots and lots of cabling. Now, thanks to Wi-Fi, more and more of our computing is cable-free.

The first version of Wi-Fi as we know it, the 802.11 standard, was released in 1997 by a working group chaired by a chap named Vic Hayes, in a bid to create a common specification that everyone could follow.

Its successor, 1999’s 802.11b, delivered speeds of up to 11Mbps and was a huge hit. The Wi-Fi Alliance was formed to manage the Wi-Fi trademark, but the standards themselves are set by the IEEE (Institute of Electrical and Electronics Engineers).

Wi-Fi didn’t just free our computers and make surfing from the sofa easier; it’s also made smart homes and the internet of things possible, and it’s even starting to appear in cars.

5. MP3

MP3 – or MPEG-2 Audio Layer III, to give it the name its mother uses – wasn’t the first file format to compress music, but it’s the one that transformed not just the music business but the way we consume music, largely because it was the favored file format of song-swapping service Napster.

By discarding sound that listeners are unlikely to notice, MP3 traded a little audio quality for much smaller files, which made it possible to download songs even on slow connections – and now that broadband is much faster, an entire album in MP3 format can be downloaded in seconds.

Without MP3 there would be no iPod, no Spotify – and no endless scrolling past Ed Sheeran songs in the download charts.

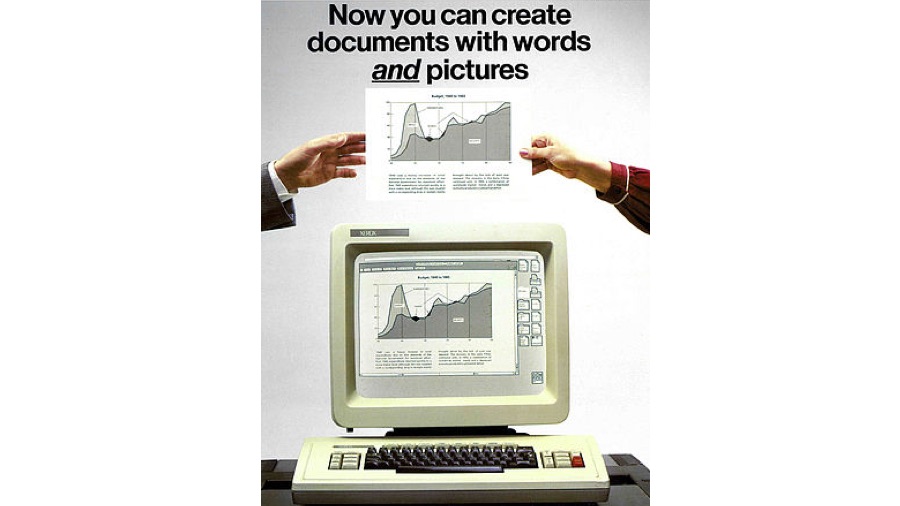

6. WIMP

The Xerox 8010 Information System might not be a name that’s on the tip of everyone’s tongue, but this 1981 workstation had something special: the Star software.

It was the first commercial system to use things that nowadays we take for granted: bitmapped displays, windows, icons, folders, mice, Ethernet, file servers, print servers and email.

The Windows, Icons, Mouse and Pointer (WIMP) interface was cheerfully nicked by Apple and Microsoft, and the borrowing ultimately led to amusing but fruitless legal battles, with Apple suing Microsoft and Xerox suing Apple over their user interfaces.

From Apple’s macOS to Android, WIMP’s influence is everywhere today, and it remains the basis of how we interact with machines.

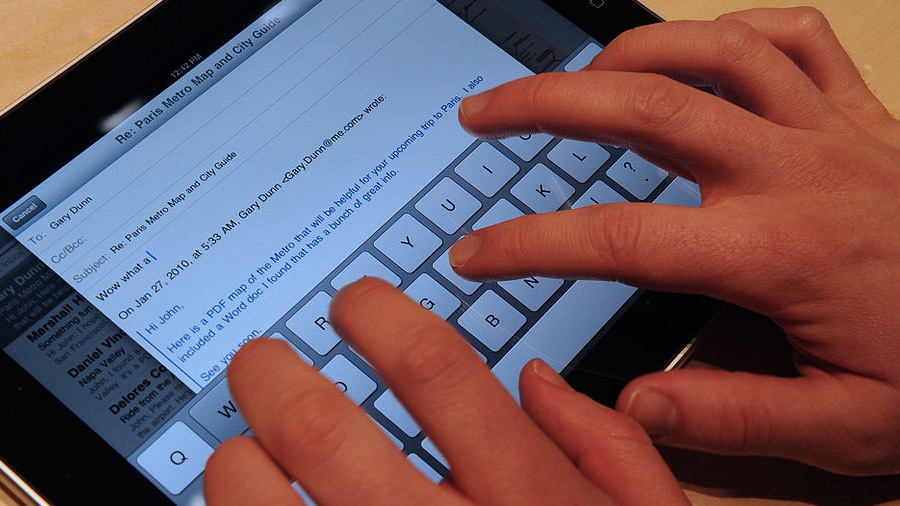

7. Multi-touch

The iPhone wasn’t the first smartphone, or the first touch-sensitive phone. But it was the first multi-touch phone, and that turned out to be very important indeed.

The technology had been kicking around since the 1970s, but Apple arguably perfected it – and Apple definitely popularized it, with the iPhone kick-starting a wave of development that revolutionized smartphone (and soon afterwards, tablet and even PC) design.

When it’s teamed up with a good user interface, multi-touch removes many of the barriers that make PCs hard to use: the notion of iPad-toting toddlers tapping on touchscreens is a cliché, but that’s because it’s true, and multi-touch clearly feels natural to a developing mind.

8. GPS

The Global Positioning System (GPS) was developed in the 1970s by the US government and was long managed by the US Air Force (today it’s operated by the US Space Force), but unusually for a military system it’s freely available to civilian users too.

A GPS receiver works out its location by timing signals from several satellites, and provided it has line-of-sight access to at least four of them, it’s incredibly fast and accurate.

A second satellite navigation system, Russia’s GLONASS, is also available and can make receivers even more accurate, and there are others too – the EU’s Galileo, India’s NavIC, China’s BeiDou and so on – with phones and watches increasingly supporting several of them at once to locate you ever more accurately.

It’s almost funny that a technology designed to help target weapons is now being used for a very different kind of targeting: social media uses location awareness to help decide what ads it’s going to show you.

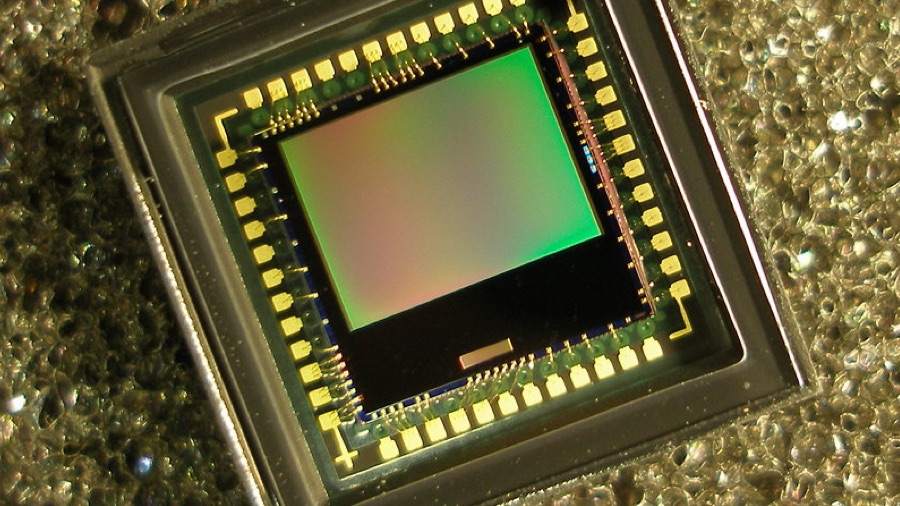

9. CMOS

The CCD – Charge-Coupled Device – was invented in 1969 and was the original sensor or ‘eye’ of a digital camera, but the newer CMOS (Complementary Metal-Oxide Semiconductor) sensor is going places where CCDs couldn’t, such as smartphones.

The move to CMOS is why smartphone and portable camera technology has changed so dramatically in recent years: CMOS sensors use less power and read out images faster than CCDs, and they enable features such as shooting HD and ever-higher-resolution video on your phone, Apple’s Live Photos and better low-light photography.

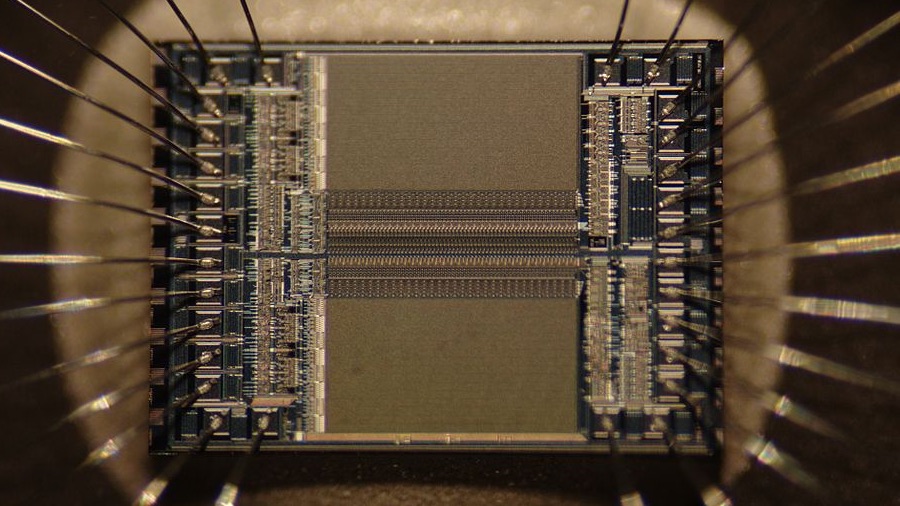

10. Integrated circuit

The integrated circuit changed everything. Without it we wouldn’t have PCs or games consoles, smartphones or Sony Walkmans, in-car entertainment or internet, space shuttles or smart TVs… if you’re a time traveller who wants to prevent the modern world from becoming modern, this is the one to go for.

Before the integrated circuit was invented, technology had hit a wall: devices were so complex, and used so many components and vacuum tubes, that ‘the tyranny of numbers’ kicked in.

This meant that adding more components made a device less reliable and harder to run and repair. Tubes used enormous amounts of power and needed replacing almost daily.

Like most technologies, the integrated circuit has many parents, but key patents were filed in 1952 and 1953 by Sidney Darlington, Bernard Oliver and Harwick Johnson, while in 1958 Texas Instruments’ Jack Kilby built the first working prototype of an integrated circuit.

The idea was to combine transistors and other components in a single block of semiconductor material: no separate wires, no weak connections that could fail.

The first monolithic silicon integrated circuit was built by Fairchild Semiconductor in 1960 under the guidance of Robert Noyce, who would go on to co-found a plucky little startup called Intel.

Today, there are more integrated circuits on Earth than there are people.

This article was brought to you in association with Vodafone

Contributor

Writer, broadcaster, musician and kitchen gadget obsessive Carrie Marshall has been writing about tech since 1998, contributing sage advice and odd opinions to all kinds of magazines and websites as well as writing more than twenty books. Her latest, a love letter to music titled Small Town Joy, is on sale now. She is the singer in spectacularly obscure Glaswegian rock band Unquiet Mind.