Micron launches a 256GB SOCAMM2 memory module using 64 32Gb LPDDR5x chips, and yes, hyperscalers can shove 8 in an AI server to reach 2TB capacity: mere mortals need not apply

Total capacity exceeds the previous module generation by a third


- Micron introduces dense 256GB LPDDR5x module aimed squarely at AI servers

- Eight SOCAMM2 modules can push server memory capacity to a massive 2TB

- AI inference workloads increasingly shift performance bottlenecks toward system memory capacity

Large language models (LLMs) and modern inference pipelines increasingly demand enormous memory pools, forcing hardware vendors to rethink server memory architecture.

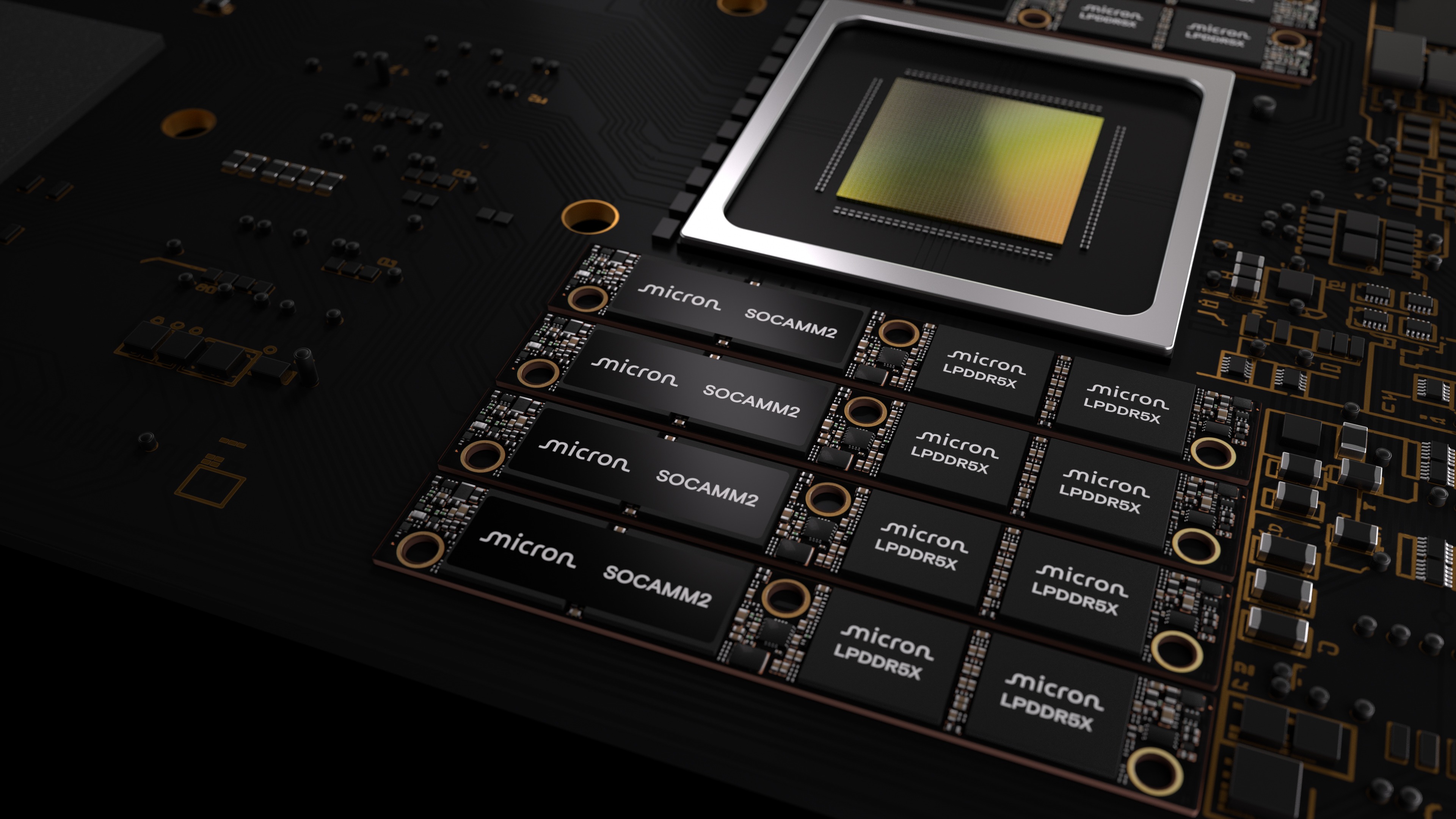

Micron has now introduced a 256GB SOCAMM2 memory module intended for data center systems where capacity, bandwidth, and power efficiency all influence overall performance.

The module relies on 64 monolithic 32Gb (gigabit) LPDDR5x dies, forming a dense LPDRAM package that addresses the growing memory footprint of contemporary AI workloads.
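The headline capacity follows directly from the die count, provided each die is read as 32 gigabits (the unit Micron uses when describing its 32Gb monolithic die), not 32 gigabytes. A minimal Python sketch of that arithmetic, assuming 8 bits per byte and ignoring any ECC or spare capacity, which the announcement does not detail:

```python
# Capacity check for the 256GB SOCAMM2 module.
# Assumption: each die stores 32 gigabits (Gb); ECC/spare capacity is ignored.
DIES_PER_MODULE = 64
GBITS_PER_DIE = 32


def module_capacity_gb(dies: int, gbits_per_die: int) -> int:
    """Return module capacity in gigabytes from die count and per-die gigabits."""
    total_gbits = dies * gbits_per_die  # 64 * 32 = 2,048 Gb
    return total_gbits // 8  # 8 bits per byte


print(module_capacity_gb(DIES_PER_MODULE, GBITS_PER_DIE))  # 256
```

The unit matters: 64 dies of 32 gigabytes each would add up to 2TB on a single module, so only the 32Gb reading is consistent with a 256GB part.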

Scaling memory capacity for AI server platforms

The module also increases the maximum memory available per processor configuration. When eight of these SOCAMM2 modules are installed in an eight-channel server CPU, the total capacity can reach 2TB of LPDRAM.

Each 256GB module holds roughly one-third more than the previous 192GB generation, allowing systems to accommodate larger context windows and more demanding inference tasks.
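The per-server total and the generational gain can be checked the same way. A short Python sketch, assuming one module per channel on an eight-channel CPU as described, and treating 1TB as 1,024GB:

```python
# Per-server capacity and generation-over-generation gain for SOCAMM2.
# Assumptions: one module per memory channel on an eight-channel CPU;
# 1 TB is taken as 1,024 GB.
MODULES_PER_CPU = 8
NEW_MODULE_GB = 256
PREV_MODULE_GB = 192


def system_capacity_tb(modules: int, module_gb: int) -> float:
    """Total memory in TB for a given module count and per-module capacity."""
    return modules * module_gb / 1024


def generational_gain(new_gb: int, prev_gb: int) -> float:
    """Fractional capacity increase of the new module over the previous one."""
    return (new_gb - prev_gb) / prev_gb


print(system_capacity_tb(MODULES_PER_CPU, NEW_MODULE_GB))   # 2.0
print(system_capacity_tb(MODULES_PER_CPU, PREV_MODULE_GB))  # 1.5
print(f"{generational_gain(NEW_MODULE_GB, PREV_MODULE_GB):.1%}")  # 33.3%
```

The one-third gain applies equally to a single module and to a fully populated eight-channel system, since the module count is the same in both generations.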

Micron describes the SOCAMM2 design as more efficient than conventional server memory modules.

“Micron’s 256GB SOCAMM2 offering enables the most power-efficient CPU-attached memory solution for both AI and HPC,” said Raj Narasimhan, senior vice president and general manager of Micron’s Cloud Memory Business Unit.


“Our continued leadership in low-power memory solutions for data center applications has uniquely positioned us to be the first to deliver a 32Gb monolithic LPDRAM die, helping drive industry adoption of more power-efficient, high-capacity system architectures.”

The company says the new LPDRAM module consumes roughly one-third the power of comparable RDIMMs while occupying only one-third of the physical footprint.

Its reduced energy demand and smaller module size allow higher rack density inside large data center deployments, while lower memory power reduces the thermal load and infrastructure costs.

The SOCAMM2 architecture also follows a modular design intended to simplify maintenance and future expansion.

The format supports liquid-cooled server systems and can accommodate additional capacity as memory requirements grow alongside model complexity and dataset scale.

Micron states the 256GB SOCAMM2 module can speed up certain inference operations in systems with unified memory architectures.

The company reports more than a 2.3x improvement in time-to-first-token during long-context inference when the module is used for key-value (KV) cache offloading.
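To see why capacity matters for KV cache offloading, the cache size can be estimated from a model's dimensions: two tensors (keys and values) per layer, per token. A minimal Python sketch under that standard sizing; the model shape below is hypothetical and is not the configuration behind Micron's reported figures:

```python
# Rough KV-cache footprint for long-context inference.
# All model dimensions are hypothetical, chosen to resemble a large
# grouped-query-attention transformer; they are not Micron's test setup.

GIB = 1024 ** 3


def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   seq_len: int, batch: int = 1, bytes_per_elem: int = 2) -> int:
    """Bytes needed to hold keys and values for every layer and token."""
    # Factor of 2: one tensor for keys, one for values.
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_elem


# Hypothetical model: 80 layers, 8 KV heads of dimension 128, FP16 cache,
# one sequence of 128K tokens.
per_seq = kv_cache_bytes(layers=80, kv_heads=8, head_dim=128, seq_len=128 * 1024)
print(per_seq / GIB)  # 40.0

# How many such sequences fit in 2TB (treated as 2 TiB) of host memory,
# ignoring everything else the system keeps in RAM.
host_bytes = 2 * 1024 * GIB
print(host_bytes // per_seq)  # 51
```

At tens of gigabytes per long-context sequence, the cache quickly outgrows accelerator memory, which is why offloading it to large CPU-attached pools is attractive.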

In standalone CPU workloads, the LPDRAM configuration reportedly delivers more than 3x better performance per watt than mainstream server memory modules.

The company’s LPDRAM portfolio spans components from 8GB to 64GB and SOCAMM2 modules from 48GB to 256GB. Customer samples of the 256GB SOCAMM2 module are already shipping.


Efosa has been writing about technology for over 7 years, initially driven by curiosity but now fueled by a strong passion for the field. He holds both a Master's and a PhD in sciences, which provided him with a solid foundation in analytical thinking.
