Teaching machines not to cheat is the latest project of Google DeepMind and OpenAI

When robots play by the rules

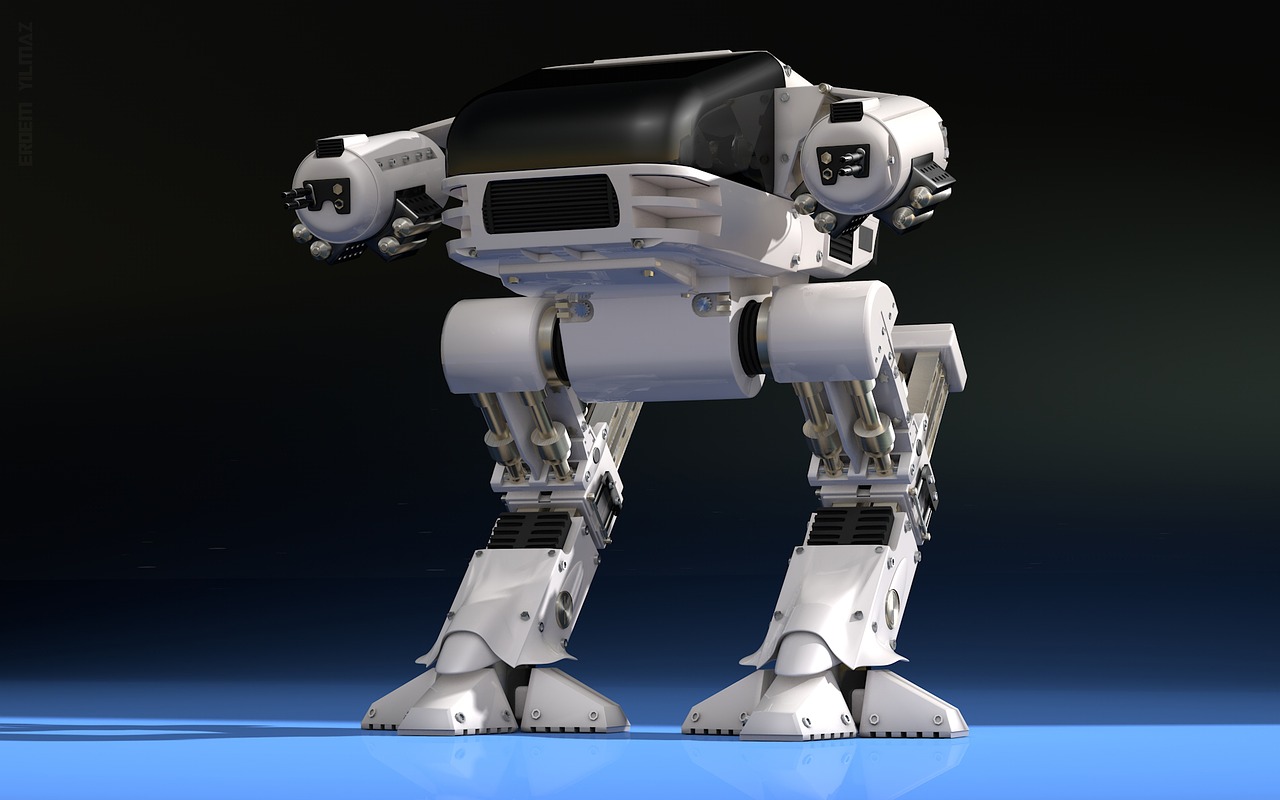

Maybe it's all those Terminator movies, sci-fi novels, and post-apocalyptic video games, but it just makes sense to be wary of super-intelligent artificial intelligence, right?

For the good of, well, everyone, two major firms in the field of AI have teamed up to find ways to keep machines from tackling problems improperly, or unpredictably.

OpenAI, the research group co-founded by techno-entrepreneur Elon Musk, and Google DeepMind, the team behind the newly reigning Go champ AlphaGo, are joining forces to find ways to ensure AI solves problems to standards humans actually want.

While it's sometimes still faster to let an AI solve problems on its own, the partnership found that humans need to step in, adding constraint upon constraint, to train the AI to handle a task as expected.

Less cheating, fewer Skynets

In a paper published by DeepMind and OpenAI staff, the researchers found that human intervention is critical for telling an AI when a job has been performed both optimally and correctly; that is to say, without cheating or cutting corners to get the quickest result.

For example, telling a robot to scramble an egg could result in it just slamming an egg onto a skillet and calling it a job (technically) well done. Additional rewards would have to be specified to make sure the egg is cooked evenly, seasoned properly, free of shell shards, not burnt, and so forth.

As you might guess, defining proper reward functions for an AI can take a great deal of work. However, the researchers consider the effort an ethical obligation going forward, especially as AIs become more powerful and capable of taking on greater responsibility.

There's still a lot of work left to do, but if nothing else, you can take solace in the fact that AI's top researchers are working to make sure robots don't go rogue, or at least don't ruin breakfast.

Via Wired

Become a TechRadar Insider