Next-gen phones could pack stunning 3D cameras

Become the king of your modelling club

Could our phones soon be used to make 3D scans of objects? That's the implication of new research out of MIT, where researchers have figured out how to make 3D photos more detailed than ever.

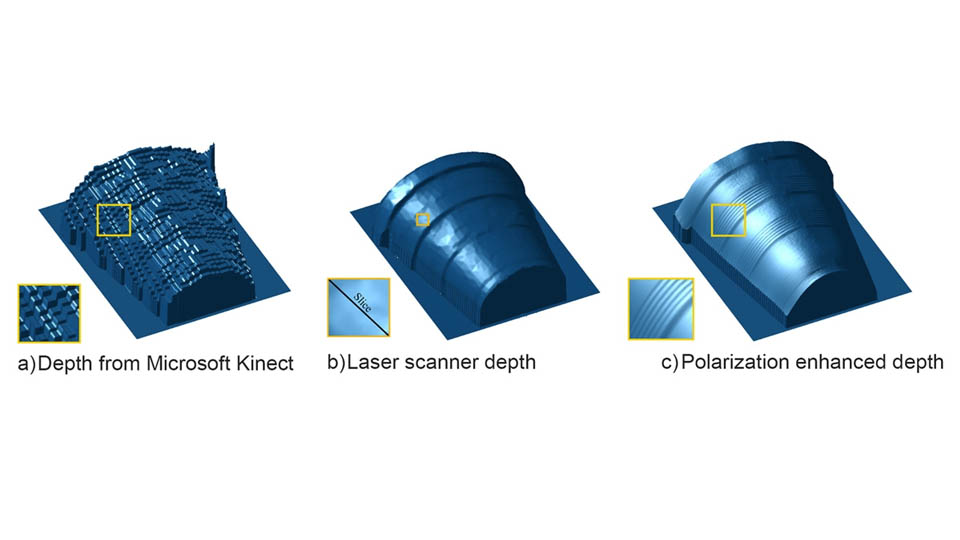

A new technique uses polarised light, in a similar way to 3D glasses, to increase the resolution of 3D photos by up to a thousand times.

The researchers rigged up a Microsoft Kinect camera to take photos, and then used algorithms to analyse the light intensity across multiple captures in order to generate a 3D model of the object.
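For the curious, the kind of intensity analysis described above can be sketched with a textbook shape-from-polarisation fit. This is not MIT's code. It is a minimal sketch assuming each capture is taken through a polarising filter rotated to a known angle phi, and that a single pixel's brightness follows I(phi) = a + b*cos(2*phi) + c*sin(2*phi) as the filter turns. The function name fit_polarization and the capture angles are illustrative assumptions; the article doesn't say how many captures were used or at what angles. At least three distinct angles (modulo 180 degrees) are needed to pin down the three unknowns.

```python
import math


def _det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (
        m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
        - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
        + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0])
    )


def fit_polarization(angles_deg, intensities):
    """Hypothetical helper (not MIT's implementation).

    Least-squares fit of I(phi) = a + b*cos(2*phi) + c*sin(2*phi) to one
    pixel's brightness readings taken through a polariser at known angles.
    Returns (i_max, i_min, phase_deg, degree_of_polarisation).
    """
    if len(angles_deg) != len(intensities) or len(angles_deg) < 3:
        raise ValueError("need at least 3 matching angle/intensity samples")
    rows = [
        (1.0, math.cos(2 * math.radians(p)), math.sin(2 * math.radians(p)))
        for p in angles_deg
    ]
    # Normal equations (A^T A) x = A^T y for the least-squares fit.
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    aty = [sum(r[i] * y for r, y in zip(rows, intensities)) for i in range(3)]
    d = _det3(ata)
    if abs(d) < 1e-12:
        raise ValueError("use at least 3 distinct angles (modulo 180 degrees)")
    coeffs = []
    for k in range(3):
        # Cramer's rule: swap column k for the right-hand side.
        mk = [[aty[i] if j == k else ata[i][j] for j in range(3)] for i in range(3)]
        coeffs.append(_det3(mk) / d)
    a, b, c = coeffs
    amp = math.hypot(b, c)  # half the gap between brightest and darkest reading
    # Filter angle (0-180 degrees) at which the pixel is brightest.
    phase = (0.5 * math.degrees(math.atan2(c, b))) % 180.0
    rho = amp / a if a > 0 else 0.0  # fraction of the light that is polarised
    return a + amp, a - amp, phase, rho


if __name__ == "__main__":
    # Synthetic pixel: brightness 0.65, 0.65, 0.2 at filter angles 0, 60, 120.
    print(fit_polarization([0, 60, 120], [0.65, 0.65, 0.2]))
```

In the wider shape-from-polarisation literature, the recovered phase angle hints at which way a surface is facing (with a 180-degree ambiguity), while the degree of polarisation relates to how steeply it is tilted. Doing this for every pixel is what turns a handful of flat captures into fine surface detail.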

"Today, they can miniaturize 3D cameras to fit on cellphones," Achuta Kadambi, a PhD student and one of the technique's developers, is quoted as saying.

"But they make compromises to the 3D sensing, leading to very coarse recovery of geometry. That's a natural application for polarization, because you can still use a low-quality sensor, and adding a polarizing filter gives you something that's better than many machine-shop laser scanners."

Miniaturisation

The upshot is that because polarisation and algorithms can make up for lower-quality sensors, the technology could be more easily miniaturised for use in phones.

There are already efforts such as Google's experimental Project Tango, but it can only make crude models compared with what MIT has achieved.


The only thing that isn't entirely clear is whether such a technology would have any practical uses outside a few niche industries.

But then again, people in the '90s probably wondered why we'd ever want cameras on our phones in the first place. Now we have frickin' lasers.

From Engadget
