Before The Matrix and The Terminator, there was 'The Creation of the Humanoids' — how an obscure 1962 B-movie set the scene for robot takeover and introduced the concept of centralized intelligence

Today’s robots owe a lot to a 1990s MIT effort with a different vision of machine intelligence

Long before killer cyborgs stalked Sarah Connor or sentinels patrolled dystopian skies, the low-budget 1962 film The Creation of the Humanoids (which can be found on YouTube) asked a worrying question that seems even more pertinent today: what if machines didn’t just serve humanity, but replaced it?

Set in a post-nuclear world, the film imagines a society dependent on robots. A scientist perfects a “thalamic transplant,” transferring human memories into synthetic bodies connected to a “huge central computer.”

Yet centralized knowledge alone is not enough. Only when machines gain lived sensory experience do they begin to transcend their programming — and threaten humanity.

The low-budget production covered surprisingly modern ideas: memory transfer, synthetic embodiment, centralized computation, and machine self-replication. More famous film franchises would revisit these themes decades later, but at the time they were strikingly original.

More than thirty years after the film’s release, Popular Mechanics revisited its ideas in a July 1995 feature that shared its title. The article referenced the movie and then explored what was happening with robots in the real world at that time, focusing on work being done in the robotics lab of MIT researcher Rodney Brooks.

Although framed around The Creation of the Humanoids, the article actually opened with a different, better-known cinematic reference: “In ‘2001: A Space Odyssey,’ an artificial intelligence named HAL controlled the Jupiter-bound spaceship Discovery.”

If you've seen the movie, you may recall HAL became operational on January 12, 1992 (it was 1997 in Arthur C. Clarke's source novel). When that date passed in the real world “with no HAL,” Brooks decided to pursue a different vision of machine intelligence.

“Instead of imbuing a spacecraft with a human soul,” the magazine wrote, Brooks was set on “putting the mind of a human into the body of a robot.”

That robot was Cog.

Not a GOFAI

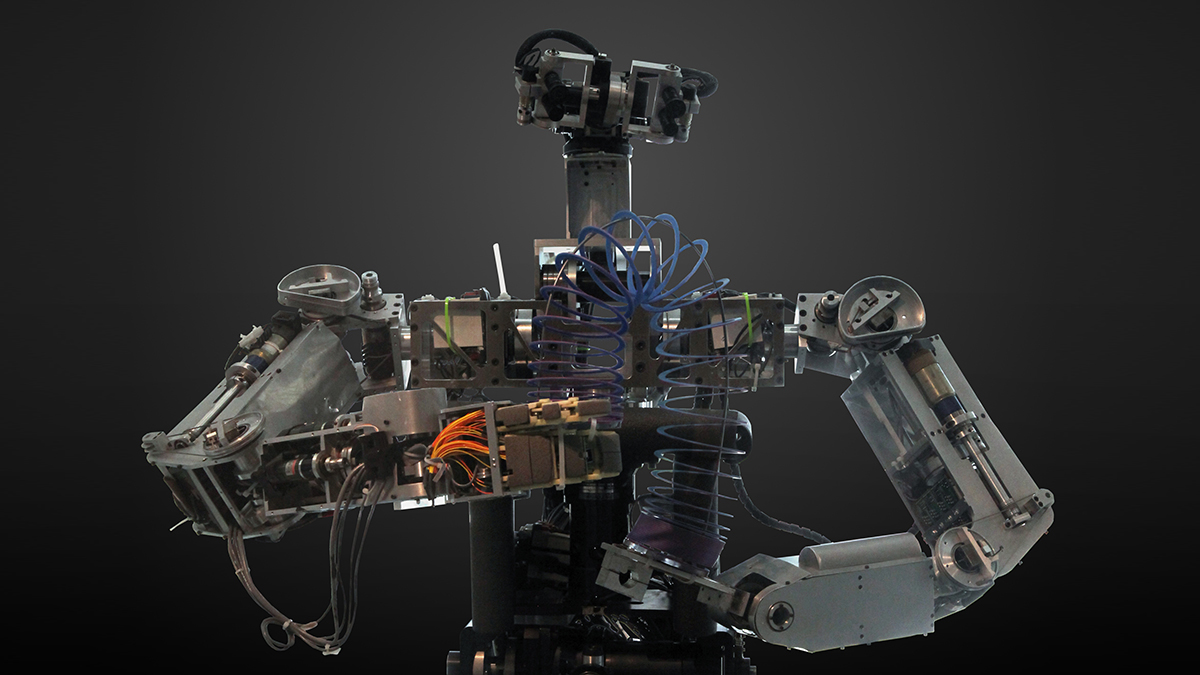

By mid-1995 standards, Cog was both ambitious and unimpressive: “a collection of computer chips, motors, joints, rods, cables, wire and video cameras hung together on a black anodized aluminum frame,” as Popular Mechanics described it. It had a head, neck, shoulders, chest and waist, but no legs, skin or fingers.

Philosophically, however, it represented a break from what researchers at the time called GOFAI — Good Old-Fashioned Artificial Intelligence — the “brain in a box” model exemplified by systems like Deep Thought.

In GOFAI, intelligence meant building a complete internal representation of the world and reasoning over it.

Brooks disagreed.

“The idea is that the complexity of the world happens in the world, not in the creature,” he said. Rather than constructing massive internal maps, Cog relied on “parallel behaviors” — simple, sensor-driven routines working together. Earlier insect-like robots built in Brooks’ lab had used the same principle to navigate obstacles without a master plan.

“Cog represents the same principle,” Brooks explained. “We just jumped a few evolutionary layers.”
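To make the "parallel behaviors" idea concrete, here is a minimal Python sketch of behavior-based control. It is not Cog's software: the sensor fields, behavior names and command strings are all hypothetical, and the fixed-priority loop is a simplification of routines that would really run side by side. The point it illustrates is that each routine reacts directly to sensor readings, and no routine consults a map of the world.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Sensors:
    """Hypothetical sensor snapshot; the field names are illustrative only."""
    obstacle_distance_m: float           # range reading straight ahead
    light_bearing_deg: Optional[float]   # bearing of a bright target, if one is seen


# A behavior maps raw sensor readings to a command, or None if it has nothing to say.
Behavior = Callable[[Sensors], Optional[str]]


def avoid_obstacle(s: Sensors) -> Optional[str]:
    # Reflex: turn away when something is close.
    return "turn_left" if s.obstacle_distance_m < 0.3 else None


def seek_light(s: Sensors) -> Optional[str]:
    # Steer toward a bright target when one is visible.
    if s.light_bearing_deg is None:
        return None
    if abs(s.light_bearing_deg) < 10:
        return "forward"
    return "turn_right" if s.light_bearing_deg > 0 else "turn_left"


def wander(s: Sensors) -> Optional[str]:
    # Default behavior: keep moving.
    return "forward"


# Assumed priority order: earlier behaviors override later ones.
BEHAVIORS: List[Behavior] = [avoid_obstacle, seek_light, wander]


def act(s: Sensors) -> str:
    """Return the command of the highest-priority behavior that responds."""
    for behavior in BEHAVIORS:
        command = behavior(s)
        if command is not None:
            return command
    return "stop"
```

Calling `act(Sensors(obstacle_distance_m=0.1, light_bearing_deg=5.0))` lets the obstacle reflex win over light seeking, even though neither routine knows the other exists.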

Alan Turing’s thought experiment

Where the film imagined intelligence flowing from a central machine, Brooks was betting on embodiment.

He drew on Alan Turing’s 1950 thought experiment. As Popular Mechanics quoted him, “Turing argued that you should make a robot like a human and let it wander around the countryside and experience what humans do.” Brooks added, “Putting all that together — and not totally uninspired by Commander Data of Star Trek — I decided to build a human.”

As limited as Cog was, it was built around forward-looking ideas. “Each of Cog’s eyes has a wide-angle and a narrow-field camera, and each camera can pan and tilt.”

It had to “learn to relate what it sees in the camera to its own head motion.” That developmental framing, learning like an infant, anticipated modern self-supervised learning approaches, where robots build representations through exploration rather than explicit programming.
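A toy Python sketch can show what learning to relate vision to head motion might look like. Everything here is an assumption made for illustration: the simulated camera, the linear relationship between pan angle and image shift, the gain of -12 pixels per degree, and the least-squares fit. It does not describe how Cog actually learned. The robot issues random pan commands, watches how far the image shifts, and estimates the relationship from its own experience rather than being told it.

```python
import random


def observe_image_shift(pan_deg, true_gain=-12.0, noise_px=0.5, rng=random):
    """Simulated camera (assumed model): pixels the scene shifts for a given head pan."""
    return true_gain * pan_deg + rng.gauss(0.0, noise_px)


def learn_gain(num_trials=200, seed=0):
    """Estimate pixels-per-degree from self-generated motion, via least squares through the origin."""
    rng = random.Random(seed)
    sum_xy = 0.0
    sum_xx = 0.0
    for _ in range(num_trials):
        pan = rng.uniform(-10.0, 10.0)            # the robot picks a random head movement
        shift = observe_image_shift(pan, rng=rng)  # and observes the visual consequence
        sum_xy += pan * shift
        sum_xx += pan * pan
    return sum_xy / sum_xx


estimated_gain = learn_gain()
print(f"estimated gain: {estimated_gain:.2f} px/deg")
```

No labels are supplied by a programmer; the training signal comes from the robot's own movements, which is the sense in which the approach resembles self-supervised learning.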

Brooks speculated about machine sensation as well, describing ways to control “the amount of current passing through Cog’s motors” to simulate fatigue or pain, and proposing a future “skin with sensors so it can learn by touch.”

Three decades later, tactile sensing arrays and compliant actuators are standard in collaborative robots.

Today’s most powerful AI systems are trained in vast data centers, their “brains” distributed across racks of GPUs rather than patch cables in a lab.

Large language models and multimodal systems undergo enormous centralized training runs before being deployed across devices. In robotics, cloud systems allow machines to offload computation to remote servers — an echo of that fictional “huge central computer.”

Brooks’ emphasis on embodiment has proven durable, with modern humanoids from Boston Dynamics, Tesla, Figure and Agility Robotics relying heavily on real-time sensor fusion.

Cameras, force sensors and joint encoders feed neural networks that learn through physical interaction. Reinforcement learning in simulation is refined in the physical world, and the world remains the model.

Run before you can walk

Cog was given arms, but legs and walking were viewed as too problematic at the time. Popular Mechanics did, however, note that another MIT robotics researcher, Marc Raibert, had met the challenge with his own running, balancing robots. “We think running is simpler than walking,” he said, “so one of our mottos is, ‘You have to run before you can walk.’ ”

Today’s bipeds can run, jump and recover from shoves, though autonomy at scale remains limited. Many humanoids still operate under human supervision.

The anxiety that The Creation of the Humanoids captured — replacement, identity, loss of control — exists today, but rather than robots supplanting biological humanity, the focus is on cognitive displacement: algorithms writing, designing, diagnosing and composing.

The “central computer” is a cloud AI service. The “thalamic transplant” is training data drawn from billions of human artifacts.

Brooks once described his goal as “to bridge the gap between HAL’s boxed brain and Data’s embodied, near-human mind.” He conceded, “The end result will be, yes, like Commander Data. But that’s a long, long ways away.”

That distance has shortened in the intervening years, but it hasn’t disappeared. Humanoids can navigate warehouses, and AI systems can converse and generate images. Yet none truly “wander around the countryside and experience what humans do” in the open-ended way Turing imagined.

More than sixty years after The Creation of the Humanoids posed its warning, we’re still exploring the space between centralized intelligence and lived experience, without having fully reached either extreme.

Wayne Williams is a freelancer writing news for TechRadar Pro. He has been writing about computers, technology, and the web for 30 years. In that time he wrote for most of the UK’s PC magazines, and launched, edited and published a number of them too.
