Sensors working overtime

Sensors can give computers all kinds of useful input. An electronic tongue developed by the Group of Sensors and BIOSensors at the Universitat Autònoma de Barcelona could help wine makers discover defects in their products, while the Israel Institute of Technology's electronic nose can sniff a person's breath and detect the chemical signature of a cancerous tumour.

Kinect sensors add gesture recognition, depth perception and voice recognition technology. Kinect's voice recognition, like the voice recognition in Windows and various speech applications, works reasonably well, but we're still a long way from the Hollywood vision of super-intelligent computers that can carry out conversations with people.

As Microsoft's Natural Language Processing group points out on its blog, "It's ironic that natural language, the symbol system that is easiest for humans to learn and use, is hardest for a computer to master." Enter Watson, the IBM supercomputer that managed to defeat some human contestants in the game show Jeopardy.

IBM sees Watson as a computing breakthrough - a system capable of understanding natural language and responding in kind. Watson's game show career was just a publicity stunt; the technology is now being trialled in the healthcare industry.

As IBM puts it, "A doctor considering a patient's diagnosis could use Watson's analytics technology, in conjunction with Nuance's voice and clinical language understanding solutions, to rapidly consider all the related texts, reference materials, prior cases, and latest knowledge in journals and medical literature to gain evidence from many more potential sources than previously possible. This could help medical professionals confidently determine the most likely diagnosis and treatment options."

The 80-teraflop, 2,880-core Watson isn't the only visible result of the rapid advances in natural language processing. Microsoft and Google both offer online translators that do a decent job of translating web pages in various languages, and those translators also power impressive smartphone translation apps.

Microsoft used the technology to translate nearly 140,000 Knowledge Base articles into Spanish in 2003, and has since added a further eight languages including Japanese, French and German.

Betting on brains

Understanding and processing language is impressive, but being able to do it to human standards is tough - and we have a test to measure a computer's conversational skills.

The Turing Test, derived from a 1950 paper by Alan Turing, rests on the belief that one day computers will be smart enough to make us think they're human. To pass, a computer only has to fool the judges into thinking it's human, and so far no computer has managed it.

The test is the subject of a famous $20,000 bet between futurist Ray Kurzweil and Lotus founder Mitchell Kapor. Kurzweil believes that a computer will pass the test by 2029, while Kapor thinks his money's safe.

Kapor argues that the brain isn't a computer. "The brain's actual architecture and the intimacy of its interaction, for instance, with the endocrine system, which controls the flow of hormones, and so regulates emotion (which in turn has an extremely important role in regulating cognition) is still virtually unknown," he writes.

"In other words, we really don't know whether in the end, it's all about the bits and just the bits. My prediction is that contemporary metaphors of brain-as-computer and mental activity-as-information processing will in time [be] superseded".

As Kapor points out, carrying out a conversation is far from simple. "While it is possible to imagine a machine obtaining a perfect score on the SAT or winning Jeopardy, since these rely on retained facts and the ability to recall them, it seems far less possible that a machine could weave things together in new ways or to have true imagination in a way that matches everything people can do," he says.

Rebuilding lives

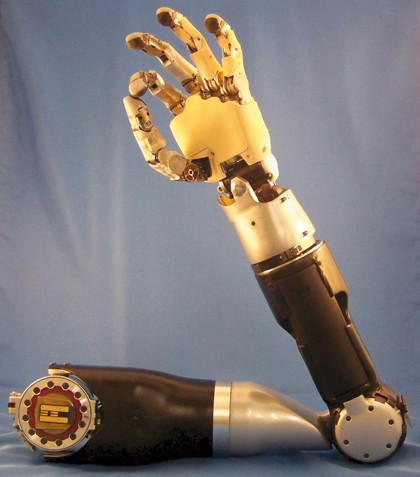

One of the most interesting areas of research is in brain-machine interfaces. Some interfaces can control prosthetic limbs - DARPA has spent five years and more than $100 million developing an extraordinarily clever and life-like robotic arm that will be controlled by a microchip inserted in the brain.

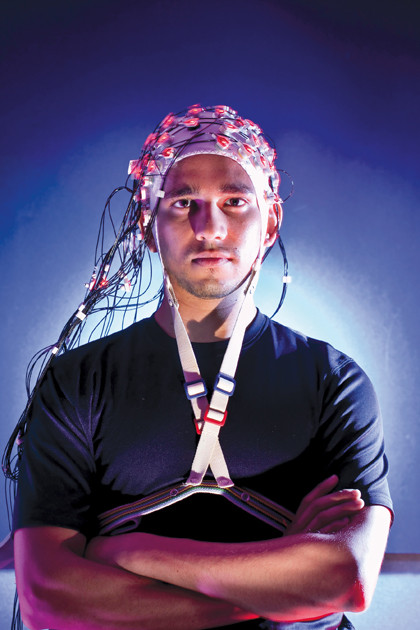

The University of Maryland's Brain Cap uses an EEG cap to control similar devices without the need to implant anything, while others prove that sometimes, two heads can be better than one.

Researchers at Columbia University have created a device called C3Vision - short for Cortically Coupled Computer Vision - that uses an electroencephalogram cap on a human user's head to track brain activity. The user is then shown 10 images per second and asked to look for abstract things that computers have a tough time processing, such as things that look 'strange' or 'silly'.

Inevitably there's a military angle to the technology: C3Vision has already been used to scan satellite images to look for surface-to-air missiles, achieving results that no human or computer could manage alone.

DARPA is also working on other kinds of brain-machine interfaces: its extraordinary robotic arm, developed over five years at a cost of more than $100 million, will be controlled by a microchip embedded in the user's brain. Programme manager Geoffrey Ling says the arm is "truly transformative - just like the arms each of us has."

The arm has been developed to help soldiers who have been injured in Afghanistan, but could ultimately benefit stroke victims, quadriplegics or anyone else who has lost the use of an arm. Clinical trials start later this year, and the arm could be in use within five years.

IBM's Deep Blue famously beat Garry Kasparov at chess, but was its success due to intelligence or just sheer processing power? As Computer History Museum historian Dag Spicer recalls, Deep Blue team member Feng-hsiung Hsu didn't believe that the chess-playing computer was intelligent.

"Assume that we were playing Kasparov at the World Trade Center and 9/11 hit," he told Spicer. "Kasparov would have run like hell. We would have run like hell. Deep Blue would just sit there, computing."

OK Go

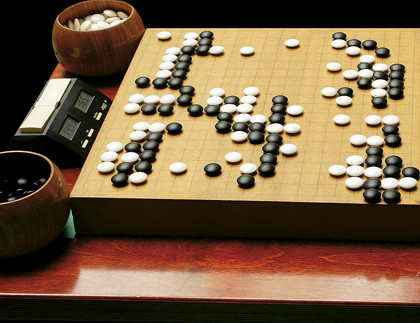

"Chess has been solved by a very brute force approach, by enumerating all the possible moves and countermoves that can be made and building up a game tree to find the optimum move," Graepel says. Deep Blue used a combination of sheer processing power and training from chess grand masters, but the techniques that worked so well in chess don't work so well in the Chinese game Go.
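To make the idea of a game tree concrete, here is a minimal sketch of brute-force search on a toy game rather than chess. The game is an assumption chosen for brevity (a pile of stones, each player removes one to three, and whoever takes the last stone wins), and the function names are hypothetical. It is not how Deep Blue worked, only an illustration of enumerating moves and countermoves to find the optimum move.

```python
from functools import lru_cache

MAX_TAKE = 3  # assumed rule: a player removes 1 to 3 stones per turn


@lru_cache(maxsize=None)  # cache results so repeated positions are searched once
def best_outcome(stones: int) -> int:
    """Return +1 if the player to move can force a win, otherwise -1."""
    if stones == 0:
        # The previous player took the last stone, so the player to move has lost.
        return -1
    # Enumerate every legal move; the opponent's best result is our worst.
    return max(
        -best_outcome(stones - take)
        for take in range(1, min(MAX_TAKE, stones) + 1)
    )


def best_move(stones: int) -> int:
    """Pick the move that leaves the opponent in the worst position."""
    return max(
        range(1, min(MAX_TAKE, stones) + 1),
        key=lambda take: -best_outcome(stones - take),
    )


if __name__ == "__main__":
    for pile in (4, 5, 7):
        print(pile, best_outcome(pile), best_move(pile))
```

The search visits every reachable position, which is practical for a toy pile of stones but grows explosively as the number of possible moves per turn increases.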

Creating a computerised Go master has long been one of the goals of artificial intelligence research, but for years progress was slow: human players would give the very best computerised opponents enormous handicaps and still thrash them.

"In computer Go, the situation was stagnant for many years and people were trying to use the same techniques that had worked for chess," Graepel says. "Then a new technique was invented under the name of Monte Carlo tree search."

By using random samples of games to inform the computer's decisions, Monte Carlo tree search delivered better results than the techniques borrowed from chess - and it seems to scale. "The problem with previous techniques was that if someone gave you a computer that was 10 times faster, the software wouldn't run better," Graepel says. "But with Monte Carlo tree search, a faster computer made the software considerably stronger."
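The sampling idea can be sketched in a few lines. The example below is flat Monte Carlo move evaluation, a simpler relative of full Monte Carlo tree search (which also grows a search tree and balances exploring new moves against exploiting promising ones). The toy game is an assumption (a pile of stones, remove one to three, last stone wins), and all names are hypothetical.

```python
import random

MAX_TAKE = 3  # assumed rule: remove 1 to 3 stones; taking the last stone wins


def legal_moves(stones):
    return range(1, min(MAX_TAKE, stones) + 1)


def random_playout(stones, rng):
    """Play random moves to the end; True if the player moving first wins."""
    first_player_to_move = True
    while True:
        stones -= rng.choice(legal_moves(stones))
        if stones == 0:
            return first_player_to_move
        first_player_to_move = not first_player_to_move


def monte_carlo_move(stones, playouts=2000, seed=0):
    """Estimate each move's win rate from random games and pick the best."""
    rng = random.Random(seed)
    win_rates = {}
    for take in legal_moves(stones):
        remaining = stones - take
        if remaining == 0:
            win_rates[take] = 1.0  # taking the last stone wins immediately
            continue
        # The opponent moves next, so we win whenever they lose the playout.
        wins = sum(not random_playout(remaining, rng) for _ in range(playouts))
        win_rates[take] = wins / playouts
    return max(win_rates, key=win_rates.get), win_rates


if __name__ == "__main__":
    move, rates = monte_carlo_move(5)
    print(move, rates)
```

Raising `playouts` makes the win-rate estimates less noisy, which is one way to see why extra computing power translates directly into stronger play with this family of techniques.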

Thanks to this, "the Go community has managed to create programs that are competitive with good amateur Go players - not professional ones, but good amateurs."

If there's a common thread across the examples we've seen so far, it's that mimicking or beating humans in just one field is difficult enough. Creating a machine that rivals us in multiple areas is even harder.

Comparisons between supercomputers and human brains are somewhat misleading. With the exception of dedicated brain simulators like IBM's C2 and the Blue Brain Project, supercomputers aren't trying to mimic what's in our heads; they're using their power to work on things minds can't, like climate modelling, nuclear warhead simulation and aerospace engineering.

Introducing the superbrain

When it comes to intelligence, results are all that matters - so if a computer-controlled car can complete a circuit, it makes no difference whether it's the result of enormous computing power or good GPS software and sensors. By specialising, we can get what we want much more quickly than by trying to mimic millions of years of evolution - most of which is unique to humans and unnecessary or undesirable in a computer.

As Hugo Award-winning novelist Charlie Stross writes on www.antipope.org, "We may want machines that can recognise and respond to our motivations and needs, but we're likely to leave out the annoying bits, like needing to sleep for 30 per cent of the time, being lazy or emotionally unstable, and having motivations of its own. I don't want my self-driving car to argue with me about where we want to go today. I don't want my robot housekeeper to spend all its time in front of the TV watching contact sports or music videos."

The idea of intelligent artificial humans makes for fun sci-fi, but in the real world the concept seems more like Maslow's Hammer: the idea that, when all you have is a hammer, everything looks like a nail. Perhaps we've already built an intelligent machine, but because it doesn't look like a Terminator we haven't noticed.

"We already have a huge, distributed intelligence out there in the form of the internet, and maybe it's that our individual brains can't see the scope of what we've already built here," Graepel says. "There are two layers to it. There are all those connected computers, which have lots of processing power, and when they all get connected they have even more; and you can also view the internet as a mechanism to create a global human intelligence, where we have these people collaborating and creating what you might call a superbrain."

Factor in the 'internet of things' - devices and sensors of all kinds connected to the internet - and things start getting really interesting. "You need three things," Graepel says. "Sensors, actuators and the processing power that connects them. These three things can become ubiquitous."

We're already seeing some of that in the form of cloud computing, like the smartphone apps that take voice input, send it to servers for processing and return the results instantly. Imagine the same technology with more sensors, more miniaturisation and access to more kinds of data and you've got what Intel calls a 'digital personal handler' - a virtual assistant in a device wired into your glasses that "would see what you see, constantly pull data from the cloud and whisper information to you - telling you where people are, where to buy an item you just saw, or how to adjust your plans when something comes up."

Intel calls it "collaborative perception", with sensors embedded in everything around us. "For example, an intelligent home management system should not only be able to recognise who is at home to determine what entertainment content to display, but also their mood so as to determine appropriate tone of voice, volume and lighting levels," Intel says.

Such empathy requires multiple technologies: sensors, prior learning, cloud data and even the odd bit of human input. What Intel's describing isn't an intelligent machine; it's an intelligent network. In isolation a device can't hope to rival the human brain, but if you connect it to the cloud it becomes a node in an incredibly powerful network, a network with multiple eyes and ears, multiple processor cores and effectively unlimited storage.

On their own, our devices are just devices. Connected, they form a brain the size of the planet.

-------------------------------------------------------------------------------------------------------

First published in PC Plus Issue 313.
